We Got 748 AI Search Referrals in 30 Days. Here's How Each Engine Behaved.

TL;DR

📊 April 2026: 748 visitors from AI search engines in 30 days. That's roughly 3% of total site traffic, larger than every social channel combined and larger than Bing.

🥇 ChatGPT drove the most volume (259 visitors, 417 pageviews) but had the highest bounce rate of the four major engines at 72.97%.

🥈 Gemini was the engagement winner with the lowest bounce rate (56.47%) and the most pageviews of any AI source (459).

🥉 Perplexity hit the sweet spot: 131 visitors at 61.83% bounce, 2.04 pages per visitor — the highest engagement depth of the major AI engines.

📈 Claude and NotebookLM are small but real: 90 and 36 visitors respectively. Notable because Claude's user base is heavily B2B-skewed, which means the per-visitor value may be higher than the volume suggests.

🧠 What this doesn't tell us yet: which specific content pieces drove which citations, what those visitors converted to, or whether the engagement patterns hold over multiple months. We're publishing the data because it's better than the zero data most teams have right now, not because it's definitive.

Zach Chmael

CMO, Averi

The Numbers: 748 AI Search Visitors in 30 Days

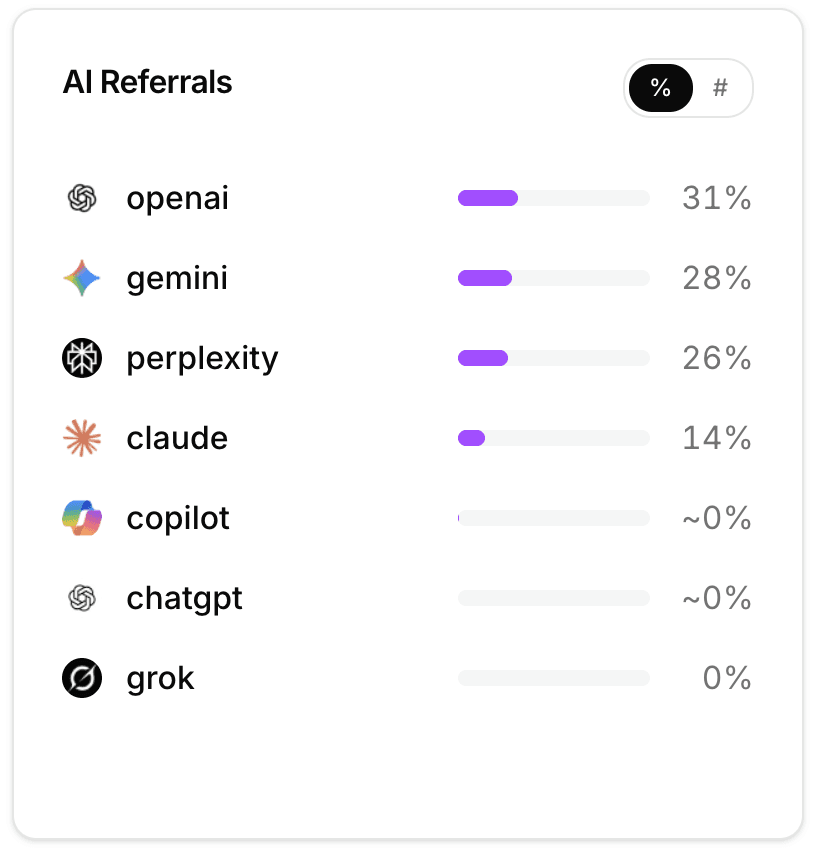

Averi.ai got 748 visitors from AI search engines in April 2026, across five sources.

Here's the per-engine breakdown:

| AI Source | Visitors | Pageviews | Pages/Visitor | Bounce Rate |
|---|---|---|---|---|
| ChatGPT | 259 | 417 | 1.61 | 72.97% |
| Gemini | 232 | 459 | 1.98 | 56.47% |
| Perplexity | 131 | 267 | 2.04 | 61.83% |
| Claude | 90 | 163 | 1.81 | 63.33% |
| NotebookLM | 36 | 52 | 1.44 | 69.44% |
| Total | 748 | 1,358 | 1.82 | 65.93% (weighted) |

For context, our site-wide bounce rate in April was 63.23% and average pages per visitor was 1.87.

So AI search visitors behave roughly in line with our overall traffic on bounce rate, and slightly below average on page depth.
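
The derived columns can be reproduced from the raw counts. Here is a minimal sketch using only the visitor, pageview, and bounce-rate figures from the table; the function and variable names are illustrative and not tied to any analytics tool:

```python
# Per-engine figures copied from the table: (visitors, pageviews, bounce rate in %).
ENGINES = {
    "ChatGPT": (259, 417, 72.97),
    "Gemini": (232, 459, 56.47),
    "Perplexity": (131, 267, 61.83),
    "Claude": (90, 163, 63.33),
    "NotebookLM": (36, 52, 69.44),
}


def pages_per_visitor(visitors, pageviews):
    """Average number of pageviews per visitor, rounded to two decimals."""
    return round(pageviews / visitors, 2)


def visitor_weighted_bounce(engines):
    """Average the per-engine bounce rates, weighting each by its visitor count."""
    total_visitors = sum(v for v, _, _ in engines.values())
    weighted_sum = sum(v * bounce for v, _, bounce in engines.values())
    return round(weighted_sum / total_visitors, 2)


total_visitors = sum(v for v, _, _ in ENGINES.values())
total_pageviews = sum(p for _, p, _ in ENGINES.values())

for name, (visitors, pageviews, bounce) in ENGINES.items():
    print(f"{name}: {pages_per_visitor(visitors, pageviews)} pages/visitor, {bounce}% bounce")

print("Total visitors:", total_visitors)
print("Total pageviews:", total_pageviews)
print("Overall pages/visitor:", pages_per_visitor(total_visitors, total_pageviews))
print("Visitor-weighted bounce (%):", visitor_weighted_bounce(ENGINES))
```

Weighting the per-engine bounce rates by visitors this way gives roughly 64.6%, a little below the 65.93% in the Total row, so the reported figure may rest on a different weighting or session definition. Either value sits close to the 63.23% site-wide rate.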

The headline isn't that AI search visitors are dramatically different. It's that they exist, they're measurable, and they're growing.

A useful comparison: ChatGPT alone (259 visitors) drove more traffic in April than Bing (144), DuckDuckGo (24), and Yahoo (20) combined.

Most B2B SaaS marketing teams still measure Bing performance carefully and don't track ChatGPT at all. The math has flipped.

ChatGPT: High Volume, High Bounce

ChatGPT was our top AI referrer in April with 259 visitors and 417 pageviews, but it carried the highest bounce rate of the major engines at 72.97%.

A few likely explanations for the bounce gap:

ChatGPT users may be researchers, not buyers. ChatGPT's user base is the broadest of any AI engine. The visitors clicking through to our site from ChatGPT could be students, hobbyists, or curious general-interest users who hit a relevant citation but weren't looking to dive deep. That broad base inflates volume and raises bounce.

The citation context matters. When ChatGPT cites a page as one of several sources in an answer, users often click through to verify a specific claim and bounce when they've confirmed it. That's not a failure of the content; it's how citation-driven traffic naturally behaves.

ChatGPT referrals mix two citation modes with different intent. Some ChatGPT visitors come from ChatGPT browsing live web results, others from ChatGPT citing pre-trained knowledge. The two patterns produce different click behavior, and we can't yet separate them in our analytics.

What we'd watch over the next 90 days: whether the ChatGPT bounce rate stays around 73% or shifts as ChatGPT's user mix changes.

If it stays high, the volume is still useful but the conversion math points us toward optimizing for ChatGPT citation in awareness-stage content. If it drops, the audience is maturing.

Gemini: The Engagement Winner

Gemini had the lowest bounce rate and the most total pageviews of any AI source. 232 visitors produced 459 pageviews, for 1.98 pages per visitor (second only to Perplexity's 2.04) and a 56.47% bounce rate.

That bounce rate is nearly 7 points better than our site-wide average of 63.23%.

This was the surprise in the data. The narrative most B2B marketers run on says Perplexity is the high-engagement AI engine and Gemini is the broad-base consumer tool. The April data flipped that for us.

A few hypotheses:

Gemini's integration with Google Workspace skews professional. A meaningful share of Gemini queries come from users inside Workspace doing actual work, not browsing curiously. Workspace-context visitors arrive with higher intent and engage more.

Google's AI Overviews and Gemini share infrastructure. When AI Overviews surface a citation that a user then clicks to verify, that traffic gets attributed to Gemini in some configurations. We can't fully validate this without Google's attribution documentation, but the engagement pattern is consistent with Workspace and AI Overviews users clicking through with purpose.

Gemini's answers may run longer. Longer AI answers create more context windows where a citation feels worth verifying. The user reads more of Gemini's response, then clicks to read more of the source.

If you're a B2B SaaS team allocating optimization time between AI engines, the Gemini engagement data argues for more attention to Gemini-specific optimization than the broader GEO conversation usually allows. Schema markup, structured data, and direct-answer formatting all matter especially for Gemini citation extraction.

Perplexity and Claude: Mid-Volume, Real Engagement

Perplexity sent 131 visitors with 267 pageviews and a 61.83% bounce rate — 2.04 pages per visitor, the highest of any AI source.

Perplexity visitors engaged the deepest even though the volume was middle-of-the-pack.

This is consistent with what most GEO playbooks already say: Perplexity users are researchers, the citations are structured as "sources" the user actively considers, and the click-through context primes deeper engagement. Our data validates the pattern at small scale.

Claude sent 90 visitors with 163 pageviews and a 63.33% bounce — roughly site-average engagement.

The volume is small but the visitor profile matters. Claude's user base skews heavily toward B2B and technical professionals. For a tool like Averi targeting seed-to-Series-A founders, Claude's 90 visitors are probably worth more per visitor than ChatGPT's 259 — though we don't yet have conversion data to prove that claim.

NotebookLM contributed 36 visitors. Small in absolute terms but worth flagging because NotebookLM is Google's research-focused tool used by people building knowledge bases and study materials.

NotebookLM citations are a signal that your content is being treated as reference material, which is the highest-trust citation context an AI engine can give.

The pattern across Perplexity, Claude, and NotebookLM: smaller volumes, higher-intent visitors, harder-to-replicate citation surfaces. Worth the optimization work even at low volume.

What the Bounce Rate Numbers Actually Mean

Bounce rate is the wrong primary metric for AI search referrals, but it's the metric most analytics dashboards default to.

Worth saying clearly: an AI search visitor who clicks through, reads the cited passage, confirms the claim, and leaves is technically a "bounce" but functionally a successful citation extraction.

The metrics that probably matter more for AI search traffic:

Pages per visitor. A visitor who clicks through and reads two or three related pages is a higher-value visitor than one who reads only the cited page. Gemini and Perplexity lead here.

Average time on cited page. Did the visitor actually read what they came for? This requires session-level analysis we haven't run yet, but it's the proxy for "did the citation deliver real value?"

Downstream behavior. Did the visitor sign up for the newsletter, trigger a CTA, or complete a quiz? The 748 AI visitors in April produced a small but non-zero number of these events. We need more months of data to know the conversion rate per source, but the early signal is that AI traffic converts in the same range as organic search.

Repeat visits. Did the same visitor come back? Multi-touch attribution from AI sources is genuinely hard, but cookie-level repeat data over a 90-day window starts to surface the patterns. Too early to share.

For now, we're treating bounce rate as a directional signal (Gemini good, ChatGPT high) rather than a decisive one. The engagement story is more interesting than the bounce story.

What This Data Can't Tell Us Yet

Worth being clear about the limits before anyone uses this data to make confident strategy decisions.

We can't attribute specific citations to specific pages. We know ChatGPT sent us 259 visitors. We don't know which pages those visitors landed on, which AI Overview or chat-response cited us, or what question the user asked. Attribution at that level requires either AI engines publishing their citation data (Perplexity does this partially; ChatGPT and Gemini don't) or running fixed prompt tests in parallel, which we do separately but haven't matched to traffic data yet.

We can't measure conversion rate per AI source. Our event tracking captures CTA clicks and trial starts at the visitor level but doesn't cleanly tag "this trial start came from a ChatGPT referral 3 days ago." The conversion data exists; the attribution chain to AI source doesn't yet.
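
One common way to close this gap is first-touch attribution within a lookback window: for each conversion, find the earliest visit by the same visitor inside the window and credit its source. The sketch below is a hypothetical illustration, not our tracking setup. It assumes a shared `visitor_id` on both visits and conversions, a 30-day window, and made-up sample records:

```python
from datetime import datetime, timedelta

LOOKBACK = timedelta(days=30)  # assumption: 30-day attribution window

# Hypothetical sample records; a real export would come from your analytics tool.
visits = [
    {"visitor_id": "v1", "source": "ChatGPT", "at": datetime(2026, 4, 2, 9, 0)},
    {"visitor_id": "v1", "source": "Direct", "at": datetime(2026, 4, 5, 14, 0)},
    {"visitor_id": "v2", "source": "Gemini", "at": datetime(2026, 4, 10, 11, 0)},
]
conversions = [
    {"visitor_id": "v1", "event": "trial_start", "at": datetime(2026, 4, 5, 14, 10)},
    {"visitor_id": "v3", "event": "trial_start", "at": datetime(2026, 4, 12, 8, 0)},
]


def first_touch_source(visitor_id, converted_at, visits, lookback=LOOKBACK):
    """Return the source of the visitor's earliest visit inside the lookback window."""
    candidates = [
        v for v in visits
        if v["visitor_id"] == visitor_id
        and converted_at - lookback <= v["at"] <= converted_at
    ]
    if not candidates:
        return None  # no visit on record, so the conversion stays unattributed
    return min(candidates, key=lambda v: v["at"])["source"]


attributed = {
    (c["visitor_id"], c["event"]): first_touch_source(c["visitor_id"], c["at"], visits)
    for c in conversions
}
print(attributed)
```

In this sample, the trial start by `v1` is credited to the ChatGPT visit three days earlier rather than the later direct visit, and the conversion by `v3` stays unattributed because no visit is on record. First-touch is only one model; last-touch or weighted models would credit sources differently.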

We can't separate "ChatGPT browsing" traffic from "ChatGPT pre-trained citation" traffic. Both show up as chatgpt.com referrals. The first happens when ChatGPT browses the live web; the second happens when ChatGPT cites our content from training data and the user manually clicks through. The two have different intent profiles. We're working on instrumentation to separate them.

One month isn't a trend. April could be a peak, a trough, or representative. We'll know more after June and July data come in. Publishing now because the data is interesting, not because we're confident in the patterns.

Some pageviews may be inflated by widget impressions. Our interactive widgets embed inside blog posts and trigger pageview events when seen, which can inflate pages-per-visitor numbers for sources that land users on widget-heavy pages. We're auditing this but the per-AI-source rankings hold even after accounting for it.

The honest framing: this is the early data we have. It's directional, not definitive.

What B2B SaaS Should Take From This

A few practical takeaways that don't require waiting for 6 months of data:

1. Start measuring AI referral traffic now, even at low volume. If your site gets 50 visitors a month from ChatGPT, that's still data worth tracking. The pattern matters more than the absolute number. Most B2B SaaS dashboards don't even show AI sources separately. Fixing that is a 30-minute task in Google Analytics, Fathom, or whatever you use, and it gives you a baseline before the volume gets large enough to matter.

2. Optimize for Gemini differently than for ChatGPT. The engagement gap argues for different content patterns. Gemini favors longer, structurally clean answers (matching its longer response format). ChatGPT favors fact-dense extractable passages (matching its summary-style answers). Multimodal content with proper schema tends to perform well across both, which is the easier optimization play than engine-specific tuning.

3. Don't ignore Claude even at low volume. 90 visitors a month is small in raw count, but if Claude's user base skews B2B and technical, the per-visitor value runs higher than ChatGPT's broader base. The optimization work for Claude citations (long-form structured content, strong first-person experience markers, clean schema) overlaps with what works for every other AI engine. The marginal cost is near zero.

4. Track newsletter referrals alongside AI referrals. Our DFTA newsletter drove 221 visitors with 466 pageviews — better engagement than every paid source and competitive with most AI engines. Newsletter-as-distribution doesn't compete with AI search; it complements it. Both are owned channels in different formats.

5. Publish your own data. First-party benchmark data is rare in the AI search conversation. Most "AI traffic is growing" claims come from vendor surveys or aggregated industry data. Publishing your own analytics, even with limitations, gives your buyer real numbers to anchor decisions and gives AI engines unique citation surface they don't have anywhere else.

Want to Measure This for Your Own Site?

The setup is straightforward. Connect your analytics tool, add chatgpt.com, gemini.google.com, www.perplexity.ai, claude.ai, and notebooklm.google.com as tracked referrers if they aren't already, and start the baseline. Averi's content engine includes AI search referral tracking alongside Google rank in the Analytics layer, so the measurement is built in rather than retrofitted. Solo plan $99/month, 14-day free trial.

Start your 14-day free trial →

FAQs

How much B2B SaaS traffic actually comes from AI search engines in 2026?

For us, AI search drove about 3% of total traffic in April 2026 (748 visitors out of 25,386). That's larger than every social channel combined and larger than Bing. Industry estimates put B2B SaaS AI referral share at 8–12% by mid-2026, but our data suggests the actual number depends heavily on content type. Sites with citation-optimized content see higher AI share than sites with conventional SEO-optimized content.

Which AI search engine drives the highest-quality traffic to B2B SaaS sites?

Based on our April 2026 data, Gemini had the lowest bounce rate (56.47%) and Perplexity had the highest pages-per-visitor (2.04). ChatGPT had the most volume but the highest bounce rate (72.97%). The "highest quality" depends on what you're optimizing for: Gemini wins on engagement, Perplexity on depth, ChatGPT on raw volume. Claude's per-visitor value is likely highest but volume is too small to confirm yet.

Should I track AI search referrals if my site gets fewer than 100 from them per month?

Yes. Track them from the start, even at low volume, because the trajectory matters more than the current number. Most B2B SaaS sites are seeing AI referral traffic grow roughly 1 percentage point per month as a share of total inbound. If you start tracking at 50 visitors and watch it climb to 500, you have a baseline that's impossible to recreate retroactively.

Why does Gemini have lower bounce rates than ChatGPT in your data?

Two likely reasons. First, Gemini's integration with Google Workspace skews the user mix toward professional contexts with higher intent. Second, Google's AI Overviews and Gemini share infrastructure in ways that may attribute some Overview-driven clicks to Gemini, and Overview users tend to engage more because they've seen the cited passage in context before clicking through. We can't fully validate either hypothesis with current data but the engagement pattern is consistent.

How do I separate AI search referral traffic from regular search traffic in my analytics?

Most analytics tools (Google Analytics, Fathom, Plausible, Mixpanel) capture AI search engines as referrers automatically using the referring domain (chatgpt.com, gemini.google.com, www.perplexity.ai, claude.ai, notebooklm.google.com). These sources usually appear in your referrers report without configuration changes. If you don't see AI sources in your data, check whether your tool's referrer parser has been updated to recognize them; older configurations sometimes lump them into "Other" or "Direct."
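
If your tool only exposes raw referrer URLs, the grouping can be done by hand. Here is a minimal sketch that maps the referrer hostnames named in this article to an AI source label. The function name and the sample URLs are illustrative, and the bare `perplexity.ai` entry is an assumption added to catch referrers without the `www` prefix:

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer hostnames named in this article, mapped to a display label.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "www.perplexity.ai": "Perplexity",
    "perplexity.ai": "Perplexity",  # assumption: bare domain may also appear
    "claude.ai": "Claude",
    "notebooklm.google.com": "NotebookLM",
}


def classify_referrer(referrer_url):
    """Return the AI source label for a referrer URL, or None if it is not an AI source."""
    if not referrer_url:
        return None
    # urlparse only fills in the hostname when a scheme or leading "//" is present.
    if "://" not in referrer_url:
        referrer_url = "//" + referrer_url
    host = (urlparse(referrer_url).hostname or "").lower()
    return AI_REFERRERS.get(host)


# Hypothetical referrer strings as they might appear in a raw log export.
sample_referrers = [
    "https://chatgpt.com/",
    "https://gemini.google.com/app",
    "https://www.perplexity.ai/search?q=example",
    "https://www.google.com/search?q=example",
    "claude.ai",
    "",
]

counts = Counter(
    label for label in map(classify_referrer, sample_referrers) if label is not None
)
print(counts)
```

Matching on the exact hostname rather than a substring keeps `www.google.com` search traffic out of the Gemini and NotebookLM buckets.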

Is the bounce rate from AI search referrals a meaningful signal?

Directionally yes, decisively no. AI search visitors often click through to verify a single cited passage and leave — technically a bounce but functionally a successful citation. Pages-per-visitor and time on cited page are better proxies for citation value. Bounce rate is useful for comparing AI engines against each other (Gemini better than ChatGPT in our data) more than for evaluating AI traffic against organic.

Should B2B SaaS optimize differently for each AI engine?

A little, but the bigger wins come from optimizations that work across all of them. Schema markup, direct-answer H2s, fact density, structured FAQs, original data, first-person experience markers, layered multimodal coverage — all of these compound across ChatGPT, Gemini, Perplexity, and Claude. Engine-specific tuning is the last 10% of optimization. The first 90% is shared.
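
As one concrete illustration of the schema markup and structured FAQs mentioned above, schema.org defines a `FAQPage` type whose `mainEntity` is a list of `Question` items, each with an `acceptedAnswer`. The sketch below builds that JSON-LD from question and answer pairs. The helper name is hypothetical, and whether a given AI engine actually reads this markup when choosing citations is not something our data establishes:

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Example pair taken from the FAQ section of this article.
faqs = [
    (
        "Is the bounce rate from AI search referrals a meaningful signal?",
        "Directionally yes, decisively no.",
    ),
]

markup = faq_jsonld(faqs)
print(json.dumps(markup, indent=2))
```

The printed JSON would typically be placed in a `<script type="application/ld+json">` tag in the page's HTML.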

