How Averi Hit 10M+ Google Impressions on a 1-Person Team


TL;DR

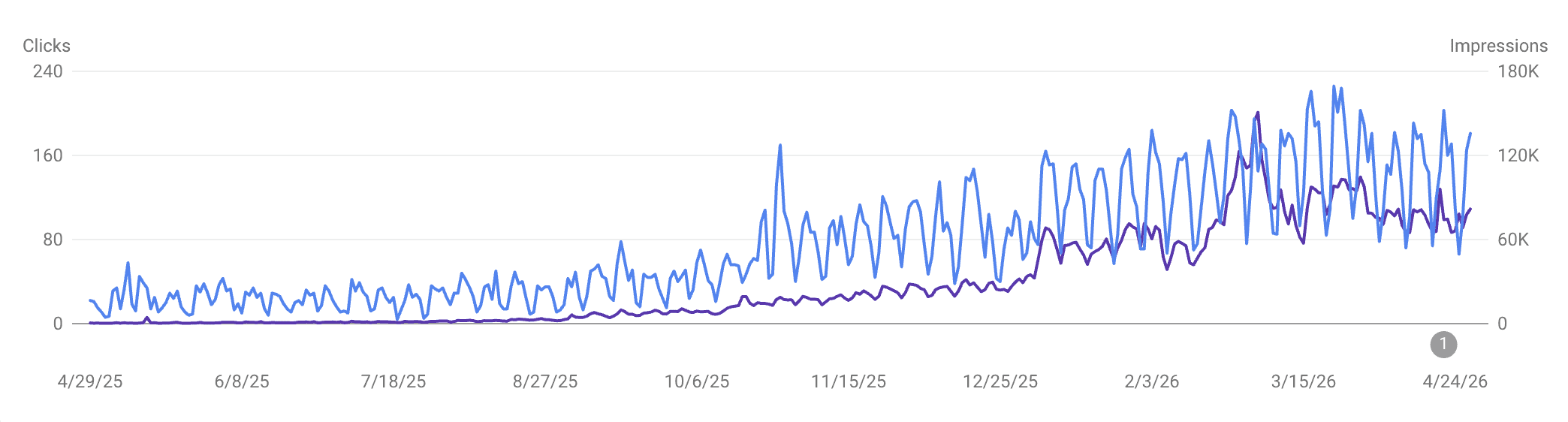

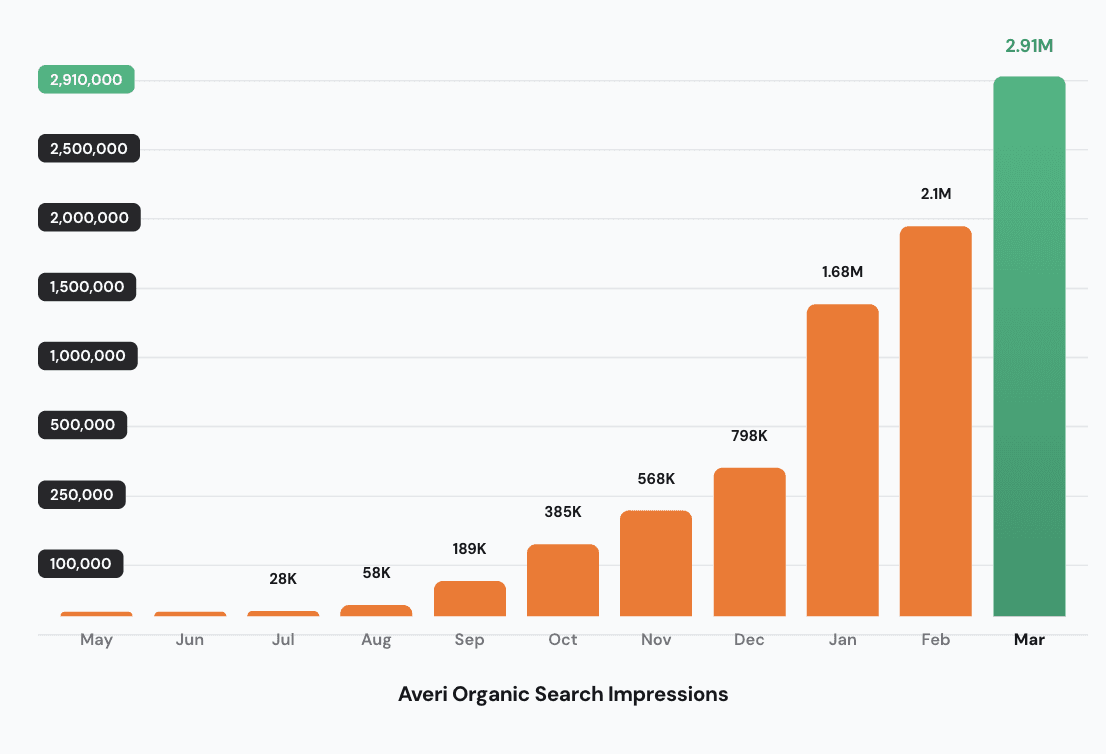

📊 Starting point: 1,130 Google impressions in April 2025, no published library, effectively zero organic search presence

📈 12-month result: 10,671,897 cumulative Google impressions, 27,464 clicks. Peak month (March 2026): 2,916,225 impressions, 164x the first full month (May 2025: 17,824 impressions)

👤 Team size: One person (myself), running marketing alongside cofounder responsibilities. No content writer, no SEO consultant, no agency

🎯 The traffic story: 91% of total impressions come from non-branded queries, meaning the content is being found by buyers who don't know us yet, not just searched-for by people who already do

🛠 The workflow: Six phases (Brand Core, Strategy Map, Content Queue, Drafting + Scoring, Publishing, Analytics + Refresh). The same six phases any Averi customer runs

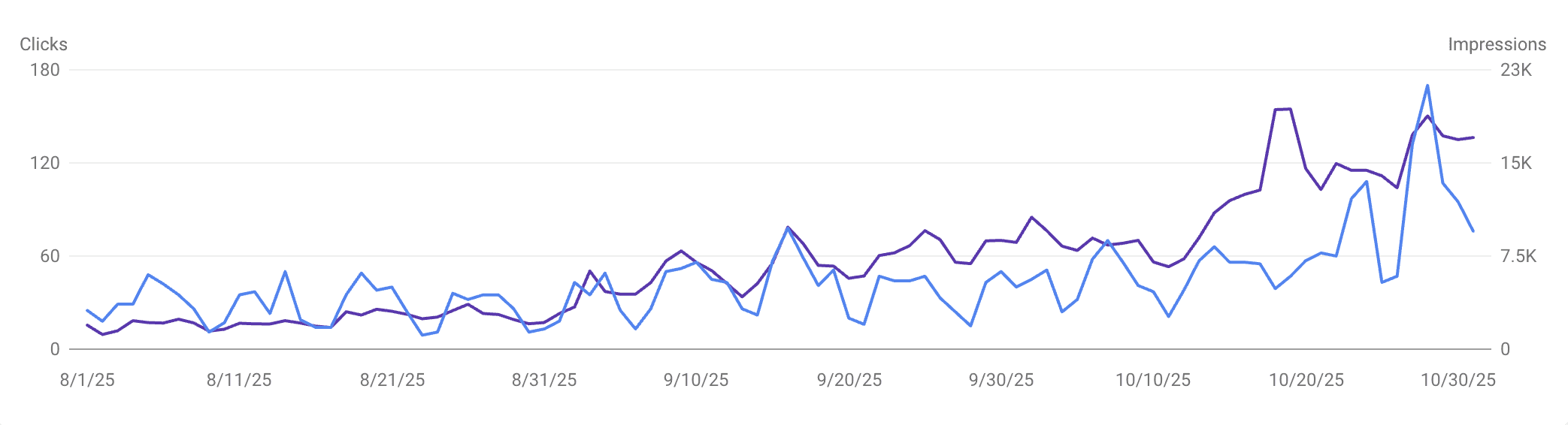

⏱ The real inflection: Month 5 (September 2025), not month 3 like the textbook says. Impressions jumped 2.77x in a single month, from 71K to 198K, and the engine was visibly running from there

🪜 The honest part: The first 4 months felt like nothing was working. That's the phase most founders quit. The compound only shows up after you stop being able to feel it day-to-day

Zach Chmael

CMO, Averi

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."


The 10M Receipt: How One Workflow Took Averi From 1,130 to 2.9M Monthly Google Impressions (With the Screenshots)

This is the piece I should have written six months ago, and the piece I kept putting off because writing about your own results feels like a flex even when it's the most useful thing you can publish.

Today I'm publishing it because every founder I talk to asks the same question, and the answer is more interesting than the short version:

Yes, the numbers are real.

Yes, here are the receipts.

And no, we didn't run a polished product from day one — I cobbled together a manual workflow with a stack of AI tools that ended up being good enough that we productized it.

That product is Averi. We now run Averi on Averi.

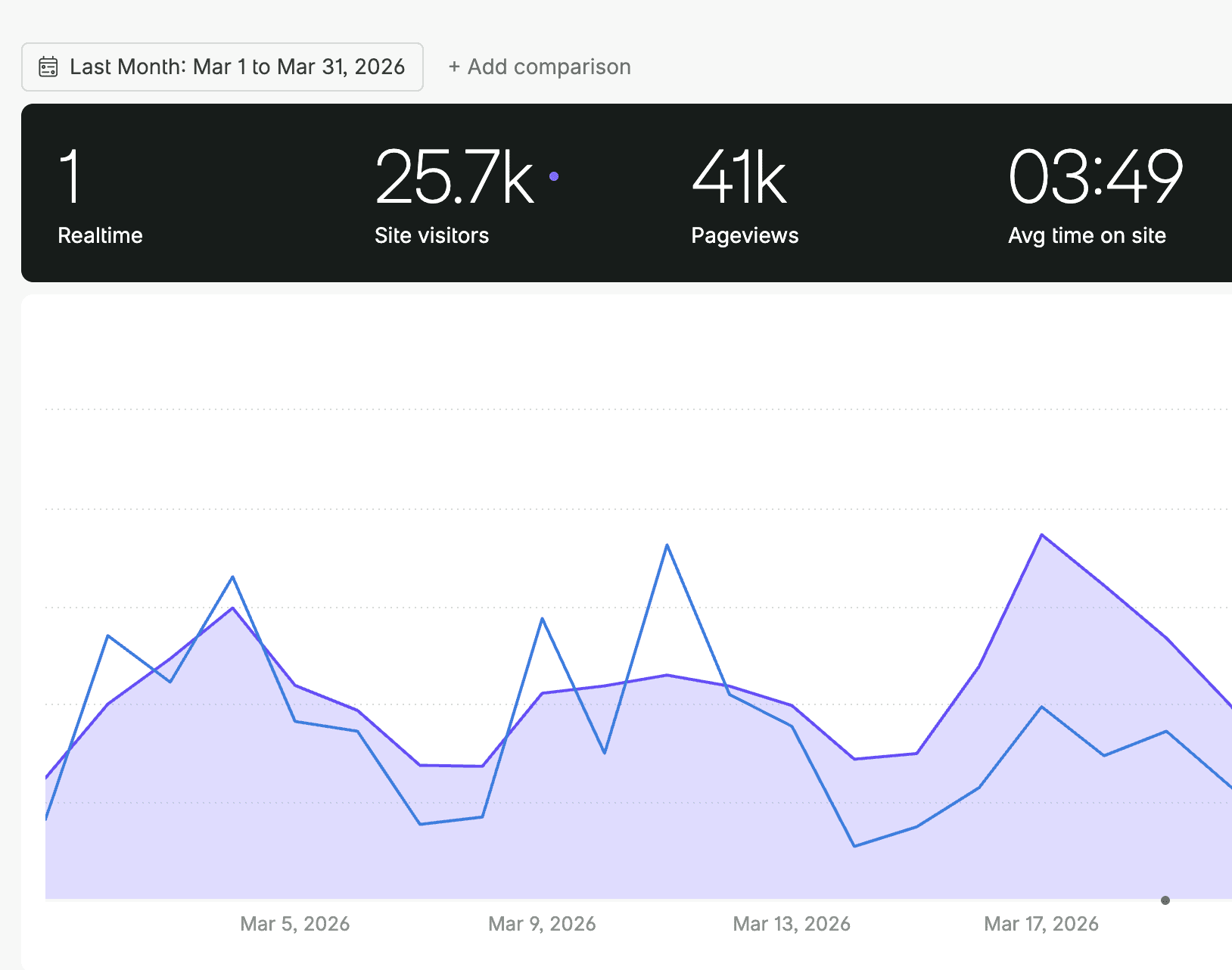

In the 12 months from April 28, 2025 to April 27, 2026, our Google Search Console logged 10,671,897 impressions and 27,464 clicks.

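April 2025 closed out at 1,130 impressions, from only the three days (April 28–30) that fall inside the window.

March 2026 hit 2,916,225 impressions, the peak month so far.

Measured against May 2025, the first full month (17,824 impressions), that's a 164x increase, or 16,261% growth, on a one-person marketing team.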

The first 11 of those 12 months ran on a manual workflow I'd duct-taped together.

This piece is the dated, month-by-month account of how that compounded. What worked, what didn't, where the inflection actually was (it wasn't where I expected), and how the manual system I built became the six-phase workflow now built into the product. The receipts are at the bottom.


Why I'm publishing this now

Three reasons this gets published this quarter and not next.

One. AirOps is publishing case studies showing $1M in pipeline from their content engineer cohort, with Webflow growing AI-attributed signups from 2% to nearly 10% in under a year, Chime tripling citations in under 4 weeks, and Carta achieving a 75% citation rate increase.

Jasper publishes Forrester research on AI content efficiency. Both are real.

Neither is what a seed-to-Series-A founder running marketing solo actually needs to see — those case studies feature companies like Webflow, Wiz, and Vanta with marketing teams of 5–20 people and existing CRM data depth.

The proof point a founder needs is "what does one person + this system look like" — and that's the case study only Averi can tell, because we are that one person.

Two. The piece is overdue. We've referenced our own marketing growth in passing across dozens of articles, social posts, and LinkedIn comments. Until now, there hasn't been a single canonical document anyone can link to as the source. AI search engines reward primary-source documents. This is the primary source.

Three. It's the answer to a specific question I keep getting in LinkedIn DMs: "is this actually possible without a marketing team or paid acquisition?"

The honest answer is yes, on the organic search side specifically, and the honest version of how it happened is more useful than any hand-wavy founder thread that ends with "build in public, stay consistent, the rest takes care of itself."

The rest doesn't take care of itself. There's a workflow.

The starting position (T-minus 0)

Before the workflow started running, here's what existed:

A domain (averi.ai) with effectively zero published content

1,130 Google impressions in April 2025 — barely enough to be statistically meaningful

No content writer, no SEO consultant, no marketing team beyond myself

A product still being built (the same content engine workflow that this piece is about)

What we did have: a clear ICP (seed-to-Series-A B2B SaaS founders running marketing without a dedicated team), a clear category position (AI content engine, not just an AI writer), and the founder advantage of writing about exactly the problem we'd been hired to solve.

That last part matters. The strategic context most marketing teams spend 30 days acquiring through interviews and customer research, we already had. We just needed the system.

For the broader argument on why the founder running marketing is actually an advantage rather than a constraint, see our piece on the founder's guide to content marketing in 5 hours a week and our content marketing on a startup budget guide.

The six phases — built manually first, productized after

The workflow that produced these numbers wasn't a polished platform on day one.

It was me, an account on a half dozen AI tools, a Notion workspace held together with database relations, and a recurring Sunday-night ritual of pulling the week's queries from Search Console manually.

The six phases below are how I structured that manual workflow once I'd built enough of it to recognize the pattern.

They're also the same six phases that now run as core product surfaces in Averi — because once the manual version was working, we built the platform around it.

So each phase below has two beats: how I ran it manually in 2025, and how it runs now as a product surface in Averi (which is what we use today).

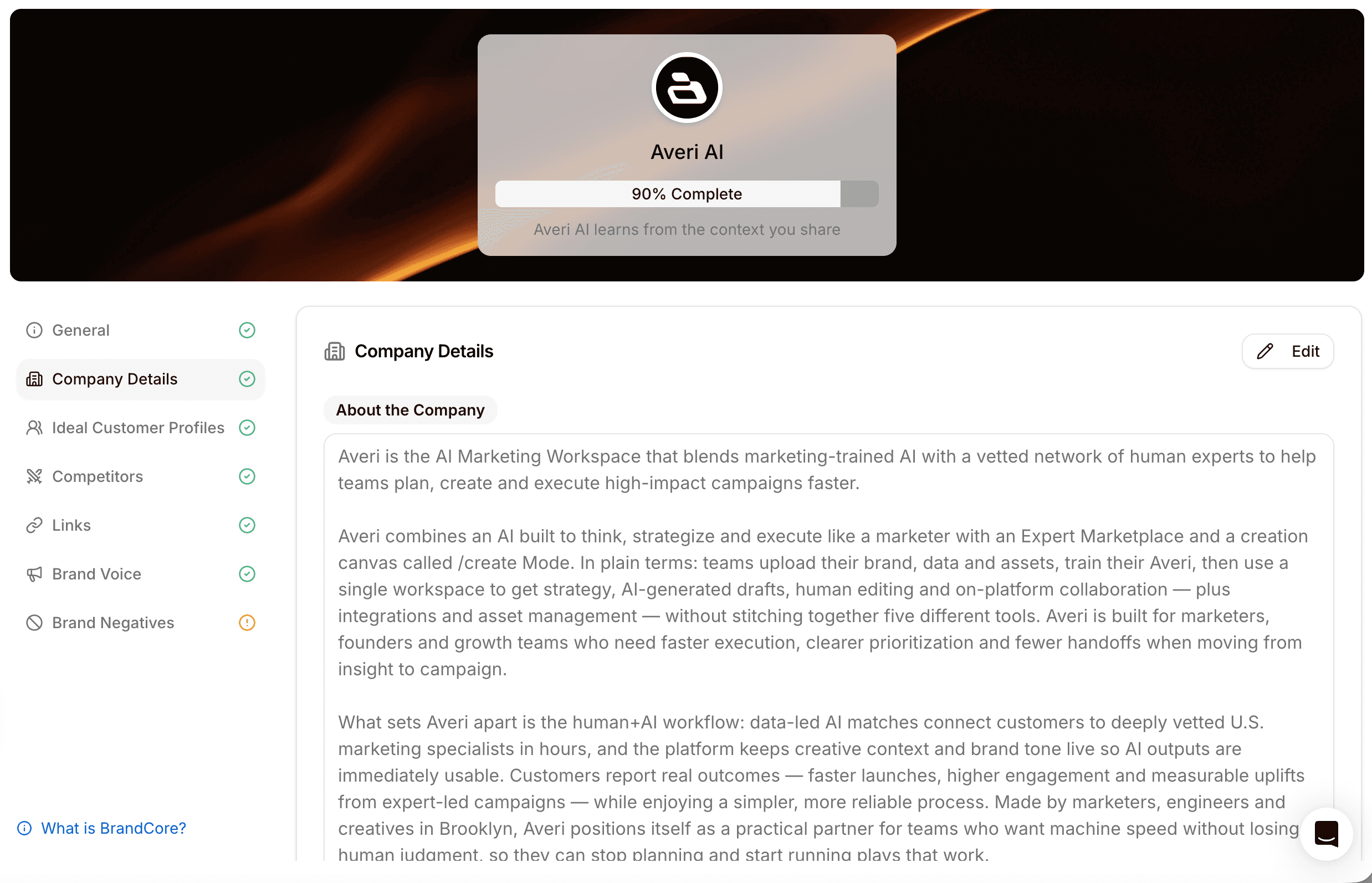

Phase 1: Brand Core (week 1)

The Brand Core is the foundation layer — voice, positioning, category language, competitive set. It conditions every draft downstream.

How I built it manually: A Notion document with seven structured sections (voice rules, banned vocabulary list, positioning statements, competitive set, three ICPs, anchor proof points, formatting standards). I'd paste relevant sections into every AI prompt for every draft, manually, for the first three months. Tedious, but essential — without it, every AI-drafted piece sounded like every other AI draft on the internet.

How it runs in Averi now: Configured in about 30 minutes of guided setup. Becomes the persistent input that conditions every piece the platform drafts, scores, or queues. No copy-paste.

For us, the Brand Core captured:

Voice: confident, direct, slightly irreverent, anti-jargon, execution-first. The same voice you're reading right now.

Positioning: AI content engine for startups. Not an AI writer. Not a marketplace. The end-to-end production system.

Banned vocabulary: the corporate words we would never use — the predictable AI-tell list (you've read those words a thousand times in B2B blog posts) plus softer hedges like "explore" and "consider."

Competitive set: Jasper, AirOps, Writesonic, Copy.ai, Clearscope, Surfer SEO, Contently. Not HubSpot — different category.

Anchor proof points: the metrics we'd build the brand around once we had them.

The thing nobody told me when I built this: the Brand Core is the document that prevents AI-generated content from sounding generic.

With it, the AI started sounding like Averi, because every draft was conditioned on the same voice document. Without it, you get the same beige tone every other AI-content company is putting out.

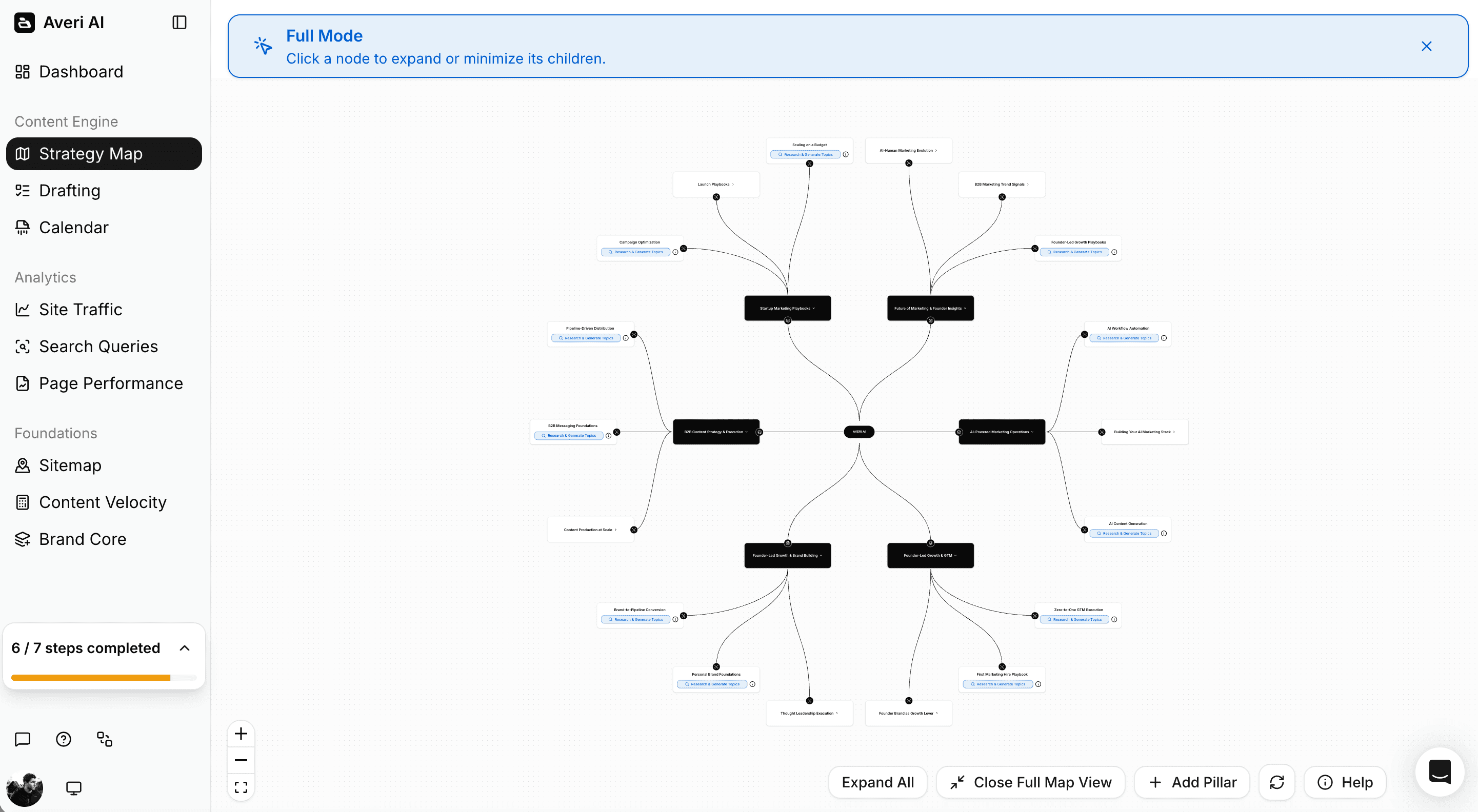

Phase 2: Strategy Map (week 1)

The Strategy Map is the upstream methodology layer — ICPs, competitor analysis, topic pillars, and the question stack the content engine pulls from.

How I built it manually: Three weeks of customer interviews (cofounder included), competitor site audits run through Ahrefs and Clearscope, and a 50-question "what would a buyer ask" exercise I ran in a giant Google Sheet. The output was a 12-page strategic document I'd reference every time I needed to pick a topic.

How it runs in Averi now: Auto-generated from a website scrape and a few clarifying questions. ICPs, competitive set, topic pillars, and an initial Question Stack on day one rather than week three.

In our case, the Strategy Map (manual version, then later platform version) generated:

Three ICPs: Series A B2B SaaS founder running marketing solo, seed-stage SaaS marketer at a 5–10 person team, founder evaluating their first marketing hire

Topic pillars: GEO/AEO, content engine workflow, founder marketing economics, AI search citations, B2B SaaS content strategy

Initial 50-question Question Stack for the primary ICP (later expanded to ~250 questions across all three ICPs)

The mistake I would have made without the Strategy Map: starting with a list of high-volume head terms. "AI content marketing" (3,300/mo). "Best AI writing tools" (2,400/mo).

The kind of keywords every AI marketing brand was already fighting over.

The Strategy Map pushed us to tier-4 long-tail questions instead — the queries with low individual volume but high intent and high AI Overview trigger rate. That single reframe is probably worth half the eventual growth.

The supporting data: queries of 8 words or more trigger AI Overviews at 7x the rate of shorter queries, 46% of all AI Overview citations come from long-tail queries of seven words or more, and long-tail keywords account for 91.8% of all search queries.

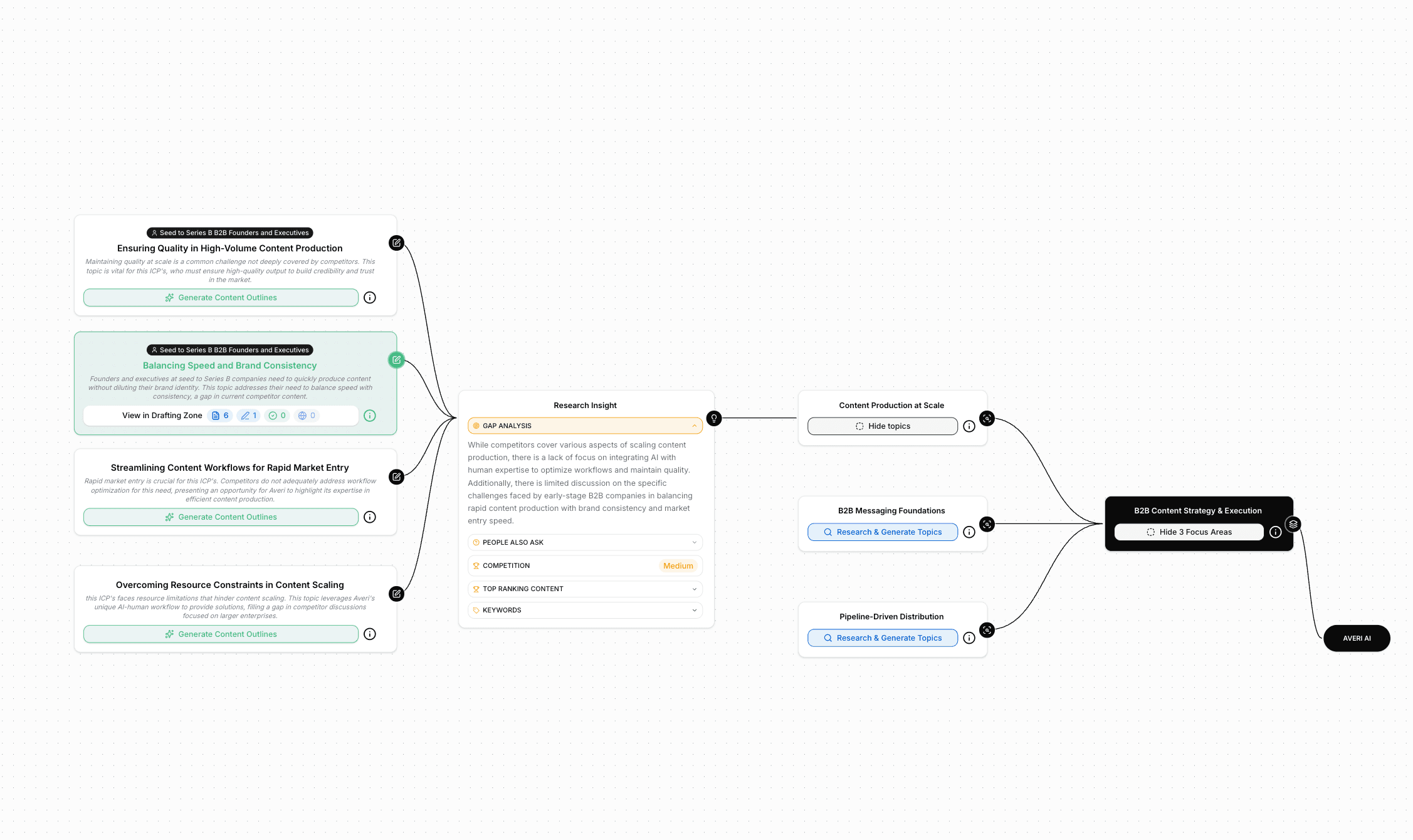

Phase 3: Content Queue (week 2 onward)

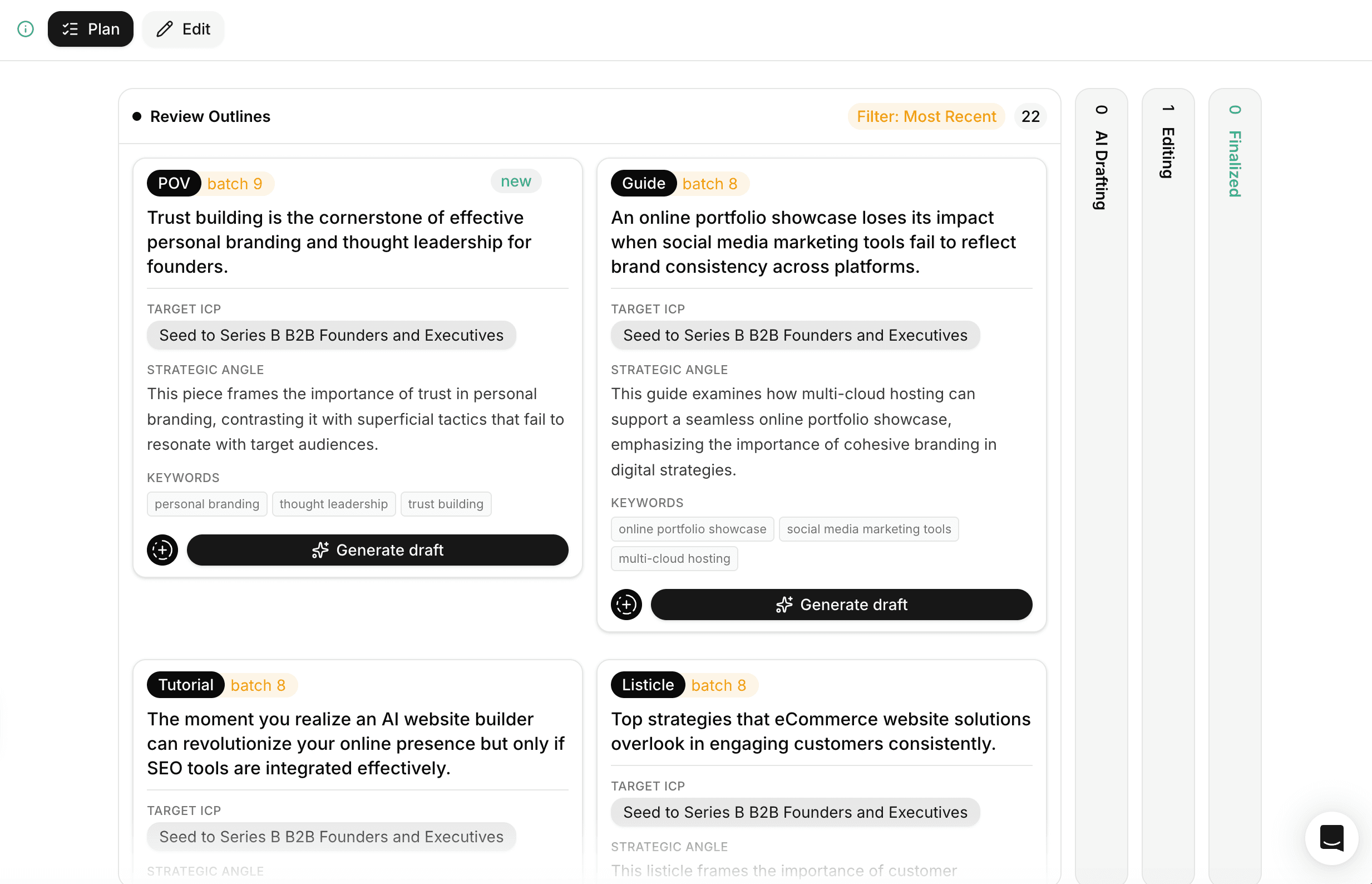

The Content Queue is the production layer — auto-surfaced topics with target keywords and outlines pre-attached.

How I built it manually: Every Sunday night, 90 minutes pulling People Also Ask expansions from Google, scraping the new topics from Reddit threads in r/SaaS and r/marketing, exporting Search Console branded queries, and combing competitor sitemaps for new pages. I'd dump everything into a Notion database, score topics 1–10 for relevance, and queue the next week's pieces from there.

How it runs in Averi now: Auto-pulls all of those sources weekly. Topics surface in the queue with target keywords and outlines pre-attached. The Sunday night ritual collapsed into a 30-minute Monday morning approval review.

Our Phase 3 cadence, as actually run:

Weekly review: ~30 minutes on Monday morning, approving or rejecting the auto-surfaced topics (manual version: the 90-minute Sunday ritual described above)

Average queue size: 12–20 topics live at any given time

Approval rate: roughly 70%. The other 30% were either off-positioning or duplicative of work in flight

Net publishing cadence after the queue stabilized: 2–4 pieces per week, mostly tier-3 and tier-4 content with occasional category-defining pillars

What surprised me, and what made me confident the manual workflow was worth productizing: the queue did the hardest single job of content marketing — choosing what to write next — better than I would have done it myself.

Founders running marketing solo don't fail because they can't write.

They fail because they can't decide what to write. The queue eliminated that decision.

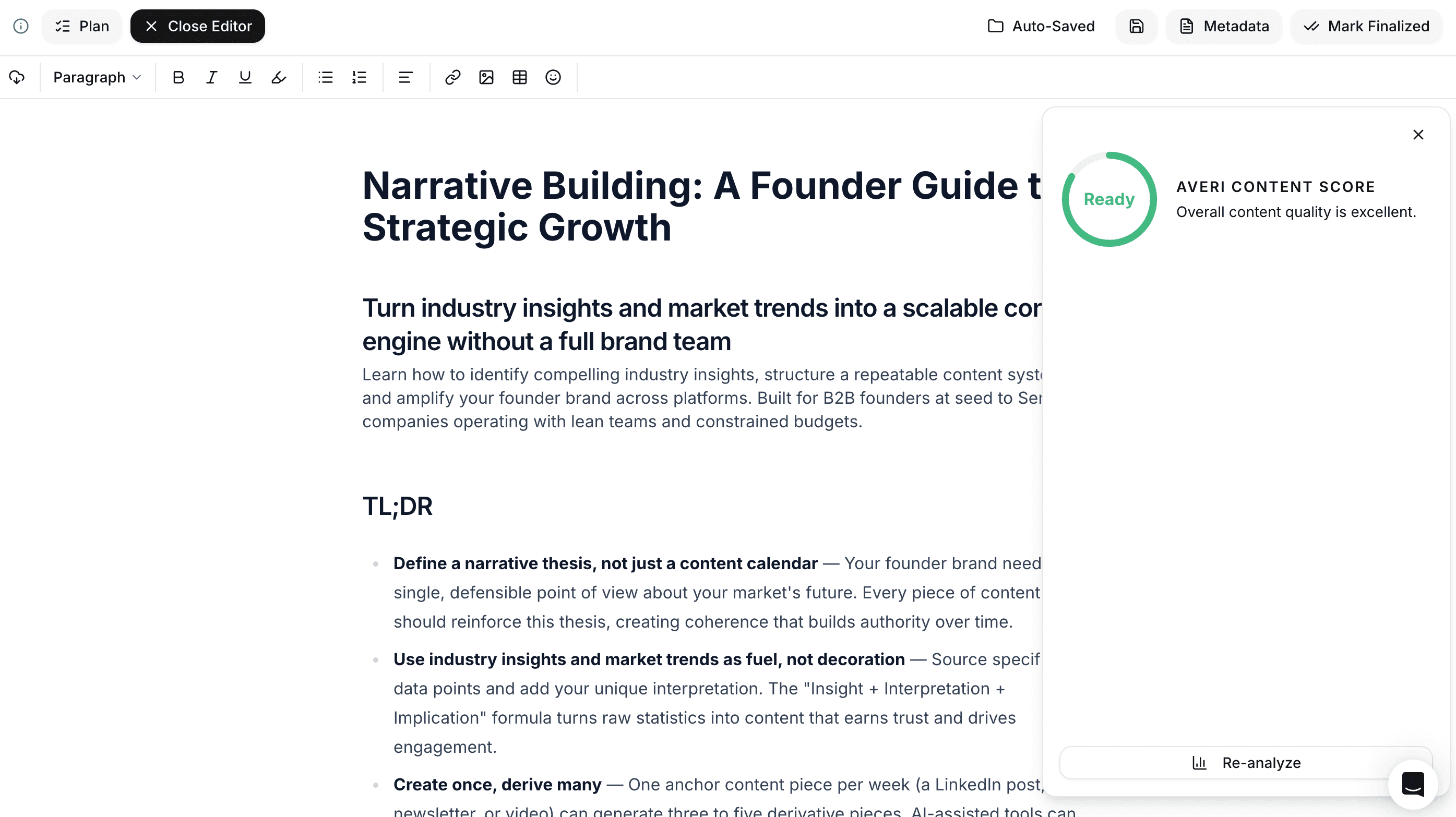

Phase 4: Drafting + Scoring (every published piece)

The drafting layer takes a queue topic and produces a first draft conditioned on the Brand Core, the Strategy Map, and the structural patterns AI search engines extract from.

The Scoring layer evaluates the draft on a composite SEO + GEO scale of 0–100 before publish.

How I built it manually: Drafting was a chain of AI prompts run through three different models (Claude for structure and voice, GPT for keyword density passes, Perplexity for fact-checking and stat sourcing). Scoring was a 12-point checklist I'd run manually against every draft — answer extraction structure, fact density, schema completeness, FAQ presence, internal linking depth, technical SEO basics. Took 30–45 minutes per piece on top of writing.

How it runs in Averi now: Drafting is a single workflow conditioned on Brand Core + Strategy Map + GEO patterns. Scoring is automatic — the composite SEO + GEO score (0–100) shows up next to every draft before publish, with specific failure modes flagged.

Our quality gate from day one (manual scoring first, automated scoring later): every piece scores 80+ on the composite scale before publish. The 80 floor was non-negotiable. Pieces scoring 70–79 went back through a structural review until they cleared it. Pieces below 70 got rebuilt.

The score breakdown that mattered most:

GEO portion (45% of composite): Answer extraction structure (H2 question / 40–60 word capsule), fact density (one specific stat per 100 words minimum), schema completeness, FAQ section presence and structure, internal linking depth

SEO portion (55% of composite): Topical relevance, keyword coverage across the 4 tiers, technical SEO (title, meta, alt text, headings), content depth, originality
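Here's a minimal sketch of how that weighting and the publish gate fit together. The 45/55 split and the 80 and 70 thresholds come from this piece; the sub-score inputs, the function names, and the action labels are assumptions for illustration, not Averi's actual scoring code.

```python
# Weights and thresholds from the article; everything else is illustrative.
GEO_WEIGHT_PCT = 45
SEO_WEIGHT_PCT = 55
PUBLISH_FLOOR = 80
REVIEW_FLOOR = 70


def composite_score(geo: float, seo: float) -> float:
    """Blend two 0-100 sub-scores into one 0-100 composite."""
    return (GEO_WEIGHT_PCT * geo + SEO_WEIGHT_PCT * seo) / 100


def gate(geo: float, seo: float) -> str:
    """Map a draft's composite score to its next action."""
    score = composite_score(geo, seo)
    if score >= PUBLISH_FLOOR:
        return "publish"
    if score >= REVIEW_FLOOR:
        return "structural review"
    return "rebuild"


# Hypothetical drafts: strong on both, weak on GEO, weak on both.
for geo, seo in [(90, 85), (60, 85), (50, 70)]:
    print(geo, seo, composite_score(geo, seo), gate(geo, seo))
```

Because SEO carries slightly more weight, a draft with a strong SEO sub-score can still miss the floor when the GEO side is weak, which is the pattern described in the early drafts.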

What the scoring caught that I would have missed (even more so before I systematized it manually): missing FAQ schema on roughly 60% of my early drafts; insufficient fact density in the first 200 words of nearly every piece, which is the section AI engines extract from most, since 44.2% of AI citations come from the first 30% of a page's text; and internal linking gaps to high-authority pillar pages.

The fact density flagging was particularly useful: content with original statistics sees 30–40% higher visibility in AI responses, and once the manual checklist became a scored output in the platform, density issues got caught consistently before publish.
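A rough version of the density check fits in a few lines. This sketch assumes that any token containing a digit counts as a "stat", which is a crude proxy I'm using for illustration. The 200-word window and the one-per-100-words floor come from this piece; the function names are hypothetical.

```python
import re

# Assumption: a token with at least one digit is treated as a statistic.
HAS_DIGIT = re.compile(r"\d")


def fact_density(text: str, window_words: int = 200) -> float:
    """Return stats per 100 words across the first `window_words` words."""
    words = text.split()[:window_words]
    if not words:
        return 0.0
    stats = sum(1 for word in words if HAS_DIGIT.search(word))
    return stats * 100 / len(words)


def meets_density_floor(text: str, floor: float = 1.0) -> bool:
    """True when the opening window has at least `floor` stats per 100 words."""
    return fact_density(text) >= floor


# Hypothetical openings: 50 words with one stat, then 200 words with one stat.
dense = "Impressions grew 2.77x in September " + "word " * 45
thin = "44.2% " + "word " * 199
print(fact_density(dense), meets_density_floor(dense))
print(fact_density(thin), meets_density_floor(thin))
```

A digit-based proxy will over-count things like dates and version numbers, so treat it as a flag for manual review rather than a score.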

For the deeper structural patterns, see our GEO Playbook 2026 and our building citation-worthy content guide.

Phase 5: Publishing (every piece, autopilot)

The Publishing layer ships finished content directly to the CMS with proper schema markup applied by default.

How I built it manually: Every published piece got the FAQPage schema, Article schema, Organization schema, and BreadcrumbList JSON-LD blocks pasted into the Framer page settings manually. About 10 minutes per piece, plus the inevitable copy-paste errors I'd find during quarterly audits.

How it runs in Averi now: Direct publish to Webflow, Framer, or WordPress with proper schema applied by default. No copy-paste workflow. Calendar view + autopublish on schedule.

The detail that compounds, regardless of whether you're doing it manually or through a tool: every published piece needs FAQPage schema, Article schema, Organization schema (on the site root), and BreadcrumbList schema. FAQ sections get cited by AI at roughly 3x the rate of standard content sections, and most of that 3x lift requires the schema to be properly configured.

We never published a piece without it.
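For anyone doing the manual version, here's a small sketch that generates the FAQPage block instead of hand-editing JSON. The structure follows the schema.org FAQPage type (`mainEntity` holding `Question` items with an `acceptedAnswer`). The function names and the sample question are assumptions for illustration.

```python
import json


def faq_page_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap the JSON-LD in the script tag that goes into the page head."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# Hypothetical FAQ entry; swap in the questions from the published piece.
faqs = [("What is a content engine?", "A workflow that plans, drafts, scores, and publishes content.")]
print(as_script_tag(faq_page_jsonld(faqs)))
```

Generating the block from the same question list that renders on the page avoids the copy-paste drift between visible FAQ text and schema text.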

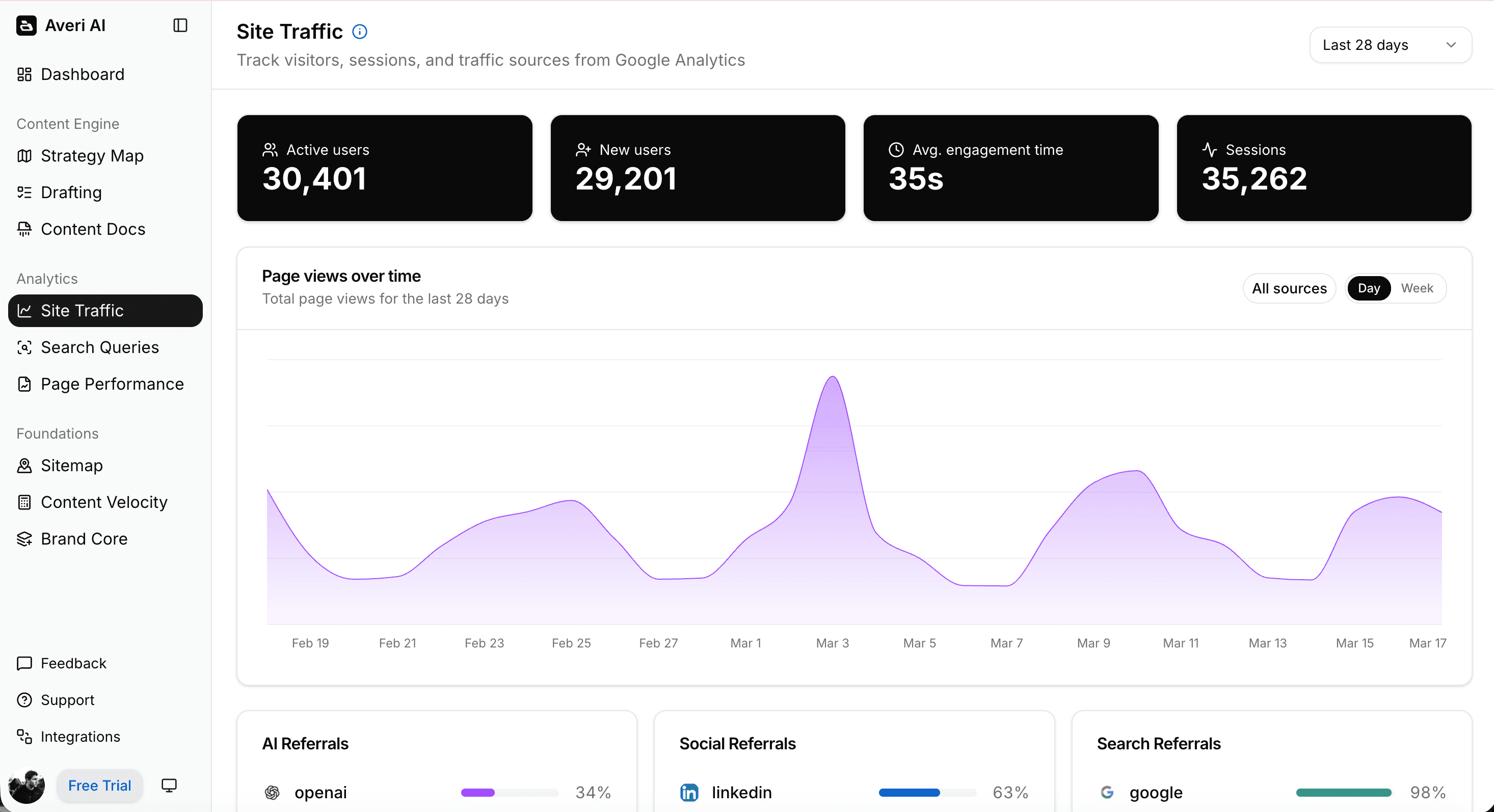

Phase 6: Analytics + Refresh (rolling, every 90 days)

The Analytics layer ties Search Console + Google Analytics + AI citation tracking into a unified view. The Refresh queue surfaces pieces showing decay signals (impressions trending down, CTR dropping, ranking sliding).

How I built it manually: A Looker Studio dashboard pulling from Search Console and GA4. AI citation tracking was a brutal manual process — every Friday I'd query ChatGPT, Perplexity, Gemini, and (later) Claude on our top 25 category questions, screenshot every answer, log them in a spreadsheet, and diff week-over-week. Took 2–3 hours every Friday for the first six months.

How it runs in Averi now: GSC + GA + AI citation tracking unified in one dashboard. Decay detection auto-flags pieces with trending-down impressions. The Refresh queue surfaces refresh candidates with the specific actions to take.

Our refresh discipline, after the engine stabilized:

Quarterly refresh sprint: 2 weeks per quarter dedicated to refreshing the top 20 pieces by impressions

Decay detection: any piece with 3+ consecutive weeks of declining impressions got flagged

Refresh actions: meta title/description rewrite, factual updates, internal link rebalancing, FAQ expansion, schema upgrades
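The decay rule is simple enough to sketch. This assumes you already have weekly impression totals per page (for example, from a Search Console export) ordered oldest to newest. The data shape, page paths, and function names are hypothetical.

```python
def declining_streak(weekly_impressions: list[int]) -> int:
    """Count consecutive week-over-week declines ending at the latest week."""
    streak = 0
    for i in range(len(weekly_impressions) - 1, 0, -1):
        if weekly_impressions[i] < weekly_impressions[i - 1]:
            streak += 1
        else:
            break
    return streak


def flag_decaying(pages: dict[str, list[int]], min_weeks: int = 3) -> list[str]:
    """Return pages whose impressions fell for at least `min_weeks` straight weeks."""
    return [
        path
        for path, series in pages.items()
        if declining_streak(series) >= min_weeks
    ]


# Hypothetical weekly impressions per page, oldest week first.
pages = {
    "/blog/question-stack": [100, 120, 110, 90, 80],  # three straight declines
    "/blog/7-word-rule": [50, 60, 55, 58],            # recovered last week
    "/blog/geo-playbook": [10, 9, 8],                 # only two declines so far
}
print(flag_decaying(pages))
```

Only the trailing run counts here, so a page that dipped months ago and recovered stays out of the refresh queue.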

Pages updated within the past year make up 70% of AI-cited pages. The refresh cadence isn't a "nice to have" layer — it's the maintenance discipline that keeps the engine producing after month 6. Skip it and the compound stalls.

For the refresh methodology in detail, see our content engine workflow guide.

The month-by-month progression (with the actual numbers)

Here's the part I keep coming back to in conversations with founders.

The growth curve is not linear.

The first 4 months felt like nothing was happening.

Then everything happened at once — but later than the textbooks say.

The actual month-by-month from Google Search Console:

| Month | Impressions | Daily avg | Growth vs prior month |
|---|---|---|---|
| April 2025 (3 days) | 1,130 | 377 | n/a |
| May 2025 | 17,824 | 575 | (first full month) |
| June 2025 | 25,057 | 835 | 1.41x |
| July 2025 | 39,059 | 1,260 | 1.56x |
| August 2025 | 71,685 | 2,312 | 1.84x |
| September 2025 | 198,256 | 6,609 | 2.77x (INFLECTION) |
| October 2025 | 381,710 | 12,313 | 1.93x |
| November 2025 | 585,915 | 19,530 | 1.53x |
| December 2025 | 802,293 | 25,880 | 1.37x |
| January 2026 | 1,682,239 | 54,266 | 2.10x (SECOND WAVE) |
| February 2026 | 1,929,486 | 68,910 | 1.15x |
| March 2026 | 2,916,225 | 94,072 | 1.51x (PEAK) |
| April 2026 (27 days) | 2,021,018 | 74,853 | 0.69x (partial month) |
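If you want to check the derived columns yourself, this sketch recomputes them from the monthly totals. The impression figures and partial-month day counts are copied from the table; the function and variable names are mine. Note that it computes a May ratio against the 3-day April figure, which the table deliberately leaves out.

```python
import calendar

# (year, month, impressions, days counted if partial else None), from the table.
MONTHS = [
    (2025, 4, 1_130, 3),
    (2025, 5, 17_824, None),
    (2025, 6, 25_057, None),
    (2025, 7, 39_059, None),
    (2025, 8, 71_685, None),
    (2025, 9, 198_256, None),
    (2025, 10, 381_710, None),
    (2025, 11, 585_915, None),
    (2025, 12, 802_293, None),
    (2026, 1, 1_682_239, None),
    (2026, 2, 1_929_486, None),
    (2026, 3, 2_916_225, None),
    (2026, 4, 2_021_018, 27),
]


def derive(rows):
    """Return (label, daily average, growth vs prior month) for each row."""
    derived = []
    previous = None
    for year, month, impressions, partial_days in rows:
        days = partial_days or calendar.monthrange(year, month)[1]
        daily_avg = round(impressions / days)
        growth = round(impressions / previous, 2) if previous else None
        derived.append((f"{calendar.month_name[month]} {year}", daily_avg, growth))
        previous = impressions
    return derived


for label, daily_avg, growth in derive(MONTHS):
    print(f"{label:<15} {daily_avg:>7,} {growth}")

total = sum(impressions for _, _, impressions, _ in MONTHS)
peak_vs_first_full_month = MONTHS[11][2] / MONTHS[1][2]
print(f"total: {total:,}  peak vs May 2025: {peak_vs_first_full_month:.0f}x")
```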

Three things to read out of that table.

Months 1–4: The invisible phase (May–August 2025)

What the dashboard showed: not nothing, but not enough to validate the workflow.

May closed at 575 daily impressions.

By August, daily impressions had grown to 2,312.

To anyone outside the workflow, that looks like steady but small growth. To someone running the workflow daily, it felt like grinding into a fog.

What I did during this phase:

Published 50-100 pieces

Refused to look at impression numbers more than once a week (this was the discipline that mattered most)

Trusted the workflow even when the data didn't validate it loudly

Refreshed nothing — too early to refresh anything

The honest part: I thought it was failing. Twice during this phase I almost shifted budget toward other tactics because the organic numbers looked broken. I'm grateful I didn't. The workflow was already producing the compound — Search Console just hadn't caught up yet.

This is the phase most founders quit.

It's not because the workflow doesn't work. It's because the human running it can't see the work compounding before the data shows it.

The work is real. The data lags by 60–120 days for almost every published piece in a young domain.

Months 5–8: The first inflection (September–December 2025)

The curve bent in September. Hard.

Impressions jumped from 71,685 in August to 198,256 in September — a 2.77x month-over-month gain.

Then 1.93x in October (381,710), then 1.53x in November (585,915), then 1.37x in December (802,293).

Five months in, the engine had clearly kicked in.

What changed in the data:

Daily impressions went from 2,312/day in August to 25,880/day by December — an 11x increase in four months

Branded query volume started growing organically (people were searching "averi" without me telling them to)

Long-tail tier-4 queries started appearing in the data — exactly the queries the Strategy Map had originally surfaced

The "queries" tab in Search Console started showing 100+ unique queries per week, then 500+, then 1,000+

Average position improved from 23.1 (August) to 12.8 (December) — content was ranking, not just impressing

What I changed in the workflow:

Started running the first refresh sprint on month-3 published pieces in October

Doubled down on tier-4 question content (the queries that were actually ranking)

Built the first internal link audit map across the existing library

Stopped publishing tier-2 head-term content entirely. The traffic was almost all from tier 3 and tier 4

This pattern matches the broader data: pages ranking in positions 21–100 saw a 400% increase in AI Overview citations — meaning even the pieces still ranking page 2 or 3 were earning citations and pulling impressions.

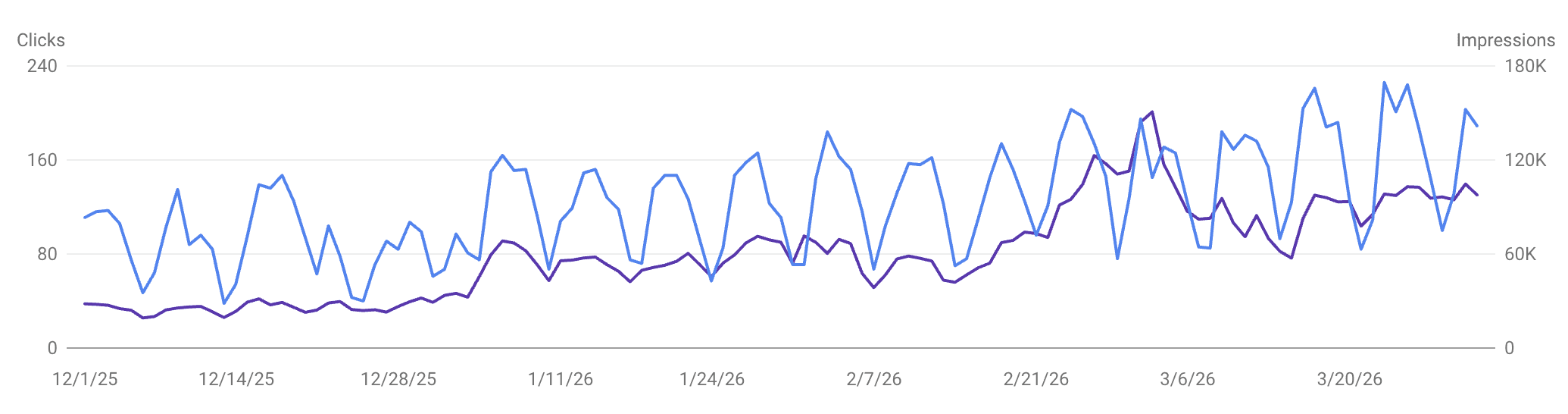

Months 9–12: The second wave (January–April 2026)

The textbook says content marketing has one inflection point.

The actual data shows two.

December 2025 closed at 802,293 monthly impressions, which would have been a complete success on its own.

January 2026 hit 1,682,239 — another 2.10x jump in a single month, with no obvious external trigger I can point to.

That second wave is the one that surprised me.

What likely drove it:

Ten months of cumulative library compounding past a critical mass threshold

Quarterly refresh sprints from October–November paying back at scale

AI search adoption growing rapidly through Q4 2025 and Q1 2026 — ChatGPT now serves 900M+ weekly active users, and AI-referred sessions grew 527% YoY in 2025, which puts AI Overview citation surface in higher demand

Domain authority crossing the threshold where new pieces ranked within days instead of weeks

By March 2026, we hit the peak: 2,916,225 monthly impressions.

April 2026 has tracked at ~75K/day through the first 27 days, projecting to roughly 2.25M for the full month — a real plateau, not a continued climb.
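The projection is plain run-rate arithmetic: take the daily average so far and extend it across the month. This sketch assumes the daily rate stays flat for the remaining days, which is a simplification. The function name is hypothetical.

```python
def project_full_month(impressions_so_far: int, days_elapsed: int, days_in_month: int) -> float:
    """Extend the average daily rate so far across the whole month."""
    return impressions_so_far / days_elapsed * days_in_month


# April 2026 figures from Search Console: 2,021,018 impressions over 27 days.
projection = project_full_month(2_021_018, 27, 30)
print(f"{projection:,.0f}")  # roughly 2.25M
```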

Plateaus at this scale are normal and expected.

The math of monthly growth becomes harder once your baseline is 2.5M+ impressions per month, because every doubling requires a doubling of the existing library's contribution.

The reason this happened on a one-person team isn't that I'm somehow more productive than other founders running marketing solo.

The reason is that the workflow does the production work and the human does the strategic and quality work.

That division of labor is the entire point of a content engine.

Founders who try to do both jobs (manual production AND quality control) hit a ceiling at maybe 1 piece per week.

Founders running an engine produce 2–4 pieces per week and spend their time on the strategic call about what should run next.

Worth noting the broader category dynamic: AI search visitors convert at 4.4x the rate of traditional organic, and the average ChatGPT prompt is 23 words versus 3.37 for traditional Google search — so the compound period is tracking against a discovery channel that itself is growing rapidly.

The traffic pattern that matters most

Here's the data point I want every founder reading this to internalize.

Of the top 1,000 ranked queries in our Search Console, 91% of total impressions come from non-branded search terms.

Branded queries (anything containing "averi" or close variants) account for 9% of impressions and 76% of clicks.

The asymmetry tells the story:

Branded queries (9% of impressions, 76% of clicks): people who already know us, searching for us by name. High click-through, high intent, low discovery value

Non-branded queries (91% of impressions, 24% of clicks): people who don't know us yet, finding us through long-tail content. Lower click-through (typical for AI-Overview-mediated queries), but this is the actual demand generation engine
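You can run the same split on your own Search Console export. This sketch assumes rows of (query, impressions, clicks) and treats any query containing a brand term as branded. The sample rows are made up to mirror the 9/91 and 76/24 proportions, and the brand-term list is an assumption you'd extend with your own close variants.

```python
def branded_split(rows, brand_terms=("averi",)):
    """Return branded and non-branded shares of impressions and clicks."""
    totals = {
        "branded": {"impressions": 0, "clicks": 0},
        "non_branded": {"impressions": 0, "clicks": 0},
    }
    for query, impressions, clicks in rows:
        is_branded = any(term in query.lower() for term in brand_terms)
        bucket = totals["branded" if is_branded else "non_branded"]
        bucket["impressions"] += impressions
        bucket["clicks"] += clicks

    shares = {}
    for metric in ("impressions", "clicks"):
        grand_total = sum(bucket[metric] for bucket in totals.values())
        for name, bucket in totals.items():
            shares[(name, metric)] = bucket[metric] / grand_total if grand_total else 0.0
    return shares


# Hypothetical export rows: (query, impressions, clicks).
rows = [
    ("averi ai", 90, 76),
    ("ai content engine", 600, 14),
    ("vibe marketing", 310, 10),
]
for key, share in branded_split(rows).items():
    print(key, f"{share:.0%}")
```

Substring matching is blunt. A brand name that is also a common word would need a stricter rule.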

Examples of real non-branded queries pulling impressions in the dataset: "ai marketing manager," "vibe marketing," "cursor for marketing," "ai content marketing platform," "llm content optimization," "content engineering," "ai content engine," "content marketing trends 2026," "ai content engineer."

These are the kinds of queries the Strategy Map originally surfaced — long-tail, intent-saturated, and currently low-competition for any startup willing to commit to the framework.

The reason this matters: a brand running mostly on branded search traffic is just running a customer service operation through Google.

A brand running 91% non-branded impressions is running a discovery engine.

That's the difference between content marketing as cost center and content marketing as growth function.

For more on the methodology behind surfacing these query patterns, see our Question Stack guide and our 7-Word Rule piece on long-tail keywords.

What didn't work

This is the section that gets cut from most case studies.

Including it because the actual workflow is at least 30% "we tried this, it didn't work, we stopped doing it."

Tier 2 head-term content. I published probably 10 pieces optimized for 3–4 word category terms in the first three months. None of them ranked. Two of them earned a handful of clicks each through being adjacent to better-ranking content. The rest are still sitting in the library doing nothing. I'd skip them entirely if I started over.

Listicles without strong original framing. "Top 10 AI marketing tools" type content didn't perform for us because the format is saturated and AI engines have plenty of authoritative sources to cite from. The listicles that did work were ones with original framing or proprietary research.

Cross-posting unmodified content to LinkedIn. Articles published as LinkedIn long-form without restructuring for the platform underperformed every time. The format that works on a blog (4,000-word pillar, FAQ-heavy, tier-4 question-driven) is not the format that works on LinkedIn (1,500-character, hook-first, single-frame insight). Once we built dedicated LinkedIn versions of pillar pieces, both surfaces grew.

Posting frequency above 20 pieces per month. We tried it for a month. Quality dropped. Refresh work fell behind. Composite scores started slipping from 80+ to the low 70s. Backed off to 10–15 pieces per month (roughly 2–4 per week) as the steady cadence and the engine stabilized.

Generic AI Overviews coverage. Early in the cycle, I published a piece on "what are Google AI Overviews" type content that competed with hundreds of similar definitions. It did nothing. The pieces that performed were the specific application angles ("how AI Overviews change B2B SaaS funnels," "the 7-word rule for AI Overviews") rather than the definition pages.

What compounded

The flip side: the work that produced disproportionate results.

Original frameworks with names. The Question Stack. The 7-Word Rule. The 1-80 fact density rule. Anything where we coined a term and built the canonical reference for it. AI search engines cite named frameworks at materially higher rates than generic explainers because the named-thing matches the buyer's query verbatim. LLMs only cite 2–7 domains per response — naming a framework is one of the few moves that consistently lands you in that tight citation window. If you can name a framework, name it, and own the page that defines it.

FAQ sections with FAQPage schema. FAQ sections get cited by AI at roughly 3x the rate of standard content sections. Every pillar got a 7-question FAQ. Every FAQ shipped with schema. The compound from this single move was probably 30%+ of total citation growth.

Counter-position pieces against larger competitors. "HubSpot AEO is real, but here's why startups shouldn't buy it." "AirOps is right about content engineers, here's the founder counter-flag." These pieces ranked faster than category-defining content because the comparison queries were lower-competition than head terms but higher-intent than long-tail informationals.

Internal linking density. Every piece linked to 15–25 other pieces in the library, weighted toward pillar pages. AI engines follow internal link graphs to assess topical authority. The pieces with deep internal link networks became citation magnets.

Refreshing the top 20 pieces every quarter. Stale content decays in AI search faster than it does in traditional search. The quarterly refresh sprint kept the highest-impression pieces in the cited set. Skip a quarter and you watch citations migrate to your competitors. The data backs this hard: pages that go more than three months without an update are 3x more likely to lose AI search visibility.

The Brand Core enforcement. Every piece sounded like Averi because every piece was conditioned on the same voice document. Brand consistency at scale is what separates AI-drafted content that gets cited from AI-drafted content that gets pattern-matched and ignored.

Why this is the case study only Averi can tell

A quick honest moment about positioning. I'm aware this piece functions as a sales asset.

Every founder reading it is going to think "is this just an Averi pitch in editorial clothing?"

The answer is: it's both, and the temporal sequence is the part that makes it credible.

The first half of these results came from a manual workflow I built before Averi existed as a product.

The second half came from running on Averi after we'd built the platform around the workflow that was working.

So the case study isn't "we built a product and it produced these results."

It's "I built a manual system that produced these results, then we productized it, and now anyone can run the same system without the duct tape."

That's the Salesforce / Notion / Superhuman pattern: founder hits a personal pain, builds the solution, productizes it.

AirOps publishes case studies featuring Webflow, Wiz, and Vanta — companies running their cohort program with established marketing teams.

Jasper publishes Forrester research showing efficiency gains for enterprise content teams.

Both are real and useful for their target buyer.

Neither answers the question that matters for a seed-to-Series-A founder running marketing solo: "what does one person + this system actually look like in practice?"

The category context: 42% of CRM software buyers now use AI search as part of their evaluation process, and the buyer behavior shift this case study reflects is happening at scale across every category.

Averi is the only company in the AI content space that can answer that question with primary-source evidence, because we are that one person, and we built the product out of the workflow that produced these numbers — not the other way around.

Every screenshot in this piece is from our actual Search Console, our actual platform, our actual content library. The numbers aren't from a customer success team's case study deck.

They're from the same workflow you'd run on day one of an Averi trial.

The framework you just read is the same six-phase workflow that ships in every Averi Solo subscription:

Brand Core: configure once, conditions every draft thereafter (manually built first, then productized into a configuration UI)

Strategy Map: ICPs, competitive set, topic pillars, Question Stack — auto-generated (manually built first as a 12-page Notion doc, now generated from a website scrape)

Content Queue: tier-3 and tier-4 topics surfaced weekly with target keywords and outlines (manually pulled from PAA, Reddit, Search Console for six months, now auto-pulled)

Drafting + Scoring: composite SEO + GEO score 0–100, 80+ floor for publish (manually scored against a 12-point checklist for six months, now scored automatically)

Publishing: direct to Webflow, Framer, or WordPress with proper schema by default (manually copy-pasted JSON-LD blocks for six months, now applied by default)

Analytics + Refresh: GSC + GA + AI citation tracking unified, quarterly refresh queue surfaced (manually screenshotted citation queries every Friday for six months, now tracked in a unified dashboard)

That's $99/mo for the same system that produced 10.6M+ Google impressions in 12 months on a one-person team — without the duct tape.

No annual contract. 14-day free trial.

The product exists because the manual workflow worked. The workflow now works without the manual labor.

For the broader argument on the buy-vs-hire decision specifically, see our piece "Content Engineer for Startups: Buy vs Hire," which walks through the math on why the system replaces the role at the founder stage.

What to do this week if you're at month zero

If you're starting from where Averi started — minimal impressions, no library, no team — here's the actual order of operations.

I did all of this manually in 2025 (badly, slowly, with a lot of context switching).

You can do it manually too, or you can run it on the platform we built out of that manual process.

Either path works.

The platform just compresses six months of foundation work into about a week.

Configure your Brand Core. Voice, positioning, banned vocabulary, competitive set, anchor proof points. This is the document everything else reads from. Manual version: a structured Notion doc you paste into every AI prompt. Platform version: 30 minutes of guided setup, applied automatically.

Run a Strategy Map. Generate ICPs, competitive set, topic pillars, and the first 50-question Question Stack for your primary ICP. Manual version: three weeks of customer interviews and competitor audits. Platform version: auto-generated from a website scrape.

Build your first 4 weeks of Content Queue. Pick the topics that match your tier-3 and tier-4 priorities. Reject anything that's tier-2 head-term content unless it's strategically necessary. Manual version: weekly Sunday-night ritual pulling from PAA, Reddit, Search Console, competitors. Platform version: auto-pulled, weekly approval review.

Set the 80+ score floor. Every piece scores 80+ on the composite scale before publish. Below that, rebuild it. Manual version: a 12-point checklist run against every draft. Platform version: composite score shown next to every draft.

Don't look at impressions for 90 days. Seriously. The first 4 months will look like nothing is happening, and the temptation to abandon the workflow will be highest in months 2–3 when the data is jagged and small. Trust the process. This part is identical whether you're running manually or on a platform.

Schedule the first refresh sprint at month 4. Pick the top 5 pieces by impressions. Update factual content, expand FAQs, rebalance internal links, upgrade schema.

Track AI citations weekly. Manually query ChatGPT, Perplexity, and Gemini on your top 10 category questions. This is your share-of-voice dashboard until automated tracking matures. (I did this manually every Friday for six months. It's tedious but irreplaceable as a habit.)
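The Friday citation check can live in a spreadsheet, but a few lines of code make the tally repeatable. This is a rough sketch, assuming you log one record per engine per question by hand; the record fields (`engine`, `question`, `cited_brands`) and the brand names in the usage note are hypothetical, and nothing here calls any AI engine's API.

```python
from collections import Counter


def _cites(obs: dict, brand: str) -> bool:
    """True if the logged answer cited the brand (case-insensitive)."""
    return brand.lower() in {name.lower() for name in obs["cited_brands"]}


def share_of_voice(observations: list[dict], brand: str) -> float:
    """Fraction of all logged answers that cite the brand."""
    if not observations:
        return 0.0
    return sum(_cites(obs, brand) for obs in observations) / len(observations)


def citation_rate_by_engine(observations: list[dict], brand: str) -> dict[str, float]:
    """Same fraction, broken out per engine."""
    totals, hits = Counter(), Counter()
    for obs in observations:
        totals[obs["engine"]] += 1
        if _cites(obs, brand):
            hits[obs["engine"]] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}
```

With 10 category questions across ChatGPT, Perplexity, and Gemini, one week produces 30 records. A record might look like `{"engine": "Perplexity", "question": "...", "cited_brands": ["ExampleCo", "OtherCo"]}`, and comparing `share_of_voice(log, "ExampleCo")` week over week gives the trend line.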

That's the workflow.

It's not glamorous. It's not a 10x growth hack. It's compound effects from a system that runs whether you feel like running it or not.

If you want this baked into your stack instead of running it as a manual project (which is how I ran it for the first six months of these results, and which I do not recommend if you have any other option), start a free 14-day Averi trial.

30 minutes to configure Brand Core and Strategy Map. First piece scored and published within the first week. Same workflow we ran on ourselves — without the hassle.

FAQs

Was Averi the platform running from day one of these results?

No, and the temporal sequence is part of why these numbers are credible. The first half of the 12-month period ran on a manual workflow I'd cobbled together using a stack of AI tools (Claude, GPT, Perplexity), Notion databases, Looker Studio dashboards, and a lot of Sunday-night ritual work. Once the manual workflow was producing real compound, we productized it into Averi. The second half of the period ran on Averi itself, which is what we use now for all our content marketing. The growth happened because the workflow worked — the platform exists because the workflow worked.

Was Averi's organic search growth driven by paid advertising?

No. Google Search Console impressions are organic search visibility — Google doesn't log paid ad impressions there. The 10.6M impressions and 27,464 organic clicks came from organic search results across the 12-month period. The growth on the organic side was driven entirely by content optimized for both traditional SEO and AI search citation (GEO).

How many pieces of content did Averi publish to hit these numbers?

Roughly 100–150 published pieces over the 12-month period at a steady cadence of 2–4 pieces per week after the first month. Every piece shipped with FAQPage schema, Article schema, and proper Organization schema. The cadence stayed consistent rather than spiking — quality at 80+ composite score was the constraint, not volume.
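For readers who haven't hand-built schema before, this is a minimal sketch of generating a FAQPage JSON-LD block from question and answer pairs. The structure follows the public schema.org FAQPage type; the function name is my own, and this is not a description of how Averi generates schema internally.

```python
import json


def faq_page_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


print(
    faq_page_jsonld(
        [
            (
                "Was Averi's organic search growth driven by paid advertising?",
                "No. Google Search Console impressions are organic search visibility.",
            )
        ]
    )
)
```

The output goes inside a `<script type="application/ld+json">` tag in the page's HTML. The questions and answers in the schema should match the FAQ text visible on the page.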

Can a one-person marketing team actually replicate this?

Yes, that's the entire point of the case study. The workflow runs as a system rather than as a series of manual tasks, which is what makes it operable by one person. The bottleneck for solo founders isn't writing capacity; it's decision-making capacity (what to write, how to score it, when to refresh). The engine handles those recurring decisions, and the human stays focused on the strategic and quality calls. The founder running this in 2025 (me) had to do it manually for six months. The founder running this in 2026 starts with the engine already built.

What's the realistic timeline before content marketing produces results for a startup?

Plan for a relatively quiet phase of about 4 months, then a visible inflection somewhere in months 5–6, with compound effects from month 7 onward. The work is real from day one, but for a new domain the impressions that show up in Search Console lag the publishing work by 60–120 days. Founders who quit content marketing early almost always quit during the quiet phase, when the workflow is producing compound but the dashboard hasn't caught up yet. That lag is the single most underrated reason content programs fail.

Why did Averi's growth happen without a content engineer hire?

The workflow is the system a content engineer would build over their first 90 days. I built a manual version of that system over six months in 2025 because no platform did the whole loop. Buying a platform that does the whole loop now compresses the timeline from "six months of duct tape, then results" to "day-one outputs from a system already built." For a seed-to-Series-A startup, the math runs decisively toward buying the engine: a fully loaded content engineer hire costs $200K+ per year, while the equivalent engine costs $99/month. For the full breakdown, see our piece "Content Engineer for Startups: Buy vs Hire."
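The arithmetic behind that comparison, as a quick sketch. Both inputs are the figures quoted in the answer above; the $200K is treated as a floor because the source says "$200K+", and the variable names are mine.

```python
HIRE_COST_PER_YEAR = 200_000   # "$200K+" fully loaded hire, taken as a floor
PLATFORM_COST_PER_MONTH = 99   # quoted monthly price

# Annualize the subscription so both sides use the same unit.
platform_cost_per_year = PLATFORM_COST_PER_MONTH * 12
cost_ratio = HIRE_COST_PER_YEAR / platform_cost_per_year

print(f"Platform per year: ${platform_cost_per_year:,}")
print(f"Hire costs at least {cost_ratio:.0f}x the platform")
```

That works out to $1,188 per year for the platform, so the hire costs at least 168 times as much. This compares sticker prices only and leaves out the founder's own hours running the system.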

What's the single biggest factor in Averi's organic search growth?

Tier-4 long-tail question content with FAQPage schema. Pieces that answered specific 7+ word buyer questions in dedicated FAQ sections, scored 80+ on the composite SEO + GEO scale, and shipped with proper schema produced disproportionately high citations and impressions versus head-term content. The 7-Word Rule and the FAQ schema layer together are probably 50%+ of the eventual organic search growth — which shows up most clearly in the fact that 91% of total impressions come from non-branded queries.
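To check these two numbers against your own Search Console export, a sketch along these lines is enough. It assumes the 7-Word Rule means queries of seven or more words, and it assumes each row has hypothetical `query` and `impressions` fields; the brand-term match is a plain substring check, which is cruder than whatever a real dashboard would use.

```python
def is_long_tail(query: str, min_words: int = 7) -> bool:
    """True if the query has at least `min_words` whitespace-separated words."""
    return len(query.split()) >= min_words


def non_branded_share(rows: list[dict], brand_terms: list[str]) -> float:
    """Fraction of impressions from queries containing none of the brand terms."""
    total = sum(row["impressions"] for row in rows)
    if total == 0:
        return 0.0
    non_branded = sum(
        row["impressions"]
        for row in rows
        if not any(term.lower() in row["query"].lower() for term in brand_terms)
    )
    return non_branded / total


def long_tail_share(rows: list[dict], min_words: int = 7) -> float:
    """Fraction of impressions from long-tail queries."""
    total = sum(row["impressions"] for row in rows)
    if total == 0:
        return 0.0
    long_tail = sum(
        row["impressions"] for row in rows if is_long_tail(row["query"], min_words)
    )
    return long_tail / total
```

Run both functions over the same export each month. If the non-branded share is climbing while the long-tail share holds steady or grows, the tier-4 content is doing the work described above.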

Run the same six-phase workflow on your site. Brand Core in 30 minutes, Strategy Map by end of day, first piece scored and published within the week. $99/mo, no contract, 14-day free trial. Start your free trial →