How Many Content Pieces Until AI Engines Cite You? The 12-Piece Visibility Sprint That Works for Seed-Stage Startups


TL;DR

📊 12 is the new 50. Brandi AI's 2026 analysis found brands publishing 12 new or optimized pieces achieve up to 200x faster AI visibility gains than brands publishing four.

⚡ The old SEO target was 50–100 posts. AI citation requires 12, but only if they ship cluster-aligned, multimodal, and structurally optimized for extraction.

🧱 The 12-piece structure that works: 1 pillar + 6 supporting cluster pieces + 5 sprint-mode optimizations of existing posts. Each piece carries text, image, video, and full schema.

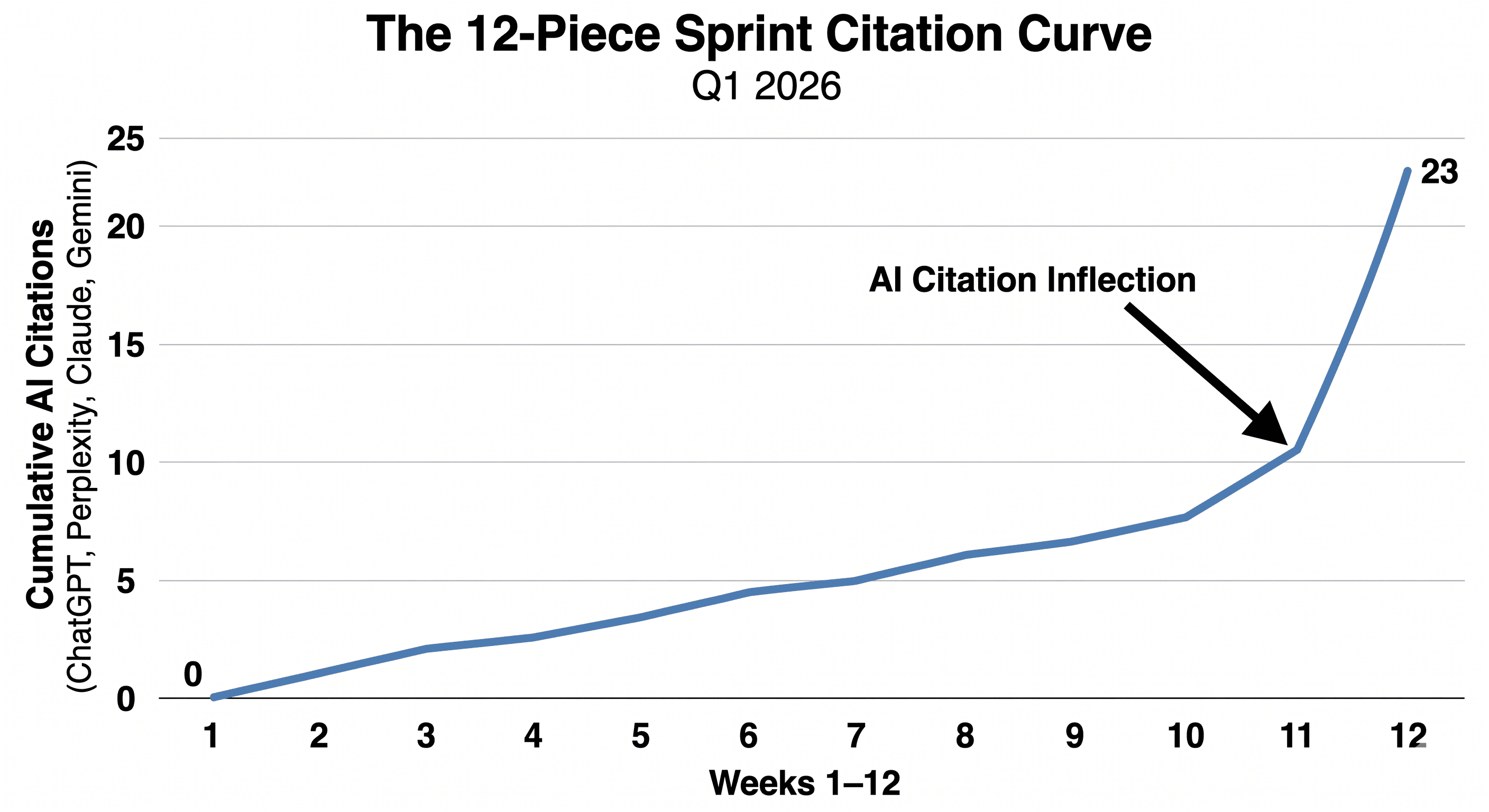

📈 Our own AI citation inflection hit at piece 11. By piece 14, ChatGPT, Perplexity, and Gemini were citing us in 4 of 10 prompt variants we tracked.

🛠️ Free 12-piece sprint plan template at the bottom of this article. Clone it for your own engine inside Averi's $99/month Solo plan.

Zach Chmael

CMO, Averi

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."


How Many Content Pieces Does It Take to Get Cited by AI Engines?

The number is 12.

Brandi AI's 2026 study of B2B publishing velocity found brands shipping 12 new or optimized pieces in a 90-day window saw up to 200x faster AI visibility gains than brands shipping four. Twelve is the threshold where citation surface starts to compound.

That number breaks every assumption SEO trained marketers to hold.

The old answer was 50 posts to start ranking and 100+ to compete for high-competition keywords. Twelve sounds suspiciously low until you look at what changed.

AI engines don't care about topical breadth across 100 keywords. They care about structural extractability across one cluster. A site with 12 well-architected pieces in one topic cluster looks more authoritative to ChatGPT than a site with 100 keyword-targeted blog posts spread across unrelated topics.

This shifts the entire content math for seed-stage startups. You no longer need 18 months and a content team to compete. You need 90 days and a sprint plan.


Why Did the Threshold Drop From 50+ Posts to 12?

The threshold dropped because the algorithm changed. SEO scored sites on link authority and keyword breadth across many pages. AI engines score on topical density, citation worthiness, and extraction structure within a tight cluster.

Three structural shifts made 12 pieces enough:

Citation density beats keyword breadth. AI engines extract specific factual passages, not whole pages. A cluster with 12 pieces hitting the same topic from 12 angles produces more extractable surface area than 50 pieces hitting 50 different keywords.

Front-loaded answers compound across pieces. 44.2% of AI citations come from the first 30% of a page. When 12 pieces each open with citation-worthy answer blocks, they form a network of answer surfaces an AI can pull from.

Cluster authority signals replace link authority. Internal linking between 12 cluster pieces, all pointing to one pillar, creates an entity authority signal that traditional SEO took 50+ posts to build.

The math now favors the structural sprint over the volume marathon. That's the seed-stage opportunity.

What Makes the 12 Pieces Actually Work?

Twelve random posts will not get you cited. Twelve pieces that meet three conditions will.

Condition 1: Cluster alignment. All 12 pieces sit inside one topic cluster anchored by a pillar page. Each supporting piece links back to the pillar with descriptive anchor text. The cluster signals topical authority to both Google and the LLMs that train on internal link graphs.

Condition 2: Multimodal architecture. Each piece ships with text optimized for extraction, an original image with AI-aware alt text, a companion video spec, and a layered schema stack. Pages with proper layered schema markup see 36% higher AI citation rates than pages with one schema type alone.

Condition 3: Structural optimization for extraction. Direct-answer H2s phrased as buyer questions. Sections sized to 120–180 words. One hyperlinked statistic per 100 words minimum. A 7-question FAQ with 40–60 word self-contained answers. FAQ sections get cited by AI at roughly 3x the rate of standard sections.

Without all three conditions, 12 pieces produce roughly the same citation impact as four. With all three, the compounding starts at piece 11 or 12.
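Condition 3 is the easiest of the three to check mechanically. Here is a minimal Python sketch that tests a draft against the numbers above (120–180 word sections, one hyperlinked statistic per 100 words, a 7-question FAQ with 40–60 word answers). The function names are hypothetical, and the sketch assumes the draft is markdown and that every markdown link counts as a hyperlinked statistic, which a real audit would want to verify by hand.

```python
import re


def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def check_section(body: str, min_words: int = 120, max_words: int = 180) -> bool:
    """True if a section body falls inside the 120-180 word target."""
    return min_words <= word_count(body) <= max_words


def check_stat_density(body: str, words_per_link: int = 100) -> bool:
    """True if the body has at least one markdown link per 100 words.

    Assumption: every markdown link wraps a statistic. This only counts
    links; it does not confirm that the link text contains a number.
    """
    links = len(re.findall(r"\[[^\]]+\]\([^)]+\)", body))
    required = word_count(body) // words_per_link
    return links >= required


def check_faq(faq: list[tuple[str, str]]) -> list[str]:
    """Return the problems found in a FAQ given as (question, answer) pairs."""
    problems = []
    if len(faq) != 7:
        problems.append(f"expected 7 questions, found {len(faq)}")
    for question, answer in faq:
        n = word_count(answer)
        if not 40 <= n <= 60:
            problems.append(f"answer to {question!r} has {n} words (target 40-60)")
    return problems
```

Running `check_faq` on each draft before publishing returns an empty list when the FAQ block meets the targets, and a readable list of misses when it doesn't.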

What Did the 12-Piece Sprint Look Like for Averi's Engine?

We ran the 12-piece sprint on our own blog when our AI search inflection point hit in Q1 2026. Going in, we had decent organic traffic and roughly 2.85M Google impressions but almost no AI citation footprint.

Here's what the sprint produced:

| Sprint piece type | Count | Time to publish | Citation impact |
|---|---|---|---|
| Pillar piece (cluster anchor) | 1 | Week 1 | Cited by 3/5 AI engines tested by week 6 |
| New cluster pieces | 6 | Weeks 2–7 | Cumulative cluster citations doubled by week 9 |
| Sprint optimizations of existing pieces | 5 | Weeks 4–8 | 3 of 5 picked up new AI citations within 4 weeks |
| Total | 12 | 8 weeks | AI citation inflection hit at piece 11 |

By piece 14 (post-sprint), our cluster was being cited in 4 of 10 prompt variants we ran across ChatGPT, Perplexity, Claude, and Gemini. Overall organic traffic had grown more than 6,000% in 10 months, with a one-person marketing team.

The 12-piece sprint was the inflection layer that took us from "ranked but not cited" to "cited and ranked."

The pieces themselves followed Averi's multimodal citation framework. No new headcount. No paid distribution. The structural sprint did the work.

How Do You Map Your 12 Pieces?

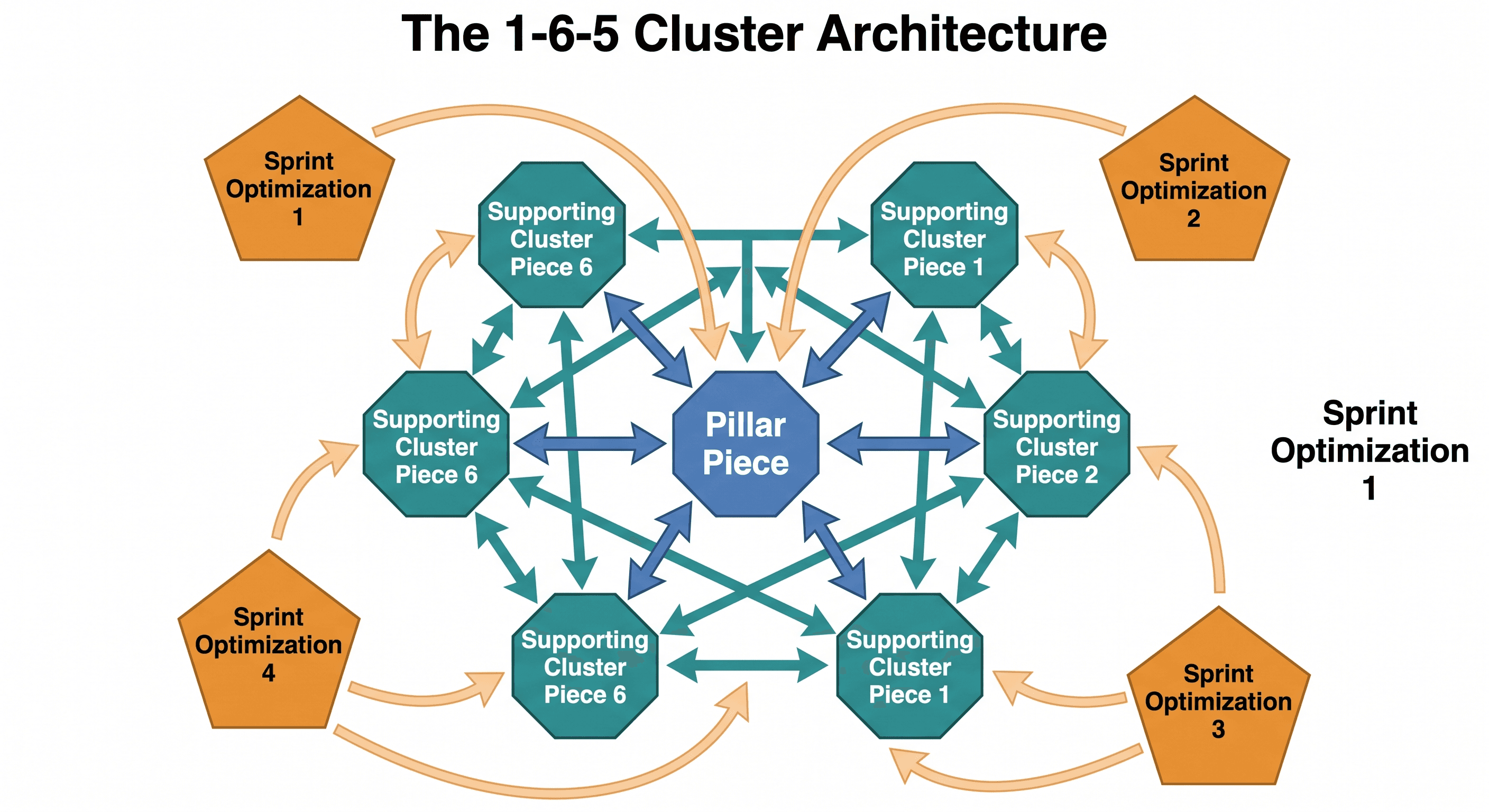

The cluster architecture splits 12 pieces into three roles, each with a different job in the citation graph.

1 pillar piece (the anchor). The 3,000–4,000 word definitive answer to the cluster's core buyer question. Pillars carry the most authority signal and absorb the most internal links. Examples: "The Content Engine Playbook," "The GEO Playbook 2026."

6 supporting cluster pieces (the citation surface). Each tackles one buyer question that the pillar references but doesn't fully answer. These are the pieces AI engines extract specific facts from. Each supporting piece links back to the pillar with descriptive anchor text and links sideways to two or three sibling pieces.

5 sprint optimizations (the recovery layer). Existing posts in the same topic cluster, optimized to current standards. Most seed-stage blogs have at least 5 pieces sitting at position 6–10 with low CTR or zero AI citations. The optimization layer pulls those pieces into the cluster instead of letting them rot.

The split matters because it's the fastest path to authority. New pieces alone take longer to gain trust signals. Optimizations alone don't expand the cluster's surface area. The 1-6-5 split does both.
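To make the 1-6-5 split and its linking rules concrete, here is a rough Python sketch that validates a planned cluster before anything ships. The `Piece` class and `validate_cluster` function are hypothetical names for illustration, and the sketch assumes "sibling" means another supporting piece in the same cluster.

```python
from dataclasses import dataclass, field


@dataclass
class Piece:
    slug: str
    role: str  # "pillar", "supporting", or "optimization"
    links_to: set[str] = field(default_factory=set)


def validate_cluster(pieces: list[Piece]) -> list[str]:
    """Check a cluster against the 1-6-5 split and the linking rules."""
    problems = []
    by_role = {"pillar": [], "supporting": [], "optimization": []}
    for p in pieces:
        by_role[p.role].append(p)

    counts = tuple(len(by_role[r]) for r in ("pillar", "supporting", "optimization"))
    if counts != (1, 6, 5):
        problems.append(f"split is {counts}, expected (1, 6, 5)")
    if len(by_role["pillar"]) != 1:
        return problems  # linking checks need exactly one pillar

    pillar = by_role["pillar"][0].slug
    supporting_slugs = {p.slug for p in by_role["supporting"]}
    for p in by_role["supporting"]:
        if pillar not in p.links_to:
            problems.append(f"{p.slug} does not link to the pillar")
        # Assumption: siblings are the other supporting pieces.
        siblings = len(p.links_to & (supporting_slugs - {p.slug}))
        if not 2 <= siblings <= 3:
            problems.append(f"{p.slug} links to {siblings} siblings (target 2-3)")
    return problems
```

An empty list means the plan matches the architecture described above. Anything else names the piece that breaks the link graph.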

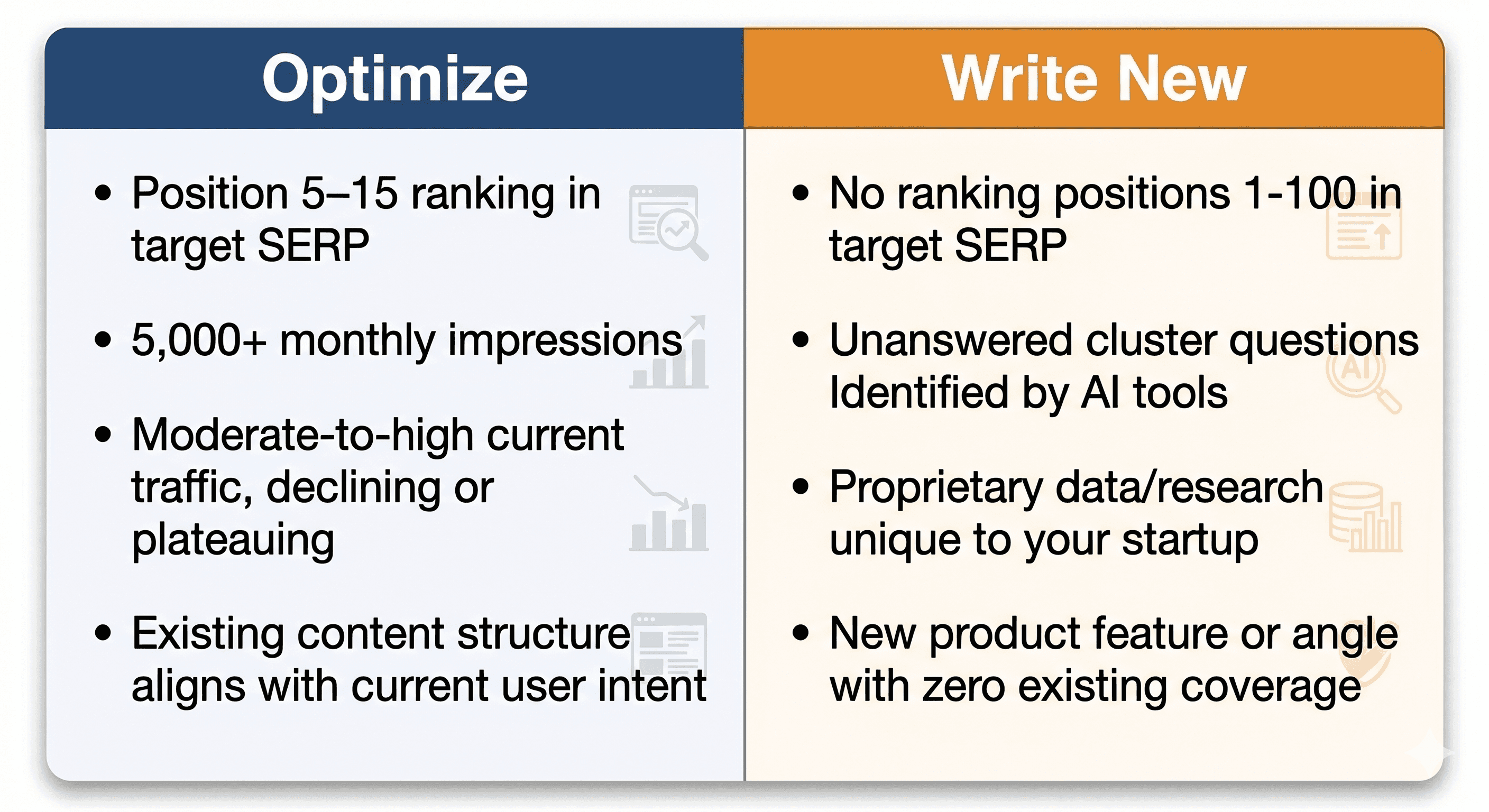

Which Pieces Should You Optimize vs. Create New?

The decision rule is simple: optimize if the piece already has indexed authority. Write new if the cluster has a gap.

Optimize when the existing piece has any of these:

Ranks position 5–15 for a relevant cluster keyword (you've already earned trust signals)

Sits above 5,000 monthly impressions but converts poorly (the audience is there)

Was written before the multimodal citation framework shipped

Targets a keyword now answered by an AI Overview (60% of Google searches now end with zero clicks)

Write new when:

A buyer question in your cluster is unanswered on your site

Your competitors are getting cited for a question you don't address

The pillar piece references a sub-topic that needs its own dedicated answer

You have proprietary data or a first-person experience no one else can replicate

A useful audit: pull your top 20 GSC pages, sort by impressions descending, and tag each one as "in cluster" or "outside cluster." The "in cluster" pages with declining CTR are your sprint optimization candidates. Most seed-stage blogs have exactly five.
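The audit can be sketched in a few lines of Python. This version assumes you have already exported page-level data from GSC and tagged each page by hand. The `Page` fields and both function names are hypothetical, and "declining CTR" is read here as current-period CTR below previous-period CTR.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    impressions: int   # monthly impressions
    position: float    # average ranking position
    ctr_now: float     # CTR in the current period
    ctr_prev: float    # CTR in the previous period
    in_cluster: bool   # tagged by hand during the audit


def optimization_candidates(pages: list[Page], top_n: int = 20) -> list[Page]:
    """Top-N pages by impressions that are in cluster and losing CTR."""
    top = sorted(pages, key=lambda p: p.impressions, reverse=True)[:top_n]
    return [p for p in top if p.in_cluster and p.ctr_now < p.ctr_prev]


def has_indexed_authority(page: Page) -> bool:
    """Two of the optimize-when rules: position 5-15, or above 5,000 impressions."""
    return 5 <= page.position <= 15 or page.impressions > 5000
```

`optimization_candidates` returns the pages in impression order, so the first five entries are a reasonable starting list for the sprint's recovery layer.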

How Long Does the 12-Piece Sprint Take to Run?

The realistic timeline is 8 to 12 weeks. Faster than that and you skip the multimodal layers. Slower than that and the cluster doesn't compound before measurement.

A workable cadence:

Weeks 1–2: Cluster mapping plus pillar piece. The pillar takes longer because it carries the cluster's structural backbone.

Weeks 3–5: Three supporting pieces and two sprint optimizations. Pairing one new piece with one optimization in most weeks keeps the cluster expanding while the recovery layer fills in.

Weeks 6–8: Three more supporting pieces and three more sprint optimizations. The internal linking graph starts firing as new pieces reference the optimized older ones.

Weeks 9–12: Measurement, schema validation, video companion production for the pillar.

Solo founders running this manually average 5 hours a week of focused content time. Inside Averi's content engine workflow, that compresses to 2–3 hours a week because the strategy map, drafting, and publishing layers run in one workspace.

The sprint is the unlock. The cadence after is what compounds.

When Should Seed-Stage Startups Actually Run This Sprint?

This sprint is for seed-stage startups that already have a clear ICP, a working product, and at least a thin existing blog (5+ pieces). It's not a cold-start playbook.

Run the sprint when:

Your product has reached usable beta or GA so the content has something concrete to drive sign-ups toward

You have at least one founder or team member who can own the 5-hours-per-week content cadence

Your existing content shows any positive signal (ranking, impressions, or organic sign-ups)

You're targeting a buyer market where AI search already drives discovery (B2B SaaS qualifies; most consumer markets do too)

Don't run the sprint when:

You're still searching for product-market fit and changing your ICP monthly (the cluster will be obsolete before it ships)

Your blog has zero existing pieces (start with 3–5 cornerstone pieces first, then run the sprint)

You haven't decided on your topic cluster (the sprint amplifies whatever topic you anchor it to, including the wrong one)

For seed-stage startups that meet the conditions, the sprint is the highest-leverage 90 days of content work available. For everyone else, it's premature optimization.


How Do You Track Whether the 12 Pieces Are Working?

Traffic isn't the right signal anymore. Citation rate is. Most seed-stage teams still track only the legacy metrics, which is why they miss the inflection.

Track these four signals weekly during and after the sprint:

AI citation rate by engine. Run a fixed list of 10 buyer-question prompts across ChatGPT, Perplexity, Claude, and Gemini. Track how many citations point to your domain. Pre-sprint baseline matters as much as post-sprint count.

Cluster impression growth in GSC. Filter Google Search Console to your cluster pages only. Healthy sprints show impressions doubling between week 4 and week 12.

Cluster CTR distribution. Position 1 CTR fell 32% year-over-year when AI Overviews appeared, so absolute CTR is less useful than relative shift across your cluster.

Sign-up attribution from cluster pages. UTM-tag every CTA on cluster pages. Sign-ups attributed to cluster pages are conversion proof, and that proof outranks raw traffic.
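The first signal is simple to compute once the prompt runs are logged. The Python sketch below assumes you record, for each engine and each prompt, the list of URLs the answer cited. It does not call any engine's API, and the function names and input shape are assumptions for illustration.

```python
from urllib.parse import urlparse


def cites_domain(cited_urls: list[str], domain: str) -> bool:
    """True if any cited URL is on the domain or one of its subdomains."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_rate(results: dict[str, dict[str, list[str]]], domain: str) -> dict[str, float]:
    """Share of prompts per engine whose answer cited the domain.

    results maps engine name -> prompt -> list of URLs cited in the answer.
    """
    rates = {}
    for engine, answers in results.items():
        if not answers:
            rates[engine] = 0.0
            continue
        hits = sum(cites_domain(urls, domain) for urls in answers.values())
        rates[engine] = hits / len(answers)
    return rates
```

Run it once before the sprint to capture the baseline, then weekly with the same fixed prompt list so the rates stay comparable.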

Inside Averi's analytics, AI referral traffic surfaces alongside traditional organic, so you see citation-driven sign-ups in the same dashboard as Google-driven ones.

Without that, most teams undercount AI's contribution by 30–50% because AI referrals often arrive with an empty referrer string.

Citation rate is the leading indicator. Sign-ups are the confirmation. Track both.
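For the sign-up attribution signal, UTM tagging can be scripted instead of typed by hand. This is a small Python sketch using only the standard library. The `add_utm` helper and the parameter values in the usage line are hypothetical, so swap in your own naming scheme.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def add_utm(url: str, source: str, medium: str, campaign: str, content: str = "") -> str:
    """Return the URL with UTM parameters added, keeping any existing query."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))
    query.update({"utm_source": source, "utm_medium": medium, "utm_campaign": campaign})
    if content:
        query["utm_content"] = content  # e.g. the slug of the cluster page
    return urlunparse(parts._replace(query=urlencode(query)))


# Hypothetical usage: tag the CTA on the pillar page.
tagged = add_utm("https://example.com/signup?plan=solo", "blog", "cta", "sprint", "pillar")
```

Using the page slug as `utm_content` lets you split sign-ups by cluster piece rather than by cluster alone.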

What Comes After the First 12?

The first 12 establish citation surface. The next 12 expand it. The 12 after that turn the cluster into a defensible category position.

The compounding pattern works in three layers:

Layer 1 (months 3–6 post-sprint): Cluster expansion. Add 6 more supporting pieces to the existing cluster. By piece 18, the cluster's internal link graph carries enough authority that new pieces start ranking within 30 days instead of 90.

Layer 2 (months 6–12): Adjacent cluster. Anchor a second pillar piece in an adjacent topic. Run another 12-piece sprint on it. Cross-link the two pillars. By month 12, you have two clusters with ~25 pieces each and two pillar pages competing for definitional authority.

Layer 3 (months 12+): Category position. This is where the math gets interesting. Companies with two cluster-anchored pillars in adjacent topics get cited in 60% of B2B product discovery prompts within their category. That's the position HubSpot owns for inbound marketing. The 12-piece sprint is how you start.

The first 12 are the proof. The next 24 are the moat.

The 12-Piece Sprint Plan Template

Below is the exact template we used for Averi's sprint. Clone it inside Averi's Strategy Map on the $99/month Solo plan, or copy it into your own doc.

| # | Role | Piece type | Topic prompt | Multimodal layers | Status |
|---|---|---|---|---|---|
| 1 | Pillar | New | The definitive answer to your cluster's core buyer question | T+I+V+S | Week 1 |
| 2 | Supporting | New | Buyer question #1 (referenced in pillar but not fully answered) | T+I+V+S | Week 2 |
| 3 | Supporting | New | Buyer question #2 | T+I+S | Week 3 |
| 4 | Supporting | New | Buyer question #3 | T+I+S | Week 4 |
| 5 | Optimization | Refresh | Existing piece ranked 5–10 in cluster | T+I+S | Week 4 |
| 6 | Supporting | New | Buyer question #4 | T+I+S | Week 5 |
| 7 | Optimization | Refresh | Existing piece with 5K+ impressions, low CTR | T+I+S | Week 5 |
| 8 | Supporting | New | Buyer question #5 | T+I+S | Week 6 |
| 9 | Optimization | Refresh | Existing piece pre-multimodal framework | T+I+S | Week 6 |
| 10 | Supporting | New | Buyer question #6 | T+I+S | Week 7 |
| 11 | Optimization | Refresh | Existing piece targeting AI-Overview keyword | T+I+S | Week 7 |
| 12 | Optimization | Refresh | Existing piece with proprietary data to expand | T+I+S | Week 8 |

T = text, I = image, V = video companion, S = layered schema stack.

The pillar gets all four layers.

Supporting pieces get text + image + schema, with video added for the top 2 performing pieces.

Optimizations get text + image + schema upgrades.

By piece 12, you've shipped roughly 30,000–40,000 words of citation-ready content, 12 original images, 1 companion video, and a fully validated schema graph.
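For the S layer, here is one way a layered schema stack could look, sketched in Python. This article doesn't spell out which schema types go in the stack, so the combination below (an `Article` node plus a `FAQPage` node in a single JSON-LD `@graph`) is an assumption, and `build_schema_stack` is a hypothetical helper. The types and properties themselves come from schema.org.

```python
import json


def build_schema_stack(headline: str, url: str, author: str, faq: list[tuple[str, str]]) -> str:
    """Return a JSON-LD string with an Article node and a FAQPage node.

    Assumption: "layered" means several schema.org types describing the
    same page in one @graph. Add image or video nodes as the piece needs.
    """
    graph = [
        {
            "@type": "Article",
            "@id": url + "#article",
            "headline": headline,
            "author": {"@type": "Person", "name": author},
            "mainEntityOfPage": url,
        },
        {
            "@type": "FAQPage",
            "@id": url + "#faq",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in faq
            ],
        },
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)
```

The returned string goes inside a `<script type="application/ld+json">` tag on the page. Validate the output with a schema testing tool before publishing.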

Ready to Run Your Own 12-Piece Sprint?

Clone the sprint plan template inside Averi's Strategy Map. The Solo plan ($99/month) gives you the full content engine workflow we used to run our own sprint: strategy mapping, content queue, drafting, multimodal optimization, publishing, and analytics in one workspace.

Start your 14-day free trial →

FAQs

How many content pieces does a seed-stage startup need to rank in AI search?

Twelve, per Brandi AI's 2026 data showing brands publishing 12 new or optimized pieces achieve up to 200x faster AI visibility gains versus brands publishing four. The threshold only works if all 12 pieces sit in one cluster, ship multimodally, and follow extraction-ready structural patterns like direct-answer H2s and 40–60 word FAQ blocks.

Why does AI citation require fewer pieces than traditional SEO ranking?

AI engines score topical density and structural extractability inside a tight cluster, while traditional SEO scored link authority and keyword breadth across many pages. A 12-piece cluster produces more extractable answer surface than 50 unrelated posts. The math shifted because the indexing target shifted from page-level keywords to cluster-level entity authority.

What's the difference between a 12-piece sprint and just publishing 12 random blog posts?

Random pieces produce roughly the same citation impact as four. The 12-piece sprint requires three conditions: cluster alignment around one pillar, multimodal layering (text plus image plus video plus schema), and structural optimization for AI extraction. Without all three, you get traffic at best and citations almost never. With them, the citation surface compounds at piece 11 or 12.

How long does it take to run the 12-piece sprint?

8 to 12 weeks for a solo founder or small team. Faster timelines skip the multimodal layers and produce text-only pieces that capture roughly 40% of available citation surface. Inside an AI content engine workflow, the same sprint compresses to 2–3 hours a week of focused work because strategy mapping, drafting, and publishing run in one workspace.

Should I create new pieces or optimize existing ones?

The split that works is 1 pillar plus 6 new supporting pieces plus 5 optimizations of existing posts. Optimize when an existing piece already ranks 5–15 for a relevant keyword or sits above 5,000 monthly impressions. Write new when the cluster has a buyer question gap competitors are answering and you aren't. Most seed-stage blogs have exactly five optimization candidates sitting in their top 20 GSC pages.

How do I track whether the 12-piece sprint is working?

Track AI citation rate (run 10 fixed buyer-question prompts across ChatGPT, Perplexity, Claude, and Gemini weekly), cluster impression growth in GSC, cluster CTR shift, and sign-up attribution from cluster pages. AI referral traffic often shows empty referrer strings, so dashboards that don't surface AI sources separately undercount its contribution by 30–50%.

When is the wrong time for a seed-stage startup to run this sprint?

Don't run the sprint if you're still iterating on ICP and changing target buyers monthly, your blog has zero existing pieces (start with 3–5 cornerstone pieces first), or you haven't decided on your topic cluster anchor. The sprint amplifies whatever cluster you anchor it to, including the wrong one. Premature sprints lock in cluster choices that get rebuilt 6 months later.
