The Multimodal Content Cluster: How One Topic Becomes Citable Across ChatGPT, Perplexity, and Google AI Overviews

Zach Chmael

Head of Marketing

8 minutes

A blog post is now a multimodal cluster — text, image, video, schema. Each format is a citation surface. Here's the 4-layer template we run.

TL;DR

🎯 A blog post is now a multimodal cluster, not a single artifact. Text + image + video + structured data + third-party signals — five formats, five citation surfaces, five different engines that prefer different formats

📊 The platform divergence is structural: ChatGPT prefers product pages and encyclopedic content, Perplexity prefers recency and citation-dense methodology, Google AI Mode prefers multimodal signals (especially YouTube) and topic comprehensiveness via query fan-out. Same content, different formats win on different engines

🎬 YouTube is the single most-cited domain in Google AI Overviews — accounting for 18.2% of all citations from outside the top 100. A pillar piece without a companion video leaves the largest single citation surface in Google's AI ecosystem on the table

🖼 Alt text is no longer just accessibility — it's a citation surface for vision-enabled AI engines. Gemini, ChatGPT (with vision), and Claude all extract image content. Alt text written for AI extraction (descriptive, fact-dense, contextual) gets cited at meaningful rates; alt text written purely for accessibility doesn't

📐 Schema is the connective tissue. Article + FAQPage + ItemList + VideoObject + ImageObject schema turns a single page into a multi-surface citation asset. Pages with proper schema markup see 36% higher AI citation rates

⚙️ The four-layer template: text optimized for extraction (Layer 1), image with AI-aware alt text (Layer 2), video with transcript (Layer 3), structured data tying them together (Layer 4) — the architecture that turns one topic into a cluster citable across every major engine

A blog post used to be one thing: a piece of text on a URL.

In 2026, that mental model is structurally broken.

A blog post is now a multimodal cluster — text + image (with alt text written for AI extraction) + video (with transcript) + structured data (with schema) + third-party signals (Reddit, YouTube, G2).

Each format is a separate citation surface.

Each citation surface gets evaluated differently by ChatGPT, Perplexity, Gemini, Claude, and Google AI Mode.

Each engine pulls from the formats it prefers, ignoring the formats it doesn't.

The teams still building text-only blog posts in Q2 2026 are publishing into roughly 40% of the citation surfaces buyers actually use.

The teams building multimodal clusters — same topic, four-or-five formats, each optimized for its specific engine — are publishing into 100% of them. The gap is the difference between a cluster that compounds across all AI engines and a cluster that's invisible on at least three of them.

This piece is the four-layer template we run on every pillar piece at Averi.

It's not a multimodal repurposing playbook (Averi has a separate piece on that, focused on human audiences across distribution channels).

This is the AI-citation-specific framework: how to structure each format so it gets extracted, parsed, and cited by the engine that prefers that format.

Why a single-format piece is now structurally undercovered

Three structural shifts have happened in the last 12 months that broke the "one URL = one piece of content" mental model.

Shift 1: Vision-enabled AI engines now extract image content. Gemini's multimodal architecture analyzes images natively. ChatGPT-4o with vision extracts content from images at production quality. Claude can read images and integrate that content into responses. The image inside your blog post used to be decoration. In 2026, it's a citation surface: the AI engine reads the chart, evaluates the alt text, and pulls the data point into an answer to a buyer's question. If the alt text reads "graph showing data," the citation goes to a competitor whose alt text reads "Q1 2026 organic traffic showing 16,261% YoY growth from May to March on a single-person team."

Shift 2: Video became a primary AI citation surface. YouTube is now the single most-cited domain in Google AI Overviews and accounts for 18.2% of all citations from outside the top 100. The Gemini 3 update specifically expanded multimodal integration. Brands with video content covering their category get cited at rates text-only brands cannot match. The 5-7 minute companion video isn't optional anymore — it's the difference between being cited in Google AI Overviews and being invisible to that surface.

Shift 3: Schema became the connective tissue across formats. Article schema describes the text. ImageObject schema describes the image. VideoObject schema describes the video. FAQPage schema describes the question-answer structure. ItemList schema describes any list (steps, comparison rows, recommendations). Each schema type tells a different AI engine how to read a different format. Pages with proper schema markup see 36% higher AI citation rates, but only when the schema is layered correctly across formats — not just one schema type slapped on the article.

The combined effect: a text-only blog post in 2026 produces roughly 40% of the citation surface a properly-architected multimodal cluster produces. The gap isn't going to narrow. It's going to widen as vision-enabled engines mature and multimodal becomes the default search expectation.

For broader context on the platform divergence specifically, see our Platform Divergence Playbook and B2B SaaS Citation Benchmarks Report.

The four-layer template

Every pillar piece at Averi runs four layers of multimodal optimization.

The combined cluster produces citations across ChatGPT, Perplexity, Gemini, Claude, and Google AI Mode at roughly 4x the rate of a comparable text-only piece.

Layer 1: Text optimized for extraction (the foundation)

The text is still the foundation — but it's structured for AI extraction, not just human reading.

Structural patterns that produce text citations:

Direct-answer headlines. Pages with headlines that directly answer the question get cited by ChatGPT 41% of the time. H2s phrased as questions ("Why is my organic traffic flat?") followed by direct answers in the first 40-60 words.

120-180 word sections between headings. Pages that use 120-180 words between headings receive 70% more ChatGPT citations than pages with sections under 50 words. Too short produces fragmented extractability; too long buries the answer.

Fact density at 1 stat per 100 words minimum. Content with original statistics sees 30-40% higher visibility in AI responses. 44.2% of AI citations come from the first 30% of a page's text, so the front-loaded fact density specifically determines whether the page gets cited at all.

FAQ section with self-contained answers. FAQ sections get cited by AI at roughly 3x the rate of standard content sections. Each answer 40-60 words, contextually self-contained (the answer makes sense without the question), structured for direct extraction.
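
The thresholds above are simple enough to check mechanically before a piece ships. What follows is a minimal Python sketch, not Averi's actual scoring system: the function names, the cutoffs as constants, and the crude definition of a "stat" as any token containing a digit are all assumptions made for illustration.

```python
import re

# Thresholds lifted from the patterns above; treat them as tunable assumptions.
MIN_SECTION_WORDS = 120
MAX_SECTION_WORDS = 180
MIN_STATS_PER_100_WORDS = 1.0

# Hypothetical proxy: a "stat" is any whitespace-delimited token with a digit
# in it ("41%", "3x", "2026"). This over-counts dates and list numbers.
STAT_PATTERN = re.compile(r"\S*\d\S*")


def word_count(text: str) -> int:
    """Count whitespace-delimited words."""
    return len(text.split())


def stats_per_100_words(text: str) -> float:
    """Estimate fact density as digit-bearing tokens per 100 words."""
    words = word_count(text)
    if words == 0:
        return 0.0
    return 100.0 * len(STAT_PATTERN.findall(text)) / words


def check_section(heading: str, body: str) -> list[str]:
    """Return human-readable warnings for one heading + body pair."""
    warnings = []
    words = word_count(body)
    if words < MIN_SECTION_WORDS:
        warnings.append(f"{heading!r}: {words} words, under {MIN_SECTION_WORDS}")
    elif words > MAX_SECTION_WORDS:
        warnings.append(f"{heading!r}: {words} words, over {MAX_SECTION_WORDS}")
    density = stats_per_100_words(body)
    if density < MIN_STATS_PER_100_WORDS:
        warnings.append(f"{heading!r}: {density:.1f} stats per 100 words")
    return warnings
```

Running `check_section` over every H2 and its body gives a quick list of sections to tighten or expand. A human still has to judge whether the numbers it counts are real facts.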

For the deeper take on the question-answer structure specifically, see our FAQ optimization for AI search guide and our building citation-worthy content guide.

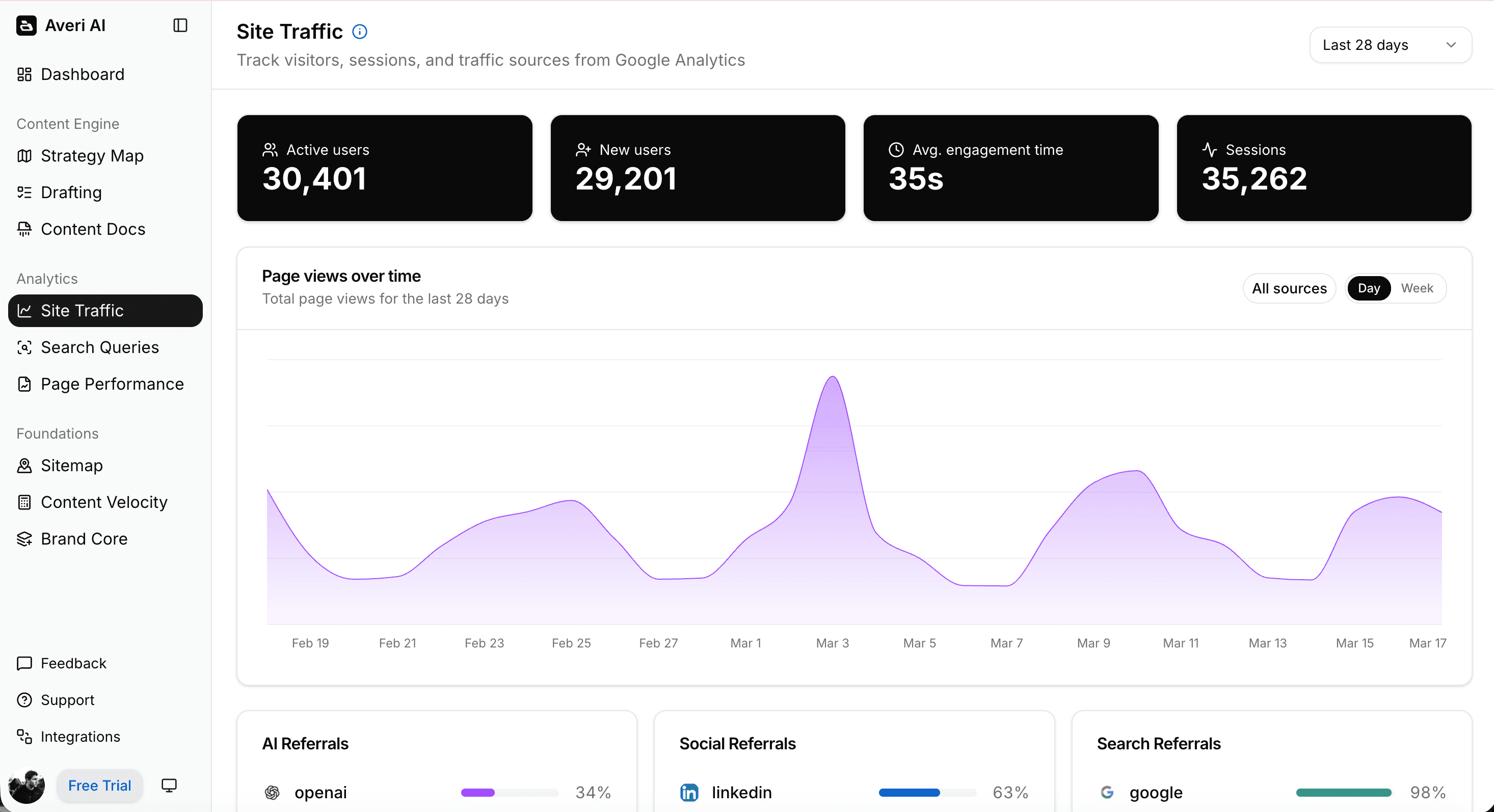

img alt text: averi-analytics-platform-up

Layer 2: Image with AI-aware alt text (vision-enabled engines)

Images used to be optional supplementary content. With vision-enabled AI engines (Gemini, ChatGPT-4o with vision, Claude), images are now a separate citation surface that the AI evaluates independently of the surrounding text.

Patterns that produce image citations:

Original imagery, not stock photos. Stock photos rarely surface in AI image search. Original charts, graphs, screenshots, and proprietary visuals get cited at materially higher rates. The 5W report observes that AI engines preferentially extract original visual content over reused stock imagery.

AI-aware alt text. Alt text written purely for accessibility ("graph showing data") gets ignored by vision-enabled engines because it doesn't add information beyond what the image provides. AI-aware alt text describes the content of the image specifically: "Q1 2026 GSC dashboard showing 2.9 million organic impressions, peak month March 2026, 16,261% YoY growth from May 2025 baseline of 17,824 impressions." The descriptive density is the citation lever.

Embedded EXIF metadata and descriptive filenames. "averi-content-engine-growth-q1-2026.png" is meaningfully more citable than "image-2024-04-01.png." Filenames are crawled and treated as semantic signals.

ImageObject schema markup. Tells the AI engine exactly what the image represents, who created it, when, and what entity it relates to. Increases citation probability by 30-40% on vision-enabled engines.

The alt-text-as-citation-surface concept is the single most underutilized lever in 2026 multimodal optimization.

Most B2B SaaS teams write alt text for accessibility, not for AI extraction, and lose citation opportunities they could be capturing with the same effort.
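
One way to find weak alt text across a library is a simple lint pass. The sketch below is a heuristic only. The function name, the eight-word minimum, and the three checks (length, presence of a figure or date, filename-style slug) are assumptions made for illustration, and no AI engine is known to apply these rules.

```python
import re

# Matches hyphenated slugs such as "averi-analytics-platform-up".
SLUG_PATTERN = re.compile(r"[a-z0-9]+(-[a-z0-9]+)+")


def audit_alt_text(alt: str, min_words: int = 8) -> list[str]:
    """Return a list of issues; an empty list means no heuristic fired."""
    normalized = alt.strip().lower()
    if not normalized:
        return ["missing alt text"]
    issues = []
    if len(normalized.split()) < min_words:
        issues.append("too short to describe the image content")
    if not re.search(r"\d", normalized):
        issues.append("no figures or dates to extract")
    if SLUG_PATTERN.fullmatch(normalized):
        issues.append("looks like a filename slug, not a description")
    return issues


# The two alt-text styles contrasted above.
generic = audit_alt_text("graph showing data")
dense = audit_alt_text(
    "Q1 2026 GSC dashboard showing 2.9 million organic impressions, "
    "peak month March 2026"
)
```

Here `generic` comes back with two issues and `dense` with none. The check is deliberately coarse: it flags candidates for a rewrite, it does not score quality.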

Layer 3: Video with transcript (Google AI Overviews + YouTube ecosystem)

Video is the highest-impact layer for Google AI Overviews specifically, and increasingly for ChatGPT through training-data exposure to YouTube transcripts.

Patterns that produce video citations:

Companion video to every pillar piece. A 5-7 minute video covering the same content as the text piece. Posted on YouTube. Linked from the pillar piece (and vice versa). The dual-format approach captures both Google AI Overview citations (which favor YouTube) and ChatGPT citations (which benefit from exposure to YouTube transcripts during training).

Structured transcripts. YouTube auto-generates transcripts. Structured transcripts (with timestamps, named sections, fact-dense passages) get extracted at materially higher rates than auto-generated ones. The 30-60 minutes spent restructuring a transcript is one of the highest-ROI activities in multimodal optimization.

VideoObject schema on the embed. Schema markup on the video embed within the text piece tells AI engines the video exists, what it's about, when it was published, and how it relates to the surrounding text content. Increases citation probability across both YouTube and the embedded page.

Direct-answer chapter markers. YouTube chapters with descriptive names ("Why your organic traffic looks flat at month 3") perform like FAQ headings — they create direct extraction surfaces that AI engines parse for answer matches.

For most B2B SaaS teams, the video layer is the biggest gap in current optimization.

Producing one companion video per pillar piece (5-7 minutes, same content as the text, with structured transcript and chapter markers) is roughly a 90-minute production task that produces measurable citation lift across the largest single AI surface in the Google ecosystem.
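
Chapter markers are just timestamped lines in the video description, so they can be generated from the outline of the pillar piece. The Python sketch below assumes the rules as we understand them from YouTube's help documentation (first chapter at 0:00, at least three chapters, each at least 10 seconds long). Verify them against the current docs. The function names and the example titles after the first are hypothetical.

```python
def format_timestamp(seconds: int) -> str:
    """Format seconds as M:SS, or H:MM:SS for videos over an hour."""
    minutes, secs = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def build_chapters(chapters: list[tuple[int, str]]) -> str:
    """Turn (start_seconds, title) pairs into description-ready chapter lines."""
    ordered = sorted(chapters)
    # Assumed YouTube constraints; adjust if the platform rules differ.
    if len(ordered) < 3:
        raise ValueError("need at least three chapters")
    if ordered[0][0] != 0:
        raise ValueError("first chapter must start at 0:00")
    for (start, _), (next_start, _) in zip(ordered, ordered[1:]):
        if next_start - start < 10:
            raise ValueError("each chapter must run at least 10 seconds")
    return "\n".join(f"{format_timestamp(s)} {title}" for s, title in ordered)


description_block = build_chapters([
    (0, "Why your organic traffic looks flat at month 3"),
    (95, "How long does the data lag last?"),       # hypothetical title
    (240, "What should you measure in the meantime?"),  # hypothetical title
])
```

Phrasing each title as the question a buyer would ask keeps the chapters aligned with the direct-answer H2s in the text.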

Layer 4: Structured data (the connective tissue)

Schema markup is what tells AI engines how the formats relate to each other and to the topic.

Layered schema architecture for a pillar piece:

Article schema describes the text content (author, date published, modified date, word count, headline)

FAQPage schema describes the question-answer section (each Q&A as a structured pair the AI can extract directly)

ItemList schema describes any list content (steps, comparison rows, ranked items)

VideoObject schema describes the embedded or linked video (embed URL, content URL, duration, upload date)

ImageObject schema describes the image content (URL, caption, a semantic description of the image)

Organization schema ties the publisher entity to the content

Person schema ties the author entity (Zach Chmael for our pieces) to the content

The combined schema stack tells the AI engine: this is a multimodal cluster covering [topic], authored by [verified entity], published on [verified date], with these specific text/image/video components, each describing this specific facet of the topic. The structured-data layer is what connects the three other layers into a coherent unit the AI can parse and cite as a whole.

Pages with proper schema markup see 36% higher AI citation rates, and the lift is even higher when the schema is layered across multiple formats rather than applied as a single Article schema slapped on the page.
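
To make "layered" concrete, here is a minimal Python sketch that emits one JSON-LD `@graph` in which the Article node points at the image, video, author, and publisher nodes by `@id`. It uses schema.org type and property names, but the function, its parameters, the `#fragment` ID scheme, and every example.com URL are assumptions made for illustration. ItemList is left out for brevity. This is not the markup Averi publishes.

```python
import json


def build_cluster_jsonld(page_url, headline, author, publisher, published,
                         faqs, image, video):
    """Return a JSON-LD string linking text, image, and video on one page."""
    # Hypothetical ID scheme: page URL plus a fragment per node.
    ids = {name: f"{page_url}#{name}"
           for name in ("article", "faq", "image", "video", "author", "publisher")}
    graph = [
        {"@type": "Organization", "@id": ids["publisher"], "name": publisher},
        {"@type": "Person", "@id": ids["author"], "name": author},
        {
            "@type": "Article",
            "@id": ids["article"],
            "headline": headline,
            "datePublished": published,
            "author": {"@id": ids["author"]},
            "publisher": {"@id": ids["publisher"]},
            "image": {"@id": ids["image"]},
            "video": {"@id": ids["video"]},
        },
        {
            "@type": "FAQPage",
            "@id": ids["faq"],
            "mainEntity": [
                {"@type": "Question", "name": question,
                 "acceptedAnswer": {"@type": "Answer", "text": answer}}
                for question, answer in faqs
            ],
        },
        {"@type": "ImageObject", "@id": ids["image"],
         "contentUrl": image["url"], "caption": image["caption"]},
        {"@type": "VideoObject", "@id": ids["video"],
         "name": video["name"], "description": video["description"],
         "thumbnailUrl": video["thumbnail_url"], "embedUrl": video["embed_url"],
         "uploadDate": video["upload_date"], "duration": video["duration"]},
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


# Placeholder values only; none of these URLs or dates come from a real page.
example = build_cluster_jsonld(
    page_url="https://example.com/blog/traffic-lag",
    headline="Why your organic traffic isn't flat, it's lagging",
    author="Zach Chmael",
    publisher="Averi",
    published="2026-04-01",
    faqs=[("Why is my organic traffic flat?", "Placeholder answer text.")],
    image={"url": "https://example.com/img/gsc-growth.png",
           "caption": "Search Console growth chart, May 2025 to March 2026"},
    video={"name": "Traffic lag diagnostic", "description": "Companion video.",
           "thumbnail_url": "https://example.com/img/thumb.png",
           "embed_url": "https://www.youtube.com/embed/VIDEO_ID",
           "upload_date": "2026-04-01", "duration": "PT6M"},
)
```

The resulting string goes inside a `<script type="application/ld+json">` tag on the page. Cross-referencing nodes by `@id` rather than pasting separate, unrelated schema blocks is what expresses the relationship between formats.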

For the deeper implementation guide specifically, see our Schema Markup for AI Citations: Technical Implementation Guide.

How the same article shows up in three different AI engines

Worth showing this concretely. Let me walk through how a single pillar piece — properly architected as a multimodal cluster — shows up differently across ChatGPT, Perplexity, and Google AI Overviews when a buyer asks a question about the topic.

Example pillar piece: "Why your organic traffic isn't flat — it's lagging" (a real piece in Averi's library covering the data lag in content marketing).

The cluster components:

Layer 1 (text): 3,800-word pillar piece with 7-question FAQ, 15+ stats with citation links, direct-answer H2s

Layer 2 (image): Original chart showing Averi's first 12 months of GSC data with the inflection points marked, AI-aware alt text describing the data ("Search Console growth chart showing 17,824 impressions in May 2025 reaching 2.9M in March 2026, with marked inflections in September 2025 and January 2026")

Layer 3 (video): 6-minute YouTube companion video walking through the same diagnostic, with structured transcript and chapter markers

Layer 4 (schema): Article + FAQPage + ImageObject + VideoObject + Organization + Person schema layered on the page

How ChatGPT cites it (when buyer asks "why is my SaaS organic traffic flat after 4 months"):

ChatGPT pulls the direct-answer text from the FAQ ("The first 90 days are usually invisible because rankings haven't consolidated...")

It cites the URL of the pillar piece

It does not surface the video or image (ChatGPT prioritizes encyclopedic text over multimodal content)

The fact density and front-loaded answers are what make the text version citation-worthy

How Perplexity cites it (same query):

Perplexity pulls the methodology section (the 6-question diagnostic)

It surfaces the visible "2026" date signals in the text and meta

It cites the pillar URL and quotes a specific stat from the piece (the Ahrefs 1.74% rate)

It does not surface the video unless the query specifically mentions video; it does surface the image if the query is data-related

The recency signals and citation-dense methodology are what make the piece Perplexity-worthy

How Google AI Overviews cites it (same query):

Google AI Overviews surfaces a snippet from the text, the YouTube video as a multimodal citation, and the original chart image

The AI Overview shows three components: a paraphrased answer (from the text), an embedded video thumbnail (from the YouTube companion piece), and the chart (from the image with AI-aware alt text)

The query fan-out mechanic pulls from sub-queries: "data lag SEO," "GSC data delay," "content marketing 90 days" — all of which the multimodal cluster covers

The piece is cited across multiple sub-queries because the cluster covers the topic comprehensively across formats

The same piece, the same topic, three different citation patterns.

Without the multimodal architecture, the piece would only have surfaced on ChatGPT (text-only).

With the multimodal architecture, it surfaces on all three engines, capturing roughly 3x the citation volume per topic.

For the deeper take on how to actually measure this, see our How to Track AI Citations and Measure GEO Success guide and our 9 Best AI Search Visibility Trackers comparison.

Common mistakes when building multimodal clusters

Five patterns I see most often when teams attempt multimodal architecture:

Mistake 1: Producing each format separately, with no cross-linking. The most common failure mode. Team writes the blog post. Different team produces the YouTube video weeks later. The two never link to each other. Schema isn't layered. The image is a stock photo with no alt text. The team thinks they have a multimodal cluster; they actually have four disconnected assets. AI engines treat them as four separate pieces, none of which is deep enough on its own to earn primary citation status.

Mistake 2: Treating alt text as accessibility-only. Vision-enabled AI engines extract image content based on alt text. Alt text written for accessibility ("graph showing data") provides no additional information for the AI engine to extract. AI-aware alt text — descriptive, fact-dense, semantically specific — is the citation lever. Most B2B SaaS teams are leaving this lever entirely on the table.

Mistake 3: Skipping the video layer because "we're not a video company." YouTube is the single most-cited domain in Google AI Overviews, accounting for 18.2% of all citations from outside the top 100. Skipping video means skipping the largest single citation surface in Google's AI ecosystem. The "we're not a video company" framing was reasonable when video meant high-production polished content. With AI engines extracting from transcripts, a 5-7 minute screen-record explainer with a structured transcript captures the citation surface at minimal production cost.

Mistake 4: One schema type instead of layered schema. Most teams add Article schema and stop. The full citation lift comes from layered schema (Article + FAQPage + ItemList + VideoObject + ImageObject + Organization + Person), each describing a different format component. The layered approach signals to AI engines that the page is a multi-surface asset. The single-schema approach signals nothing more than "this is an article."

Mistake 5: Optimizing each format for its preferred engine without coordinating across the cluster. Teams that recognize platform divergence sometimes overcorrect — producing text optimized for ChatGPT, video optimized for YouTube, images optimized for Pinterest, with no coherent topical thread connecting them. The cluster fails because the formats don't reinforce each other. The fix is treating multimodal as a single topic with four format-specific implementations, not four separate pieces of content with topic similarity.

For deeper context on platform-specific optimization differences, see our Platform Divergence Playbook and Citation Benchmarks Report.

What to do this week

If you want to shift from text-only blog posts to multimodal clusters, the order:

Audit your top 10 pillar pieces with the four-layer test. For each piece, check: Layer 1 (text optimized for extraction with FAQ + fact density + direct-answer H2s), Layer 2 (original image with AI-aware alt text), Layer 3 (companion video with structured transcript), Layer 4 (layered schema across all formats). Most teams fail at least 2 of the 4 layers on most pieces. That's normal. That's where the work is.

Start with Layer 4 (schema) on existing pieces. It's the lowest-effort, highest-impact work. Adding FAQPage + ImageObject + VideoObject schema to existing pieces takes 30-60 minutes per piece and produces measurable citation lift within 30-60 days. Do this before producing any new format content.

Rewrite alt text on top 10 pieces. Replace accessibility-only alt text with AI-aware alt text. Describe the content of the image specifically, with fact density, in the way a citation-worthy text passage would describe it. 1-2 hours of work per piece. Compounds across vision-enabled engines.

Produce one companion video per quarter for the top pillar piece. Don't try to retrofit video for the entire library at once. Pick the highest-traffic pillar piece. Record a 5-7 minute screen-record explainer covering the same content. Upload to YouTube with a structured transcript and chapter markers. Embed back in the pillar piece. Wait 60 days. Measure citation lift on Google AI Overviews specifically. Use that result to justify scaling video production across the rest of the library.

Build platform-specific tracking before adding more formats. Single-platform AI citation tracking misses 89% of the citation pool. Track citations across ChatGPT, Perplexity, and Google AI Overviews at minimum. Without multi-platform tracking, you can't measure whether the multimodal architecture is producing citation lift across engines.

Document the four-layer template as a non-negotiable editorial standard. Every new pillar piece runs all four layers. Below the threshold, the piece doesn't ship. Building this discipline upfront prevents the "we'll add the video later" pattern that produces fragmented multimodal coverage.

Re-audit quarterly. Multimodal optimization patterns shift fast. Vision-enabled engines mature monthly. Schema standards update. The four-layer template is the architecture; the specific implementations within each layer evolve continuously. Quarterly re-audits catch decay and surface new opportunities.
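
The four-layer test from the first step can be kept as a small checklist in code so the audit is repeatable each quarter. The Python sketch below is one possible encoding. The `PillarPiece` fields, the pass criteria per layer, and the expected schema set (taken from the example cluster earlier in this piece) are assumptions made for illustration, and a person still fills in each field by hand.

```python
from dataclasses import dataclass

# Schema types from the example cluster; adjust to your own standard.
EXPECTED_SCHEMA = frozenset(
    {"Article", "FAQPage", "ImageObject", "VideoObject", "Organization", "Person"}
)


@dataclass
class PillarPiece:
    """Hand-entered audit record for one pillar piece (hypothetical fields)."""
    title: str
    has_faq: bool = False
    has_direct_answer_h2s: bool = False
    has_original_image: bool = False
    has_ai_aware_alt_text: bool = False
    has_companion_video: bool = False
    has_structured_transcript: bool = False
    schema_types: frozenset = frozenset()


def failed_layers(piece: PillarPiece) -> list[int]:
    """Return the layer numbers (1-4) that the piece does not yet satisfy."""
    checks = {
        1: piece.has_faq and piece.has_direct_answer_h2s,
        2: piece.has_original_image and piece.has_ai_aware_alt_text,
        3: piece.has_companion_video and piece.has_structured_transcript,
        4: EXPECTED_SCHEMA <= piece.schema_types,  # all expected types present
    }
    return [layer for layer, passed in checks.items() if not passed]


# A typical text-first piece: strong copy, Article schema only.
text_only = PillarPiece(
    title="Example pillar",
    has_faq=True,
    has_direct_answer_h2s=True,
    schema_types=frozenset({"Article"}),
)
missing = failed_layers(text_only)
```

For `text_only`, `missing` lists layers 2, 3, and 4, which matches the order of work suggested above: schema first, then alt text, then video.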

That's the framework.

The teams that build multimodal clusters in Q2 2026 capture roughly 3x the citation surface of teams running text-only blog posts. The gap is structural, defensible, and widening as multimodal becomes the default expectation across every major AI engine.

If you want this baked into your stack — Brand Core that captures the multimodal worldview at setup, Strategy Map that organizes content as topic clusters across formats, Content Scoring that flags missing layers before publish, native publishing with schema-by-default, and unified analytics across rankings + citations + AI surfaces — start a free 14-day Averi trial.

30 minutes to set up. The first pillar you produce inside Averi will be architecturally calibrated for the multimodal reality this article describes.

Related Resources

The Multimodal Foundation

Schema Markup for AI Citations: The Technical Implementation Guide

Building Citation-Worthy Content: Making Your Brand a Data Source for LLMs

Multimodal Content Repurposing: Scaling Your Reach Across Formats

Platform-Specific Optimization

The Platform Divergence Playbook: Three Plays for ChatGPT, Perplexity, Google AI Mode

ChatGPT vs Perplexity vs Google AI Mode: B2B SaaS Citation Benchmarks Report (2026)

Platform-Specific GEO: How to Optimize for ChatGPT vs Perplexity vs Google AI Mode

The Reddit-AI Search Connection: How User-Generated Mentions Become LLM Citations

FAQs

What is multimodal content optimization for AI?

Multimodal content optimization is the practice of structuring a single topic across text, image, video, and structured data — each format optimized for AI extraction and citation by the engines that prefer that format. ChatGPT preferentially cites text and product pages. Perplexity prioritizes citation-dense methodology and recency. Google AI Overviews favor multimodal content (especially YouTube) and topic comprehensiveness via query fan-out. A multimodal cluster captures all three citation surfaces; a text-only piece captures roughly one of the three.

Why does YouTube matter so much for AI citations?

Because YouTube is the single most-cited domain in Google AI Overviews — accounting for 18.2% of all citations from outside the top 100. The Gemini 3 update specifically expanded multimodal integration in early 2026, making video a primary citation surface. ChatGPT also gets meaningful citation signal from YouTube through training-data exposure to transcripts. A pillar piece without a companion video leaves the largest single citation surface in Google's AI ecosystem on the table.

How should I write alt text for AI extraction in 2026?

Replace accessibility-only alt text ("graph showing data") with AI-aware alt text that describes the content semantically and with fact density. Example: "Q1 2026 Search Console dashboard showing 2.9 million monthly impressions on a brand-new domain, with growth inflections marked at September 2025 and January 2026." Vision-enabled AI engines (Gemini, ChatGPT-4o with vision, Claude) extract image content based on alt text — descriptive, fact-dense alt text is a citation surface, generic accessibility alt text isn't.

What schema types should I use for multimodal content clusters?

Layer the following schema types on every pillar piece: Article (describes the text), FAQPage (describes the question-answer section), ItemList (describes any list content), VideoObject (describes embedded or linked video), ImageObject (describes images), Organization (ties the publisher entity), and Person (ties the author entity). The layered approach signals to AI engines that the page is a multi-surface asset. Pages with proper schema markup see 36% higher AI citation rates, with the lift compounding when the schema is layered across formats rather than applied as a single Article type.

Do I need to produce video for every pillar piece?

For maximum AI citation coverage, yes — but the implementation can be pragmatic. A 5-7 minute screen-record explainer covering the same content as the text piece, uploaded to YouTube with a structured transcript and chapter markers, captures most of the citation lift. Production budget isn't the constraint; the constraint is just deciding to do it. Most B2B SaaS teams skip video because of perceived production complexity, leaving the largest single citation surface in Google's AI ecosystem unaddressed.

Are images and videos really cited by AI engines?

Yes, increasingly. Vision-enabled AI engines (Gemini, ChatGPT-4o with vision, Claude) extract image content natively. Google AI Overviews cite YouTube videos directly with thumbnail and link. Perplexity surfaces images for data-related queries. The "AI engines only cite text" assumption was true in 2024 and false by 2026 across every major engine. Treating images and videos as citation surfaces (with proper alt text, schema, and structural optimization) is now standard practice for teams optimizing for AI visibility.

How does Averi handle multimodal content optimization?

Averi's content engine treats every pillar piece as a multi-format cluster by default. The Content Scoring System evaluates pieces on a composite SEO + GEO scale that flags missing layers (no FAQ section, no video companion, weak alt text, missing schema types) before publish. The Strategy Map organizes content by topic across formats, ensuring the multimodal coverage compounds rather than fragments. Native CMS publishing handles layered schema by default. The unified analytics dashboard tracks citations across ChatGPT, Perplexity, Gemini, and Google AI Overviews — surfacing which formats are producing citations on which engines, which gaps need filling next, and where the multimodal architecture is paying off.