Listicle SEO in the AI Era: Why 21.9% of AI Citations Come From This Format (and How to Build One That Actually Gets Picked)

Zach Chmael

Head of Marketing

Listicles are 21.9% of AI Overview citations. Here's what makes one citation-worthy — and three side-by-sides showing what gets pulled vs. ignored.

TL;DR

📊 Listicles get 21.9% of all AI Overview citations. Articles get 16.7%. Product pages 13.7%. The format that gets cited by AI is now an empirical question, not a style preference.

🎯 The four-part formula: one specific entity per item, one hyperlinked stat per item, schema-marked list, and a TL;DR that AI engines extract verbatim. Miss any one and the citation surface collapses.

🚫 Most listicles rank but don't get cited. They float around position 3–7 on Google for years, accumulate traffic, and produce zero AI engine pulls because the items are generic, the stats are missing, and the schema isn't there.

📋 Three side-by-sides in this piece show the difference between a listicle that gets cited and a listicle that just ranks. Same topic, same length, dramatically different outcomes.

⚙️ The audit: pull your top five listicles by Search Console impressions, score them against the four-part formula, and reformat the failures. Most teams have at least one ranking listicle that's leaking citation surface every day it sits in its current form.

Zach Chmael

CMO, Averi

The 21.9% Number

A 2026 analysis of Google AI Overview (AIO) citations by content format found listicles cited in 21.9% of AIO responses, articles in 16.7%, product pages in 13.7%, and the other formats each below 10%.

Listicles are the single most-cited format in AI search.

That number breaks every assumption about listicles being "low-effort SEO bait." The listicle is now, empirically, the most-cited format in AI search, and it leads the next-best format (articles) by 5.2 percentage points.

For B2B SaaS startups optimizing for AI search visibility, this changes the format math significantly.

The honest question isn't whether to publish listicles. It's how to publish listicles that actually get cited rather than ones that just rank.

Most listicles fail at the citation layer. They float around position 3–7 on Google for years, accumulate traffic from human searchers, and produce almost zero AI engine pulls. The format is right; the structural execution inside the format is wrong.

This piece is about the structural execution.

Why Listicles Win at AI Citation

Listicles win because their structure matches how LLMs extract content. AI engines process pages by identifying discrete extractable units — passages they can pull as standalone answers to user prompts. A well-structured listicle is, by design, ten or twelve discrete extractable units stacked in sequence.

Three structural reasons listicles get cited:

Each item is a self-contained extraction unit. A listicle on "best CRMs for B2B SaaS" gives the AI engine ten separate citable passages, one per CRM. An article on "how to choose a CRM" gives it one citable passage covering the whole topic. The listicle produces 10x the extraction surface from the same word count.

The format signals "list" to schema parsers. When properly marked up with ItemList schema, each item has a defined position, name, and description. LLMs read this as structured data and weight it more heavily than unstructured paragraphs.

Listicle items match buyer-question prompts cleanly. When a buyer asks ChatGPT "what are the best X for Y," the model extracts list items because the question maps directly to the format. Article-format content requires more interpretation to fit the answer; listicles slot in directly.

The format isn't winning by accident.

It's winning because the structural properties of listicles match the structural properties of how AI engines extract.

The teams that understand this are publishing more listicles; the teams that don't are still optimizing articles for a citation pattern that disproportionately rewards lists.

What Makes a Listicle Citation-Worthy in 2026

Four properties separate cited listicles from uncited ones. All four are required. Missing any one collapses the citation surface even if the other three are perfect.

Property 1: One specific entity per item. Each item names a real, specific thing — a product, a tactic, a person, a study, a data point. Not "use schema markup" but "implement FAQPage + ItemList layered schema." Not "great content tools" but "Surfer SEO at $99–269/month for on-page optimization." Specificity is what makes the item extractable as a standalone citation.

Property 2: One hyperlinked stat per item. Each item carries a verifiable number or claim with a source link. The stat anchors the item in factual reality and gives AI engines a citation source they can attribute. Items without stats read as opinion; items with hyperlinked stats read as research.

Property 3: Schema-marked list structure. The list ships with ItemList schema applied at minimum, plus FAQPage schema if the listicle includes a Q&A layer. Pages with proper layered schema see 36% higher AI citation rates than pages with single or no schema. The lift is structural, not cosmetic.

Property 4: A TL;DR that AI engines extract verbatim. A summary at the top of the piece, 100–200 words, that captures the listicle's complete argument in extractable form. This is what gets pulled when a buyer asks "what does Averi say about X?" — and it's what most listicles skip entirely, costing them the highest-yield citation real estate on the page.

Hit all four properties and the listicle becomes a citation machine. Hit three of four and the piece ranks but doesn't compound.

Side-by-Side #1: Generic Top 10 vs. Citation-Ready

The most common listicle failure is generic item descriptions. Here's the same item (a content optimization tool) written two ways:

❌ The Generic Version (ranks but doesn't get cited)

Surfer SEO — A great content optimization tool that helps you write better content. Many marketing teams find it useful for SEO work and content briefs. It's a popular choice in the space.

✅ The Citation-Ready Version

Surfer SEO ($99–$269/month) — On-page SEO optimization and content grading tool. Best for in-house SEO specialists at mid-market companies. Pricing scales from $99/month Essential to $269/month Scale AI tier. Limited end-to-end workflow coverage compared to integrated content engines.

The structural differences:

Specific entity: full product name plus pricing in parentheticals (the buyer can act on this immediately)

Concrete function: "on-page SEO optimization and content grading" (specific, verifiable)

Named buyer profile: "in-house SEO specialists at mid-market companies" (qualifies the recommendation)

Hyperlinked stat: the pricing range with a verifiable source link

Honest limitation: the comparison gap ("limited end-to-end workflow coverage")

The citation-ready version is roughly the same word count as the generic. The structural payoff is dramatically different.

An AI engine extracting from a "best content tools for startups" prompt pulls the citation-ready item cleanly. The generic item gets summarized into oblivion alongside every other tool that gets the same vague treatment.

Side-by-Side #2: Vague Items vs. Specific Entity Items

The second common failure is items that describe a category instead of an entity. Same topic ("best AI search optimization tactics"), two ways:

❌ The Vague Item

Use schema markup — Schema markup helps search engines understand your content. It's important for SEO and AI search. Make sure to add it to your pages.

✅ The Specific Entity Item

Implement FAQPage + ItemList layered schema on listicles and FAQ pages — Pages with both schema types in combination see 36% higher AI citation rates than pages with single-schema implementations. The combination signals structured Q&A plus enumerated list, which matches the two highest-extracted content patterns. Validate with Google's Rich Results Test before publish.

The structural lift:

The entity is named precisely ("FAQPage + ItemList layered schema"), not categorically ("schema markup")

The outcome is quantified (36% citation lift) with a hyperlinked source

The mechanism is explained (why this specific combination works)

The validation step is concrete (Rich Results Test, not generic "test it")

When an AI engine reads "use schema markup" it has nothing specific to cite.

When it reads "implement FAQPage + ItemList layered schema [with a 36% citation lift source]," it has an exact recommendation with a numbered outcome and a verifiable source.

The first item disappears into citation noise. The second gets pulled.

The same pattern applies across every listicle item type — tools, tactics, frameworks, people, studies. Name the specific entity. Cite the specific outcome. Skip the generalities.

Side-by-Side #3: No Schema vs. ItemList Schema

The third common failure is shipping a listicle without ItemList schema. Same content, two technical implementations:

❌ Without ItemList Schema

The listicle renders to an AI engine as a sequence of paragraphs with heading tags. Each item is an <h3> followed by paragraph text. The model has to infer that this is a list, infer the enumeration order, and decide whether the items are related enough to extract as a set.

✅ With ItemList Schema (JSON-LD)

With ItemList schema applied, the AI engine reads the page as structured data with defined positions, names, descriptions, and URLs per item.

The extraction confidence climbs significantly because the model isn't guessing at structure — the structure is declared.
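For a sense of what "declared" structure looks like, here is a minimal Python sketch that builds schema.org ItemList JSON-LD from a list of items. The helper name `build_item_list`, the example.com URLs, and the second item are hypothetical placeholders, not a required implementation; the property names (`itemListElement`, `ListItem`, `position`, `name`, `description`, `url`) come from schema.org.

```python
import json


def build_item_list(list_name, items):
    """Return a schema.org ItemList as a JSON-LD dict.

    `items` is a list of (name, description, url) tuples in on-page order.
    """
    return {
        "@context": "https://schema.org",
        "@type": "ItemList",
        "name": list_name,
        "numberOfItems": len(items),
        "itemListElement": [
            {
                "@type": "ListItem",
                "position": position,  # 1-based, mirrors the visible order
                "name": item_name,
                "description": description,
                "url": url,
            }
            for position, (item_name, description, url) in enumerate(items, start=1)
        ],
    }


# Hypothetical example data; the URLs are placeholders.
items = [
    (
        "Surfer SEO",
        "On-page SEO optimization and content grading tool.",
        "https://example.com/best-content-tools#surfer-seo",
    ),
    (
        "Example Tool B",
        "Hypothetical second item used only for illustration.",
        "https://example.com/best-content-tools#example-tool-b",
    ),
]

json_ld = build_item_list("Best content tools for startups", items)

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(json_ld, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Paste the printed tag into the page and run the URL through Google's Rich Results Test before publishing, as described above.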

The implementation cost: 30–60 minutes per listicle to add the schema, validate with Google's Rich Results Test, and confirm it parses cleanly. The citation surface lift compounds permanently. This is the highest-leverage hour of content engineering work most B2B SaaS teams aren't doing on their existing listicles.

The 4-Part Citation-Ready Listicle Formula

Translating the four properties into an operational checklist:

Each item names a specific entity — a real product, person, tactic, study, or data point. No category-level placeholders. No "great tools." Always the specific thing, often with quantifying detail in a parenthetical (price, version, year, percentage).

Each item carries a hyperlinked stat — a number or factual claim with a verifiable source link. Stats anchor the item in reality and give AI engines attributable citation surface. 44.2% of AI citations come from the first 30% of a page, so front-load the stat-heavy items.

The list ships with ItemList schema — every item has a position, name, description, and URL where applicable. FAQPage schema layers on top if the piece includes a Q&A section. Schema validation at publish-time is non-negotiable.

The TL;DR at the top captures the full argument in 100–200 words — extractable as a standalone citation answering "what does this piece say about X." This is the highest-yield citation real estate in the entire piece. Most listicles either skip it or write a vague intro instead. The teams that ship the TL;DR get pulled by AI engines for the entire summary, not just individual items.

Apply all four and the listicle compounds across both Google rank and AI citation. Apply three of four and you have a piece that ranks but doesn't generate citation flywheel returns.

The TL;DR Pattern That Gets Extracted Verbatim

The TL;DR section deserves its own focus because it's the highest-yield citation block on the entire page.

When a buyer asks an AI engine "what's the gist of X," the TL;DR is what gets pulled.

The structural pattern that works:

3–5 emoji-stat bullets capturing the piece's complete argument

Each bullet carries one hyperlinked stat or specific claim

The full TL;DR runs 100–200 words — long enough to satisfy the model's information threshold, short enough that the entire block gets extracted rather than excerpted

Each bullet is self-contained — readable without context from the surrounding piece

The bad TL;DR pattern: a generic intro paragraph ("Listicles are an important content format that helps with SEO and AI search…"). This gets paraphrased into noise. The good TL;DR pattern: emoji-bullets with specific stats and named entities, like the one at the top of this article.
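The structural pattern above is easy to check mechanically. The sketch below is a rough linter, assuming the TL;DR is available as a list of bullet strings. The function name `check_tldr` is hypothetical, and the digit check is only a proxy: a number in a bullet suggests a stat but does not prove it is sourced or hyperlinked.

```python
import re


def check_tldr(bullets, min_words=100, max_words=200):
    """Rough structural check of a TL;DR against the 3-5 bullet, 100-200 word pattern."""
    word_count = sum(len(bullet.split()) for bullet in bullets)
    return {
        "bullet_count_ok": 3 <= len(bullets) <= 5,
        "word_count": word_count,
        "word_count_ok": min_words <= word_count <= max_words,
        # 1-based positions of bullets that contain no digit at all.
        "bullets_without_number": [
            position
            for position, bullet in enumerate(bullets, start=1)
            if not re.search(r"\d", bullet)
        ],
    }


# Deliberately short sample so the word-count check fails.
sample = [
    "Listicles get 21.9% of AI Overview citations; articles get 16.7%.",
    "Most listicles rank but never get cited.",
    "Pages with layered schema see 36% higher AI citation rates.",
]

report = check_tldr(sample)
print(report)
```

Here the sample passes the bullet count, fails the length check, and flags the second bullet for carrying no number.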

A quick test: copy your TL;DR into ChatGPT and ask "what's the main argument here?" If the response paraphrases your wording into something generic, the TL;DR isn't specific enough. If the response repeats your bullets near-verbatim, with the stats and named entities intact, you've nailed the pattern.

Answer capsules in the 40–60 word range work the same way at the section level. The TL;DR is the answer capsule for the entire piece.

What Listicles Get Wrong (And Still Rank #3 on Google)

Listicles can rank without getting cited.

This is the trap most B2B SaaS teams fall into — they publish a "Top 10 X" piece, it climbs to position 3–7 on Google, traffic accumulates, and the team treats the piece as a win.

The piece is leaking citation surface every day it sits in its current form.

The common failure modes:

Items are categories, not entities. "Use a content tool" instead of "Averi at $99/month for end-to-end content engine workflow."

Stats are missing or unsourced. "Many teams use this" instead of "36% citation lift."

No schema markup. The piece renders as paragraphs instead of structured data.

No TL;DR. Or a TL;DR written as a generic intro paragraph instead of emoji-stat bullets.

Vague conclusions. "There are many great tools" instead of "For seed-stage B2B SaaS, the four-tool shortlist is: A, B, C, D."

Each of these failures lets the piece rank because Google's ranking signals are looser than AI extraction signals. But none of them produce citation surface. The piece accumulates impressions; it doesn't accumulate authority in AI search.

The fix isn't writing new pieces. It's auditing existing ones.

Most B2B SaaS blogs have 3–10 listicles already ranking that would convert to citation engines with a 2–3 hour rewrite per piece. The audit pays off faster than starting from zero on new content.

How to Audit Your Existing Listicles for Citation Worthiness

A 30-minute audit per listicle:

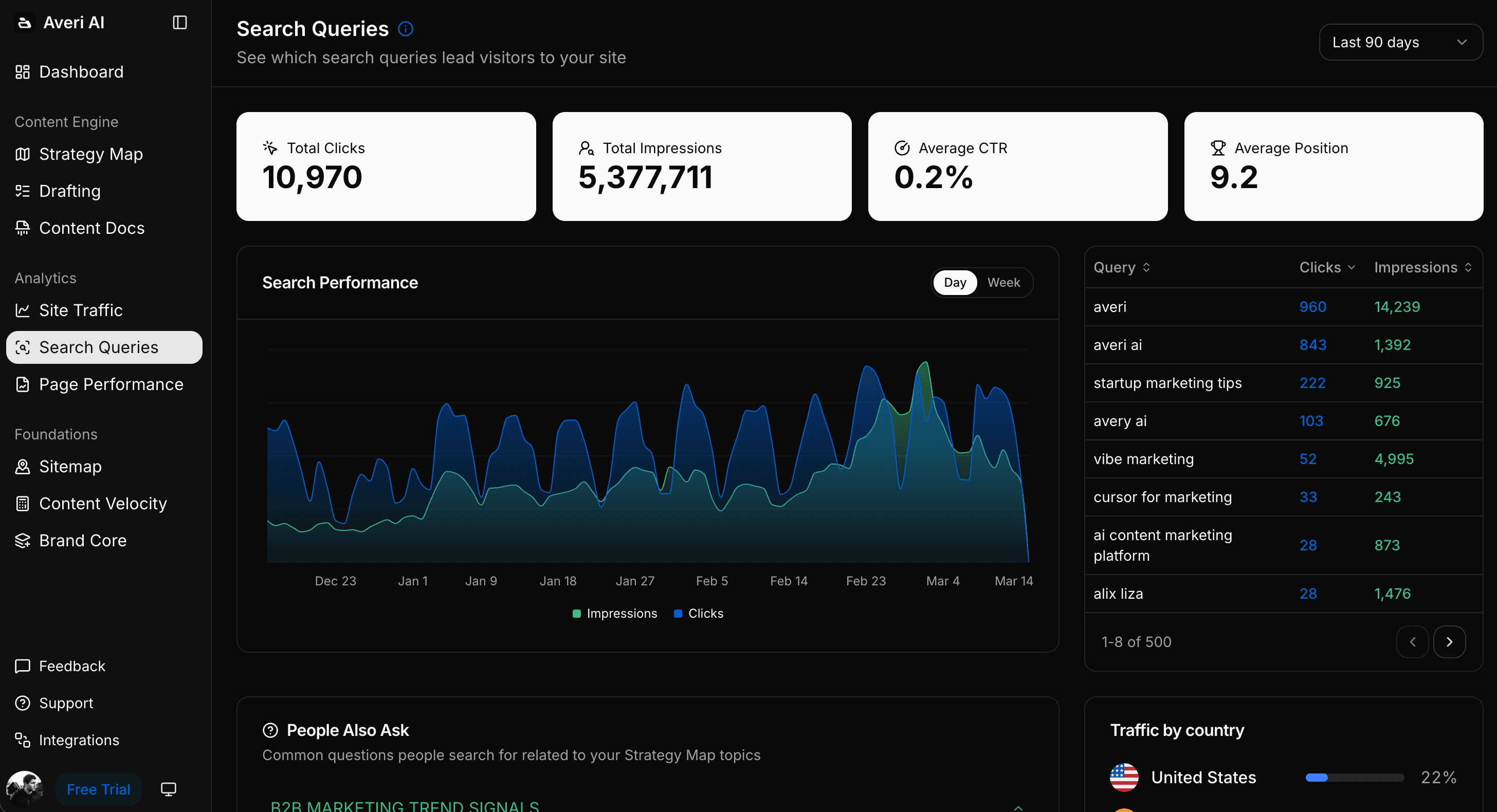

Step 1: Pull your top 5 listicles by impressions from Google Search Console. These are the pieces with the highest existing ranking signal, where citation surface lift produces the biggest compound return.

Step 2: Score each piece against the four properties. One specific entity per item? Yes/no. One hyperlinked stat per item? Yes/no. ItemList schema applied? Yes/no. TL;DR with emoji-stat bullets? Yes/no. Score is x/4.

Step 3: Prioritize the rewrites. Pieces scoring 0–2 out of 4 need substantial rewrites. Pieces scoring 3 out of 4 need targeted fixes (usually adding schema or rewriting the TL;DR). Pieces scoring 4 out of 4 are working — leave them alone.

Step 4: Rewrite the highest-impressions / lowest-score pieces first. A piece at 5,000 monthly impressions scoring 1/4 produces more citation lift from rewriting than a piece at 500 monthly impressions scoring 1/4. Capacity goes to the high-ranking pieces with the biggest gaps.

Step 5: Track citation rate before and after. Run a fixed set of buyer-question prompts across ChatGPT, Perplexity, Claude, and Gemini on the rewritten pieces. Citation lift typically appears within 30–60 days.
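Steps 2 through 4 reduce to a small scoring routine. The sketch below is one way to run it, assuming you have already answered the four yes/no questions by hand. The `Listicle` fields, the sample URLs, and the impression counts are hypothetical, and the sort order (impressions descending, then score ascending) is one reading of "highest-impressions / lowest-score first," not the only one.

```python
from dataclasses import dataclass


@dataclass
class Listicle:
    url: str
    monthly_impressions: int
    specific_entities: bool
    hyperlinked_stats: bool
    itemlist_schema: bool
    emoji_stat_tldr: bool

    @property
    def score(self) -> int:
        """Number of the four properties the piece satisfies (0-4)."""
        return sum(
            [
                self.specific_entities,
                self.hyperlinked_stats,
                self.itemlist_schema,
                self.emoji_stat_tldr,
            ]
        )


def action(score: int) -> str:
    """Map a score to the Step 3 recommendation."""
    if score == 4:
        return "leave alone"
    if score == 3:
        return "targeted fix"
    return "substantial rewrite"


def prioritize(pieces):
    """Drop 4/4 pieces, then sort by impressions (high first), then score (low first)."""
    todo = [piece for piece in pieces if piece.score < 4]
    return sorted(todo, key=lambda piece: (-piece.monthly_impressions, piece.score))


# Hypothetical audit results.
pieces = [
    Listicle("/top-seo-tools", 500, False, False, True, False),
    Listicle("/geo-tactics", 8000, True, True, True, True),
    Listicle("/content-tools", 5000, True, True, False, True),
    Listicle("/best-crms", 5000, True, False, False, False),
]

queue = prioritize(pieces)
for piece in queue:
    print(piece.url, f"{piece.score}/4", action(piece.score))
```

If you would rather weight the gap directly, a key such as impressions multiplied by missing properties is an equally defensible assumption.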

Averi's content engine is built so listicles ship with the four properties by default — entity-specific items, hyperlinked stat enforcement, ItemList schema validation, and TL;DR generation are all standard output.

Ready to Ship Listicles That Get Cited?

The four properties are doable manually. Adding ItemList schema, writing entity-specific items, sourcing hyperlinked stats, and shipping a citation-ready TL;DR runs about 2–3 hours per piece if you're starting from scratch. Averi's content engine is built to ship listicles with the four properties by default: entity enforcement, stat sourcing, schema validation, and TL;DR generation. The Solo plan is $99/month with a 14-day free trial.

FAQs

Are listicles actually better than long-form articles for AI citation in 2026?

Empirically yes, per recent format analysis showing listicles cited in 21.9% of AI Overview responses versus 16.7% for articles. The format isn't inherently better — it's that the structural properties of listicles (discrete extractable units, ItemList schema compatibility, direct match to buyer-question prompts) align with how AI engines extract. Long-form articles still win for complex topics that resist list structure, but the format mix should weight more toward listicles than most B2B SaaS blogs currently do.

How many items should a citation-ready listicle have?

7–15 items is the working range. Fewer than 7 and the format starts feeling thin to readers. More than 15 and AI engines often truncate extraction at the first 10–12 items, losing the later content. The sweet spot for most B2B SaaS topics is 8–12 items with each item carrying the four citation-ready properties (specific entity, hyperlinked stat, schema-marked, contributes to the TL;DR).

Does the order of items in a listicle affect citation rates?

Yes. AI engines extract more aggressively from the first 30% of a page, so the items in positions 1–4 get cited more frequently than items in positions 8–12. Order your strongest items first — the most differentiated, the highest-credibility recommendations, the ones with the cleanest hyperlinked stats. Don't bury the strongest item at position 7 because the format suggests "save the best for later."

What's the minimum schema markup a listicle needs?

ItemList schema at minimum, with each item rendered as a ListItem with position, name, description, and url. Add FAQPage schema if the listicle includes a Q&A section. Add Product schema if the listicle compares specific commercial products. Add Article schema as the page-level wrapper. Layered schema implementations produce stronger citation lift than single-schema setups.
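For the Q&A layer, a minimal Python sketch of FAQPage JSON-LD follows. The helper name `build_faq_page` is hypothetical; the `FAQPage`, `Question`, `acceptedAnswer`, and `Answer` names come from schema.org. The sample pair reuses a question from this FAQ.

```python
import json


def build_faq_page(qa_pairs):
    """Return a schema.org FAQPage as a JSON-LD dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


faq = build_faq_page(
    [
        (
            "How many items should a citation-ready listicle have?",
            "7–15 items is the working range.",
        ),
    ]
)

# ensure_ascii=False keeps the en dash readable in the emitted JSON.
faq_json = json.dumps(faq, indent=2, ensure_ascii=False)
print(faq_json)
```

The output can sit in its own `<script type="application/ld+json">` tag next to the ItemList markup; validate both before publishing.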

Can I retroactively add schema to existing listicles without rewriting them?

Yes, and it's the highest-leverage hour of work most B2B SaaS teams aren't doing. Adding ItemList schema to an existing listicle takes 30–60 minutes per piece and produces citation lift within 30–60 days without touching the content. For listicles already ranking on Google, the schema addition is pure upside. The content rewrite for items that lack specific entities or hyperlinked stats produces additional lift but isn't required for the schema fix to work.

Should listicles always have a TL;DR at the top?

For B2B SaaS content optimized for AI citation, yes. The TL;DR is the single highest-yield citation block on the page — it's what AI engines pull when a buyer asks "what does X say about Y." Listicles without TL;DRs cede that citation real estate entirely. The format that works: 3–5 emoji-stat bullets, 100–200 words total, each bullet carrying one hyperlinked claim. Same pattern works for non-listicle articles.

How do I know if my listicle is actually getting cited?

Run a fixed set of 5–10 buyer-question prompts across ChatGPT, Perplexity, Claude, and Gemini. Ask the prompts your listicle is designed to answer ("what are the best X for Y," "compare A vs B," etc.). Track whether your domain appears in the cited sources. Repeat monthly. Citation lift from rewriting typically appears within 30–60 days. If it doesn't, the rewrite missed something — usually either the schema didn't validate properly or the items still aren't entity-specific enough.
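Tracking is simple bookkeeping once the prompts have been run. The sketch below assumes you record each run by hand as an engine name, a prompt, and the list of cited URLs. It does not call any AI engine API. The function name `citation_rate`, the record layout, and the sample domains are all hypothetical.

```python
from urllib.parse import urlparse


def citation_rate(observations, domain):
    """Share of prompts per engine where `domain` (or a subdomain) was cited."""
    tallies = {}  # engine -> (hits, total prompts)
    for obs in observations:
        hosts = {urlparse(url).hostname or "" for url in obs["cited_urls"]}
        cited = any(host == domain or host.endswith("." + domain) for host in hosts)
        hits, total = tallies.get(obs["engine"], (0, 0))
        tallies[obs["engine"]] = (hits + int(cited), total + 1)
    return {engine: hits / total for engine, (hits, total) in tallies.items()}


# Hypothetical, manually recorded results from one monthly run.
observations = [
    {
        "engine": "ChatGPT",
        "prompt": "what are the best CRMs for B2B SaaS",
        "cited_urls": ["https://www.example.com/best-crms", "https://other.test/x"],
    },
    {
        "engine": "ChatGPT",
        "prompt": "compare CRM A vs CRM B",
        "cited_urls": [],
    },
    {
        "engine": "Perplexity",
        "prompt": "what are the best CRMs for B2B SaaS",
        "cited_urls": ["https://other.test/y"],
    },
]

rates = citation_rate(observations, "example.com")
print(rates)
```

Keep the prompt set fixed between runs so the before-and-after comparison measures the rewrite rather than a change in questions.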