AI Agents Are Doing March Madness Brackets. Your Content Calendar Should Be Next.

5 minutes

TL;DR:

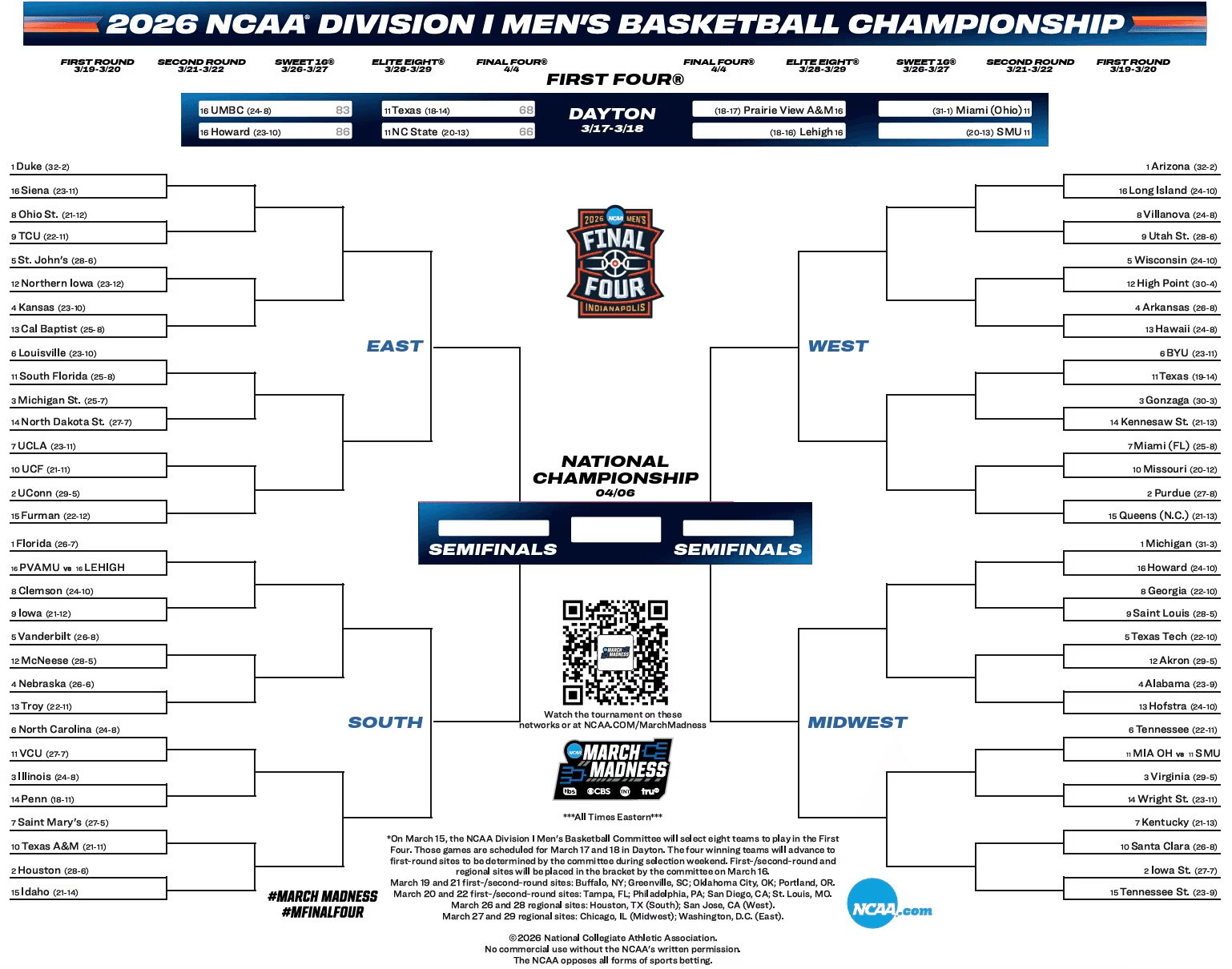

🏀 Every major AI — ChatGPT, Claude, Gemini, Copilot — filled out a 2026 March Madness bracket this week, analyzing efficiency data, injury reports, and historical upset patterns

📊 Claude's AI bracket ranked in the top 1.8% of ESPN entries last year — better than most human experts

📅 85% of marketers use AI for content planning, but only 10.8% use it for actual automation — the gap between "AI assists me" and "AI operates for me" is where the opportunity lives

🔌 The same capabilities powering AI brackets (data ingestion, pattern recognition, probabilistic decision-making) are exactly what content engines need

⚡ The question isn't whether AI can plan your content. It's why you're still doing it manually when AI is already outperforming experts at picking basketball games.

Zach Chmael

CMO, Averi

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."



What Happened This Week That Should Make Every Marketer Uncomfortable?

March Madness tips off today. And this year, something happened that barely made the marketing news cycle but should have been front-page for every content strategist in the country.

CBS Sports published a side-by-side comparison of bracket predictions from ChatGPT, Microsoft Copilot, and Google Gemini. Yahoo Sports had Claude simulate the entire tournament. Axios reported that AI brackets are now a fixture of the tournament — not a novelty, but a legitimate analytical tool. Dedicated platforms like Rithmm now exist solely to generate AI-powered bracket predictions.

These aren't toy demos.

One AI bracket system ranked in the top 1.8% of all ESPN Tournament Challenge entries last year. It correctly predicted all four Elite Eight teams. The model was trained on KenPom efficiency data, historical upset rates by seed matchup, injury reports, coaching records, and venue factors — then stress-tested round by round.

Read that last sentence again.

Trained on domain-specific data. Fed historical patterns. Given real-time context. Stress-tested against probable outcomes.

Now ask yourself: why is AI doing this for basketball brackets but not for your content calendar?

The Bracket Problem and the Content Problem Are the Same Problem

A March Madness bracket is a prediction engine. You're taking 68 teams, analyzing their strengths, weaknesses, matchup dynamics, historical trends, and contextual factors (injuries, momentum, venue) — and making 63 sequential decisions about which ones will advance.

A content calendar is a prediction engine too. You're taking hundreds of potential topics, analyzing search volume, competitive density, audience intent, trending signals, and your own performance history — and making sequential decisions about which ones to produce, when, and in what format.

The inputs are different. The cognitive architecture is identical.

Both require:

Data ingestion — pulling structured information from multiple sources

Pattern recognition — identifying what's worked historically and what's changing

Probabilistic reasoning — weighing competing signals when certainty is impossible

Sequential decision-making — each choice affecting the value of every subsequent choice

Real-time adaptation — adjusting when conditions change mid-cycle

AI is already doing all five for basketball. It's barely doing any of them for content marketing. And the reason isn't capability. It's infrastructure.

Why AI Is Better at Brackets Than Content Calendars (For Now)

The bracket problem has something the content calendar problem doesn't: clean, centralized data.

When an AI fills out a March Madness bracket, it has access to structured datasets — KenPom ratings, Bart Torvik efficiency metrics, historical tournament results going back decades, current-season records, conference tournament performance, Vegas lines. All standardized. All machine-readable. All in one place.

When a marketer asks AI to plan their content calendar, what does it have access to?

Usually nothing. No brand context. No competitive intelligence. No performance history. No keyword research. No understanding of which topics you've already covered. No awareness of what your ICP actually cares about.

So the AI does what anyone would do with zero context: it generates generic suggestions that could apply to any company in any industry at any stage.

"10 Tips for Better Email Marketing."

"How to Leverage Social Media in 2026."

The content marketing equivalent of picking all 1-seeds to win every game.

This isn't an AI capability problem. It's a context problem.

And the data proves it. A CoSchedule survey found that 85% of marketers use AI for content planning tasks. But when you dig deeper, the picture looks a lot less impressive. Only 10.8% of marketing teams use AI for actual automation — the rest are using it for ideation and first drafts. Most marketers are using AI like a suggestion box, not an operating system.

The gap between "AI helps me brainstorm" and "AI runs my content operations" is the gap between asking ChatGPT to fill out a bracket with no data and training Claude on the entire KenPom database with historical upset rates and injury reports.

Context is the difference. And context requires infrastructure.

What a March Madness Bracket Can Teach You About Content Strategy

The best AI bracket builders don't just dump data into a model and ask for picks. They engineer the context. And the methodology is instructive for anyone building a content engine.

Lesson 1: Train on Your Own Data, Not Generic Benchmarks

The AI bracket that hit the top 1.8% on ESPN wasn't using generic basketball knowledge. It was trained on specific efficiency metrics for every team in the tournament field — adjusted offensive and defensive ratings, tempo data, strength of schedule. Domain-specific data, not general-purpose knowledge.

Your content strategy should work the same way.

An AI that knows your brand voice, your competitive landscape, your historical content performance, and your audience's actual search behavior will outperform one that's generating topics from a blank prompt. Every time. Most of the 75-85% of marketing teams using AI tools are giving it general-purpose prompts and getting general-purpose results.

Lesson 2: Historical Patterns Compound, But Only If You Capture Them

Bracket builders trained their AI on historical upset rates by seed matchup — not just current-season data. The AI learned that 12-seeds upset 5-seeds at a specific historical rate, that certain defensive profiles perform better in tournament settings, that coaching experience in March correlates with late-game execution.

Content works the same way.

Your best-performing topics, your fastest-ranking content types, your highest-converting formats — these patterns exist in your data. But most teams never capture them in a way AI can use. Every piece you publish should feed back into the system that decides what to publish next.

That's how content compounds.
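As a minimal sketch of that feedback loop, assume you log one number per published piece (conversions here) along with its format. The class name, the format labels, and the sample figures are all hypothetical, and a real system would track far more than a single metric.

```python
class PerformanceLog:
    """Running record of how each content format has performed (hypothetical)."""

    def __init__(self):
        self._totals = {}
        self._counts = {}

    def record(self, content_format, conversions):
        """Feed one published piece's result back into the log."""
        self._totals[content_format] = self._totals.get(content_format, 0.0) + conversions
        self._counts[content_format] = self._counts.get(content_format, 0) + 1

    def average(self, content_format):
        """Mean conversions per piece for a format (0.0 if never published)."""
        count = self._counts.get(content_format, 0)
        if count == 0:
            return 0.0
        return self._totals[content_format] / count

    def ranked_formats(self):
        """Formats ordered from best to worst historical average."""
        return sorted(self._counts, key=self.average, reverse=True)


# Made-up results for four published pieces.
log = PerformanceLog()
for content_format, conversions in [
    ("comparison", 12),
    ("listicle", 3),
    ("comparison", 8),
    ("how-to", 6),
]:
    log.record(content_format, conversions)

print(log.ranked_formats())
```

The point is the shape, not the math: every published piece updates the record, and the planning step reads from that record instead of starting from a blank prompt.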

Lesson 3: Upsets Matter More Than Chalk

Here's the most interesting bracket insight: a bracket where every favorite wins is almost certainly wrong. Even if each individual pick is the most probable outcome, the aggregate probability of all favorites winning is vanishingly small.
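To see how quickly that aggregate probability collapses, here is a rough back-of-the-envelope sketch. It assumes every game is independent and that the favorite wins each one with the same probability; both are simplifications, and the 70% and 90% figures are illustrative rather than real tournament rates.

```python
import math


def all_favorites_probability(win_probs):
    """Chance that every favorite wins, assuming the games are independent."""
    return math.prod(win_probs)


GAMES_IN_BRACKET = 63

# Illustrative only: the favorite wins each game 70% of the time.
chalk_70 = all_favorites_probability([0.70] * GAMES_IN_BRACKET)

# Even with a very generous 90% per game, the all-chalk bracket rarely survives.
chalk_90 = all_favorites_probability([0.90] * GAMES_IN_BRACKET)

print(f"70% favorites: {chalk_70:.2e}")
print(f"90% favorites: {chalk_90:.2e}")
```

Under these assumptions the 70% case lands on the order of one in several billion, and even the 90% case comes out at roughly a tenth of a percent. Each pick looks safe on its own; the full set almost never holds.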

The best bracket builders explicitly built in upset modeling — identifying the 8-10 lower-seeded teams likely to win in the first round based on matchup-specific factors.

Content calendars have the same dynamic.

A calendar filled exclusively with high-volume, high-competition keywords — the "favorites" — is almost certainly suboptimal.

The best content strategies deliberately include "upset" plays: low-competition keywords with high intent, emerging topics before they trend, contrarian angles on saturated subjects. The teams that only chase head keywords are the ones picking all 1-seeds — safe, obvious, and unlikely to win.

Lesson 4: The Model Needs a Coach

Even the most sophisticated AI bracket required human guidance.

The builder spent an entire day training Claude, walking through matchups round by round, stress-testing picks, and adjusting reasoning. The AI did the analysis. The human set the framework, validated the logic, and caught the blind spots.

This is the AI + human model that actually works for content.

AI handles the data-intensive work — keyword research, competitive analysis, trend detection, content scoring, draft generation. Humans handle the strategic judgment — brand voice, editorial perspective, audience intuition, creative risk.

Two hours approving, not twenty hours creating.

From Brackets to Content Engines: What Has to Change

The March Madness AI bracket works because someone built the infrastructure for it to work.

They collected the right data, structured it for the model, created the evaluation framework, and established the feedback loop.

Your content calendar needs the same infrastructure. Here's what that looks like:

Brand context as a persistent data layer. Not a Google Doc that AI reads once. A living system that captures your voice, positioning, ICPs, and competitive landscape and applies it automatically to every decision. The bracket equivalent of loading KenPom data for the entire field.

Performance data feeding back into planning. Your analytics shouldn't be a report you read. They should be the input your AI uses to recommend what to create next — identifying which topics are trending, which pieces are underperforming, and where new opportunities are emerging. The bracket equivalent of tracking how seed matchups actually play out over years.

Competitive monitoring as a live signal. Just as bracket builders track injuries and conference tournament results right up to Selection Sunday, your content engine should be tracking what competitors publish, what they rank for, and where they're leaving gaps. Not monthly. Continuously.

A scoring system that balances multiple dimensions. Brackets weight offense vs. defense, tempo vs. efficiency, regular season vs. tournament performance. Your content decisions should balance SEO potential against GEO citability, audience intent against competitive difficulty, topical authority against emerging opportunity.
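One way to picture a multi-dimensional score is a simple weighted sum. The sketch below is an illustration under stated assumptions, not how any particular product scores content: the field names, the 0-to-1 scales, the weights, and the two sample topics are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TopicSignals:
    """Hypothetical signals for one candidate topic, each scaled 0 to 1."""
    name: str
    seo_potential: float
    geo_citability: float
    audience_intent: float
    competitive_difficulty: float  # higher means harder to rank


# Assumed weights; they sum to 1 so scores stay on a 0-to-1 scale.
WEIGHTS = {"seo": 0.3, "geo": 0.2, "intent": 0.3, "ease": 0.2}


def score(topic, weights=WEIGHTS):
    """Weighted sum of the signals; difficulty is inverted so easier scores higher."""
    return (
        weights["seo"] * topic.seo_potential
        + weights["geo"] * topic.geo_citability
        + weights["intent"] * topic.audience_intent
        + weights["ease"] * (1.0 - topic.competitive_difficulty)
    )


head_keyword = TopicSignals("email marketing tips", 0.9, 0.4, 0.5, 0.9)
niche_keyword = TopicSignals("email onboarding for B2B SaaS", 0.5, 0.7, 0.9, 0.2)

ranked = sorted([head_keyword, niche_keyword], key=score, reverse=True)
for topic in ranked:
    print(f"{topic.name}: {score(topic):.2f}")
```

With these made-up numbers the niche topic outranks the head keyword, because high intent and low difficulty outweigh raw search potential. Change the weights and the ranking changes with them, which is exactly the judgment call a human still has to make.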

This isn't hypothetical. This is what content engines are built to do.

How Averi Turns Your Content Calendar Into a Bracket-Level Prediction Engine

Averi was built around the same principle that makes AI brackets work: better context produces better predictions.

Brand Core captures your voice, positioning, audience profiles, and competitive landscape in a structured layer that informs every content decision — the content equivalent of loading the full KenPom database. Every piece your AI generates starts with complete context, not a blank prompt.

Strategy Map organizes your content pillars, focus areas, and topics into a visual strategic framework — so the AI isn't just generating random suggestions. It's making recommendations that advance a coherent strategy, the same way a bracket builder considers how early-round picks affect late-round matchup dynamics.

Content Queue uses keyword analysis, competitor monitoring, and trend detection to proactively recommend what to create next. You're not staring at a blank calendar wondering what to write. You're choosing from a pre-vetted pipeline of opportunities the system has already qualified.

Content Scoring balances SEO and GEO dimensions — so every piece isn't just optimized for Google rankings but structured for AI citations too. Two scoring dimensions, weighted and combined, exactly like how bracket models balance offensive and defensive efficiency.

Analytics and Library close the feedback loop. Every published piece feeds performance data back into the system, making future recommendations smarter. Your Library grows with each article, giving AI more context for the next draft. Last month's results shape next month's strategy — automatically.

The result: a content operation that runs less like a blank editorial calendar and more like a prediction engine trained on your specific data, your specific market, and your specific performance history.

Two hours a week. Not twenty.

The Real Lesson From March Madness

Axios noted something that should stick with every marketer: the evolution of AI brackets over the past three years mirrors the broader trajectory of AI capability. What seemed like a gimmick two years ago is now a legitimate analytical tool that outperforms most human experts.

Content marketing is on the same trajectory.

Right now, most marketers use AI the way casual fans fill out a bracket — gut instinct, surface-level knowledge, a few popular picks they've seen on social media. The ones who are building real infrastructure — data-rich context layers, automated feedback loops, multi-dimensional scoring systems — are building the content equivalent of a top-2% bracket.

The tournament starts today. Sixty-eight teams. Sixty-three bracket picks. Millions of brackets.

Your content calendar has just as many variables, just as many decisions, just as many competing signals.

The only question is whether you're going to keep filling it out by hand — or build the engine that fills it out for you.

FAQs

Can AI really plan a content calendar better than a human?

AI can process more data signals at once than any human can: keyword trends, competitive gaps, performance history, seasonal patterns, and audience behavior. A CoSchedule survey found 85% of marketers already use AI for content planning. The difference is context. AI with rich brand data and performance feedback produces recommendations specific to your business; AI with no context produces generic ones. The quality of the output depends largely on the quality of the input infrastructure.

How is a content calendar like a March Madness bracket?

Both are prediction engines that require data ingestion, pattern recognition, probabilistic reasoning, and sequential decision-making. A bracket analyzes team efficiency, historical matchups, and contextual factors to predict 63 outcomes. A content calendar analyzes search data, competitive density, audience intent, and performance history to decide which topics to produce and when. Same cognitive architecture, different domains.

What data does AI need to plan content effectively?

At minimum: your brand voice and positioning, target audience profiles, competitive landscape, historical content performance, keyword and search data, and industry trend signals. This is why content engines that centralize brand context outperform standalone AI tools — they provide the structured data layer AI needs to make informed decisions rather than generic ones.

Why don't more marketers automate their content planning?

Context infrastructure. Only 10.8% of marketing teams use AI for actual automation, even though 85% use it for content planning tasks. The gap exists because most marketers don't have their brand data, performance metrics, and competitive intelligence centralized in a format AI can access. Their data is scattered across Google Analytics, Search Console, CRM, spreadsheets, and documents, which is why a unified content engine matters.

What's the difference between AI-assisted planning and AI-operated planning?

AI-assisted planning is asking ChatGPT for topic ideas — the equivalent of checking ESPN's expert picks before filling out your bracket by hand. AI-operated planning is a system that ingests your brand context, monitors competitor activity, analyzes keyword opportunities, and proactively recommends a prioritized content queue — the equivalent of a trained model simulating every matchup 10,000 times.

How does this connect to SEO and GEO?

A bracket model balances offensive and defensive efficiency. A content engine should balance SEO and GEO — optimizing for Google rankings and AI search citations simultaneously. Content scoring that weights both dimensions ensures every piece in your calendar serves dual discovery channels, not just one.

What should I do differently about my content calendar right now?

Three things: centralize your brand context in a persistent system (not scattered docs), connect your analytics to your planning so performance data feeds future decisions, and stop treating your content calendar as a static document. Treat it as a dynamic prediction engine that gets smarter with every piece you publish. That's the content engine model — and it's how you go from filling out brackets by hand to building the system that fills them out for you.