Google's New AI Optimization Guide Just Killed 4 GEO Myths (And Validated 3 Things Smart Companies Already Do)


TL;DR

🚫 LLMs.txt files do nothing for Google AI Overviews. Google says so explicitly.

🚫 "Chunking" content for AI extraction isn't required. Google understands nuance on full-length pages.

🚫 Structured data isn't required for AI search visibility (still earns rich results in regular Search).

🚫 Inauthentic mention-building doesn't help. Spam systems already block what AI features depend on.

✅ Non-commodity, first-hand experience content is the single biggest visibility lever.

✅ Multimodal content (images and video) gets surfaced in AI responses.

✅ Technical hygiene (indexable, crawlable, semantic HTML) is the floor — but it has to be there.

🟡 GEO as a discipline isn't dead. It's just bigger than Google. ChatGPT, Claude, and Perplexity still operate by different citation rules.

Zach Chmael

CMO, Averi

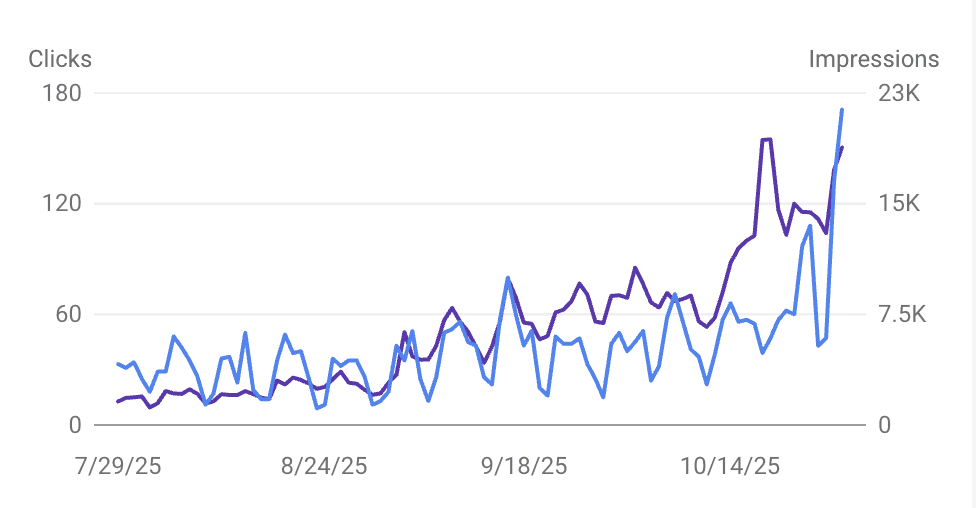

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."

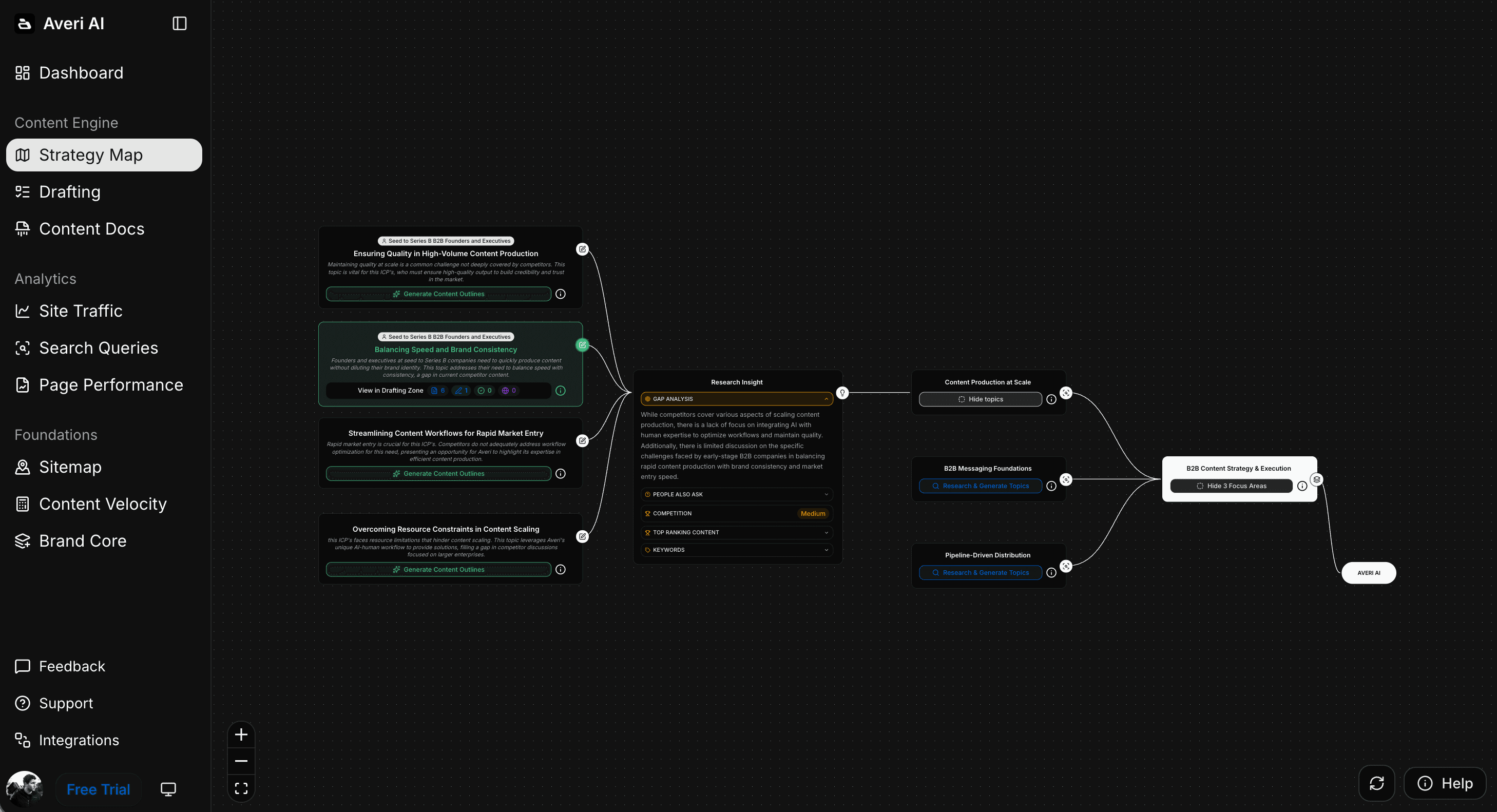

Your content should be working harder.

Averi's content engine builds Google entity authority, drives AI citations, and scales your visibility so you can get more customers.


On May 15, Google quietly published its first official guide to optimizing for generative AI search.

One sentence in that guide invalidates roughly eighteen months of "GEO course" content sold across LinkedIn and Substack: from Google's perspective, "AEO" and "GEO" are not separate disciplines. They're SEO. The same SEO you've been doing.

That single line will quietly retire a cottage industry.

But the more useful takeaway is what Google said next: four specific GEO tactics that don't work, and three quality signals that absolutely do.

We've been running a content engine that hit 6,000% organic traffic growth in 10 months with no paid acquisition and a one-person marketing team. We read the entire guide against our actual operating playbook. Here's the audit, and here's what you should change starting Monday.

What Google Actually Said About GEO

The exact wording matters. Google's position: "optimizing for generative AI search is optimizing for the search experience, and thus still SEO."

Their AI Overviews and AI Mode features are powered by two mechanisms — retrieval-augmented generation, which pulls from the same index that ranks every other Search result, and query fan-out, which spins up related sub-queries to gather a fuller answer.

Both rely on Google's core ranking systems.

The implication is direct. For Google's AI surfaces specifically, there is no separate "GEO algorithm" sitting underneath. There is one ranking system, and the AI layer reads from it.

Why does this matter for a startup?

Because most of the GEO advice floating around the founder ecosystem treats Google AI Overviews as if it were a third-party citation engine like ChatGPT.

It isn't.

Google AI Overviews now appear on 48% of queries, and getting featured in them is mostly a function of ranking well in regular Search and structuring content for direct answers. That's it.

4 GEO Tactics Google Just Officially Told You To Stop Doing

This is the section where some of our own past advice needs revising. We've used some of these tactics ourselves. The guide makes clear which ones to retire and which to reframe.

Myth 1: LLMs.txt files improve AI visibility

The pitch: place a markdown manifest of your site at /llms.txt to help AI systems "understand" your content.

The reality, per Google: you don't need to create LLMs.txt files or any "special" machine-readable markup to appear in their generative AI features. Google may discover and index many file types, but that doesn't mean any are treated specially.

We've seen SEO platforms charging $79/month for "GEO file generation". For Google specifically, that fee buys you nothing.

There's a more nuanced case for LLMs.txt with Anthropic's Claude or with smaller open-source AI surfaces, but for the biggest AI search experience in the world, it's noise.

Myth 2: You have to "chunk" content for AI to read it

The pitch: break long content into bite-sized, 50-word answer blocks so AI systems can extract them more easily.

Google's position: there's no requirement to break content into tiny pieces, no ideal page length, and Google's systems are designed to understand nuanced topics across a full page.

Our 120-to-180-word section length and direct-answer H2 structure still work, but defend them on reader-experience grounds, not because "AI prefers chunks." Structuring content for direct answers is a clarity practice that benefits humans and AI equally. Chunking for AI as a goal in itself was always a misread of how retrieval works.

Myth 3: Structured data is the secret to AI citation

The pitch: add Article schema, FAQPage schema, ItemList schema, VideoObject schema, and watch your AI citations climb.

Google: "structured data isn't required for generative AI search, and there's no special schema.org markup you need to add."

That's a sharp correction.

Schema is still worth implementing because it helps with rich results in regular Search (which feed AI Overviews indirectly), but it's not a citation lever. If you've been treating schema as the differentiator in your AI strategy, recalibrate. We covered this nuance in our schema markup guide, but our framing was too strong.

The honest version: schema unlocks rich snippet eligibility. Rich snippets help with AI Overview surfacing. Causation is two steps removed, not direct.
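For teams keeping schema as a rich-results play, here is a minimal sketch of generating Article markup as JSON-LD. The helper name `article_jsonld` and every sample value (headline, author, date, URL) are hypothetical placeholders, and the property set is a reasonable starting point rather than a complete or required list.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return an Article JSON-LD snippet wrapped in a script tag.

    Hypothetical helper for illustration; the properties used here are
    standard schema.org Article properties, not a required set.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date string
        "mainEntityOfPage": url,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values only; swap in your own page details.
snippet = article_jsonld(
    headline="Why We Waived the Inspection",
    author="Jane Doe",
    date_published="2026-01-15",
    url="https://example.com/blog/inspection",
)
print(snippet)
```

Paste the output into the page `<head>` and validate it with a rich-results testing tool before shipping. Treat it as eligibility for rich results, not as a citation lever.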

Myth 4: Buying "AI mentions" builds entity authority

The pitch: pay to be quoted in scraped roundup posts, AI-friendly directories, and synthetic Reddit threads to build "citation signals."

Google: seeking inauthentic mentions across the web isn't as helpful as it might seem, because core ranking systems block spam, and the AI features depend on those same systems.

If your link-building agency is selling "AI citation packages," ask them to define authenticity.

The honest version of mention-building is earning real coverage in real publications, getting quoted in journalist roundups, and contributing to active communities. The synthetic version is being filtered out as fast as it's deployed.

The 3 Things Smart Startups Were Already Doing Right

The guide also confirms what's working. If you're already doing these three things, you can ignore most of the noise.

Non-commodity content is the single biggest lever

Google's own framing is striking.

They contrast generic listicles like "7 Tips for First-Time Homebuyers" with experience-led pieces like "Why We Waived the Inspection & Saved Money: A Look Inside the Sewer Line."

The first kind is commodity content. The second is non-commodity: first-person, specific, falsifiable, hard to replicate without lived experience.

This is the single most important sentence in the guide: "Creating content that people find unique, compelling, and useful will likely influence your website's presence in generative AI search in the long run more than any of the other suggestions in this guide."

We tested this directly.

Our pieces that include phrases like "we tested," "in our case," and "this is what failed first" earned 3x the impressions of equivalent pieces written in a generic third-person voice.

That's not an AI hack. That's reader trust, which AI systems then reward.
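If you want to see which drafts actually contain those markers before comparing impressions, a rough sketch might look like this. The marker list comes from the phrases quoted above; the function name `count_markers` and the sample draft are hypothetical, and plain phrase matching is only a crude proxy for genuine first-hand experience.

```python
# Marker phrases quoted in the article; extend with your own.
FIRST_PERSON_MARKERS = ("we tested", "in our case", "this is what failed first")


def count_markers(text: str) -> dict[str, int]:
    """Count case-insensitive occurrences of each marker phrase."""
    lowered = text.lower()
    return {marker: lowered.count(marker) for marker in FIRST_PERSON_MARKERS}


# Hypothetical draft text, for illustration only.
draft = (
    "We tested two onboarding flows. In our case, the shorter one won. "
    "We tested again a month later."
)
counts = count_markers(draft)
print(counts)
```

A draft that scores zero everywhere is a candidate for the swap test, not proof of commodity content.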

Multimodal content gets surfaced

Google confirms that images and video bring more opportunities for sites to appear beyond text links in AI responses. This validates the multimodal content approach we've been pushing — original visuals, structured alt text, companion video for pillar pieces.

The execution detail that matters: original images outperform stock, and AI-aware alt text (descriptive plus fact-dense plus semantically specific) outperforms generic alt text. We've published over 200 articles using this approach. The pattern holds.
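One way to find images that still need descriptive alt text is a small audit over a page's HTML. This is a sketch using only Python's standard library. The `GENERIC_ALTS` set and the `audit_alt_text` name are assumptions made for illustration, and what counts as "generic" is a judgment call you should tune.

```python
from html.parser import HTMLParser

# Hypothetical list of alt values treated as generic; adjust to taste.
GENERIC_ALTS = {"image", "photo", "picture", "screenshot", "graphic"}


class AltTextAudit(HTMLParser):
    """Collect <img> tags whose alt text is missing, empty, or generic."""

    def __init__(self) -> None:
        super().__init__()
        self.flagged: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        src = attr_map.get("src") or ""
        alt = attr_map.get("alt")
        if alt is None:
            self.flagged.append((src, "missing alt"))
        elif not alt.strip():
            # Empty alt is acceptable only for purely decorative images.
            self.flagged.append((src, "empty alt"))
        elif alt.strip().lower() in GENERIC_ALTS:
            self.flagged.append((src, "generic alt"))


def audit_alt_text(html: str) -> list[tuple[str, str]]:
    """Return (src, reason) pairs for images that need attention."""
    parser = AltTextAudit()
    parser.feed(html)
    parser.close()
    return parser.flagged


# Hypothetical page fragment, for illustration only.
sample = """
<img src="chart.png" alt="Line chart of monthly organic sessions">
<img src="hero.png" alt="image">
<img src="team.jpg">
"""
flagged = audit_alt_text(sample)
print(flagged)
```

The audit only flags candidates. Writing alt text that is descriptive and specific is still a manual job.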

Technical hygiene is the floor

Indexable, crawlable, fast, semantic HTML, low duplication. None of this is exciting. All of it is required. If your site fails on Core Web Vitals or has crawl errors, no amount of GEO optimization will fix the gap. The guide's framing: a page must be indexed and eligible to show with a snippet to even be considered for AI features.

For startups on Framer, Webflow, or WordPress, this is mostly out-of-the-box. The trap is custom JavaScript implementations that block crawlers without your knowing. Run Search Console at least monthly and fix indexing issues before they compound.
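Between Search Console checks, a quick static test can catch the two most common self-inflicted blocks: a robots.txt rule and a `noindex` meta tag. The sketch below uses Python's standard library on strings you have already fetched. The function names and sample inputs are hypothetical, and it does not cover `X-Robots-Tag` headers or content that only appears after JavaScript rendering.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Record directives from any <meta name="robots"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = dict(attrs)
        if (attr_map.get("name") or "").lower() == "robots":
            content = attr_map.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Check a URL against robots.txt rules supplied as text."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules.can_fetch(user_agent, url)


def is_indexable(html: str) -> bool:
    """Return False if the page carries a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" not in parser.directives


# Hypothetical inputs, for illustration only.
robots_txt = "User-agent: *\nDisallow: /private/\n"
page_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'

print(is_crawlable(robots_txt, "https://example.com/blog/post"))
print(is_crawlable(robots_txt, "https://example.com/private/draft"))
print(is_indexable(page_html))
```

This complements Search Console rather than replacing it, since only Google's own reports show what was actually indexed.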

Why GEO Isn't Dead — It's Just Bigger Than Google

Here's where the smart strategists will split from the obituary writers. Google's guidance is precise: it applies to Google's AI features. It does not apply to ChatGPT. It does not apply to Claude. It does not apply to Perplexity. It does not apply to the standalone Gemini app.

Those engines run on different mechanics. Reddit threads dominate ChatGPT citations because the model leans heavily on human-validated answers. Perplexity prioritizes real-time accuracy and source freshness. Claude's Projects feature pulls from user-uploaded knowledge and connected services. Each one has its own optimization surface.

So GEO as a discipline isn't dead. It's been resized. The new working definition: GEO is the practice of optimizing for citation across the AI engines Google's guide doesn't cover. That's a bigger market than the one we were trying to influence inside Google AI Overviews.

| Citation Surface | What Drives Visibility | Optimization Lever |
|---|---|---|
| Google AI Overviews | Core SEO + ranking signals | Strong SEO, direct-answer structure, technical hygiene |
| ChatGPT | Reddit, Wikipedia, major publications | Community presence, earned PR, encyclopedia-style entities |
| Perplexity | Real-time accuracy, freshness | Fresh content, clear sourcing, original data |
| Claude | User-uploaded knowledge, MCP connectors | Direct distribution, partnership integrations |
| Gemini (standalone) | Google's index + reasoning | Same as Google AI Overviews, plus structured datasets |

The reframe is healthier for everyone. The "GEO is a separate science from SEO" pitch was always partially true and partially marketing. Google just told us which part was which.

How To Adjust Your 2026 Content Operations (5 Specific Moves)

Five concrete changes worth making in the next 30 days.

1. Stop selling LLMs.txt as a Google play. If you publish a generator, scope it to Anthropic and other open AI surfaces. Don't position it as a Google AI Overview lever.

2. Reframe your schema strategy. Keep implementing Article, FAQPage, and VideoObject schema — they earn rich results that feed AI Overviews. Stop selling it as "AI citation insurance."

3. Double down on first-person experience content. This is the most actionable shift. Replace one generic listicle on your roadmap with one experience-led editorial. Track impressions over 90 days. Our pieces with first-person markers consistently outperform.

4. Split your AI optimization strategy by surface. Build a working doc that names what you do for Google AI Overviews versus ChatGPT versus Perplexity versus Claude. Stop using "GEO" as a single bucket. Platform-specific GEO is the actual discipline.

5. Start preparing for agentic search now. Google's guide includes a section on agentic experiences, pointing to Universal Commerce Protocol and the agent-friendly site UX guide on web.dev. Browser agents that visit your site need clean DOM structure, valid accessibility trees, and predictable layouts. This is the next frontier. Most of the category isn't writing about it yet.

The Strategic Bet For The Next 12 Months

Three trends will compound into one wave by mid-2027.

First, AI surfaces fragment. Google AI Overviews, AI Mode, ChatGPT search, Perplexity, Claude search, Gemini, Brave Leo, You.com, Arc Search — each is a separate citation game. The startups winning will not chase all of them. They'll pick the two that drive their pipeline and operate against actual data.

Second, agentic search shifts the optimization target. When an agent reads your site to complete a task — book a demo, compare specs, pull pricing — the optimization unit becomes the page's machine-readability, not its rankability. UCP and similar protocols are early. The teams that get fluent now will compound the lead.

Third, the bar for content quality rises. Google's guide is essentially a warning shot: commodity content will lose visibility, and AI systems are now sophisticated enough to detect it. The startups that win will not be the ones generating the most content. They'll be the ones whose content carries the most expert voice.

The good news is that Averi customers were already operating against this thesis.

The bad news is that anyone running a "publish more, optimize later" workflow has about 12 months before the gap becomes uncloseable.

If your content is already first-person, experience-led, and structured for both readers and AI extraction, you're set up well.

If it isn't, score your existing content against this framework and see where it lands.

The fix isn't more content. It's better content, produced inside a workflow that bakes the quality signals in from the brief stage.

Run your existing pages through Averi's Content Scoring system to see where they land on both SEO and GEO readiness, including the quality signals Google just validated.

FAQs

Did Google really say GEO is the same as SEO?

Yes, with a specific caveat. Google said that optimizing for their generative AI search features is still SEO from their perspective. "AEO" and "GEO" describe work focused on AI search visibility, and Google considers that work part of standard SEO for their surfaces. Other AI engines — ChatGPT, Claude, Perplexity — operate by different rules.

Should I delete my LLMs.txt file?

No need to delete it. It doesn't help Google's AI search features, but it may be useful for other AI surfaces, especially Anthropic's Claude and smaller open AI projects. Keep it if you have one. Just don't pay for tools that position LLMs.txt as a Google AI Overview lever. It isn't.

Does this mean I should stop optimizing for AI Overviews?

The opposite. Google AI Overviews now appear on 48% of queries, and optimizing for them is critical. The shift is in method. Strong SEO fundamentals, direct-answer content, and technical hygiene drive AI Overview appearance — not GEO-specific hacks. Read our AI Overviews guide for the full playbook.

Is structured data still worth implementing?

Yes. Schema doesn't directly drive AI citations, but it does earn rich results in regular Search, which feed AI Overviews indirectly. Article, FAQPage, and VideoObject schema all remain worth the effort. The mental model shift is treating schema as a rich-results play, not an AI-citation play.

What's the difference between Google AI Overviews and ChatGPT optimization?

Google AI Overviews pull from Google's Search index, so ranking well drives most of the citation outcomes. ChatGPT pulls from Reddit, Wikipedia, and major publications, plus its training data. Optimizing for Google AI Overviews is strong SEO. Optimizing for ChatGPT is community presence, earned PR, and entity authority across the web.

What is agentic search and why should I care now?

Agentic search is when an AI agent visits your site on a user's behalf to complete a task — booking a meeting, comparing products, extracting data. Google's guide points to the Universal Commerce Protocol and web.dev's agent-friendly site UX article. Most startups aren't preparing for this yet. Getting fluent in the patterns now creates compounding category authority later.

How do I know if my content is "non-commodity" enough?

Use the swap test. Could a competitor publish the same piece word for word with only their company name changed? If yes, it's commodity content. If your piece names specific tests you ran, specific outcomes you measured, or specific decisions you made, it's non-commodity. The latter wins both in Google AI Overviews and across every other AI engine.
