How to Measure GEO: The AI Citation Metrics Framework

Zach Chmael

Head of Marketing

6 minutes


Methodology first, tools second. The 4-layer KPI framework, manual tracking system, and scoring model for measuring GEO before you buy any software.


TL;DR

📊 4 measurement layers: Visibility (are you being cited?), Traffic (are citations producing visits?), Engagement (are AI visitors engaged?), Business Impact (is it affecting revenue?)

🔍 Manual tracking system: 20–30 queries × 3 platforms × monthly. 45 minutes. Records citation frequency, brand mentions, competitor citations, Share of Voice. Free.

📈 Key KPIs: Citation rate, brand mention rate, AI Share of Voice, AI referral traffic (GA4 custom channel), AI conversion rate, branded search lift

⚙️ GA4 setup required: Custom channel group for AI traffic (chatgpt.com, perplexity.ai, gemini.google.com, etc.). Without this, AI traffic hides in "direct."

📋 Citation Readiness Score: 8-factor weighted scoring model predicts citation probability before publishing. Answer capsules and non-promotional tone weighted 2x.

🛠️ Paid tools optional: Profound, SE Ranking, Frase, Otterly, Siftly. Upgrade from manual when tracking 50+ queries or needing daily frequency.

⚡ Start free with Averi. Content scoring at 55% SEO + 45% GEO catches citation readiness gaps before publishing.

Zach Chmael

CMO, Averi

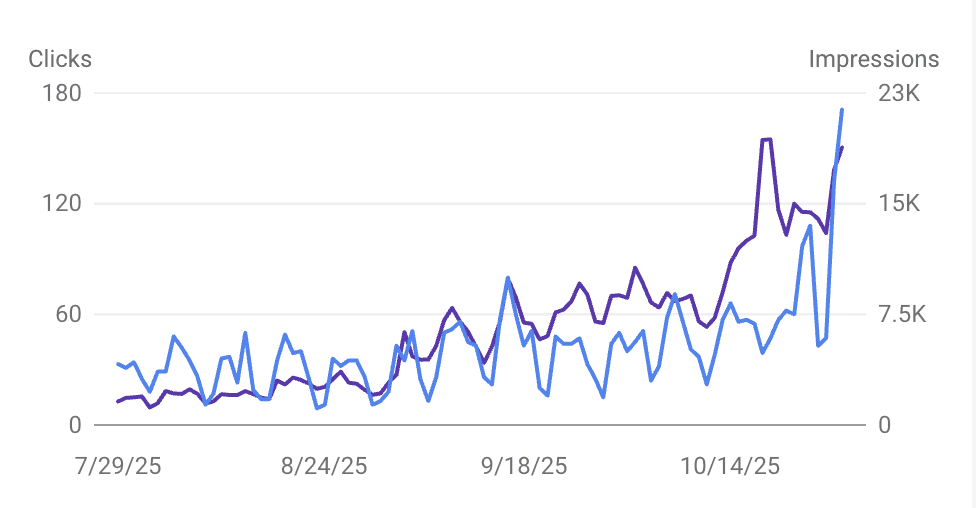

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."

Your content should be working harder.

Averi's content engine builds Google entity authority, drives AI citations, and scales your visibility so you can get more customers.

How to Measure GEO: The AI Citation Metrics Framework

You can't manage what you can't measure.

But measuring GEO is harder than measuring SEO for one simple reason: there's no "AI Search Console."

Google gives you Search Console with exact impressions, clicks, positions, and CTR for every keyword.

AI platforms give you nothing. No dashboard. No analytics. No reporting interface. You have to build the measurement system yourself.

Most GEO measurement guides skip straight to "buy these tools." That's backwards.

Before you evaluate tools, you need a methodology: what to measure, how to score it, and what the numbers mean. Tools are optional. The methodology isn't.

This is the measurement framework.

It works manually (free, 45 minutes/month) and scales with paid tools when you're ready. It covers four layers of GEO metrics, a scoring model for content citation readiness, and the GA4 setup that captures AI referral traffic most analytics configurations miss.

This is part of the Definitive Guide to Generative Engine Optimization (GEO). The pillar covers the full framework.

This piece is the measurement layer.

Why Traditional Metrics Miss GEO Performance

Standard analytics measures clicks and conversions. GEO creates value that never registers as a click.

When ChatGPT answers "What's the best AI content tool for startups?" and cites your page, three things happen:

(1) the user reads your brand name in the answer,

(2) they may or may not click through, and

(3) the citation strengthens your brand's entity recognition for future queries.

Traditional analytics captures only #2, and only if the click has proper attribution.

93% of Google AI Mode sessions end without a click. 60% of all Google searches produce zero clicks. If your measurement system only counts clicks, it can't see the majority of GEO's impact.

The framework below measures GEO across four layers: visibility (are you being cited?), traffic (are citations producing visits?), engagement (are AI visitors engaged?), and business impact (is it affecting revenue?).

The 4-Layer GEO Metrics Framework

Layer 1: Visibility Metrics (Are AI Systems Mentioning You?)

These are the most fundamental GEO metrics. They answer: does your brand exist in AI-generated answers?

Citation frequency. How often your pages appear as cited sources in AI answers across target queries. This is the core GEO metric. Measure it by running a consistent set of queries across AI platforms and recording whether your content is cited.

Brand mention rate. How often your brand name appears in AI answers, even without a direct citation link. ChatGPT mentions brands 3.2x more often than it provides clickable citations. A mention without a link still builds awareness and entity recognition.

AI Share of Voice. Your brand mentions as a percentage of total brand mentions across your tracked queries. Formula: (Your Brand Mentions ÷ Total Brand Mentions in Category) × 100. If you track 20 queries and your brand appears in 6 answers while competitors appear in a combined 14, your Share of Voice is 30%.

Citation position. Where in the AI answer your citation appears. First citation = highest value (shapes the answer). Fifth citation = supporting reference. Track not just whether you're cited but where.

Layer 2: Traffic Metrics (Are Citations Producing Visits?)

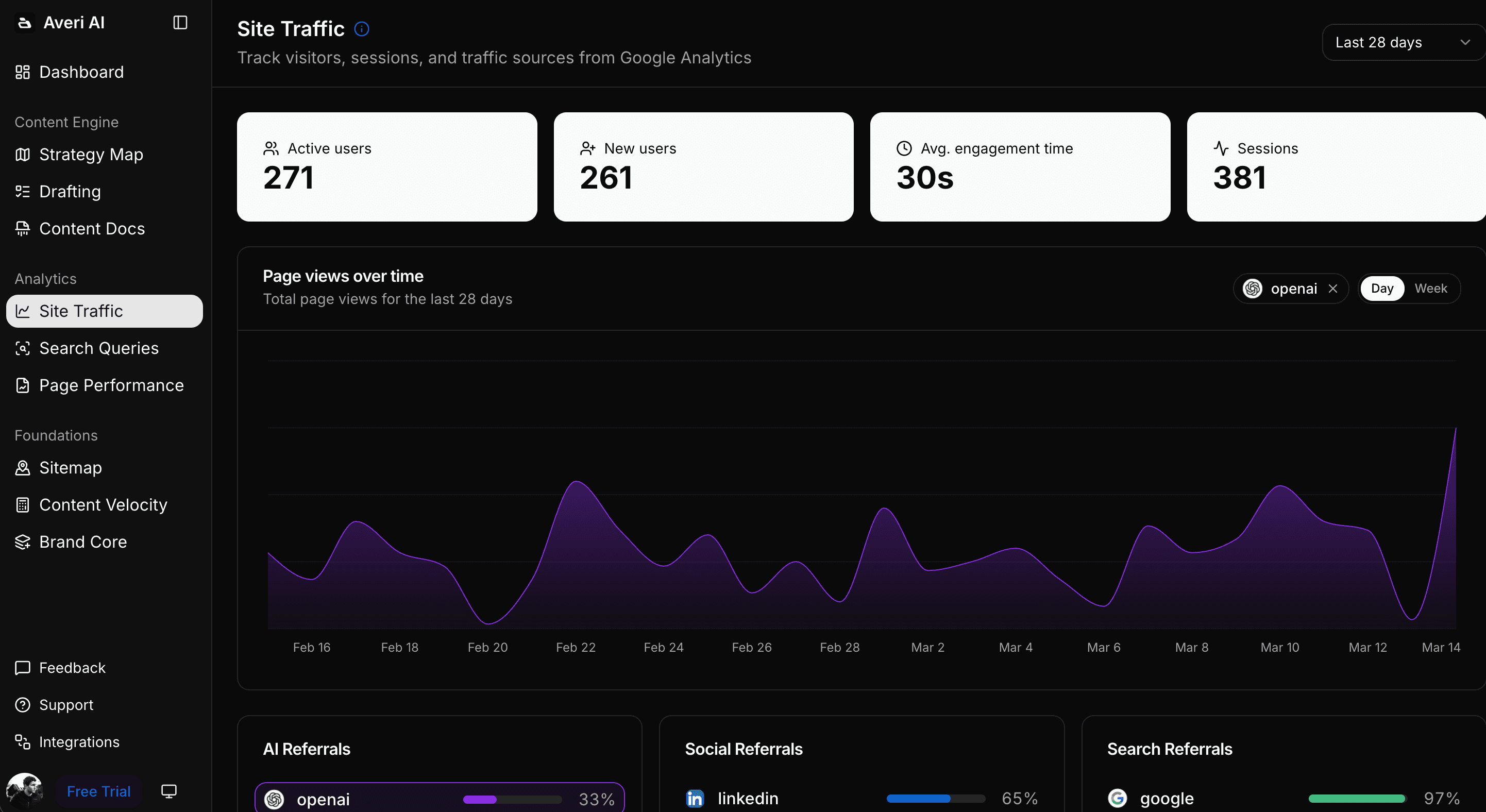

AI referral traffic. Visits from AI platforms tracked in GA4. This requires custom setup (covered below) because most GA4 configurations misattribute AI traffic as "direct."

AI traffic growth rate. Month-over-month change in AI referral sessions. AI referrals increased approximately 1% month over month across all industries through late 2025. Your growth rate should meet or exceed this baseline.

Pages receiving AI traffic. Which specific pages earn AI referral visits. This reveals which content structures and topics generate citations that produce clicks.
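The growth-rate metric above is simple arithmetic. A minimal sketch, assuming you export monthly AI referral session counts from GA4 yourself (the session numbers below are hypothetical):

```python
def mom_growth(previous_sessions: int, current_sessions: int) -> float:
    """Return month-over-month growth as a percentage."""
    if previous_sessions <= 0:
        raise ValueError("previous_sessions must be positive")
    return (current_sessions - previous_sessions) / previous_sessions * 100


# Hypothetical AI referral sessions for four consecutive months.
sessions = [120, 126, 131, 140]

# Pair each month with the next one and compute the change between them.
growth = [round(mom_growth(prev, cur), 1) for prev, cur in zip(sessions, sessions[1:])]
print(growth)
```

Compare each value against the baseline you care about rather than reading a single month in isolation.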

Layer 3: Engagement Metrics (Are AI Visitors Engaged?)

AI visitor engagement rate. GA4 engagement rate filtered for AI referral traffic. Compare against your overall site engagement rate. AI-driven visitors spend 68% more time on websites than traditional organic visitors, so AI engagement should outperform your baseline.

AI visitor pages per session. Do AI-referred visitors explore beyond their landing page? Higher pages per session indicates your content ecosystem is working: AI brings them in, internal links keep them moving.

AI visitor conversion rate. The percentage of AI-referred visitors who complete your target action (signup, demo request, email subscribe). AI search traffic converts at 14.2% compared to Google's 2.8%. If your AI conversion rate is below 5%, something in the landing experience or CTA is underperforming the channel's potential.

Layer 4: Business Impact Metrics (Is GEO Affecting Revenue?)

AI-attributed pipeline. Revenue from deals where the customer's journey included an AI referral touchpoint. Track through CRM tagging or self-reported attribution.

Branded search lift. Increase in branded search volume (GSC) correlated with periods of high AI citation frequency. When AI mentions your brand, some users search your name later. Branded search growth is a proxy for AI-driven awareness.

Content-influenced revenue from AI. Revenue from customers who consumed AI-referred content during their buying journey. This requires multi-touch attribution or, more practically, a "How did you hear about us?" survey question.

The Manual Tracking System (Free, 45 Minutes/Month)

You don't need paid tools to measure GEO. This manual system provides actionable data for startups.

Step 1: Build Your Prompt Set (One-Time, 30 Minutes)

Create a list of 20–30 queries that represent how your ICP searches for solutions in your category. Three types:

Category queries (10): "Best [your category] tools," "What is [your category]," "Top [your category] for [your ICP]." Examples: "Best AI content tools for startups," "What is a content engine."

Problem queries (10): "[Problem you solve]," "How to [action your product enables]." Examples: "How to do content marketing as a solo founder," "How to get more organic traffic for my startup."

Competitor queries (5–10): "[Competitor] alternatives," "[Competitor] vs [your brand]," "Is [competitor] good for [use case]." Examples: "Jasper AI alternatives for startups," "HubSpot content marketing for seed stage."

Store these in a spreadsheet. This prompt set stays consistent month to month so you can track trends.
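If you would rather generate the spreadsheet than type it, the templates above can be filled in programmatically. This is a rough sketch, not a required step: `build_prompt_set`, `to_csv`, and the brand name `ExampleCo` are hypothetical names introduced for illustration, and only a subset of the templates is covered.

```python
import csv
import io


def build_prompt_set(category, icp, brand, actions, competitors):
    """Return (query_type, query) rows built from the templates in Step 1."""
    rows = [
        ("category", f"Best {category} tools"),
        ("category", f"What is {category}"),
        ("category", f"Top {category} for {icp}"),
    ]
    rows += [("problem", f"How to {action}") for action in actions]
    for competitor in competitors:
        rows.append(("competitor", f"{competitor} alternatives"))
        rows.append(("competitor", f"{competitor} vs {brand}"))
    return rows


def to_csv(rows):
    """Serialize the prompt set as CSV text you can paste into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["type", "query"])
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical inputs; replace with your own category, ICP, and competitors.
prompts = build_prompt_set(
    category="AI content",
    icp="startups",
    brand="ExampleCo",
    actions=["do content marketing as a solo founder"],
    competitors=["Jasper AI"],
)
print(to_csv(prompts))
```

Generate the list once and then freeze it, since the point of the prompt set is that it stays identical from month to month.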

Step 2: Run the Monthly Citation Audit (45 Minutes)

For each prompt in your set:

Enter the query in ChatGPT (with web search enabled)

Enter the same query in Perplexity

Enter the same query in Google (check for AI Overviews / AI Mode)

For each response, record in your spreadsheet:

| Column | What to Record |
|---|---|
| Query | The prompt you entered |
| Platform | ChatGPT / Perplexity / Google AI |
| Your Brand Cited (Y/N) | Did the response include a link to your content? |
| Your Brand Mentioned (Y/N) | Did the response mention your brand name without a link? |
| Citation Position | 1st, 2nd, 3rd... or N/A |
| Competitors Cited | Which competitor brands appeared? |
| Your URL Cited | Which specific page was cited (if applicable)? |
| Date | When you ran this audit |

30 queries × 3 platforms = 90 checks. At 30 seconds per check, this takes about 45 minutes.
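One way to keep the audit consistent is to treat each check as a fixed record with the same columns as the table above. The sketch below assumes a hypothetical `AuditCheck` record and also reproduces the workload estimate; the field names are illustrative, not a required schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Optional


@dataclass
class AuditCheck:
    """One query run on one platform (one row of the audit spreadsheet)."""
    query: str
    platform: str
    brand_cited: bool
    brand_mentioned: bool
    citation_position: Optional[int]  # None when not cited
    competitors_cited: str
    url_cited: str
    audit_date: str


def audit_minutes(queries, platforms, seconds_per_check=30):
    """Estimate audit time in minutes: checks x seconds per check / 60."""
    return queries * platforms * seconds_per_check / 60


def checks_to_csv(checks):
    """Write audit records as CSV text with one column per field."""
    buffer = io.StringIO()
    names = [f.name for f in fields(AuditCheck)]
    writer = csv.DictWriter(buffer, fieldnames=names, lineterminator="\n")
    writer.writeheader()
    for check in checks:
        writer.writerow(asdict(check))
    return buffer.getvalue()


# Hypothetical example row.
example = AuditCheck(
    query="Best AI content tools for startups",
    platform="Perplexity",
    brand_cited=True,
    brand_mentioned=False,
    citation_position=2,
    competitors_cited="Jasper AI",
    url_cited="https://example.com/blog/ai-content-tools",
    audit_date="2026-04-01",
)
print(audit_minutes(30, 3))
print(checks_to_csv([example]))
```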

Step 3: Calculate Monthly KPIs (10 Minutes)

From your audit data, calculate:

Citation Rate: (Checks where you were cited ÷ Total checks) × 100. Example: Cited in 12 of 90 checks = 13.3% citation rate.

Mention Rate: (Checks where you were cited OR mentioned ÷ Total checks) × 100. Example: Cited or mentioned in 18 of 90 checks = 20% mention rate.

Share of Voice: (Your mentions ÷ All brand mentions across your queries) × 100

Platform breakdown: What percentage of your citations come from ChatGPT vs. Perplexity vs. Google AI?

Competitor gap: Which competitors are cited more frequently than you? On which queries?
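The KPI formulas above can be computed directly from the audit rows. The following is a sketch under a few assumptions: each check is a plain dictionary with hypothetical keys (`platform`, `cited`, `mentioned`, `competitors`), and "all brand mentions" is taken to mean your own appearances plus every competitor appearance across the same checks.

```python
from collections import Counter


def pct(part, whole):
    """Percentage rounded to one decimal; 0.0 when the denominator is zero."""
    return round(part / whole * 100, 1) if whole else 0.0


def monthly_kpis(checks):
    total = len(checks)
    cited = [c for c in checks if c["cited"]]
    # "Visible" means cited with a link OR mentioned by name.
    visible = [c for c in checks if c["cited"] or c["mentioned"]]
    competitor_mentions = Counter(name for c in checks for name in c["competitors"])
    all_mentions = len(visible) + sum(competitor_mentions.values())
    by_platform = Counter(c["platform"] for c in cited)
    return {
        "citation_rate": pct(len(cited), total),
        "mention_rate": pct(len(visible), total),
        "share_of_voice": pct(len(visible), all_mentions),
        "platform_breakdown": {p: pct(n, len(cited)) for p, n in by_platform.items()},
        "competitor_mentions": dict(competitor_mentions),
    }


# Hypothetical audit data: six checks, competitors "A" and "B".
checks = [
    {"platform": "ChatGPT", "cited": True, "mentioned": False, "competitors": ["A"]},
    {"platform": "ChatGPT", "cited": False, "mentioned": True, "competitors": ["A", "B"]},
    {"platform": "Perplexity", "cited": True, "mentioned": False, "competitors": []},
    {"platform": "Perplexity", "cited": False, "mentioned": False, "competitors": ["B"]},
    {"platform": "Google AI", "cited": False, "mentioned": False, "competitors": ["A"]},
    {"platform": "Google AI", "cited": False, "mentioned": False, "competitors": []},
]
kpis = monthly_kpis(checks)
print(kpis)
```

The `competitor_mentions` counts double as a starting point for the competitor gap: any competitor with more appearances than you is worth a closer look, query by query.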

Step 4: Track Trends Over Time

After 3 months of consistent audits, plot:

Citation rate by month (is it growing?)

Mention rate by month

Share of Voice by month

Citations by platform (is one growing faster?)

Three consecutive months of growing citation rate = the GEO strategy is working.

Flat or declining citation rate after 3+ months of content optimization = something needs adjusting (content structure, topic targeting, or brand authority).
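The trend rule can be checked mechanically once you have a few months of data. This sketch assumes "three consecutive months of growing citation rate" means the last three monthly readings are each higher than the one before; if you read it as three month-over-month increases, pass `months=4`. The function name `is_growing` is hypothetical.

```python
def is_growing(monthly_rates, months=3):
    """True if the last `months` readings are strictly increasing."""
    if len(monthly_rates) < months:
        return False  # not enough history to judge
    recent = monthly_rates[-months:]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


# Hypothetical citation rates (%) from three monthly audits.
print(is_growing([8.9, 11.1, 13.3]))
print(is_growing([13.3, 13.3, 12.2]))
```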

The GA4 Setup for AI Traffic Tracking

Most GA4 configurations misattribute AI referral traffic as "direct" or "organic." Here's how to fix that.

Create a Custom AI Traffic Channel Group

In GA4:

Go to Admin → Data Display → Channel Groups

Click "Create new channel group" or edit your default

Add a new channel called "AI Search"

Set conditions to match these referral sources:

| Source/Medium Contains | Platform |
|---|---|
| chatgpt.com | ChatGPT |
| chat.openai.com | ChatGPT |
| perplexity.ai | Perplexity |
| gemini.google.com | Gemini |
| copilot.microsoft.com | Copilot |
| claude.ai | Claude |
| you.com | You.com |

Save and apply

This creates a dedicated "AI Search" segment in all GA4 reports. You can now compare AI Search traffic against Organic Search, Direct, and other channels.
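The same source list is useful outside GA4, for example when classifying referrers in an exported report or a server log. A minimal sketch, assuming a hypothetical helper `classify_source` that accepts either a full referrer URL or a bare source value:

```python
from typing import Optional
from urllib.parse import urlparse

# Domains taken from the channel group table above.
AI_SOURCES = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
    "you.com": "You.com",
}


def classify_source(source: str) -> Optional[str]:
    """Map a referrer URL or bare source value to an AI platform name."""
    host = urlparse(source).hostname if "://" in source else source
    host = (host or "").lower()
    for domain, platform in AI_SOURCES.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return platform
    return None


print(classify_source("https://www.perplexity.ai/search?q=geo"))
print(classify_source("chatgpt.com"))
print(classify_source("https://www.google.com/"))
```

The sketch matches on the domain or its subdomains rather than on a plain substring. A substring rule for a short domain such as you.com would also match unrelated hosts like thank-you.com, so it is worth spot-checking what a "contains" condition actually catches.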

Set Up UTM Tracking for AI Referrals

OpenAI added utm_source=chatgpt.com parameters to ChatGPT citation links in mid-2025. Not all AI platforms do this yet. Because the GA4 channel group above matches on session source, it catches both UTM-tagged visits and visits identified only by their referrer.

Track AI Bot Crawl Activity

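GA4 is the wrong tool for this: AI crawlers generally don't execute the JavaScript tag GA4 relies on, and GA4 automatically excludes known bot traffic. Use your server or CDN access logs instead, filtering requests by AI bot user-agent (GPTBot, PerplexityBot, ClaudeBot). While bot traffic isn't "real" visitors, monitoring crawl frequency indicates whether AI platforms are actively reading your content. Increasing crawl frequency typically precedes increasing citations.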
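Crawl frequency can be counted from web server access logs. The sketch below assumes logs in the common "combined" format (as written by default Apache or Nginx configurations) and uses made-up sample lines; the regex and the function name `count_ai_bot_hits` are illustrative, so adjust them to your own log layout.

```python
import re
from collections import Counter

AI_BOTS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Combined log format: ... [day:time zone] "request" status bytes "referrer" "user-agent"
LOG_LINE = re.compile(
    r'\[(?P<day>[^:]+):[^\]]+\] "(?P<request>[^"]*)" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def count_ai_bot_hits(lines):
    """Count requests per (day, bot) for user-agents containing a known bot token."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that don't look like combined-format entries
        for bot in AI_BOTS:
            if bot in match.group("ua"):
                hits[(match.group("day"), bot)] += 1
                break
    return hits


# Hypothetical sample log lines.
sample = [
    '203.0.113.5 - - [12/Mar/2026:10:15:32 +0000] "GET /blog/geo HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.5 - - [12/Mar/2026:10:16:02 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [12/Mar/2026:11:00:00 +0000] "GET /blog/geo HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [12/Mar/2026:11:05:00 +0000] "GET / HTTP/1.1" 200 1024 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0)"',
]
hits = count_ai_bot_hits(sample)
print(dict(hits))
```

User-agent strings can be spoofed, so treat these counts as a directional signal rather than an exact measure.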

The Attribution Gap

Even with proper GA4 setup, some AI-influenced visits won't be attributed correctly. A user might read your brand name in a ChatGPT answer, Google your brand name later, and arrive via branded search. GA4 credits the branded search. The AI citation gets no credit.

The workaround: add a "How did you first hear about us?" field to your signup or contact form. Include "AI assistant (ChatGPT, Perplexity, etc.)" as an option. Self-reported attribution catches the dark funnel that analytics misses. At low volume, self-reported data is more reliable than algorithmic attribution for GEO.

The Content Citation Readiness Score

Before content is published, score its citation readiness. This predicts whether it's likely to earn citations based on the structural factors that research shows matter.

| Factor | Score 0 | Score 1 | Score 2 | Weight |
|---|---|---|---|---|
| Answer capsule (40–60 words per section) | None | Some sections | Every section | 2x |
| Statistics density | Under 1 per 300 words | 1 per 200–300 | 1 per 150–200 | 2x |
| FAQ section | None | 3–4 questions | 5–7 questions | 1.5x |
| Schema markup (Article + FAQ) | None | Partial | Complete | 1x |
| Source citations (hyperlinked) | Under 5 | 5–15 | 15+ | 1.5x |
| Content freshness | Over 6 months | 3–6 months | Under 3 months | 1x |
| Extractable blocks per 500 words | 0 | 1–2 | 3+ | 1.5x |
| Non-promotional tone | Promotional | Mixed | Informational | 2x |

Scoring: Multiply each factor's score (0, 1, or 2) by its weight. Sum all weighted scores.

Maximum possible score: 25 (every factor at 2 × its weight)

Interpretation:

20–25: High citation readiness. Publish with confidence.

15–19: Moderate. Strengthen the 0-score factors before publishing.

10–14: Low. Needs structural revision.

Under 10: Not citation-ready. Major rewrite needed.

The weights reflect research findings. Answer capsules and non-promotional tone are weighted 2x because answer capsules are the strongest commonality among cited pages and promotional tone shows a -26.19% correlation with citation.

Statistics density is also 2x because adding statistics improves AI visibility by 41%.
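The scoring model is a weighted sum, so it is easy to sketch in code. The weights and bands below come from the table and interpretation list above; the factor keys and function names are hypothetical, and half-point totals such as 19.5 are assumed to fall into the lower band.

```python
# Weights from the Citation Readiness Score table.
WEIGHTS = {
    "answer_capsule": 2.0,
    "statistics_density": 2.0,
    "faq_section": 1.5,
    "schema_markup": 1.0,
    "source_citations": 1.5,
    "content_freshness": 1.0,
    "extractable_blocks": 1.5,
    "non_promotional_tone": 2.0,
}


def readiness_score(scores):
    """Sum each factor's score (0, 1, or 2) multiplied by its weight."""
    for factor, value in scores.items():
        if factor not in WEIGHTS:
            raise KeyError(f"unknown factor: {factor}")
        if value not in (0, 1, 2):
            raise ValueError(f"{factor} must be scored 0, 1, or 2")
    return sum(WEIGHTS[factor] * scores.get(factor, 0) for factor in WEIGHTS)


def readiness_band(total):
    """Map a total score to the interpretation bands (assumption: x.5 rounds down)."""
    if total >= 20:
        return "High"
    if total >= 15:
        return "Moderate"
    if total >= 10:
        return "Low"
    return "Not citation-ready"


# Hypothetical draft: strong structure, middling statistics and sourcing.
draft = {
    "answer_capsule": 2,
    "statistics_density": 1,
    "faq_section": 2,
    "schema_markup": 1,
    "source_citations": 1,
    "content_freshness": 2,
    "extractable_blocks": 1,
    "non_promotional_tone": 2,
}
total = readiness_score(draft)
print(total, readiness_band(total))
```

Factors missing from the input are treated as 0, which keeps a partially scored draft from looking better than it is.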

This is the manual version of what Averi's content scoring system automates at 55% SEO + 45% GEO.

When to Add Paid Tools

The manual system works for startups tracking 20–30 queries across 3 platforms. When you outgrow it, paid tools automate the process:

What paid GEO tools add:

Automated daily/weekly prompt auditing across 5+ platforms (vs. your monthly manual audit)

Historical trend data with visualization

Competitive share of voice tracking at scale

Sentiment analysis (is AI saying positive or negative things about your brand?)

Alert systems when citation patterns change

Tools in the market (as of April 2026):

Profound: Citation tracking across ChatGPT and other platforms. Built specifically for AI visibility.

SE Ranking: Established SEO suite with integrated AI citation tracking module.

Frase: Content optimization with AI visibility auditing across 8 platforms.

Otterly: AI search monitoring for brand visibility.

Siftly: GEO-specific platform with cross-platform citation tracking and competitive benchmarking.

When to upgrade from manual to paid:

You're tracking 50+ queries and the manual audit exceeds 2 hours

You need weekly or daily tracking frequency (not just monthly)

You need competitive intelligence across 5+ competitors

Leadership requires formatted dashboards rather than spreadsheet reports

For most seed-to-Series-A startups, the manual system is sufficient for the first 6–12 months of GEO implementation. The data quality is the same. The frequency and convenience are the trade-off.

Reporting GEO to Stakeholders

The Monthly GEO Report (5 Minutes to Produce)

For co-founders, investors, or board updates:

Section 1: AI Visibility Summary

Citation rate: X% (↑/↓ from last month)

Brand mention rate: X%

AI Share of Voice: X% vs. [top competitor] at Y%

Section 2: AI Traffic

AI referral sessions: X (↑/↓ from last month)

AI conversion rate: X%

AI-sourced signups/leads: X

Section 3: Competitive Position

Queries where we're cited and [competitor] isn't: X

Queries where [competitor] is cited and we're not: X

Net citation advantage: +/- X queries

Section 4: Actions This Month

Published X new GEO-optimized pieces

Refreshed X existing pieces for citation freshness

Added [platform] presence for brand authority

This report takes 5 minutes to produce from your monthly audit spreadsheet. It translates GEO into language that non-marketing stakeholders understand: visibility, traffic, conversions, competitive position.
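The competitive position numbers in Section 3 fall out of two sets: the queries where you were cited and the queries where a given competitor was cited. A small sketch, with hypothetical query labels and a hypothetical function name:

```python
def net_citation_advantage(our_queries, competitor_queries):
    """Compare the sets of queries where each brand was cited."""
    ours, theirs = set(our_queries), set(competitor_queries)
    only_us = ours - theirs      # we're cited, the competitor isn't
    only_them = theirs - ours    # the competitor is cited, we aren't
    return {
        "only_us": len(only_us),
        "only_them": len(only_them),
        "net": len(only_us) - len(only_them),
    }


# Hypothetical audit result: query identifiers from your prompt set.
position = net_citation_advantage(
    our_queries=["q1", "q2", "q3"],
    competitor_queries=["q2", "q4"],
)
print(position)
```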

Connecting GEO to Revenue

The strongest argument for GEO investment: AI visitors convert at 14.2% versus Google's 2.8%.

Frame it as: "100 AI referral visitors produce the same conversion volume as 500 Google organic visitors. Every citation that produces a click is 5x more valuable per session than a traditional organic visit."

When AI referral traffic is small (under 100 visits/month), the conversion data is too sparse for confident conclusions.

Use organic traffic value instead: multiply AI referral visits by the average CPC of the keywords those visitors would have searched. For example, 200 visits × $3 average CPC supports a statement like "Our AI citations generated 200 visits worth $600 in equivalent paid search value." This framing works until the conversion sample size becomes meaningful.
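Both framings in this section are one-line calculations. The sketch below uses the conversion rates quoted in this article (14.2% and 2.8%) as defaults and a hypothetical $3 average CPC; swap in your own measured rates once you have them.

```python
def equivalent_organic_visits(ai_visits, ai_rate=0.142, organic_rate=0.028):
    """Organic visits needed to match the conversions from `ai_visits`."""
    return ai_visits * ai_rate / organic_rate


def paid_search_value(ai_visits, average_cpc):
    """Equivalent paid search value: visits multiplied by average CPC."""
    return ai_visits * average_cpc


# 100 AI visits at 14.2% convert about as often as ~500 organic visits at 2.8%.
print(round(equivalent_organic_visits(100)))

# Hypothetical low-volume month: 200 AI visits at a $3 average CPC.
print(paid_search_value(200, 3.0))
```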

How Averi Approaches GEO Measurement

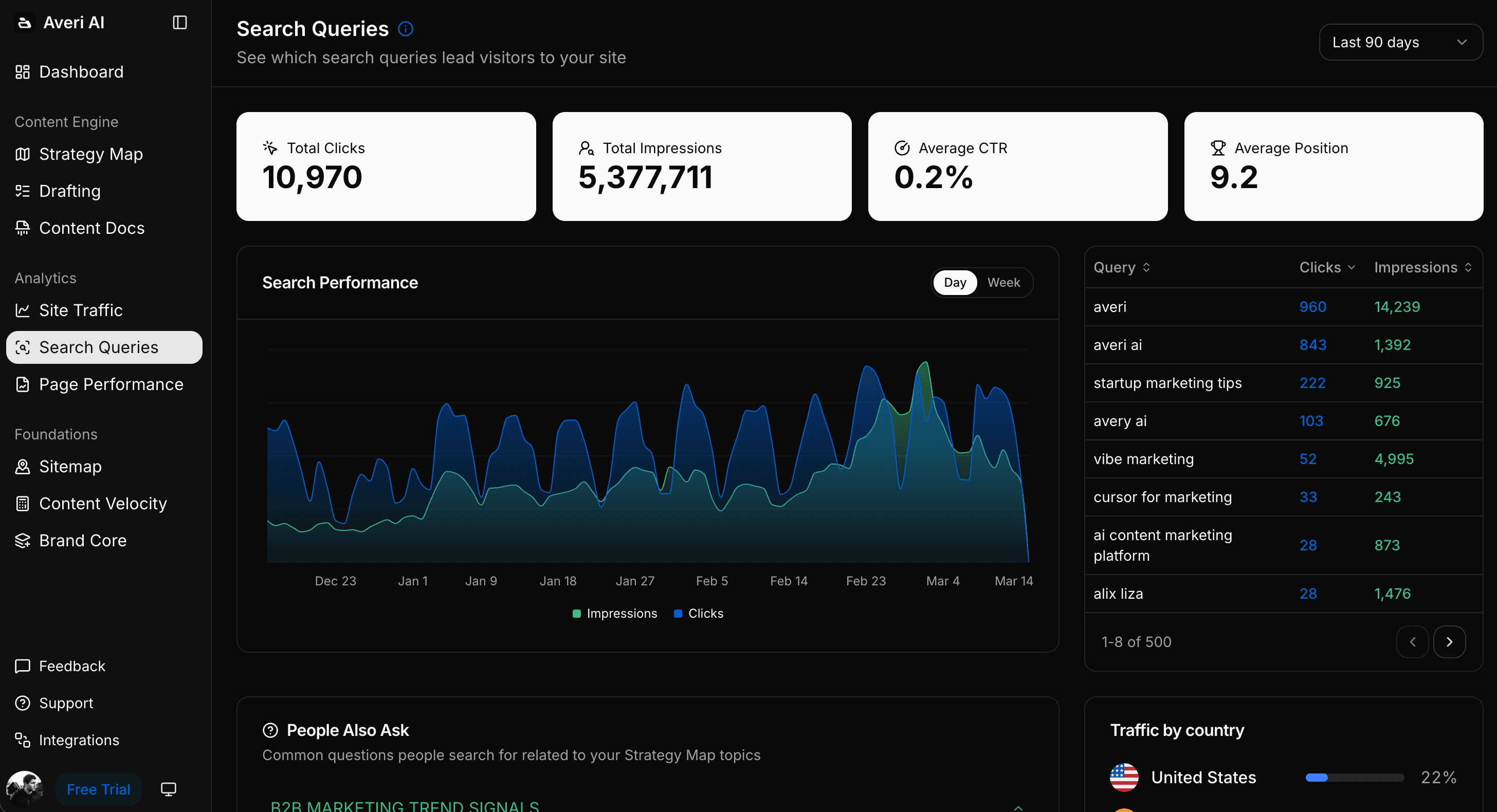

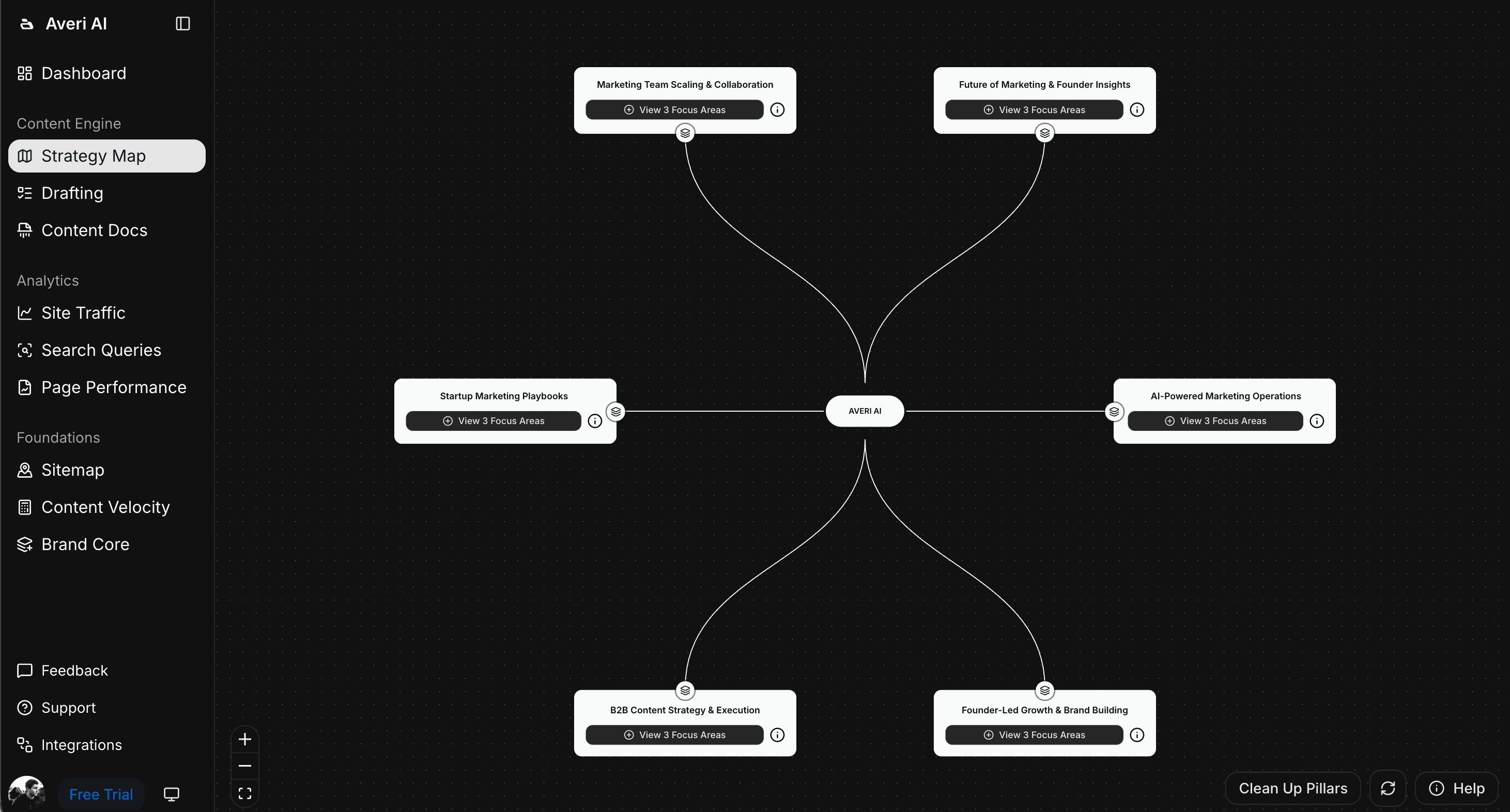

Averi's analytics integration consolidates GEO measurement with content production in one system.

What the integration provides:

Performance tracking across Google Analytics and GSC, surfacing which pages earn organic impressions, clicks, and conversions, filterable by LLM referrer

Content scoring at 55% SEO + 45% GEO before publishing, acting as a pre-publish citation readiness check

Freshness monitoring flagging pages approaching the 90-day citation decay window for refresh

Topic cluster tracking showing which clusters produce the strongest ranking and citation performance

The manual framework in this guide works without Averi. Averi makes it faster by connecting the measurement to the content production that drives the numbers.

Start a free 14-day trial. No credit card. See your content's citation readiness score before your first piece publishes.

Related Resources

The Definitive Guide to Generative Engine Optimization (GEO)

GEO Metrics That Matter: How to Track AI Citations (+ Free Tracking Dashboard)

Content ROI Calculator: How to Measure What's Actually Working in 2026

Beyond Google: How to Get Your Startup Cited by ChatGPT, Perplexity, and AI Search

ChatGPT vs. Perplexity vs. Google AI Mode: The B2B SaaS Citation Benchmarks Report

The GEO Playbook 2026: Getting Cited by LLMs, Not Just Ranked by Google

FAQs

How do you measure GEO success?

Measure across four layers: visibility (citation frequency, brand mention rate, AI Share of Voice), traffic (AI referral sessions in GA4), engagement (AI visitor conversion rate, pages per session), and business impact (AI-attributed pipeline, branded search lift). The core metric is citation frequency: run 20–30 target queries across ChatGPT, Perplexity, and Google AI Overviews monthly. Record whether your brand is cited, mentioned, or absent. AI search traffic converts at 14.2% versus Google's 2.8%, making even small volumes high-value.

How do I track AI citations manually?

Build a prompt set of 20–30 queries your ICP searches. Run each across ChatGPT (web search enabled), Perplexity, and Google monthly. For each, record: cited (Y/N), mentioned (Y/N), citation position, competitors cited, and your URL cited. Calculate citation rate (citations ÷ total checks × 100) and Share of Voice (your mentions ÷ all brand mentions × 100). Takes 45 minutes monthly. After 3 months, trends emerge showing whether your GEO strategy is working.

How do I set up GA4 to track AI referral traffic?

Create a custom channel group in GA4 (Admin → Data Display → Channel Groups). Add a channel called "AI Search" with conditions matching these referral sources: chatgpt.com, chat.openai.com, perplexity.ai, gemini.google.com, copilot.microsoft.com, claude.ai. Without this setup, AI traffic hides in your "direct" or "unassigned" channel. OpenAI added UTM parameters to ChatGPT citation links in mid-2025, but not all platforms do this. The custom channel group catches both UTM-tagged and referrer-based AI traffic.

What GEO tools should I use?

Start with the free manual system (45 minutes/month). Upgrade when you track 50+ queries or need daily/weekly frequency. Current options: Profound (ChatGPT citation tracking), SE Ranking (SEO suite with AI tracking module), Frase (content optimization + AI visibility auditing across 8 platforms), Otterly (AI search monitoring), Siftly (GEO-specific cross-platform tracking). Evaluate based on: multi-platform coverage, share of voice tracking, competitive analysis, and whether the tool connects visibility data to on-site analytics.

What is a good citation rate?

Benchmarks are still forming. For seed-stage startups in their first 6 months of GEO: any citation at all is a positive signal. By month 6–12: citation in 10–20% of your tracked queries is strong performance. Beyond month 12: 20–30%+ puts you in a competitive position for your category. More important than the absolute rate is the trend: three consecutive months of growing citation rate confirms the strategy is working. A declining rate after 3+ months of optimization means adjusting content structure, topic targeting, or brand authority building.

How do I measure GEO ROI?

At small traffic volumes: calculate organic traffic value. Multiply AI referral visits by the average CPC of the keywords driving those visits. "200 AI referral visits × $3 average CPC = $600 in equivalent paid search value." At larger volumes: track AI-attributed conversions and revenue. AI visitors convert at 14.2% versus Google's 2.8%, roughly 5x higher. Frame it as: "100 AI visits produce the same conversions as 500 organic visits." Add self-reported attribution ("How did you hear about us?") to capture the dark funnel that analytics misses.

How often should I run a citation audit?

Monthly for manual tracking (45 minutes per audit). This frequency captures meaningful trends while being sustainable for founders managing multiple priorities. If you upgrade to paid tools, weekly tracking provides faster signal on content changes. Daily tracking is available from most paid platforms but only necessary for companies with active GEO campaigns where rapid iteration matters. After publishing a new piece or refreshing an existing one, run an ad-hoc check 2–3 weeks later to see if the specific piece earned citations. This supplements your regular monthly audit.