GPT-5.5 Cites Brand Sites 10 Points Less Than GPT-5.4. Here's What That Actually Means for Your Marketing.

8 minutes

TL;DR

📉 The headline data (Writesonic, April 27, 2026): GPT-5.5 cites brand sites 47% of the time, down 10 points from GPT-5.4's 57%. The mechanism is site: operator usage in fan-out queries collapsing from 40.5% to 12.6%, a 70% drop in brand-domain-targeted searches between versions

🔄 The longer arc: GPT-5.3 cited brands 8% of the time. GPT-5.4 jumped to 56-57%. GPT-5.5 pulled back to 47%. Model-by-model citation behavior is volatile in ways that make any single playbook structurally risky

🎯 What's still true: third-party authority signals (G2, Capterra, Reddit, YouTube) carry the same weight they did before, possibly more. The brands that built third-party presence in 2025 are the brands that still get cited when the model temporarily stops site:-querying their domain

🚨 What changed for content engines specifically: the citation surface is splitting. Brand domain citations dropped 10pp. Third-party citations expanded to fill the gap. Teams optimizing only for brand-domain citations are now optimizing for ~47% of the citation pool instead of ~57%

⚙️ The 3 engine adjustments we're making in 72 hours: (1) reweighting third-party citation building back to 50%+ of GEO effort, (2) tightening the schema and FAQ structure on existing pillar pieces so non-site: citations are easier to extract, (3) accelerating the Reddit/G2/YouTube production cadence that supports brand visibility when site: queries don't trigger

📊 The bigger signal: model-version volatility is now the defining feature of AI citation strategy. Single-version optimization is structurally risky. Multi-platform, multi-format, multi-source content architecture is the only resilient response

Zach Chmael

CMO, Averi

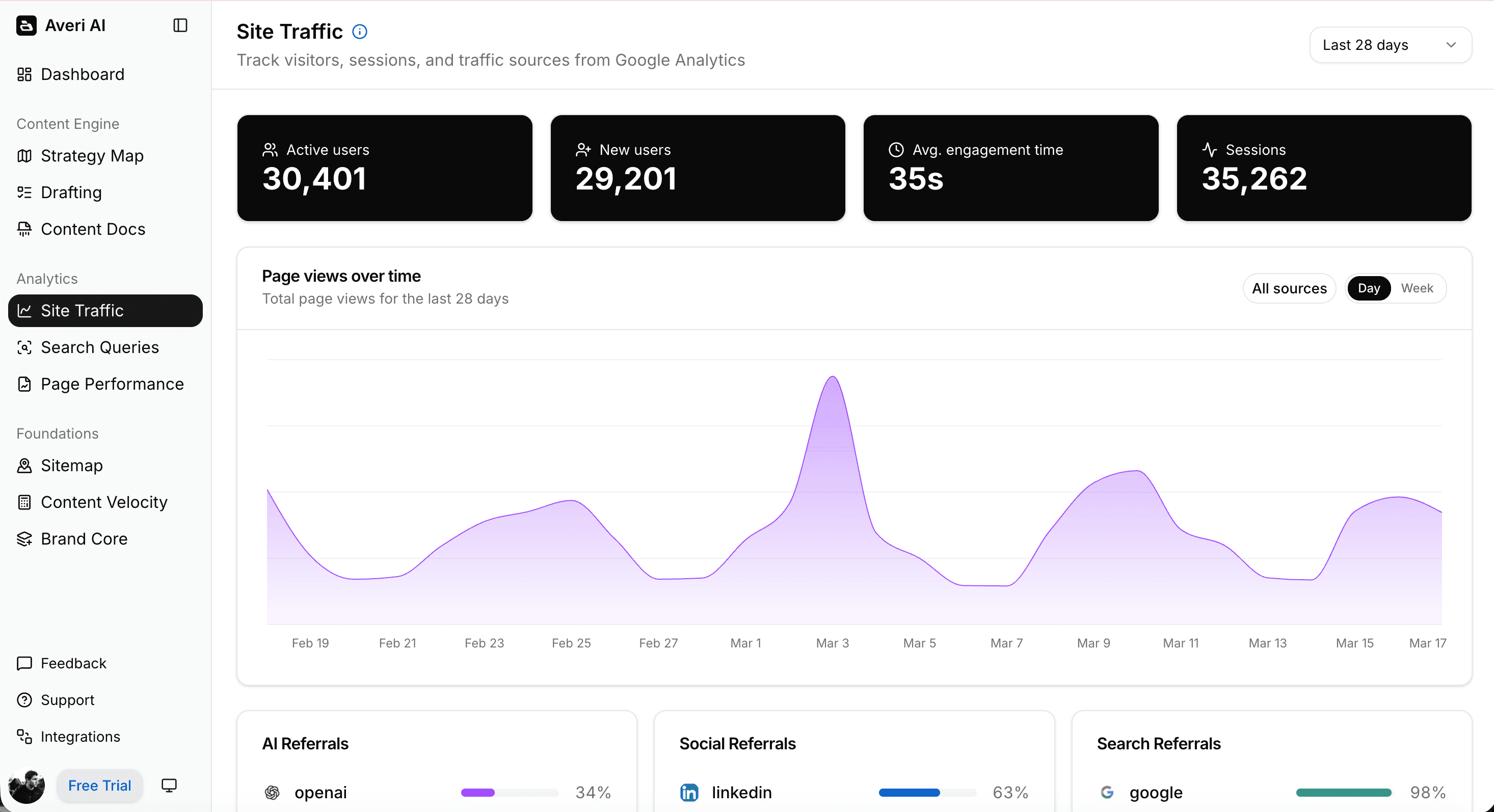

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."

Your content should be working harder.

Averi's content engine builds Google entity authority, drives AI citations, and scales your visibility so you can get more customers.


On April 27, Writesonic published a study that should be reshaping every B2B SaaS GEO program this week, and is somehow still getting almost no airtime outside the GEO-tracking bubble.

The headline finding… GPT-5.5 cites brand sites 47% of the time. GPT-5.4 did 57%.

A 10-percentage-point drop in first-party citation rate, measured across 150 conversations (50 prompts × 3 models), between two model versions launched roughly two months apart.

The mechanism isn't subtle.

GPT-5.4 used the site: operator on 40.5% of its fan-out queries. GPT-5.5 cut that to 12.6%.

Roughly seven in ten site: queries (the queries that pull citations directly from the brand domain a buyer just asked about) vanished between versions. The model didn't stop searching the web (search behavior is still near-universal across both versions). It stopped specifically searching the asked-about brand's own domain.

For B2B SaaS teams that built their entire GEO playbook around the GPT-5.4 finding from March (where brand citations jumped from 8% on GPT-5.3 to 56% on GPT-5.4, confirmed by Search Engine Journal), the GPT-5.5 pullback is a directional reversal.

Not a complete one — 47% is still dramatically higher than the 8% baseline on GPT-5.3 default. But the model behavior is unstable.

The question isn't "what's the new optimization rule."

The question is… what's the right way to architect a GEO program when the rules shift this fast, and what specifically should change in the engine this week?

This piece walks through the Writesonic data honestly, names what's actually shifting (and what isn't), and lays out the three engine adjustments we're making in our content workflow inside Averi in the 5 days following the study's publication.

What the Writesonic study actually measured (and didn't)

Worth being precise here, because the headline number gets misread quickly.

The methodology (Writesonic study, April 27, 2026): 50 prompts spanning 16 categories (consumer + B2B research queries), each prompt run once per model across GPT-5.5, GPT-5.4, and GPT-5.4 Thinking, for 150 total conversations.

Conversations pulled directly from ChatGPT's /backend-api/conversation/<id> endpoint, which exposes every fan-out query the model issued, every web result returned, and every URL cited in the final answer. Citations classified as first-party (the brand the user asked about) or third-party (review sites, blogs, Reddit, retailers, media) using Claude Haiku 4.5 with detailed instructions and 50+ in-prompt examples. Calibration check: GPT-5.4's first-party rate measured 56.8%, matching the 56% from Writesonic's previous March study.

Methodology sound. Sample limitations real (single account, single geography, single run per prompt) but the core finding is robust enough to act on.
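To make the two headline metrics concrete, here is a minimal sketch of how they could be computed. The record layout (`brand_domain`, `fanout_queries`, `cited_urls`) is a hypothetical simplification, not the actual shape of ChatGPT's conversation payload, and plain domain matching stands in for the LLM-based classifier the study used. It also assumes the first-party rate is a share of all citations, which is how the March study's title frames it.

```python
from urllib.parse import urlparse

def host(url: str) -> str:
    """Return the lowercase hostname without a leading 'www.'."""
    netloc = urlparse(url).netloc.lower()
    return netloc[4:] if netloc.startswith("www.") else netloc

def is_first_party(url: str, brand_domain: str) -> bool:
    """True if the URL lives on the brand's domain or one of its subdomains."""
    h = host(url)
    return h == brand_domain or h.endswith("." + brand_domain)

def site_operator_share(conversations) -> float:
    """Share of fan-out queries that contain a site: operator."""
    queries = [q for c in conversations for q in c["fanout_queries"]]
    scoped = [q for q in queries
              if any(tok.startswith("site:") for tok in q.lower().split())]
    return len(scoped) / len(queries) if queries else 0.0

def first_party_rate(conversations) -> float:
    """Share of all cited URLs that point at the asked-about brand's domain."""
    total = first = 0
    for c in conversations:
        for url in c["cited_urls"]:
            total += 1
            first += is_first_party(url, c["brand_domain"])
    return first / total if total else 0.0

# Hypothetical sample records, for illustration only.
sample = [
    {
        "brand_domain": "hubspot.com",
        "fanout_queries": ["hubspot pricing site:hubspot.com", "hubspot crm reviews"],
        "cited_urls": ["https://www.hubspot.com/pricing", "https://www.g2.com/products/hubspot"],
    },
    {
        "brand_domain": "example.com",
        "fanout_queries": ["example app alternatives", "example app reddit"],
        "cited_urls": ["https://www.reddit.com/r/saas/", "https://blog.example.com/launch"],
    },
]

print(site_operator_share(sample))  # 0.25
print(first_party_rate(sample))     # 0.5
```

A rule-based matcher like this will miss cases an LLM classifier can catch (brand-owned microsites on other domains, for example), so treat it as a rough first pass.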

What it measured: how often ChatGPT cited the asked-about brand's own domain. "Tell me about HubSpot" → did the answer cite hubspot.com, or did it cite TechRadar, G2, and Reddit instead?

What it didn't measure: third-party citation share by name, exact citation volume per query, conversion impact, or whether the change is permanent. The study is a snapshot of citation direction, not a complete map of citation behavior.

The critical finding the headline buries: the site: operator collapse. GPT-5.4 ran 40.5% of its fan-out queries with the site: operator scoped to the brand's domain. GPT-5.5 ran 12.6%. That's a 70% reduction in brand-domain-targeted searches between versions. The brand citation drop isn't because GPT-5.5 decided brand sites are less trustworthy. It's because GPT-5.5 stopped specifically searching brand sites at the rate GPT-5.4 did. The model still cites the brand when it finds the brand in general web search results — it just doesn't go looking for the brand on its own domain as aggressively.
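The numbers above mix two kinds of change: percentage points (absolute) and percent (relative). A quick sketch of the arithmetic, using only the figures quoted from the study, shows why the site: collapse reads as "roughly 70%" while the citation drop reads as "10 points". The function names are illustrative.

```python
def point_drop(before: float, after: float) -> float:
    """Absolute change, in percentage points."""
    return before - after

def relative_drop(before: float, after: float) -> float:
    """Relative change, as a percentage of the starting value."""
    return (before - after) / before * 100

# site: operator share of fan-out queries, GPT-5.4 -> GPT-5.5
print(round(point_drop(40.5, 12.6), 1))     # 27.9 points
print(round(relative_drop(40.5, 12.6), 1))  # 68.9 percent

# First-party citation rate, GPT-5.4 -> GPT-5.5
print(round(point_drop(57, 47), 1))         # 10 points
print(round(relative_drop(57, 47), 1))      # 17.5 percent
```

So the search behavior fell by about 69% in relative terms, while the citation rate it feeds fell by about 17.5%. That gap is consistent with the point that the model still cites the brand when it finds it through general web search.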

This distinction matters for what you actually do about it.

If GPT-5.5 had decided brand sites were less trustworthy, the answer would be "improve your brand site." Since GPT-5.5 is just searching brand sites less aggressively, the answer is "make sure your content gets surfaced in general web search and on third-party platforms, because that's now the dominant citation path again."

For broader context on platform divergence specifically, see our Platform Divergence Playbook and B2B SaaS Citation Benchmarks Report.

The longer arc: model-version volatility is the new normal

This is the second time in two months that ChatGPT model behavior has materially shifted citation patterns.

March 5, 2026: OpenAI replaced GPT-5.2 with GPT-5.4 as the default model. The Writesonic March study found GPT-5.4 cited brand sites 56% of the time vs GPT-5.3's 8% — a 7x increase in first-party citation rate. The strategic interpretation at the time: "ChatGPT just decided brand sites are now the source of truth. Optimize your brand content because the model is now reading it." Most B2B SaaS GEO programs reweighted toward brand-domain optimization in March/April based on this signal.

April 27, 2026: The new Writesonic study shows GPT-5.5 reverted partially. 47% brand citation, down from 57%. The exact interpretation that drove March/April optimization decisions is no longer fully accurate.

The trend isn't a trend yet; it's two data points. But the implication for strategy is clear: model behavior is volatile in ways that make any single-version playbook structurally risky. Teams that fully reweighted toward brand-domain optimization in March based on the GPT-5.4 finding are now over-indexed on a behavior the model partially walked back. Teams that stayed balanced across brand-domain and third-party citation building are largely unaffected.

ChatGPT now drives 87.4% of all AI referral traffic, which means model-version volatility on ChatGPT specifically has outsized impact on overall AI search visibility.

A team that misallocates ChatGPT optimization is misallocating most of their AI search investment.

The lesson isn't "always optimize for the latest model." It's "build a content architecture resilient to the next model change you can't predict yet."

For more on the click-collapse and AI search shift specifically, see our Click Collapse Playbook and AI Citation Tracking guide.

What's still true (the structural patterns that survived)

Three things that didn't change between GPT-5.4 and GPT-5.5, and that are worth treating as the durable layer of GEO strategy through year-end 2026.

1. Third-party authority signals still carry weight. G2, Capterra, Trustpilot, Reddit, YouTube, and major media coverage are the citation sources that filled the gap when GPT-5.5 stopped site:-querying brand domains as aggressively. Domains with strong third-party platform presence are cited 3-4x more often by ChatGPT regardless of model version. The brands that invested in third-party presence in 2025 are the brands that maintained citation share through the GPT-5.5 pullback. The ones that skipped that work and bet on brand-domain optimization alone are now scrambling.

2. Schema and structural extractability still produce citation lift. Pages with proper schema markup see 36% higher AI citation rates, and the lift compounds when schema is layered (Article + FAQPage + ItemList + VideoObject + ImageObject). This wasn't affected by the GPT-5.5 update. The structural patterns that make content extractable to one version of the model make it extractable to the next. Schema is the most version-resilient single optimization investment in GEO.

3. FAQ + direct-answer headlines still get cited disproportionately. Pages with headlines that directly answer the question get cited by ChatGPT 41% of the time across both versions, and 120-180 word sections between headings produce 70% more ChatGPT citations than shorter sections. This pattern survived the GPT-5.4 → GPT-5.5 transition cleanly. Content architected for direct extraction continues to outperform content architected for traditional SEO across both versions.
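For teams that haven't implemented FAQ schema yet, here is a minimal sketch of generating FAQPage JSON-LD from question/answer pairs. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity` / `acceptedAnswer` properties come from schema.org; the helper name `faq_jsonld` and the sample pair are illustrative assumptions, not part of any Averi or Writesonic tooling.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Illustrative pair; real pages would list every FAQ on the page.
pairs = [
    (
        "What did the Writesonic GPT-5.5 study find?",
        "GPT-5.5 cited brand sites 47% of the time, down from 57% on GPT-5.4.",
    ),
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
# Embed the result in the page head or body as a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Layering additional types (Article, ItemList, VideoObject) follows the same pattern: one JSON-LD object per type, each describing content that is actually visible on the page.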

For the foundational frameworks specifically, see our Multimodal Content Cluster guide, FAQ Optimization for AI Search, and Building Citation-Worthy Content guide.

The 3 engine adjustments we're making in 72 hours

What we're tightening in our content workflow at Averi in response to the Writesonic data, in the 5-day window following publication.

Each adjustment is calibrated to the structural pattern the study surfaces, not to the specific 47%/57% numbers (which will shift again as ChatGPT iterates).

Adjustment 1: Reweight third-party citation building back to 50%+ of GEO effort

Through March and early April, our internal allocation had drifted toward roughly 60% on-domain content optimization (driven by the March GPT-5.4 finding) and 40% third-party signal building. The GPT-5.5 study makes that allocation structurally risky.

The new allocation: 50% third-party, 50% on-domain. This brings the strategy back closer to the 2025 balance, with active third-party investment treated as a non-negotiable part of weekly content production rather than a quarterly initiative.

Specific tactics we're accelerating:

G2/Capterra review momentum: weekly outreach to recent customers asking for reviews, with templates that surface specific use cases the AI engines look for

Reddit participation cadence: founder-voice participation in 3-5 relevant subreddits per week, with substantive contributions that get organically referenced rather than corporate-Reddit posts that don't

YouTube content production: 1 companion video per pillar piece going forward, with structured transcripts and chapter markers (per our Multimodal Content Cluster framework)

The reweighting isn't reactive to GPT-5.5 specifically — it's recognition that any single ChatGPT version's behavior is unstable, and the third-party layer is the most version-resilient citation surface available.

Adjustment 2: Tighten schema + FAQ structure on existing pillar pieces

If GPT-5.5 is searching brand domains less aggressively via site: operators, the brand domain content that does get cited is the content that surfaces in general web search and gets extracted cleanly when the model encounters it.

That makes structural extractability more important, not less, for the content already on the brand domain.

The audit pass we're running this week:

Top 20 pillar pieces — verify FAQ structure (7+ questions, 40-60 word self-contained answers, FAQPage schema applied)

Top 20 pillar pieces — verify schema layering (Article + FAQPage + ItemList + VideoObject + ImageObject + Organization + Person)

Direct-answer H2s on every piece (questions phrased the way buyers actually ask them, with the answer in the first 40-60 words after the heading)

Section length verification: 120-180 words between headings, no longer, no shorter

This is a 2-day audit with mechanical fixes.

The total cost is roughly 16 hours of work. The lift it produces compounds across both GPT-5.5 and whatever GPT-5.6/5.7 brings later.
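Part of this audit is mechanical enough to script. The sketch below checks the 120-180 word section-length target, assuming each pillar piece is available as Markdown with `#`-style headings. That assumption, the function names, and the sample text are all illustrative; the check ignores code fences, tables, and HTML.

```python
import re

HEADING = re.compile(r"^#{1,6}\s+(.*)$")

def section_word_counts(markdown: str):
    """Return [(heading, word_count)] for the body text under each heading."""
    sections = []
    title, words = None, 0
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            if title is not None:
                sections.append((title, words))
            title, words = match.group(1).strip(), 0
        elif title is not None:
            words += len(line.split())
    if title is not None:
        sections.append((title, words))
    return sections

def out_of_range(markdown: str, low: int = 120, high: int = 180):
    """Return the sections whose word count falls outside [low, high]."""
    return [(t, n) for t, n in section_word_counts(markdown) if not low <= n <= high]

# Synthetic document: one section that is too short, one inside the target range.
doc = "## Short section\n" + "word " * 30 + "\n## Good section\n" + "word " * 150
print(out_of_range(doc))  # [('Short section', 30)]
```

Running this across the top 20 pieces produces a punch list of sections to expand or split. The same loop can be extended to check that FAQ answers land in the 40-60 word range.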

For the technical patterns specifically, see our Schema Markup for AI Citations: Technical Implementation Guide.

Adjustment 3: Accelerate the Reddit/G2/YouTube production cadence

The structural insight from the Writesonic data: third-party citation surfaces are now a leading indicator of resilience, not a nice-to-have layer.

The brands that maintained citation share through the GPT-5.4 → GPT-5.5 transition are the brands with deep third-party presence. The brands that didn't are now scrambling to build that presence in a 7-day window.

The cadence we're shifting to in 72 hours:

Reddit: 5+ substantive comments or posts per week from the founder voice, in 3-5 subreddits where Averi's actual buyers are active. Not corporate posts. Real participation that earns organic references

G2 + Capterra: weekly review outreach. Even 2-3 new reviews per week on each platform, sustained over 8-12 weeks, materially shifts citation share

YouTube: 1 companion video per published pillar piece, with structured transcripts. The asset compounds across both GPT-5.5 ChatGPT (training data exposure) and Google AI Overviews (where YouTube is the most-cited single domain from outside the top 100, per ALM Corp's analysis)

Editorial features: pursuing 1-2 thought-leadership placements per month in publications ChatGPT treats as authoritative — Search Engine Land, MarTech, B2B SaaS publications with strong domain authority

The cadence isn't new in concept — we covered the framework in our GEO Playbook 2026 earlier this year. What's new is treating the cadence as a continuous weekly operational rhythm rather than a quarterly initiative. The Writesonic data is the forcing function.

What this signals about citation strategy through year-end 2026

Three predictions, calibrated to the GPT-5.4 → GPT-5.5 trajectory and the structural patterns underneath it.

Prediction 1: Model-version volatility will continue, and will accelerate. OpenAI is shipping major model updates roughly every 60-90 days. Each update has the potential to materially shift citation behavior. The teams that survive (and win) through year-end 2026 will be the teams that built content architectures resilient to model-version changes, not the teams that optimized for whichever version was current when the strategy was set. Single-version optimization is now structurally a worse strategy than balanced multi-source architecture.

Prediction 2: Third-party citation building becomes a non-negotiable continuous function. The 2024-2025 pattern of "we'll do G2 reviews next quarter" is operationally dead. Third-party presence needs to be a weekly production cadence with the same operational discipline as blog publishing. The teams treating it as a continuous function will compound visibility through every model transition. The teams treating it as a project will scramble in the 7-day windows after each transition.

Prediction 3: The "ChatGPT optimization" category fragments into "ChatGPT version-specific optimization" by Q4 2026. Today, teams talk about optimizing for ChatGPT as a single surface. By the end of 2026, the practice will fragment into version-specific tactics — what works for GPT-5.5 default, what works for GPT-5.5 Thinking, what works for whatever GPT-6 brings. GEO platforms like Writesonic, Profound, OtterlyAI, and Peec AI will need to track version-specific citation behavior as a primary feature, not an aside. Teams without this granular view will misallocate effort against averaged data that no longer reflects how any specific version actually behaves.

For the broader take on 2026 trends, see our Vibe Marketing Q2 2026 piece and The State of AI Content Marketing 2026 Benchmarks Report.

Common mistakes teams will make in the next 30 days

Five patterns I expect to see widely as the GPT-5.5 study propagates through B2B SaaS marketing in May 2026:

Mistake 1: Treating the 10-point drop as the new permanent state. The headline number is dramatic, but it's a single snapshot. The right interpretation is "model behavior is volatile" not "GPT-5.5 will permanently cite brands 47%." Teams that lock in optimization decisions to specifically match the 47% number will be misallocating again when GPT-5.6 ships in June or July with different behavior.

Mistake 2: Reactively cutting brand-domain content investment. The GPT-5.5 finding doesn't mean brand-domain content matters less. It means brand-domain content needs to be findable in general web search (not just via site: queries) for it to maintain citation share. The fix is structural improvement — schema, extractability, FAQ structure — not reduced investment.

Mistake 3: Over-rotating to third-party citation building at the expense of on-domain quality. The opposite mistake. Teams that read the GPT-5.5 study and rip resources from on-domain content to dump entirely into third-party signal building will produce thin brand sites that don't get cited even when the model does encounter them. The right move is balanced reweighting (50/50), not full rotation.

Mistake 4: Relying on single-platform tracking. Only 11% of domains cited by ChatGPT are also cited by Perplexity. Teams measuring only ChatGPT citation rates will see the GPT-5.5 drop and assume their AI visibility collapsed. Teams measuring across ChatGPT, Perplexity, and Google AI Overviews will see the actual picture: GPT-5.5 brand citation dropped, but Perplexity and Google AI Overviews behavior is unaffected. Multi-platform tracking is a prerequisite for accurate response to the study.

Mistake 5: Skipping the third-party investment because "we're not a Reddit company." The category most vulnerable to this mistake is enterprise B2B SaaS, where the cultural posture is "we don't do Reddit." That posture was already costing citation share in 2025. The GPT-5.5 study makes it operationally indefensible. Even modest founder-voice participation in 3-5 relevant subreddits, sustained over 8-12 weeks, produces measurable citation share growth that text-only blog content cannot match.

What to do this week

If you want to operationalize the response to the Writesonic GPT-5.5 study without overcorrecting:

Verify your AI citation tracking is multi-platform. If you're measuring only ChatGPT, you don't have enough signal to interpret what the GPT-5.5 data means for your specific brand. Add Perplexity and Google AI Overviews tracking before doing any other reactive work.

Audit your top 20 pillar pieces against the structural patterns. FAQ structure, direct-answer H2s, 120-180 word sections, layered schema. The patterns that survived the GPT-5.4 → GPT-5.5 transition are the patterns that will survive the next one. 16 hours of work, compounds across model versions.

Reweight your GEO allocation to 50% on-domain / 50% third-party. Document the new allocation. Make it visible to the team. The discipline of explicit reweighting prevents drift back to the over-indexed state.

Start a continuous Reddit + G2 + YouTube production cadence. Not a project. A weekly operational rhythm with the same discipline as blog publishing. The teams that build this in May 2026 are the teams that compound visibility through the next 3-4 model transitions.

Publish your response this weekend or Monday morning. The 5-day perspective window on the Writesonic study is closing. Teams that publish a thoughtful response in the next 72 hours capture the trend traffic; teams that wait two weeks miss it. Treat model-update studies as time-sensitive content opportunities, not background information.

Write the GPT-5.5 audit document for your CMO/CFO. A 1-page summary: what the study found, what we changed, what we didn't change and why, what we'll watch for in the next 60 days. This is the document that demonstrates strategic agility to leadership when budget season hits.

Set up the tracking for GPT-5.6 in advance. OpenAI will ship GPT-5.6 by Q3 2026 (high confidence). The teams measuring citation rate by model version before the next update lands are the teams that catch the shift in week 1; the teams scrambling to set up version-specific tracking after the update will be 3-4 weeks behind.
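Version-specific tracking doesn't need heavy tooling to start. As a rough sketch, assuming you log one record per tracked prompt with the platform, model version, and citation counts (a hypothetical schema, with counts chosen only to mirror the study's 57% and 47% figures), a per-version rate and a simple shift flag look like this:

```python
from collections import defaultdict

def rate_by_version(records):
    """Return {(platform, model_version): first-party citation rate}."""
    totals = defaultdict(lambda: [0, 0])  # [first_party, all_citations]
    for r in records:
        key = (r["platform"], r["model_version"])
        totals[key][0] += r["first_party_citations"]
        totals[key][1] += r["total_citations"]
    return {k: fp / total for k, (fp, total) in totals.items() if total}

def flag_shift(rates, platform, old_version, new_version, threshold_points=5.0):
    """Return the change in percentage points if it meets the threshold, else None."""
    delta = rates[(platform, new_version)] - rates[(platform, old_version)]
    change = round(delta * 100, 1)
    return change if abs(change) >= threshold_points else None

# Illustrative log entries, not data from the study.
records = [
    {"platform": "chatgpt", "model_version": "gpt-5.4", "first_party_citations": 30, "total_citations": 50},
    {"platform": "chatgpt", "model_version": "gpt-5.4", "first_party_citations": 27, "total_citations": 50},
    {"platform": "chatgpt", "model_version": "gpt-5.5", "first_party_citations": 25, "total_citations": 50},
    {"platform": "chatgpt", "model_version": "gpt-5.5", "first_party_citations": 22, "total_citations": 50},
]

rates = rate_by_version(records)
print(flag_shift(rates, "chatgpt", "gpt-5.4", "gpt-5.5"))  # -10.0
```

The 5-point threshold is an arbitrary starting value. With small prompt sets, run-to-run noise can exceed it, so widen the threshold or the sample before treating a flag as a real shift.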

That's the playbook for the 5-day window.

The Writesonic GPT-5.5 study is an unusually clear signal about both the volatility of AI citation behavior and the durability of certain structural patterns.

The teams that interpret it correctly — not as a permanent new state, but as evidence that resilient content architecture beats single-version optimization — will compound visibility through every model transition through year-end 2026 and beyond.

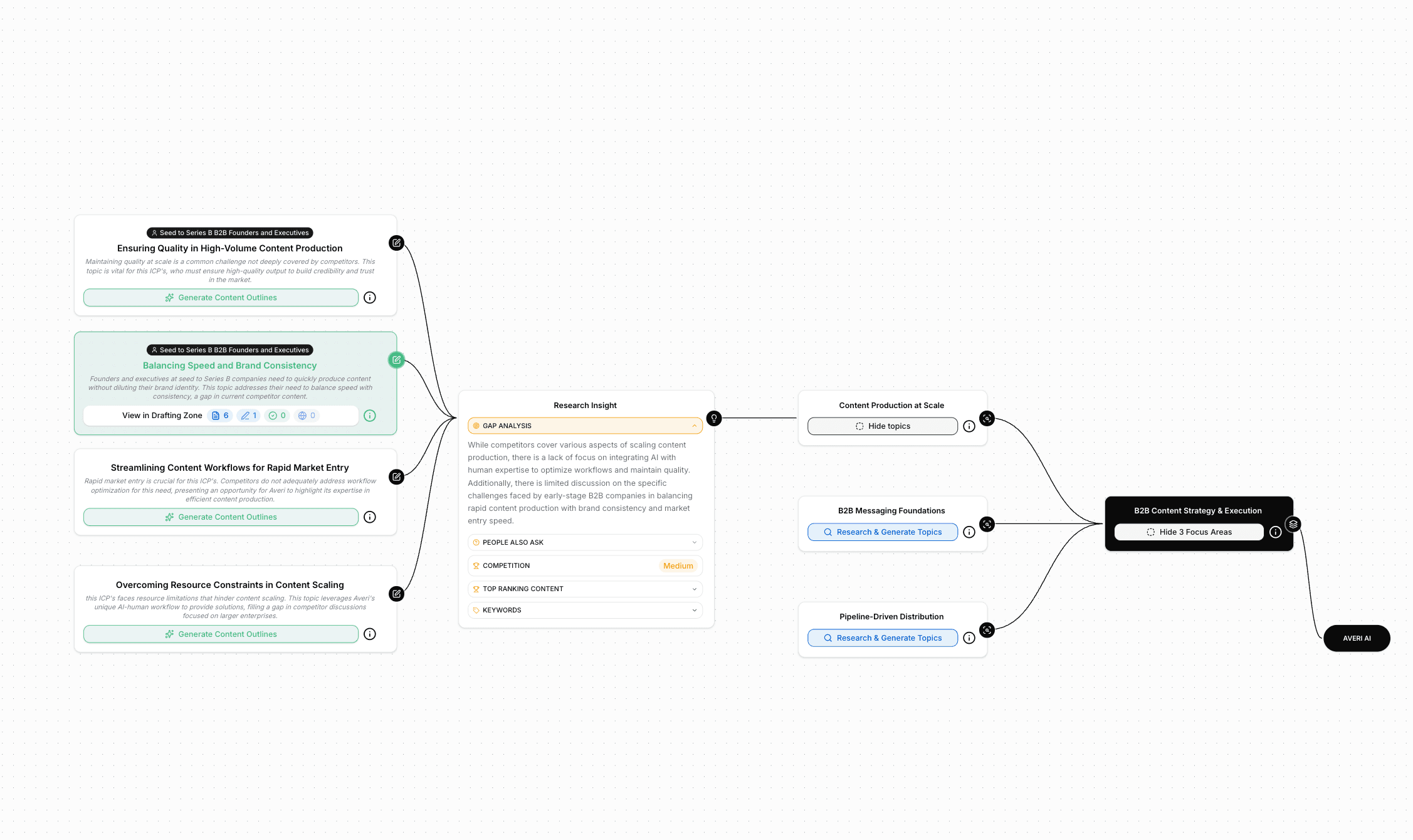

If you want this baked into your stack — Brand Core that captures the multi-source citation worldview, Strategy Map that organizes content for both on-domain and third-party citation surfaces, Content Scoring that flags structural extractability gaps before publish, native publishing with schema-by-default, and unified analytics that tracks citation rate across ChatGPT, Perplexity, and Google AI Overviews — start a free 14-day Averi trial.

30 minutes to set up. The first piece you produce inside Averi will be architecturally calibrated for the model-version volatility this study describes.

FAQs

What did the Writesonic GPT-5.5 study find?

The Writesonic study published April 27, 2026 found that GPT-5.5 cites brand websites 47% of the time, compared to GPT-5.4's 57%, a 10-percentage-point drop. The mechanism was a collapse in site: operator usage in fan-out queries, from 40.5% on GPT-5.4 to 12.6% on GPT-5.5. Methodology: 50 prompts × 3 models = 150 conversations, run from a single ChatGPT Plus account on April 27, 2026, with citations classified using Claude Haiku 4.5. Calibration check: GPT-5.4's first-party rate measured 56.8%, in line with Writesonic's earlier March study.

Why does GPT-5.5 cite brand sites less than GPT-5.4?

The mechanism is site: operator usage: GPT-5.4 used site:-scoped queries on 40.5% of its fan-out queries, while GPT-5.5 cut that to 12.6%. The model didn't decide brand sites are less trustworthy; it stopped searching brand domains directly as aggressively. The implication: brand citations now depend more on content surfacing in general web search and on third-party platforms (G2, Capterra, Reddit, YouTube), not on the model going looking for the brand on its own domain.

Should I cut my brand-domain content investment because of GPT-5.5?

No. The GPT-5.5 finding doesn't mean brand-domain content matters less; it means brand-domain content needs structural extractability (FAQ structure, direct-answer H2s, 120-180 word sections, layered schema) to maintain citation share when the model isn't site:-querying as aggressively. The fix is structural improvement to existing brand content, not reduced investment.

What's the right GEO allocation between on-domain and third-party in May 2026?

Roughly 50/50 is the resilient default for B2B SaaS. The heavy on-domain weighting many teams adopted in March and April 2026 was reasonable when GPT-5.4 was citing brand sites at 56-57% rates. The GPT-5.5 pullback to 47% makes balanced allocation more defensible. Categories with heavy Reddit conversation (developer tools, fintech, crypto) lean further toward third-party. Categories with mature SEO programs already running can extract more value from on-domain optimization with less marginal investment.

Why does the site: operator collapse matter so much?

Because ChatGPT drives 87.4% of all AI referral traffic. A change in how ChatGPT searches the web has outsized impact on overall AI search visibility. The site: operator collapse means the citation path shifts: instead of the model going directly to your brand domain via scoped searches, it relies on your content surfacing in general web search and third-party platforms. The structural implication: third-party signal building becomes more important, not less.

Will GPT-5.6 reverse the GPT-5.5 changes?

Unknown, but model-version volatility is now the defining feature of citation strategy. Two consecutive ChatGPT model updates (GPT-5.3 → GPT-5.4 in March, GPT-5.4 → GPT-5.5 in April) materially shifted citation behavior in opposite directions. The right strategic response isn't trying to predict the next model's behavior — it's building a content architecture (multi-platform, multi-format, multi-source) resilient to whatever the next model does.

How does Averi handle model-version volatility?

Averi's content engine is architected for multi-source citation resilience. Brand Core captures the brand's worldview consistently across formats. Strategy Map organizes content for both on-domain and third-party citation surfaces. Content Scoring evaluates pieces on structural patterns (FAQ + schema + direct answers + 120-180 word sections) that survive model transitions cleanly. Unified analytics tracks citation rate across ChatGPT, Perplexity, and Google AI Overviews — surfacing version-specific shifts when they happen so teams can respond in week 1 instead of week 4. The architecture is designed to compound visibility through every model transition rather than optimize for whichever version is current.

Related Resources

The Source Material

Writesonic: GPT-5.5 Cites Brand Sites 47% of the Time. GPT-5.4 Did 57%

Writesonic March study: 56% of GPT-5.4's Citations Go to Brand Websites. Only 8% of GPT-5.3's Do

Search Engine Journal coverage: ChatGPT's Default & Premium Models Search The Web Differently

The Citation Architecture

The Platform Divergence Playbook: Three Plays for ChatGPT, Perplexity, Google AI Mode

ChatGPT vs Perplexity vs Google AI Mode: B2B SaaS Citation Benchmarks Report (2026)

AI Citation Tracking: How to Measure Citation Frequency Across ChatGPT, Perplexity, and Claude

The Methodology

Schema Markup for AI Citations: Technical Implementation Guide

Building Citation-Worthy Content: Making Your Brand a Data Source for LLMs

Strategic Context

Vibe Marketing in Q2 2026: What's Working, What's Hype, and What's Next

Topic Targeting vs Keyword Targeting: The Framework Killing the Old SEO Playbook

Architect for the next model transition, not just this one. Averi's content engine handles multi-source citation resilience by default — Brand Core, Strategy Map, Content Scoring across SEO + GEO, native publishing with schema, and unified analytics across ChatGPT, Perplexity, and Google AI Overviews. The architecture compounds visibility through every model update. $99/mo, no contract, 14-day free trial. Start your free trial →