AI Citation Tracking: How to Measure Citation Frequency Across ChatGPT, Perplexity, and Claude


TL;DR

🎯 Citation frequency is the percentage of buyer-relevant AI prompts where your content appears as a cited source. It's the clearest signal of AI search authority and the primary input to Brand Visibility Score.

📊 Target for B2B SaaS: 20-30% citation rate across tracked prompts. Below 10% = invisible. Above 40% = category-leading. Seed-stage startups realistically start at 2-8%.

🔀 Platform fragmentation is extreme. Only 11% of sites are cited by both ChatGPT and Perplexity. Measuring one platform doesn't predict the others. Track all four primary engines (ChatGPT, Perplexity, Claude, Google AI Mode) or you miss 60-80% of the picture.

⚡ Free measurement is possible. A prompt library + spreadsheet + about an hour weekly across 3-4 platforms produces defensible citation tracking data. Paid platforms ($99-$2,500/month depending on tier) automate this but aren't required at startup stage.

🚨 The #1 measurement mistake: interpreting a single week's data as a trend. Citation rates are volatile — 40-60% of citations change monthly. Track for 8+ weeks before drawing conclusions.

Zach Chmael

CMO, Averi

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."



ChatGPT cites sources in 87% of responses. Google AI Mode cites sources in 76.3% of responses. Perplexity visits approximately 10 pages per query and cites 3-4 of them. Google AI Overviews include citations in 84.9% of responses.

Yet most B2B SaaS teams don't know their citation rate on any of these engines.

They know organic impressions, CTR, bounce rate. They can tell you the position of their top 10 keywords.

Ask them how often ChatGPT cites their brand when buyers ask category questions — silence.

Citation frequency is the single clearest signal of AI search authority.

It's the metric that tells you whether AI engines treat your content as a trusted source when generating answers for your buyers.

It's the leading indicator of AI-referred traffic, pipeline influence, and brand recognition inside AI-mediated research.

And it's the easiest AI visibility metric to measure because the answer is binary: were you cited in this response or not?

This piece is the tactical playbook for measuring citation frequency. Platform-by-platform mechanics. The free-tool tracking method that works for startups. Paid tool options for Series B+ scale. The template for running weekly measurement in under 60 minutes. How to interpret trends. What to do when citation rate is stuck.

For the strategic context on how citation frequency fits into the broader AI visibility program, see The Complete Guide to AI Visibility for B2B SaaS.

What Is AI Citation Tracking?

AI citation tracking is the practice of measuring how often AI engines (ChatGPT, Perplexity, Claude, Google AI Mode) cite your content as a source when generating answers to buyer-relevant queries.

It involves running a fixed prompt library across each engine on a regular cadence, logging whether each response cites your domain, and calculating citation frequency as a percentage of total prompts.

The metric tells you whether AI engines treat your brand as a trusted source for questions your buyers actually ask.

Citation is specifically the act of an AI engine attributing information to your site — typically with a clickable link or domain reference in the response. This is different from a brand mention (where the AI names your brand without attribution) and different from a brand reference (where the AI describes your company without citing your content).

Citation is the strongest signal because it requires the AI to have:

(1) indexed your content,

(2) retrieved it as relevant to the query,

(3) judged it authoritative enough to source, and

(4) included it in the final response.

Mention rates can inflate from brand recognition alone; citation rates reflect content authority specifically.
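To make the citation/mention distinction concrete when logging responses, here is a minimal Python sketch. It assumes you have already copied the response text and its source links out of the AI tool by hand; the function name, the three labels, and the `acme.com` example are hypothetical, and it does not try to detect the looser "brand reference" case.

```python
from urllib.parse import urlparse


def classify_response(response_text: str, cited_urls: list[str],
                      domain: str, brand: str) -> str:
    """Label one AI response as 'citation', 'mention', or 'absent'."""
    domain = domain.lower().removeprefix("www.")
    for url in cited_urls:
        # URLs without a scheme (e.g. "acme.com/page") have no hostname here
        # and are skipped, so paste full links including https://.
        host = (urlparse(url).hostname or "").lower().removeprefix("www.")
        # Match the domain itself or any subdomain such as blog.acme.com.
        if host == domain or host.endswith("." + domain):
            return "citation"
    # No link to the domain: fall back to a plain brand-name check.
    if brand.lower() in response_text.lower():
        return "mention"
    return "absent"


label = classify_response(
    "Tools like Acme help startups with content.",
    ["https://www.acme.com/guide"],
    domain="acme.com",
    brand="Acme",
)
```

A citation takes priority over a mention here because the source link is the stronger signal; log both columns separately if a response contains each.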

For the broader metric context including how citation frequency combines with placement, sentiment, and link presence into Brand Visibility Score, see Brand Visibility Score: The Only AI Search Metric That Actually Matters.

Why Citation Frequency Matters More Than Mention Rate

Both citations and mentions drive buyer awareness.

Both feed into Brand Visibility Score.

But citation frequency is the leading indicator — and the one you can most directly influence through content work.

Mentions can happen for many reasons: brand recognition from training data, category dominance, name-matching coincidence. An AI saying "tools like Averi help startups with content" is a mention, but it might happen because Averi is well-known, not because any specific Averi content was extracted.

Citations require extraction.

When an AI cites your page, it's telling you: "This specific content was relevant enough, structured enough, and authoritative enough to attribute the answer to."

That's a measurable signal of content quality that you can directly act on. Write better content, improve citation rate. Write structurally worse content, citation rate drops.

AirOps research shows brands that earn both citations and mentions are 40% more likely to resurface across multiple AI answers than citation-only brands. Neither metric works in isolation. But citation frequency is where your content optimization work shows up first — typically 2-4 weeks before mention rate starts climbing.

For early-stage startups with low brand recognition, citation frequency is disproportionately important because mentions are thin. You haven't earned the category recall that drives organic mentions yet. What you can earn faster is citation rate through structural content optimization.

Platform-Specific Citation Mechanics

Each AI engine cites differently. Understanding the mechanics tells you where to focus optimization effort.

| Platform | Citation Rate | Index Source | Primary Signal | Optimization Priority |
|---|---|---|---|---|
| ChatGPT | 87% of responses | Bing index + live web | Authoritative, structured content | Bing indexing + answer capsules |
| Perplexity | ~3-4 sources per query | Proprietary index + live web | Freshness, fact density | Content refresh cadence |
| Claude | Variable, precision-focused | Structured retrieval | Clear sourcing, technical precision | Fact-dense analytical content |
| Google AI Mode | 76.3% of responses | Google index + knowledge graph | E-E-A-T signals, schema | Schema markup + author signals |
| Google AI Overviews | 84.9% of responses | Google index | Entity density, top-10 rankings | Match traditional SEO + AI structure |

ChatGPT citation mechanics

ChatGPT pulls primarily from Bing's index. If your site isn't in Bing, ChatGPT can't cite it — this is the single biggest technical failure most teams miss. Check Bing Webmaster Tools for index coverage before doing any ChatGPT optimization.

ChatGPT weights structured, authoritative content. 72.4% of ChatGPT-cited pages contain answer capsules: 40-60 word self-contained answers under H2 headings. In short, a ChatGPT citation requires Bing-indexed content that also passes the extractability test.

Perplexity citation mechanics

Perplexity runs its own crawler and index. Content updated within the past 12 months earns 3.2x more citations on Perplexity specifically. Freshness is weighted more heavily than on any other major engine.

Perplexity visits approximately 10 candidate pages per query and cites 3-4. Scoring factors include topical relevance, content freshness, source authority, and structural extractability. If you want fast citation feedback loops (2-4 weeks), optimize for Perplexity first.

Claude citation mechanics

Claude's retrieval is less publicly documented than ChatGPT's or Perplexity's, but citation patterns suggest precision is weighted heavily. Content with clear logical argument, well-defined terms, careful sourcing, and technical depth earns Claude citations at higher rates than promotional or hype-heavy content.

Claude is also more likely to cite analytical content (research reports, comparative analyses) than transactional content (pricing pages, product-focused landing pages).

Google AI Mode and AI Overviews

Google's AI products weight E-E-A-T signals heavily. 96% of AI Overview citations come from sources with strong E-E-A-T. Schema markup, named authors, verified expertise, and domain authority all contribute more to Google AI citations than to ChatGPT or Perplexity.

Traditional SEO authority still helps — pages ranking in the top 10 organically are 65% more likely to earn AI Overview citations than pages outside the top 10.

The practical implication: optimizing for one platform doesn't predict citation rate on the others. Only 11% of sites are cited by both ChatGPT and Perplexity. Platform-specific tracking is non-negotiable.


How to Measure Citation Frequency (Free Tool Method)

A 20-person B2B SaaS startup can track citation frequency weekly using free tools and a spreadsheet in under 60 minutes. Here's the process.

Step 1: Build your prompt library

A prompt library is a fixed list of 25-50 buyer-relevant queries that remain consistent week to week. This gives you longitudinal data — you can see whether citation rate is trending up or down on the same queries.

Mix three prompt types:

Category prompts: "Best [category] tools," "Top [category] platforms," "How to choose a [category] tool"

Comparison prompts: "[Competitor 1] vs [competitor 2]," "Alternatives to [competitor]"

Use-case prompts: "How to [core job your product solves]," "Best way to [outcome customers want]"

For the complete methodology including prompt type balance, update cadence, and how to avoid prompt bias, see How to Build an AI Visibility Prompt Library.
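If you'd rather generate the first draft of the library than type it, the three prompt types above can be expanded from a few inputs. This is a rough sketch: the function name and the example category, competitors, and jobs are placeholders, and the output still needs a human pass to cut prompts your buyers wouldn't actually ask.

```python
from itertools import combinations

CATEGORY_TEMPLATES = [
    "Best {category} tools",
    "Top {category} platforms",
    "How to choose a {category} tool",
]


def build_prompt_library(category: str, competitors: list[str],
                         jobs: list[str], outcomes: list[str]) -> list[tuple[str, str]]:
    """Return (prompt_type, prompt_text) pairs covering the three prompt types."""
    prompts = [("category", t.format(category=category)) for t in CATEGORY_TEMPLATES]
    # One "A vs B" prompt per unordered competitor pair.
    for a, b in combinations(competitors, 2):
        prompts.append(("comparison", f"{a} vs {b}"))
    for name in competitors:
        prompts.append(("comparison", f"Alternatives to {name}"))
    prompts += [("use_case", f"How to {job}") for job in jobs]
    prompts += [("use_case", f"Best way to {outcome}") for outcome in outcomes]
    return prompts


library = build_prompt_library(
    category="content marketing",
    competitors=["CompetitorA", "CompetitorB", "CompetitorC"],
    jobs=["plan a content calendar"],
    outcomes=["grow organic traffic"],
)
```

Once the list is trimmed to 25-50 prompts, freeze it so week-to-week numbers stay comparable.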

Step 2: Set up your tracking spreadsheet

Create a Google Sheet with these columns:

Prompt text

Platform (ChatGPT / Perplexity / Claude / Google AI Mode)

Date run

Cited? (Yes/No)

Mentioned? (Yes/No)

Placement (Headline / Body / Footnote / Not cited)

Sentiment (Positive / Neutral / Negative / Not cited)

Notes (competitors cited, surprising context)

This becomes your longitudinal dataset.
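If you prefer to keep the log as a CSV you can import into Google Sheets, the columns above map onto a small record type. This is one possible layout, not a required schema; the field names are my own shorthand for the column list.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class TrackingRow:
    prompt: str
    platform: str          # ChatGPT / Perplexity / Claude / Google AI Mode
    date_run: date
    cited: bool
    mentioned: bool
    placement: str = "Not cited"   # Headline / Body / Footnote / Not cited
    sentiment: str = "Not cited"   # Positive / Neutral / Negative / Not cited
    notes: str = ""


def rows_to_csv(rows: list[TrackingRow]) -> str:
    """Serialize tracking rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(TrackingRow)])
    writer.writeheader()
    for row in rows:
        record = asdict(row)
        record["date_run"] = row.date_run.isoformat()
        # Store booleans as Yes/No to match the spreadsheet columns.
        record["cited"] = "Yes" if row.cited else "No"
        record["mentioned"] = "Yes" if row.mentioned else "No"
        writer.writerow(record)
    return buffer.getvalue()


csv_text = rows_to_csv([
    TrackingRow("Best CRM tools", "ChatGPT", date(2026, 1, 5), True, True, "Body", "Positive"),
])
```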

Step 3: Run the prompts weekly

Every Monday, paste each prompt into:

ChatGPT (with web search enabled — this is critical; without search, ChatGPT won't cite recent content)

Perplexity

Google AI Mode

Claude (with web search enabled)

For each response, log whether your domain appears as a citation, the placement, and basic sentiment. Most prompts take 30-45 seconds per platform to evaluate.

For 25 prompts × 4 platforms = 100 data points per week, budget 45-60 minutes of focused time.

Step 4: Calculate citation frequency

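At the end of each week, divide the number of prompts where your domain was cited by the total number of prompts run:

Citation frequency = (prompts where cited ÷ total prompts run) × 100

Calculate this separately per platform because the numbers will differ substantially. Also calculate a blended average for the top-line report.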
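The weekly calculation is small enough to script. The sketch below assumes each logged row has been reduced to a (platform, cited) pair; the blended figure is pooled across all rows, which equals the plain average of the platform rates only when every platform ran the same number of prompts.

```python
from collections import defaultdict


def citation_frequency(rows):
    """rows: iterable of (platform, cited) pairs for one week.

    Returns (per_platform, blended), both as percentages.
    """
    cited = defaultdict(int)
    total = defaultdict(int)
    for platform, was_cited in rows:
        total[platform] += 1
        cited[platform] += int(was_cited)
    per_platform = {p: 100 * cited[p] / total[p] for p in total}
    all_total = sum(total.values())
    blended = 100 * sum(cited.values()) / all_total if all_total else 0.0
    return per_platform, blended


# Example week: 20 prompts per platform, cited 6 times on Perplexity, never on ChatGPT.
week = ([("Perplexity", True)] * 6 + [("Perplexity", False)] * 14
        + [("ChatGPT", False)] * 20)
per_platform, blended = citation_frequency(week)
# per_platform == {"Perplexity": 30.0, "ChatGPT": 0.0}; blended == 15.0
```

The example shows why the per-platform split matters: a 15% blended rate hides a platform sitting at zero.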

Step 5: Track trends over time

Single-week data is noise. Track for 8+ weeks before drawing conclusions. Plot citation frequency per platform over time. Look for:

Rising trends: Optimization work is paying off

Falling trends: Competitors are out-optimizing you, or your content freshness lapsed

Flat trends: Current work isn't moving the needle — time to try different tactics

The Weekly AI Visibility Report Template formalizes this process into a repeatable workflow.
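Because single-week readings are noisy, it can help to plot a trailing average next to the raw numbers. A minimal sketch follows; the 4-week default window is my assumption, not a figure from this guide, so adjust it to taste.

```python
def rolling_mean(weekly_rates: list[float], window: int = 4) -> list[float]:
    """Trailing mean of weekly citation rates.

    Early weeks use however many readings exist so far,
    so the output has the same length as the input.
    """
    smoothed = []
    for i in range(len(weekly_rates)):
        chunk = weekly_rates[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


smoothed = rolling_mean([4, 8, 6, 10, 12], window=2)
# smoothed == [4.0, 6.0, 7.0, 8.0, 11.0]
```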

Paid Tool Options (Series B+ Scale)

Manual tracking works up to roughly 50 prompts across 4 platforms. Past that scale, automated tools become worth the investment.

Entry-level ($99-$299/month): Otterly.ai, Goodie AI, Semrush AI Visibility Toolkit. These run your prompt library on schedule and alert when citation rate shifts significantly. Good for teams spending 5+ hours per week on manual tracking who want to reclaim that time.

Mid-market ($299-$999/month): Profound, LLM Pulse, Visiblie. These add competitive benchmarking, historical trend analysis, and platform-specific breakdown. Worth it when you need to report AI visibility to leadership or investors regularly.

Enterprise ($1,000-$2,500/month): Full platform suites with multi-brand management, custom integrations, and API access. These only make sense for enterprise teams managing 200+ prompts across 6+ engines.

The honest recommendation: most B2B SaaS startups under $10M ARR don't need paid tools. The manual spreadsheet method produces the same strategic insight at 1/20th the cost. Upgrade when measurement time exceeds 5 hours per week.
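To check where you sit against the 5-hour threshold, a back-of-envelope estimate is enough. The sketch below assumes 45 seconds per prompt per platform, the upper end of the per-prompt estimate from Step 3; it ignores logging and context-switching time, so treat the result as a floor. Both function names are hypothetical.

```python
def weekly_tracking_hours(prompts: int, platforms: int,
                          seconds_per_check: float = 45) -> float:
    """Estimated hours per week spent evaluating responses."""
    return prompts * platforms * seconds_per_check / 3600


def should_consider_paid_tool(prompts: int, platforms: int,
                              seconds_per_check: float = 45,
                              threshold_hours: float = 5.0) -> bool:
    """True once the estimate passes the upgrade threshold."""
    return weekly_tracking_hours(prompts, platforms, seconds_per_check) > threshold_hours


startup = should_consider_paid_tool(prompts=25, platforms=4)      # small library
enterprise = should_consider_paid_tool(prompts=200, platforms=6)  # enterprise scale
```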

Interpreting Citation Frequency Trends

Raw citation rate numbers mean less than their trend direction. Three patterns to watch.

Pattern 1: Rising citation rate

If citation frequency is climbing 2-5 percentage points per month, your optimization work is compounding. Typically driven by:

Content refresh cycles adding freshness signals

Answer capsule retrofits on existing pages

Schema markup updates across the site

New content earning citations on emerging queries

Continue the current strategy. Don't change tactics when the trend is working.

Pattern 2: Flat citation rate

If citation frequency stays within a 2-point band for 8+ weeks, your current work isn't moving the needle. Possible causes:

Content structure isn't extractable (no answer capsules, weak fact density)

Platform mismatch (optimizing for ChatGPT while Bing indexing is broken)

Category saturation (competitors are all optimizing at equal pace)

Attribution issue (citations happening but misattributed to wrong domain)

Diagnostic steps: run a platform-by-platform audit, check your 10 most-cited and 10 least-cited pages structurally, and verify Bing indexing status.

Pattern 3: Falling citation rate

If citation frequency drops 5+ points over 4-8 weeks, something specific broke. Possible causes:

Content freshness lapsed (no refresh cycles in 6+ months)

Competitor launched a major content refresh that displaced your citations

Algorithm update changed platform weighting

Technical issue (robots.txt blocking AI crawlers, schema broken)

Immediate diagnostics: check robots.txt for AI crawler permissions (GPTBot, PerplexityBot, ClaudeBot, Google-Extended), verify schema markup is still rendering, and audit competitor content in your category for major recent updates.
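The robots.txt check can be done with Python's standard library. This sketch parses robots.txt text you have already downloaded (no network call) and reports which of the four user-agent tokens named above are blocked from a given path. The sample file is invented for illustration.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, path: str = "/") -> list[str]:
    """Return the AI crawler tokens that robots.txt disallows for `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, path)]


sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
blocked = blocked_ai_crawlers(sample)
# blocked == ["GPTBot"]
```

Run it against your real robots.txt for a few representative paths (home page, blog, docs), since a rule can block one section while leaving the rest open.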

For the full diagnostic framework including when to restructure your prompt library vs. when the problem is platform-side, see The Complete Guide to AI Visibility for B2B SaaS.
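The three patterns in this section can be turned into a rough classifier over the last eight weekly readings. The thresholds are my reading of the text: "flat" is a band of 2 points or less, "falling" is a drop of 5+ points, and "rising" is a gain of 4+ points (about 2 points per month over two months). Comparing only the first and last week is sensitive to noise, so this is a sketch for triage, not a statistical test.

```python
def classify_trend(weekly_rates: list[float]) -> str:
    """Classify the last 8 weekly citation rates (in percentage points)."""
    if len(weekly_rates) < 8:
        return "insufficient data"
    window = weekly_rates[-8:]
    # Pattern 2: every reading stays within a 2-point band.
    if max(window) - min(window) <= 2:
        return "flat"
    change = window[-1] - window[0]
    # Pattern 3: a drop of 5 or more points across the window.
    if change <= -5:
        return "falling"
    # Pattern 1: roughly 2+ points per month, sustained for two months.
    if change >= 4:
        return "rising"
    return "mixed"


trend = classify_trend([5, 6, 6, 7, 8, 8, 9, 10])
# trend == "rising"
```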

Common Citation Tracking Mistakes

Six measurement mistakes that produce misleading citation data.

Mistake 1: Running different prompts each week

Teams rotate prompts thinking it gives broader coverage. Actually it destroys longitudinal comparability. You can't tell if citation rate changed because of your work or because of different prompts. Fix: lock your prompt library for at least 8 weeks before any updates.

Mistake 2: Not running across multiple platforms

Teams measure only ChatGPT and miss that they're being heavily cited on Perplexity. Or vice versa. Fix: run every prompt across ChatGPT, Perplexity, and Google AI Mode at a minimum.

Mistake 3: Confusing brand mentions with citations

An AI saying "tools like [your brand]..." is a mention, not a citation. A response with a clickable link to your domain is a citation. Teams conflate these and report inflated "AI visibility" numbers. Fix: log mentions and citations in separate columns — they measure different things.

Mistake 4: Ignoring platform-specific failures

Citation rate averaged across platforms hides that you're 30% on Perplexity and 0% on ChatGPT. The blended average looks fine while ChatGPT (with 800M weekly users) is treating you as invisible. Fix: report citation rate per platform, not just blended.

Mistake 5: Running prompts without web search enabled

ChatGPT and Claude can run in "no web search" mode where they pull only from training data. These responses won't cite your recent content. Fix: always enable web search / browsing before running prompts for citation tracking.

Mistake 6: Declaring victory or defeat at week 2

Citation rates are volatile. A single week's reading can swing 10+ points because AI engines return different sources for the same prompt from run to run. Fix: measure for 8 weeks before drawing any conclusion about trend direction.

How to Improve Citation Frequency When It's Stuck

If your citation rate has been flat for 8+ weeks, here's the prioritized intervention list.

Highest-impact: Answer capsules

Add 40-60 word self-contained answer capsules directly under every H2 on your top 10 highest-impression pages. 72.4% of ChatGPT-cited pages contain answer capsules. This is typically the single largest citation-rate improvement available.
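A quick audit script can flag H2 sections whose opening paragraph falls outside the 40-60 word range. This sketch assumes your page source is available as Markdown with `## ` headings (an assumption about your CMS export, not something this guide specifies) and treats the first paragraph under each H2 as the candidate capsule.

```python
import re


def audit_answer_capsules(markdown: str, low: int = 40, high: int = 60) -> dict[str, int]:
    """Map each H2 heading to its first paragraph's word count
    when that count is outside the low-high range."""
    failures = {}
    # Split on lines starting with "## " (H2 only; "### " does not match).
    sections = re.split(r"^## +", markdown, flags=re.MULTILINE)[1:]
    for section in sections:
        heading, _, body = section.partition("\n")
        paragraphs = [p for p in body.split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        words = len(first.split())
        if not low <= words <= high:
            failures[heading.strip()] = words
    return failures


page = (
    "# Title\n\n"
    "## What is X?\n\n" + " ".join(["word"] * 45) + "\n\nMore text.\n\n"
    "## Short one\n\nToo short.\n"
)
problems = audit_answer_capsules(page)
# problems == {"Short one": 2}
```

Word count is only a proxy: a 50-word paragraph still has to answer the heading's question on its own to work as a capsule.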

High-impact: Content freshness cycles

Refresh dates, statistics, and sources on pages older than 12 months. Content updated within the past 12 months earns 3.2x more citations on Perplexity specifically. Don't fake freshness — genuine updates required.
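Finding refresh candidates is a simple date filter. The sketch below assumes you can export each page's last genuine update date (from your CMS or sitemap) into a dictionary; the URLs and dates are made up.

```python
from datetime import date, timedelta


def stale_pages(last_updated: dict[str, date], today: date,
                max_age_days: int = 365) -> list[str]:
    """Return URLs whose last update is older than max_age_days, sorted."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


pages = {
    "/blog/ai-citation-tracking": date(2025, 9, 1),
    "/blog/old-seo-guide": date(2024, 1, 1),
}
to_refresh = stale_pages(pages, today=date(2025, 12, 1))
# to_refresh == ["/blog/old-seo-guide"]
```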

High-impact: Schema markup

Add FAQPage, Article, and Organization schema to pages that don't have it. Structured data improvements typically show measurable citation impact within 14-21 days on Google AI Mode and AI Overviews.
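As an illustration of the FAQPage case, the sketch below builds the JSON-LD from question/answer pairs using the schema.org vocabulary (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`). The helper name and the sample question are illustrative only.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    ("What is a citation in AI search?",
     "A citation is the attribution of an answer to a specific source."),
])
```

The resulting string goes inside a `<script type="application/ld+json">` tag in the page head, and the questions and answers should match the FAQ text visible on the page.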

Medium-impact: Fact density

Increase cited statistics to at least 1 fact per 80 words of body content. Content with 3+ statistics per 300 words achieves 2.1x higher citation rates than sections with zero statistics.
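Fact density can be approximated with a crude count. The heuristic below treats any whitespace-separated token containing a digit as a statistic, which over-counts years, version numbers, and list markers, so use it to rank pages against each other, not as an exact score.

```python
def fact_density(text: str) -> float:
    """Approximate statistics per 300 words of body text."""
    words = text.split()
    if not words:
        return 0.0
    # Heuristic: a token with at least one digit counts as a statistic.
    stats = sum(1 for word in words if any(ch.isdigit() for ch in word))
    return stats * 300 / len(words)


sample = " ".join(["filler"] * 297 + ["87%", "3.2x", "11%"])
density = fact_density(sample)
# density == 3.0, which meets the 3-per-300-words benchmark
```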

Medium-impact: Bing indexing

For ChatGPT specifically, verify your entire site is indexed in Bing Webmaster Tools. Teams that focus only on Google often have Bing coverage gaps that kill ChatGPT citation rate.

Lower-impact (longer timeline): Source diversity

Pursue mentions and links on G2, Capterra, Reddit, industry publications, and earned media. Brands cited across 4+ different domain types are 78% more likely to maintain consistent AI visibility.

Content Engine Integration

Running citation tracking manually is the right starting point. Manual measurement forces you to understand the signal. But at the 50+ prompt scale or multi-platform tracking cadence, the process becomes workflow overhead that eats editorial time.

A content engine builds citation tracking into the production workflow:

GEO scoring on every draft evaluates structural citation-eligibility (answer capsules, fact density, schema presence) before publishing — pages ship already optimized

Freshness monitoring flags pages older than 9 months for refresh cycles automatically

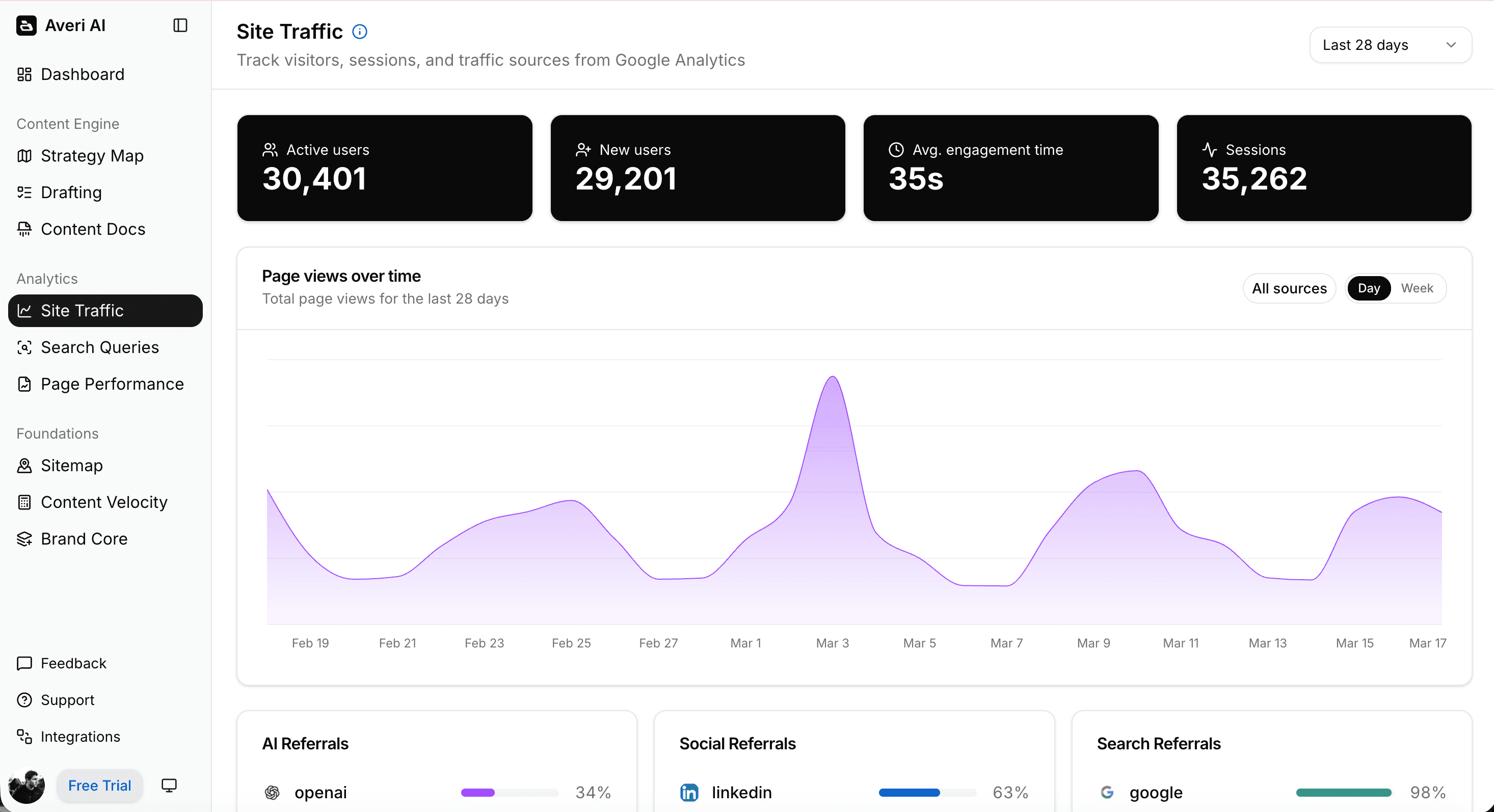

Citation rate dashboards integrate the measurement output with content production decisions — you see which pages are earning citations and which aren't

AI-referred traffic attribution connects citation rate to downstream conversion and pipeline (see Attribution for AI-Referred Traffic)

The result: the 90-minute weekly measurement ritual becomes a 20-minute review of what the engine already tracked, and optimization priorities become clear from the data rather than requiring separate analysis.

For the broader operational framework, see The Weekly AI Visibility Report Template.

FAQs

What is a citation in AI search?

A citation is the attribution of an answer to a specific source by an AI engine, typically shown with a clickable link or domain reference in the response. Citations are different from brand mentions (where the AI names a brand without attribution) and from brand references (where the AI describes a company without citing its content). Citations are the strongest signal of content authority because they require the AI to have indexed your content, retrieved it, judged it authoritative, and included it in the response.

What's a good citation frequency for a B2B SaaS startup?

Stage-dependent. Pre-seed companies with minimal content typically score 0-2%. Seed-stage with 3-12 months of content see 2-8%. Series A companies hit 8-20%. Series B+ companies with 3+ years of content run 20-35%. Category leaders achieve 35-50%. Focus on trend direction rather than absolute number — a startup growing from 3% to 9% in a quarter is winning.

How is citation frequency different from brand mention rate?

Citation frequency tracks when AI engines cite your specific content as a source (typically with a clickable link). Brand mention rate tracks when AI engines name your brand at all, with or without attribution. Both are useful but measure different things — citations reflect content authority and structural quality, while mentions reflect brand recognition and category presence.

Which AI engines should I track citation rate for?

At minimum: ChatGPT, Perplexity, and Google AI Mode. These three cover the vast majority of B2B research queries. Add Claude if your category skews technical or analytical. Don't rely on a blended rate alone: report each platform separately because the numbers will differ substantially. Only 11% of sites are cited by both ChatGPT and Perplexity, so single-platform tracking misses 60-80% of the picture.

How often should I measure citation frequency?

Weekly is the minimum viable cadence for tracking trends accurately. Daily produces noise. Monthly misses early optimization signals. The ideal cadence: 25-50 prompts across 3-4 platforms, run once a week in about one focused hour. Anything less frequent means you won't catch optimization impacts until 4-8 weeks after they happen.

Why is my citation rate different on ChatGPT vs. Perplexity?

Each AI engine runs its own retrieval system, index, and ranking algorithm. ChatGPT pulls primarily from Bing's index, Perplexity uses its own proprietary crawler with heavy freshness weighting, Claude uses structured precision retrieval, and Google AI Mode uses Google's index with E-E-A-T signals. Your content structure might match one engine's preferences and miss another's. Platform-specific optimization is necessary — treating all engines as one target guarantees mediocre results across all four.

When should I upgrade from manual to paid citation tracking?

When manual tracking exceeds 5 hours per week. That's typically the inflection point where automation pays back its subscription cost. For most B2B SaaS startups under $10M ARR with 25-50 prompts across 4 platforms, the manual spreadsheet method produces the same strategic insight at 1/20th the cost of enterprise platforms. Upgrade to Otterly ($99/month entry) or Profound ($299/month mid-tier) once you're spending more time measuring than optimizing.

Related Resources

Core AI Visibility Framework

The Complete Guide to AI Visibility for B2B SaaS — the pillar this piece sits under

Brand Visibility Score: The Only AI Search Metric That Actually Matters

AI Share of Voice: The Competitive Metric Most SaaS Teams Aren't Tracking Yet

Measurement Operations

How to Build an AI Visibility Prompt Library (25-50 Prompts That Actually Matter)

Attribution for AI-Referred Traffic: Fixing the "Direct Traffic" Problem in GA4

The Weekly AI Visibility Report: A 90-Minute Template for Startup Teams

SEO Visibility vs. AI Visibility: The Two Metrics Every B2B SaaS Needs in 2026

Platform-Specific Optimization

ChatGPT vs. Perplexity vs. Google AI Mode: The B2B SaaS Citation Benchmarks

Google AI Overviews Optimization: How to Get Featured in 2026

Citation Optimization Tactics

The GEO Playbook 2026: Getting Cited by LLMs, Not Just Ranked by Google

Building Citation-Worthy Content: Making Your Brand a Data Source for LLMs

Schema Markup for AI Citations: The Technical Implementation Guide