We Get 37 Visitors a Day From AI Chatbots. Here's What They're Pulling.

TL;DR:

📈 AI chatbots sent us 37 visitors in a single day on March 31. Two weeks earlier, weekends were sitting at 14. The growth is real, and the trajectory is up

🔍 Perplexity sends far fewer visitors than ChatGPT or Gemini, but those visitors browse 5+ pages per session. Four people generated 22 pageviews. The intent behind a Perplexity referral appears to be a different thing entirely

📊 LLMs consistently surface our interactive scoring tool, our GEO guides, and our benchmark report. Opinion content rarely shows up. The pattern is tools, structure, data

🔄 Our guide about getting cited by AI search engines is itself getting cited by AI search engines. We are genuinely unsure what to make of this

📉 AI referrals are 3-4% of daily traffic. That sounds like background noise until you learn that, in Ahrefs' data, the 0.5% of visitors who arrived from AI sources accounted for 12.1% of signups. A small channel with high intent beats a large channel with shrinking clicks

Zach Chmael

CMO, Averi

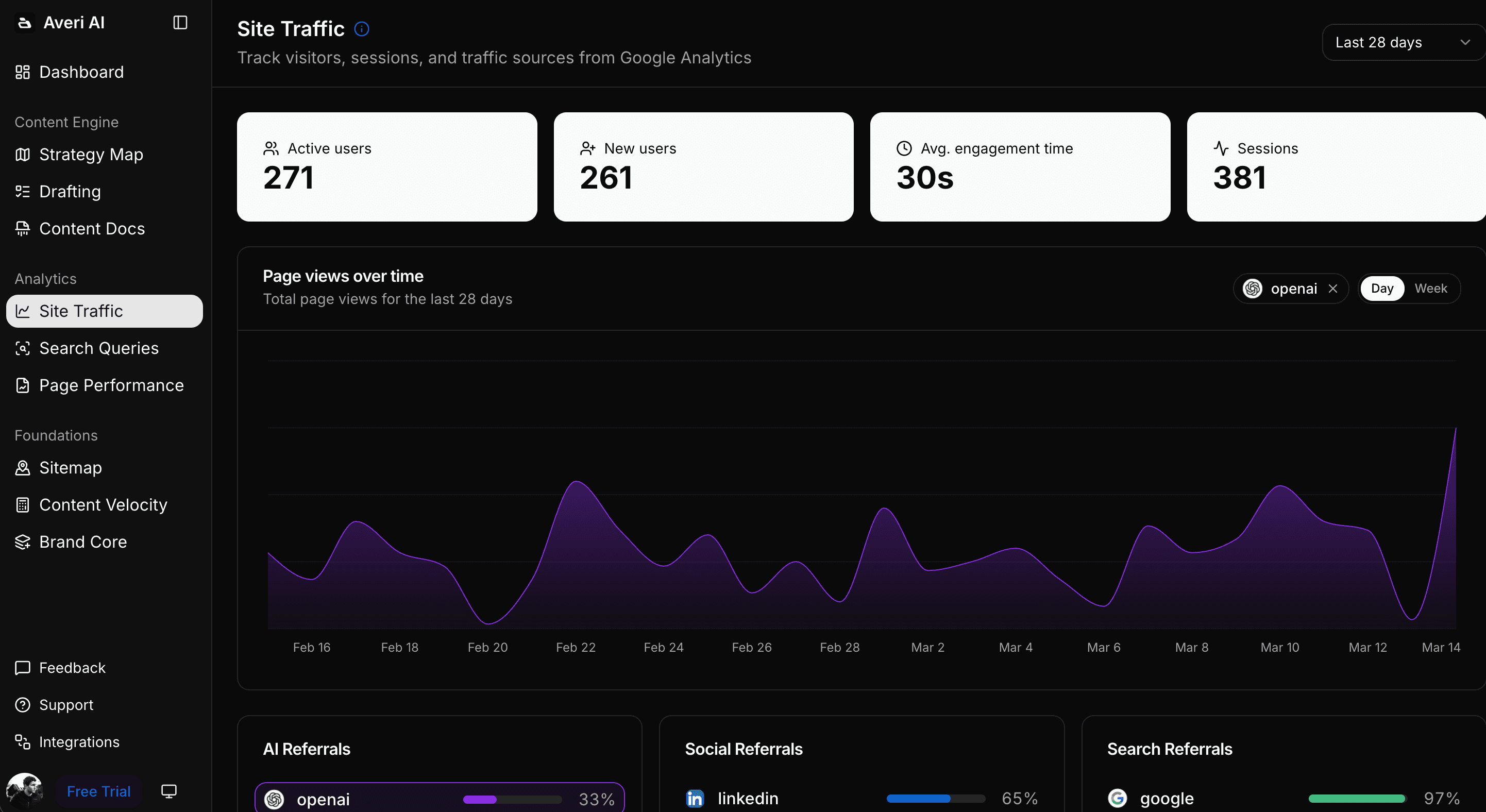

We started paying close attention to AI chatbot referrals in August 2025.

Not for any strategic reason, honestly. Fathom Analytics started showing referral sources we didn't immediately recognize, and when we dug in, they were ChatGPT and Gemini. Small numbers at first. Easy to dismiss.

Then they weren't small anymore.

We now get 25-37 AI referrals per day across ChatGPT, Gemini, Perplexity, Claude.ai, and Copilot.

That's 3-4% of daily traffic on a site that runs around 700-900 visitors per day total.

On March 31, AI sources sent us 37 people in a single day. Two weeks before that, a typical weekend was 14-20.

This post is what we're actually seeing: the source breakdown, the engagement gaps between platforms, which pages keep getting cited, and some things we noticed that we can't fully explain yet.

The Breakdown by Source

Not all AI referrers behave the same way, and the differences matter more than the volume numbers suggest.

ChatGPT sends us 8-11 visitors per day on average. Moderate pageviews per visit. The most consistent source by far, showing up every day without much variance.

Gemini sends 7-13 visitors per day and outpaces ChatGPT on certain days. There's more day-to-day swing. Some days it leads everything, other days it falls off. We don't have a clean explanation for why yet.

Perplexity sends 3-8 visitors per day, which sounds modest next to the other two. It's not. We'll get into the engagement numbers below.

Claude sends 2-4 visitors per day, consistent but low volume.

Copilot and Bing together range from 0 to 5, with some days showing nothing at all.

The week-over-week trajectory is the part worth watching.

Sunday March 22: 14 AI visitors total.

Monday March 23: 25 visitors, up 79% in 24 hours.

Thursday March 26: 24 visitors.

The weekend of March 28-29: roughly 19-20 each day.

Tuesday March 31: 37 visitors, the peak so far.

Thursday April 2: 31 visitors.

We don't know if this keeps climbing or if we're watching an early-adopter spike that levels off.

Right now the direction is up. That's as much as we can say honestly.
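For anyone who wants to check the arithmetic behind the trajectory, here is a minimal sketch using the daily totals quoted above. The function name `pct_change` and the list layout are illustrative choices, not part of any analytics tool. The weekend of March 28-29 is left out because the post gives only a rough range for it.

```python
def pct_change(old, new):
    """Percentage change from old to new."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100

# Daily AI-referral totals quoted in this post, in date order.
# (The March 28-29 weekend is omitted: the post gives only "roughly 19-20".)
daily_totals = [
    ("Sun Mar 22", 14),
    ("Mon Mar 23", 25),
    ("Thu Mar 26", 24),
    ("Tue Mar 31", 37),
    ("Thu Apr 2", 31),
]

# Compare every later day against the first day in the list.
first_label, first_count = daily_totals[0]
for label, count in daily_totals[1:]:
    change = pct_change(first_count, count)
    print(f"{label}: {count} visitors ({change:+.0f}% vs {first_label})")
```

The first line of output reproduces the 79% jump from Sunday to Monday. With this few data points, the percentages describe direction rather than a trend you could forecast from.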

Perplexity's Engagement Ratio Doesn't Make Sense at First

Here's the number that made us stop and look twice.

On a day when Perplexity sent us 4 visitors, those 4 people generated 22 pageviews. That's roughly 5.5 pages per person.

For comparison, most referral traffic sits somewhere around 1.5-2 pageviews per visitor.

Perplexity's ratio doesn't look like a one-off data artifact. We've seen it repeat.

Our best guess is that Perplexity users arrive already mid-research. Perplexity cited us in an answer, they clicked through ready to actually read something, and then they kept reading.

ChatGPT and Gemini seem to send more casual traffic. People land, scan a page, move on. Which is fine. But the intent behind a Perplexity referral appears to be qualitatively different, not just quantitatively smaller.

Whether that ratio holds at higher volumes or falls apart, we can't say yet. The sample size is small. But four people generating 22 pageviews is not nothing, and we've seen the pattern repeat enough times that we're paying attention to it.
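The engagement ratio is simple enough to verify by hand, but a short sketch makes the comparison with the 1.5-2 baseline explicit. The helper name `pages_per_visitor` is hypothetical. The inputs are the figures quoted above.

```python
def pages_per_visitor(pageviews, visitors):
    """Average pageviews per visitor for one referral source."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return pageviews / visitors

# Figures from this post: 4 Perplexity visitors, 22 pageviews.
perplexity_ratio = pages_per_visitor(22, 4)

# Typical referral baseline quoted in this post.
baseline_low, baseline_high = 1.5, 2.0

print(perplexity_ratio)                            # 5.5
print(round(perplexity_ratio / baseline_high, 2))  # 2.75
print(round(perplexity_ratio / baseline_low, 2))   # 3.67
```

On that one day, Perplexity visitors viewed roughly 2.75 to 3.67 times as many pages as a typical referral visitor. With four visitors in the sample, a single heavy reader could move the ratio a lot.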

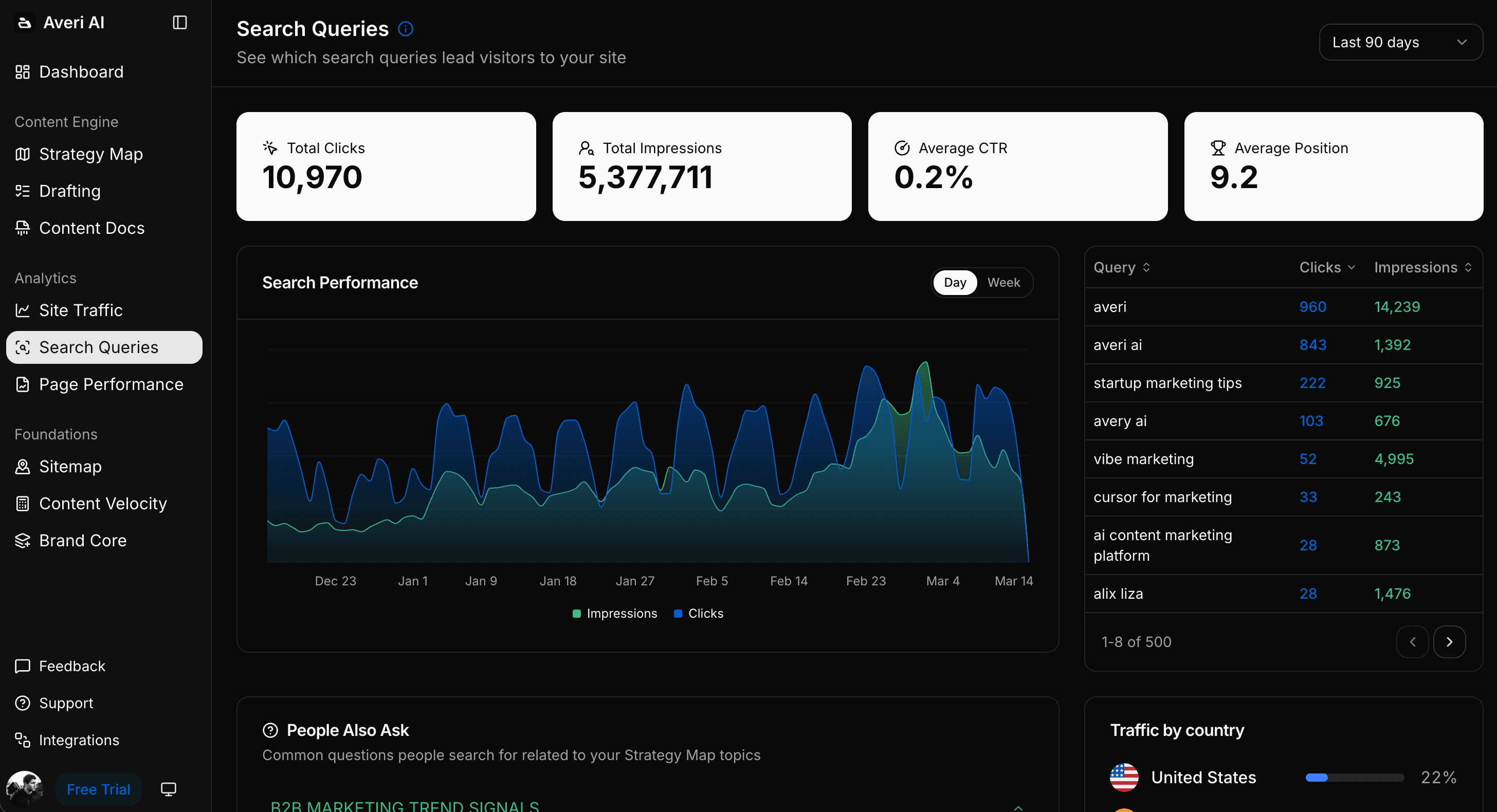

What Content AI Is Actually Surfacing

We have 300+ published pages. LLMs are not pulling randomly from all of them.

The pages that consistently show up in AI referral data:

Our /widget/content-engine-score interactive tool. This scores your content against AI citation factors, and it seems to get referenced in answers about content quality and GEO optimization. An interactive tool getting AI citations surprised us.

The homepage, mostly on brand queries.

Our guide on optimizing blog content for ChatGPT, Perplexity, and Gemini.

Our how-to on tracking AI citations and measuring GEO success.

Our 2026 AI content marketing benchmarks report.

What's not on this list: the vast majority of our blog posts. Even ones with solid Google traffic.

The AI-cited pages skew toward tools, structured guides, and data reports. The format matters.

A January 2026 analysis posted on r/seogrowth, covering 1,400+ LLM citations, found that 77% of citations go to corporate websites and 75% go to listicle-style content.

YouTube gets cited 0.3% of the time. Opinion posts and general thought leadership, at least in our data, don't show up in AI referrals much at all.

The takeaway aligns with what we've been writing about in our GEO playbook: AI systems cite content that's structured for extraction, rich in specific data, and organized around clear questions and answers.

They don't cite content that's interesting to read but hard to pull a fact from.

The Part We Still Don't Fully Understand

One of our consistently AI-cited pages is a guide titled "What LLMs pull from your website (and what they ignore)" at /guides/llms-pull-from-website-ignore.

That guide is about how to get cited by AI search engines. AI search engines are citing it.

There are a few ways to read this.

Maybe LLMs pull content that's semantically well-structured and answers specific questions clearly, and we wrote that guide with those properties in mind. Maybe LLMs are slightly more likely to reference content that's literally about them.

Maybe both. We don't actually know.

What we do know: we wrote that page explicitly thinking about GEO principles, and it's getting GEO traffic.

We're not ready to declare a playbook from a single data point, but it's a better outcome than we expected.

Why 3-4% of Traffic Might Matter More Than It Looks

On paper, 3-4% of daily traffic sounds like background noise. Google sends us 150-200 visitors per day. Direct and unknown traffic accounts for 50-57% of everything. AI referrals are a rounding error by raw volume.

But there's an intent argument worth taking seriously.

Ahrefs published a study on AI referral traffic that found the 0.5% of visitors who arrived via AI sources accounted for 12.1% of signups.

For a channel that small, that is a large gap in conversion rate.

Someone who arrived because an AI tool told them "this site answers your question" is in a different mental state than someone who clicked a result mid-browse.

The context around this is also worth being honest about.

SparkToro has been tracking the decline in Google click-through rates for years, and Rand Fishkin's zero-click search data tells a consistent story: the pool of people who actually click a search result is shrinking.

AI Overviews answer questions without anyone clicking anywhere. Featured snippets eat clicks before they happen. The Google referral pie is getting smaller, not bigger.

So the comparison we should probably be making isn't "AI referrals vs. all of Google."

It's more like: a small and growing channel with measurably higher intent, versus a large but increasingly click-averse one. That framing changes the calculus.

We're not pulling back from SEO.

Google's 150-200 daily referrals represent real traffic from real people, and that doesn't change overnight. But if the quality gap between AI referral visitors and organic visitors holds as we get more data, it changes how we'd prioritize content investment going forward.

What This Data Suggests About Content Strategy

The pattern in what AI systems cite vs. what they ignore has practical implications for how startups build their content libraries.

Structured, data-rich content gets cited. Our benchmark report and GEO guides show up consistently. Generic blog posts with opinions and advice don't. If you're producing content and want AI visibility, the structure and data density of each piece matters as much as the topic.

Interactive tools get cited. This surprised us. The content-engine-score widget getting AI referrals suggests that LLMs can reference tools, not just articles. If you have calculators, scoring tools, or diagnostic widgets on your site, they may be earning citations you're not tracking.

Content about AI gets cited by AI. This could be a coincidence or a genuine pattern. Either way, content that explicitly addresses how AI systems work and what they look for appears to perform well in AI referrals. Writing about the system may help the system find you.

E-E-A-T signals seem to matter for AI citation too. The pages getting cited have named authorship, specific data with attribution, and clear expertise signals. The pages not getting cited tend to be more generic, unsigned, and opinion-driven.

This tracks with what we've written about GEO optimization: AI systems use credibility signals as citation filters.

What We're Still Trying to Figure Out

We can't yet see which queries generated our AI citations. Search Console gives us keyword-level data for Google. AI referrers don't.

We know Perplexity sent someone to our site, but we have no idea what they asked Perplexity.

That's a real gap in the data, and there's no clean workaround right now without significant software investment.

We also don't yet know whether AI referral visitors convert at higher rates than organic visitors on our site specifically.

The Ahrefs study points at something real, but we need our own conversion data over a longer window before we'd make budget decisions based on it.

We're collecting that data now.

The source-level variance is also going to get more complicated. Superlines published data showing a 615x citation volume difference between Grok and Claude for certain content types.

Six hundred and fifteen times.

That number is so large it suggests we're not really talking about one "AI search" channel. We're talking about multiple very different systems that happen to all be described as chatbots. Treating them as a single category probably breaks down at some point.

We'll post updates when the data develops further. If you're tracking AI referrals on your own site and seeing patterns that contradict what we've described here, we'd actually want to hear about it.

How Averi Helps You Earn AI Citations

The patterns in our own data are the same patterns we've built into Averi's content engine.

SEO + GEO Optimization structures every article for the signals AI systems use to select citation sources: answer-first formatting, attributed statistics, FAQ sections with schema markup, and the semantic clarity that makes content extractable. The pages on our own site that earn AI citations were built using these principles.

Content Scoring evaluates your content against the same citation factors before you publish. Our content-engine-score widget (one of the pages AI keeps citing, incidentally) is a public version of the diagnostic that runs inside the platform.

Analytics tracks AI referral traffic alongside traditional organic, so you see which pages are earning citations and which are invisible to AI systems. The 3-4% of traffic we described in this post? We spotted it because the analytics surface it rather than burying it in a separate tab.

The data in this post is early and we said so. But the pattern is clear enough that building for AI citation now, before it's the obvious thing everyone's doing, is the move.

FAQs

How many visitors do AI chatbots send to websites?

It varies by site and content type. Our site (700-900 total daily visitors) receives 25-37 AI referrals per day across ChatGPT, Gemini, Perplexity, Claude.ai, and Copilot. That's 3-4% of daily traffic. The number has been growing week over week since we started tracking in February 2026, with a high of 37 on March 31.

Which AI chatbot sends the most referral traffic?

In our data, ChatGPT and Gemini are roughly tied: ChatGPT sends 8-11 visitors per day and Gemini 7-13. ChatGPT is more consistent day to day. Gemini has wider swings. Perplexity sends fewer visitors (3-8/day) but with dramatically higher engagement. Each platform behaves differently, which suggests optimizing for "AI search" as a single channel may be the wrong frame.

Why does Perplexity referral traffic have higher engagement?

Our best explanation: Perplexity users arrive mid-research with high intent. Perplexity cited a specific page, the user clicked expecting depth, and they kept reading. The result is 5+ pageviews per session vs. the 1.5-2 average from other referrers. This is consistent with what Ahrefs found about AI-referred visitors converting at higher rates than organic visitors.

What type of content do AI chatbots cite most?

In our data: interactive tools, structured guides, benchmark reports, and data-rich how-to content. Blog posts with opinions, general thought leadership, and content without specific data rarely appear in AI referrals. The format that works: clear structure, attributed statistics, answer-density, and schema markup.
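One widely used form of schema markup for question-and-answer content is schema.org's FAQPage type, expressed as JSON-LD. The sketch below builds that structure from question/answer pairs. The function name `faq_jsonld` and the sample pair are illustrative, and nothing here shows that any particular AI system reads the markup.

```python
import json

def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }

# Sample pair taken from this post's own FAQ section.
faqs = [
    (
        "What type of content do AI chatbots cite most?",
        "In our data: interactive tools, structured guides, "
        "benchmark reports, and data-rich how-to content.",
    ),
]

markup = faq_jsonld(faqs)
print(json.dumps(markup, indent=2))
```

The printed JSON would normally go inside a `<script type="application/ld+json">` tag in the page's HTML.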

How do I track AI chatbot referrals on my own site?

Check your analytics platform for referral sources including chatgpt.com, gemini.google.com, perplexity.ai, claude.ai, and copilot.microsoft.com. Fathom Analytics surfaces these natively. In Google Analytics 4, filter the referral report by these domains. Averi's Analytics dashboard tracks AI referral traffic alongside traditional organic so you see both channels in one view.
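If your analytics tool only gives you raw referrer URLs, a small script can bucket them by AI source. This is a minimal sketch that assumes you can export referrers as a list of URLs. The domain list is the one named above, and the function names and sample rows are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

# Referrer domains named in this post, mapped to a display label.
AI_REFERRER_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}

def classify_referrer(referrer_url):
    """Return the AI source label for a referrer URL, or None if no match."""
    # URLs without a scheme have no hostname and fall through to None.
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, label in AI_REFERRER_DOMAINS.items():
        # Match the domain itself or any subdomain (e.g. www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return label
    return None

def count_ai_referrals(referrer_urls):
    """Count visits per AI source, ignoring non-AI referrers."""
    labels = (classify_referrer(url) for url in referrer_urls)
    return Counter(label for label in labels if label is not None)

# Hypothetical rows standing in for an analytics export.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=example",
    "https://www.google.com/",
    "https://gemini.google.com/app",
    "https://chatgpt.com/c/abc",
]
print(count_ai_referrals(sample))
```

Matching on the hostname rather than on a substring keeps ordinary Google search referrals out of the Gemini bucket.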

Is AI referral traffic worth optimizing for?

The volume is small (3-4% of our traffic) but the intent signal is strong. Ahrefs data shows the 0.5% of visitors who arrived from AI sources accounting for 12.1% of signups. If that conversion differential holds, the per-visitor value of AI traffic is significantly higher than organic. Meanwhile, Google CTRs are declining as AI Overviews absorb more clicks. Optimizing for AI citations now is building for where discovery is heading.

How do I get my content cited by AI chatbots?

Structure content for extraction: answer-first formatting, 40-60 word answer blocks after each heading, FAQ sections with schema markup, statistics with named source attribution, and strong E-E-A-T signals. Publish data-rich content (benchmarks, original research, tools) rather than opinion-driven posts. Ensure your robots.txt allows AI crawlers (GPTBot, ClaudeBot, PerplexityBot).
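To check the robots.txt point, Python's standard-library `urllib.robotparser` can tell you whether a given user agent may fetch a URL. The sketch below parses robots.txt text you supply, so it runs without a network call. The example rules and the example.com URL are hypothetical, and the crawler names are the three listed above.

```python
from urllib.robotparser import RobotFileParser

# AI crawler user agents named in this post.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

def blocked_ai_crawlers(robots_txt, page_url):
    """Return the AI crawlers that robots_txt disallows from page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, page_url)]

# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
example_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_ai_crawlers(example_robots, "https://example.com/guides/some-page")
print(blocked)  # ['GPTBot']
```

To test a live site, paste the contents of its robots.txt into the function. An empty list means none of the three crawlers is blocked for that URL.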

Related Resources

The GEO Playbook 2026: Getting Cited by LLMs (Not Just Ranked by Google)

Beyond Google: How to Get Your Startup Cited by ChatGPT, Perplexity, and AI Search

Building Citation-Worthy Content: Making Your Brand a Data Source for LLMs

How to Optimize Blog Content for ChatGPT, Perplexity, and Gemini

Platform-Specific GEO: How to Optimize for ChatGPT vs. Perplexity vs. Google AI Mode

Schema Markup for AI Citations: The Technical Implementation Guide