SEO Visibility vs. AI Visibility: The Two Metrics Every B2B SaaS Needs in 2026

TL;DR

🎯 SEO visibility measures ranking position, impressions, clicks, and CTR on traditional search results. AI visibility measures citations, brand mentions, placement, and share of voice inside AI-generated answers. They're related disciplines measuring different buyer-journey paths.

⚖️ 7 key differences: measurement unit, buyer evaluation surface, feedback speed, what gets rewarded, competitive structure, attribution clarity, and conversion dynamics. Ignoring any of these produces misleading conclusions.

🔀 Weak correlation between the two. Only 11% of sites are cited by both ChatGPT and Perplexity simultaneously. Strong SEO rankings don't predict AI visibility, and vice versa. 47% of AI Overview citations come from pages ranking below position 5 organically.

📊 Both need to be tracked in 2026. 75% of search queries still go through traditional search results. 25%+ now go through AI-generated answers. Measuring only one produces incomplete data. Measuring both produces the full buyer-journey picture.

✅ Dual-metric reporting: lead executive reports with AI visibility metrics (fastest-growing buyer-journey slice), retain SEO metrics as supporting indicators (largest overall search volume), and flag queries where the two metrics diverge as optimization opportunities.

Zach Chmael

CMO, Averi

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."

Your top page ranks #3 for its primary keyword. Organic impressions up 18% year-over-year. CTR looks steady at 2.4%. By every traditional SEO measure, that page is performing.

Now run the same topic through ChatGPT, Perplexity, and Google AI Mode. Your brand isn't cited. Three competitors appear in the AI response instead.

Both things are true simultaneously.

Your SEO visibility is healthy. Your AI visibility is broken.

And the buyer journey has split in a way that makes each metric measure a different half of your real reach.

This is the core confusion facing B2B SaaS measurement in 2026.

Teams treat SEO and AI visibility as interchangeable (they're not). They report only one (which hides half the picture). They declare either SEO is dead (overstated) or AI visibility is noise (also wrong).

What's actually happening is that two related-but-distinct disciplines now both need to be tracked — because the buyer journey routes through both traditional search results and AI-generated answers, and a brand can thrive in one while being invisible in the other.

This piece is the clear comparison. Seven specific differences between SEO visibility and AI visibility. When each metric tells you the truth and when each one lies. How to report on both without your dashboard becoming noise. Which shared foundations both disciplines rely on. Common mistakes teams make in trying to unify the measurement stack.

For the full AI visibility framework, see The Complete Guide to AI Visibility for B2B SaaS.

What Is SEO Visibility vs. AI Visibility?

SEO visibility and AI visibility are two distinct measurement disciplines tracking different slices of the same buyer journey.

SEO visibility measures presence in traditional search engine results — ranking position, organic impressions, CTR, clicks — where buyers evaluate results across a page of 10+ links.

AI visibility measures presence inside AI-generated answers from ChatGPT, Perplexity, Claude, and Google AI Mode — citation frequency, brand mentions, placement, and share of voice — where buyers receive a synthesized response that names 3-6 brands.

Both disciplines share foundational principles (quality content, E-E-A-T, technical optimization) but reward structurally different signals. Most B2B SaaS companies need to measure both because the buyer journey routes through both channels.

The key insight: neither metric replaces the other.

A brand with excellent SEO visibility can be invisible in AI answers.

A brand winning AI citations can have middling organic rankings.

The disciplines overlap partially, diverge frequently, and produce contradictory signals often enough that teams tracking only one produce structurally incomplete reports.

The 7 Key Differences

Side-by-side comparison of what each discipline measures, rewards, and produces.

| Dimension | SEO Visibility | AI Visibility |
|---|---|---|
| Primary measurement unit | Ranking position, impressions, clicks, CTR | Citation frequency, brand mentions, placement, share of voice |
| Buyer evaluates | 10-position search results page | Synthesized answer naming 3-6 brands |
| Feedback loop speed | 6-12 weeks for rank changes | 2-4 weeks (Perplexity, Google AI Mode), 6-12 weeks (ChatGPT) |
| Primary reward signals | Backlink authority, keyword relevance, domain authority | Structural extractability, fact density, freshness, answer capsules |
| Competitive structure | Gradient (position 3 beats position 5) | Binary (in the response or not in the response) |
| Attribution clarity | Clear click source, UTM-trackable | Messy: referrers often stripped, 30-50% of AI-referred traffic misclassified as Direct in GA4 |
| Conversion dynamics | Organic visitors convert at ~2-4% for B2B SaaS | AI-referred visitors convert at ~14% |

Each of these differences has practical implications for measurement and strategy.

Difference 1: Measurement unit

SEO counts clicks. AI visibility counts citations and mentions. The units aren't interchangeable: a citation in ChatGPT can build downstream brand awareness even when it doesn't produce a click, while a click from Google produces an immediate visitor but not necessarily a lasting brand impression. Reporting one as a substitute for the other distorts both.

Difference 2: Buyer evaluation surface

SEO buyers evaluate a page of 10+ results and choose which to click. AI buyers evaluate a synthesized 3-6 brand answer and decide which 1-3 to research further. The AI-mediated evaluation surface is narrower, more curated, and more decisive. Being mentioned in an AI response is closer in value to being position 1-3 on Google than to being position 5-10.

Difference 3: Feedback loop speed

SEO rankings take 6-12 weeks to shift after content changes due to crawl cycles and algorithm lag. Perplexity citation changes typically appear in 2-4 weeks due to its active crawler and freshness weighting. Google AI Mode also updates in 2-4 weeks. ChatGPT takes 6-12 weeks due to Bing-index dependency. This means AI visibility often provides faster optimization feedback than SEO on two of these three engines.

Difference 4: What gets rewarded

SEO rewards backlink authority, keyword relevance, domain strength, and technical optimization. AI visibility rewards structural extractability (answer capsules), fact density, content freshness, schema markup, and source diversity. The reward signals overlap partially (both benefit from E-E-A-T, technical cleanliness, and content quality) but diverge sharply on structural formatting. A page can rank #1 on Google while being structurally unextractable for AI engines.

Difference 5: Competitive structure

SEO is gradient — position 3 beats position 5 beats position 8 in a measurable way, and multiple brands coexist on each SERP. AI visibility is closer to binary — you're either in the 3-6 brand response or you aren't. This makes AI SOV more zero-sum than SEO, a dynamic explored in detail in AI Share of Voice: The Competitive Metric Most SaaS Teams Aren't Tracking Yet.

Difference 6: Attribution clarity

SEO attribution is clean — Google passes referrer data, UTM parameters work, GSC shows exact query matches. AI visibility attribution is messy — referrers strip frequently, mobile apps lose attribution entirely, and 30-50% of AI-referred traffic misclassifies as Direct in GA4. Clean reporting requires different setup for each.
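One way to start on the AI side of that setup is to label sessions by referrer hostname before they get lumped into Direct. The sketch below is illustrative only: `classify_referrer` is a hypothetical helper, not a GA4 feature, and the hostname list is an assumption that should be checked against your own referrer logs. Sessions whose referrer was stripped still land in the direct bucket, which is exactly the gap described above.

```python
from urllib.parse import urlparse

# Assumed hostnames for AI assistants; verify against your own referrer logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> str:
    """Return a channel label for a raw referrer URL (hypothetical helper)."""
    if not referrer:
        # A stripped referrer cannot be told apart from a true direct visit.
        return "Direct (possibly AI, referrer stripped)"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Check AI hosts first so gemini.google.com is not counted as Google search.
    if host in AI_REFERRER_HOSTS:
        return f"AI: {AI_REFERRER_HOSTS[host]}"
    if host == "google.com" or host.endswith(".google.com"):
        return "Google"
    return "Other referral"


if __name__ == "__main__":
    for ref in ["https://chatgpt.com/", "https://www.perplexity.ai/search?q=x", ""]:
        print(repr(ref), "->", classify_referrer(ref))
```

The same labels can then feed a custom channel group or a reporting spreadsheet, so AI-referred sessions are counted separately wherever the referrer survives.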

Difference 7: Conversion dynamics

Organic search traffic converts at typical B2B SaaS rates of 2-4%. AI-referred traffic converts at approximately 14%, roughly 5x the 2.8% Google organic rate. The quality gap reflects the difference in buyer intent: AI-referred visitors arrive pre-qualified by the AI's synthesis, while Google organic traffic includes high-funnel researchers alongside ready-to-buy prospects.

Signal Types Each Discipline Measures

The disciplines don't just differ in metrics — they measure structurally different buyer behavior.

SEO measures intent-expressed behavior

Every click on a Google organic result is an active choice. The user saw the title, snippet, and URL, and decided to click that result specifically over 9+ alternatives. SEO traffic is behavior data — the user made a decision.

AI visibility measures intent-influenced behavior

Most buyer exposure to AI responses doesn't produce a click. The buyer reads the synthesized answer, forms an impression of the 3-6 named brands, and may take action days or weeks later. AI visibility is influence data — the user absorbed the information, even if no measurable action followed immediately.

Both types of data matter. SEO traffic data tells you what's producing immediate revenue. AI visibility data tells you what's shaping the consideration set before direct conversion happens. Optimizing for one at the expense of the other produces short-term or long-term blind spots.

When SEO Metrics Are Right

Six scenarios where SEO visibility metrics are the primary signal to act on.

Scenario 1: Commercial transactional queries

Pricing, demo request, signup, "buy [product]" type queries are still dominated by traditional search. Buyers at this stage have already decided on a brand and are trying to find the specific page to act on. SEO position and CTR are the right metrics.

Scenario 2: Long-tail informational queries

Specific, technical questions with fewer than 100 monthly searches often don't trigger AI Overviews and aren't heavily surfaced in ChatGPT. Traditional SEO captures this traffic cleanly.

Scenario 3: Branded search

People searching your brand name directly are a known-intent audience. SEO rankings for branded queries matter because competitors can buy your brand name terms or outrank you on comparison pages. AI visibility is less relevant here because branded search usually doesn't trigger AI responses at all.

Scenario 4: Navigational queries

"Login," "support," "documentation," "pricing page" — users navigating your own site or its features. These are SEO queries where AI visibility produces no useful signal because the user isn't researching anything.

Scenario 5: High-volume, low-AI-Overview-triggered queries

Some categories still see low AI Overview trigger rates (highly niche technical topics, some regulatory topics, very fresh news). SEO metrics remain more predictive for these categories until AI Overview coverage catches up.

Scenario 6: Legacy content audits

When auditing content that was built for traditional SEO and may or may not perform in AI search, start with SEO metrics (rank position, click share, CTR) before layering in AI visibility measurement. SEO metrics identify pages worth investing in; AI visibility metrics tell you how to optimize them.

When AI Visibility Metrics Are Right

Six scenarios where AI visibility metrics are the primary signal to act on.

Scenario 1: Category research queries

"Best [category] tools," "top [category] platforms," "what [category] should I use" — these increasingly produce AI Overviews and heavy ChatGPT/Perplexity engagement. Buyers are researching the category before they know which brand to evaluate. AI visibility determines whether you're in the shortlist — SEO rank alone doesn't.

Scenario 2: Comparison queries

"X vs Y," "alternatives to X," "best X for Y audience." AI engines synthesize comparisons by pulling from multiple sources. Being cited in comparison responses is a leading indicator of being considered in actual buyer deliberation.

Scenario 3: High-level educational content

"How does [concept] work," "what is [category]," "why does [pattern] matter." AI engines increasingly own educational query responses. Traditional SEO may still rank you, but AI Overviews displace most of the clicks, making AI visibility more predictive of brand awareness impact than SEO position.

Scenario 4: Emerging topics

When a new topic or term emerges in your category, AI engines often pick up the shift 2-4 weeks before Google ranks newly-published content for the term. Perplexity's freshness weighting gives early-mover content rapid visibility. Measuring AI citations on emerging topics catches the signal early.

Scenario 5: Content marketing ROI reporting

When reporting the impact of content investment to leadership, AI visibility metrics increasingly correlate better with pipeline contribution than SEO metrics do. At a 14% AI-referred conversion rate versus a 2.8% Google organic rate, each AI-referred session is worth roughly five organic sessions, so a much smaller volume of AI-referred traffic can produce the same pipeline impact.
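The arithmetic behind that comparison can be sketched in a few lines. The two rates are the ones quoted in this article; the session count of 200 and the function names are made up for illustration, and real rates will vary by product and funnel.

```python
AI_CONVERSION_RATE = 0.14        # AI-referred rate quoted in this article
ORGANIC_CONVERSION_RATE = 0.028  # Google organic rate quoted in this article


def expected_conversions(sessions: int, rate: float) -> float:
    """Expected conversions for a number of sessions at a given rate."""
    return sessions * rate


def organic_sessions_to_match(ai_sessions: int) -> float:
    """Organic sessions needed to match the conversions from ai_sessions."""
    ai_conversions = expected_conversions(ai_sessions, AI_CONVERSION_RATE)
    return ai_conversions / ORGANIC_CONVERSION_RATE


if __name__ == "__main__":
    ai_sessions = 200  # hypothetical monthly AI-referred sessions
    print(round(expected_conversions(ai_sessions, AI_CONVERSION_RATE)))  # 28
    print(round(organic_sessions_to_match(ai_sessions)))                 # 1000
```

Under these assumed rates, 200 AI-referred sessions and 1,000 organic sessions produce the same expected number of conversions, which is the 5x ratio expressed in session terms.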

Scenario 6: Category-level brand strategy

When measuring whether your brand is becoming a "category default" — the brand buyers think of first when the category comes up — AI visibility and AI share of voice are more direct signals than SEO. Being the #1 ranking doesn't mean you're the #1 brand mention in AI responses.

When the Two Metrics Contradict

The interesting signal lies in the contradictions. Four specific patterns to recognize.

Pattern 1: High SEO visibility, low AI visibility

What it means: Your content ranks well but isn't structurally extractable by AI engines.

Why it happens: Strong domain authority and keyword optimization, but weak structural formatting — no answer capsules, thin fact density, long preamble before core content, weak schema markup.

What to do: Audit your top-10 SEO pages for AI extractability. Add answer capsules under every H2, front-load data density in the first 300 words, implement FAQPage and Article schema. Most high-SEO-low-AI pages can be brought into AI visibility within 4-8 weeks of structural improvements.
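For the schema step, a minimal sketch of generating FAQPage markup is shown here. It uses the schema.org `FAQPage`, `Question`, and `Answer` types; the `faq_jsonld` helper name and the sample question are illustrative, and how the output gets injected into a page depends on your CMS.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    markup = faq_jsonld([
        ("Is SEO dead?", "No. SEO is evolving rather than disappearing."),
    ])
    # Embed the result in the page head or body as a JSON-LD script tag.
    print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The questions and answers in the markup should match the visible FAQ text on the page word for word.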

Pattern 2: Low SEO visibility, high AI visibility

What it means: Your content is being cited despite not ranking well in traditional search.

Why it happens: Structurally excellent content (answer capsules, fact density, schema) on a domain without strong backlink authority. Perplexity specifically rewards this pattern because it weights freshness and extractability over authority.

What to do: This is a strong position to be in — use it. Double down on AI visibility while working on domain authority (backlinks, citations on third-party domains, PR) in parallel. The AI visibility is producing pipeline today; the SEO authority will compound over 12-24 months.

Pattern 3: Both rising together

What it means: Your content foundation is solid and both disciplines reward it.

Why it happens: Quality content + strong backlinks + extractable structure + freshness discipline = both metrics climbing.

What to do: Keep doing what's working. Don't reorient toward either discipline alone — the combined pattern is rare and valuable. Focus on scaling the approach to more topic areas.

Pattern 4: Both falling together

What it means: Content quality has degraded, authority is eroding, or a competitor has overtaken you on both dimensions.

Why it happens: Content freshness lapsed, technical issues (indexing problems, broken schema), competitive content pushes, or algorithm shifts affecting both channels.

What to do: Diagnose platform-by-platform. If SEO is falling first and AI follows, the underlying issue is content quality or authority. If AI falls first and SEO follows, the issue is probably structural (extractability, freshness) spreading to affect overall signal quality.
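The four patterns can be turned into a rough triage rule. The sketch below is one possible encoding, not a standard method: the `PageMetrics` fields, the top-10 rank cutoff, and the 20% citation-rate threshold are all assumptions chosen for illustration and should be tuned to your own data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageMetrics:
    rank: Optional[int]     # current organic position; None if not ranking
    citation_rate: float    # fraction of tracked prompts that cite the page (0..1)
    rank_delta: int         # positions gained since last period (+ is better)
    citation_delta: float   # change in citation_rate since last period


def classify_pattern(p: PageMetrics, top_n: int = 10, cited_at: float = 0.2) -> str:
    """Map a page to one of the four patterns (thresholds are assumptions)."""
    seo_high = p.rank is not None and p.rank <= top_n
    ai_high = p.citation_rate >= cited_at
    # Level mismatches (Patterns 1 and 2) take priority over trends.
    if seo_high and not ai_high:
        return "Pattern 1: high SEO, low AI"
    if ai_high and not seo_high:
        return "Pattern 2: low SEO, high AI"
    if p.rank_delta > 0 and p.citation_delta > 0:
        return "Pattern 3: both rising"
    if p.rank_delta < 0 and p.citation_delta < 0:
        return "Pattern 4: both falling"
    return "No clear pattern"


if __name__ == "__main__":
    page = PageMetrics(rank=3, citation_rate=0.0, rank_delta=0, citation_delta=0.0)
    print(classify_pattern(page))  # Pattern 1: high SEO, low AI
```

Checking level mismatches before trends is a design choice: Patterns 1 and 2 point to a specific fix, so they are surfaced first even when both metrics are also moving.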

The Dual-Metric Reporting Framework

How to report on both disciplines without your dashboard becoming unreadable.

The 3-tier reporting structure

Tier 1: Headline metric (every report)

Brand Visibility Score — the composite AI visibility number

Organic traffic + pipeline influence (the top-line SEO view)

Tier 2: Diagnostic metrics (weekly review)

AI citation frequency per platform

AI share of voice vs competitors

Ranking position for top 20 commercial keywords

Organic CTR and click share

Tier 3: Deep-dive metrics (monthly analysis)

Page-level citation rate vs. ranking position (flags Pattern 1 and Pattern 2)

AI-referred traffic attribution breakdown

Competitor SOV changes

New content ranking progression
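Two of the Tier 2 metrics, citation frequency per platform and share of voice, can be computed from a simple log of prompt runs. The sketch below assumes working definitions that this article does not spell out: citation frequency is the share of responses on a platform that name your brand, and share of voice is your mentions divided by all mentions of tracked brands. The brand names and sample records are invented.

```python
from collections import Counter

# Each record: (platform, brands named in the response). Hypothetical sample data.
RUNS = [
    ("ChatGPT", ["Acme", "Globex"]),
    ("ChatGPT", ["Globex"]),
    ("Perplexity", ["Acme", "Initech", "Globex"]),
    ("Perplexity", ["Acme"]),
]


def citation_frequency(runs, brand):
    """Share of responses per platform that mention the brand."""
    totals, hits = Counter(), Counter()
    for platform, brands in runs:
        totals[platform] += 1
        if brand in brands:
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


def share_of_voice(runs, tracked):
    """Each tracked brand's mentions as a share of all tracked-brand mentions."""
    mentions = Counter(b for _, brands in runs for b in brands if b in tracked)
    total = sum(mentions.values())
    return {b: (mentions[b] / total if total else 0.0) for b in tracked}


if __name__ == "__main__":
    print(citation_frequency(RUNS, "Acme"))
    print(share_of_voice(RUNS, {"Acme", "Globex", "Initech"}))
```

With this sample, Acme is named in half of the ChatGPT responses and all of the Perplexity ones, and holds three of the seven tracked mentions overall.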

What to lead with in executive reports

Lead with AI visibility metrics because they represent the fastest-growing buyer-journey slice and the metric executives know least about. Retain SEO metrics as supporting indicators because they represent the largest overall search volume and the metric executives already understand.

The opening line of every executive report should be: "AI visibility [grew/held/declined] [X%] this [period], and organic search visibility [grew/held/declined] [Y%]." That one sentence, with its two clauses, covers the full search-mediated buyer journey.
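If the report is generated from data, the opening line can be filled in automatically. The sketch below is one way to do it; the `headline` helper and the 1-point band that counts as "held" are assumptions, not part of the template itself.

```python
def trend_word(pct_change: float, flat_band: float = 1.0) -> str:
    """Pick grew/held/declined; changes within +/- flat_band count as held."""
    if pct_change > flat_band:
        return "grew"
    if pct_change < -flat_band:
        return "declined"
    return "held"


def headline(ai_change: float, seo_change: float, period: str) -> str:
    """Build the report's opening sentence from two percentage changes."""
    def clause(label: str, change: float) -> str:
        word = trend_word(change)
        if word == "held":
            return f"{label} held"
        return f"{label} {word} {abs(change):.0f}%"

    ai_clause = clause("AI visibility", ai_change)
    seo_clause = clause("organic search visibility", seo_change)
    return f"{ai_clause} this {period}, and {seo_clause}."


if __name__ == "__main__":
    print(headline(12.4, -3.0, "month"))
    # AI visibility grew 12% this month, and organic search visibility declined 3%.
```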

For the operational weekly reporting rhythm, see The Weekly AI Visibility Report: A 90-Minute Template for Startup Teams.

Shared Foundations Both Disciplines Reward

Despite the differences, several foundational practices benefit both SEO and AI visibility simultaneously.

Quality content. Both disciplines reward content that actually answers the query well. Thin content, keyword-stuffed pages, and low-value summaries perform poorly on both.

E-E-A-T signals. Experience, expertise, authoritativeness, and trustworthiness help rankings on Google and citation probability on Google AI Mode specifically. Author bios, citation diversity, factual accuracy, and credential signals all carry over.

Technical basics. Schema markup, correct robots.txt, XML sitemaps, mobile-friendliness, page speed, and crawlability are baseline requirements for both. AI engines use many of the same technical signals to decide what to index.

Content freshness. Both disciplines reward updated content, though AI visibility (especially Perplexity) weights freshness more heavily than traditional SEO does. Pages refreshed in the past 12 months earn 3.2x more AI citations — and also benefit from traditional "freshness" ranking signals.

Source diversity. Being cited across multiple domain types (G2, Capterra, industry pubs, Reddit) helps SEO backlink profiles AND AI visibility consistency. The overlap is substantial enough that source diversity investments produce returns in both dimensions.

The practical implication: don't treat AI visibility and SEO as separate workstreams requiring entirely different content. Build content on shared quality foundations, then add AI-specific structural optimizations (answer capsules, fact density, schema) as an additional layer.

The result is content that performs on both measurement axes rather than requiring a pick between them.

Common Measurement Mistakes

Mistake 1: Treating one metric as a substitute for the other

Teams report SEO metrics and assume AI visibility is correlated, or vice versa. Fix: separate reporting, dual-metric framework, acknowledge the weak correlation.

Mistake 2: Declaring SEO dead

Teams read about AI Overviews displacing clicks and conclude SEO doesn't matter anymore. 75% of search queries still go through traditional search. Fix: recognize SEO is evolving, not disappearing. Impression and position data still matters for most commercial and navigational queries.

Mistake 3: Declaring AI visibility noise

Teams dismiss AI visibility as unmeasurable or too volatile to report on. Fix: the 90-minute weekly measurement methodology produces defensible data. The volatility narrative is overstated once sample size (25-50 prompts) is adequate.

Mistake 4: Optimizing for one at the expense of the other

Teams focus obsessively on AI citations and let SEO technical debt accumulate, or keep chasing backlinks while ignoring structural extractability. Fix: shared-foundations approach — quality content + technical cleanliness + extractable structure = both metrics benefit.

Mistake 5: Ignoring the contradictions

Teams see pages with high SEO and low AI visibility (or vice versa) and don't investigate. Fix: contradictions are where the clearest optimization signals live. Pages with high SEO but low AI visibility typically need 60-90 minutes of structural work to become AI-extractable.

Mistake 6: Reporting them at different cadences

Teams run monthly SEO reports and quarterly AI visibility reviews, losing the ability to connect the two. Fix: integrate both into the same weekly rhythm. AI visibility review and SEO health check happen on the same Monday morning.

Content Engine Integration

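Measuring two disciplines manually roughly doubles the operator time required. Weekly AI visibility measurement (60-90 minutes), a weekly SEO health check (60 minutes), and monthly reporting (2-3 hours) are the scheduled line items; together with the manual work of reconciling the two data sets, the total comes to an estimated 6-8 hours per week on measurement alone.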

A content engine unifies the measurement workflow:

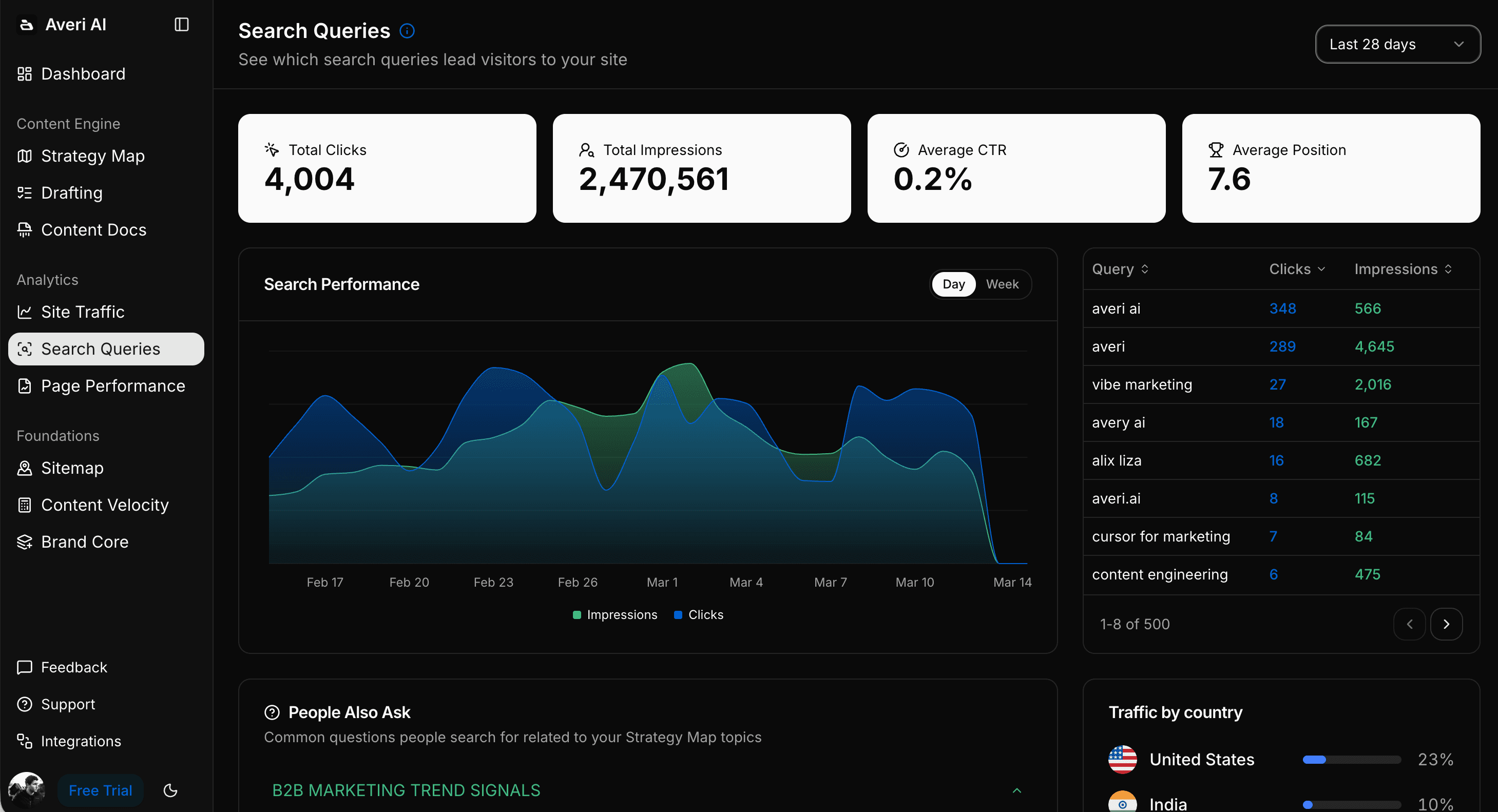

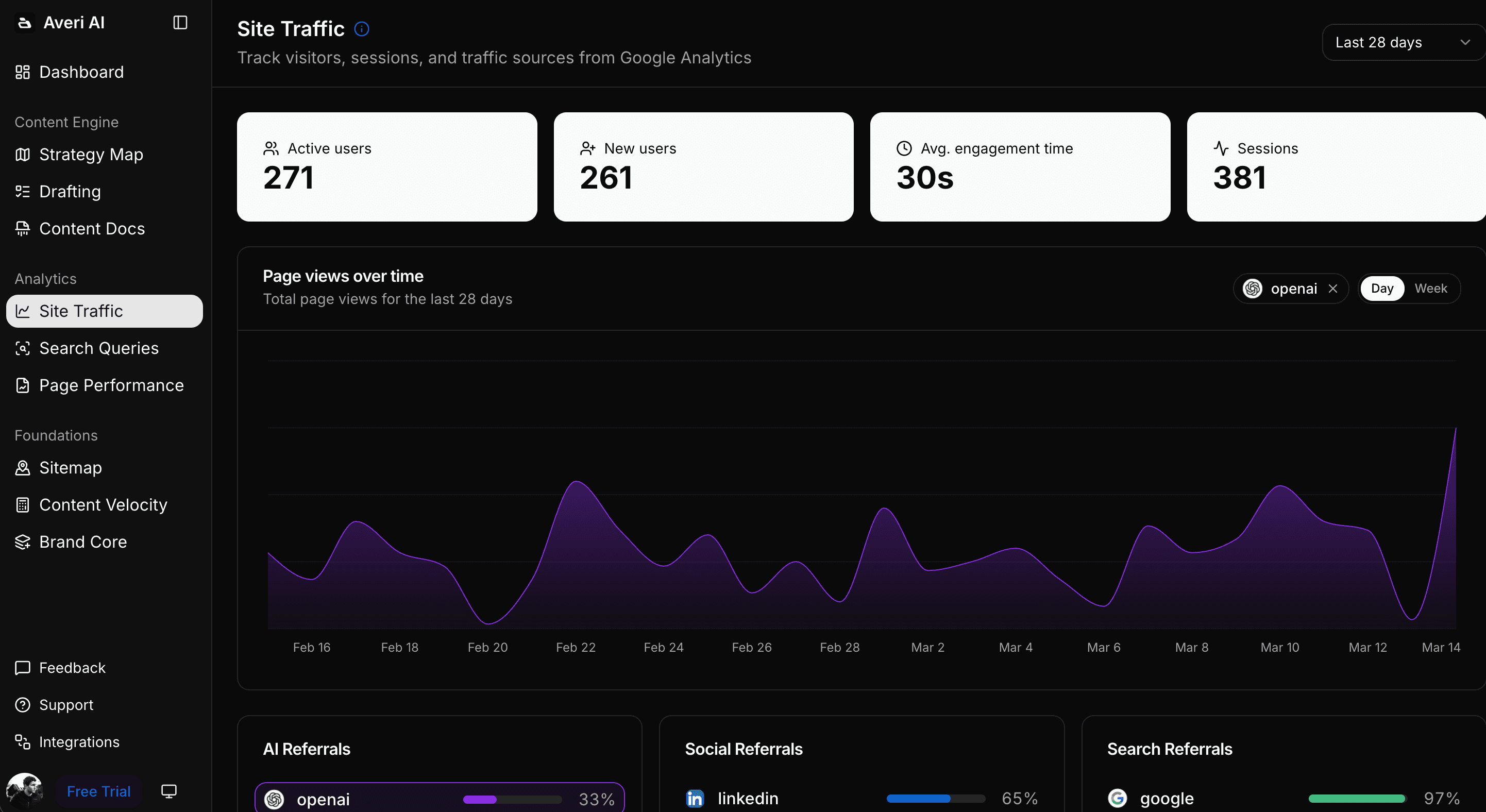

GSC integration surfaces SEO metrics (impressions, clicks, position) alongside AI visibility metrics in a single dashboard

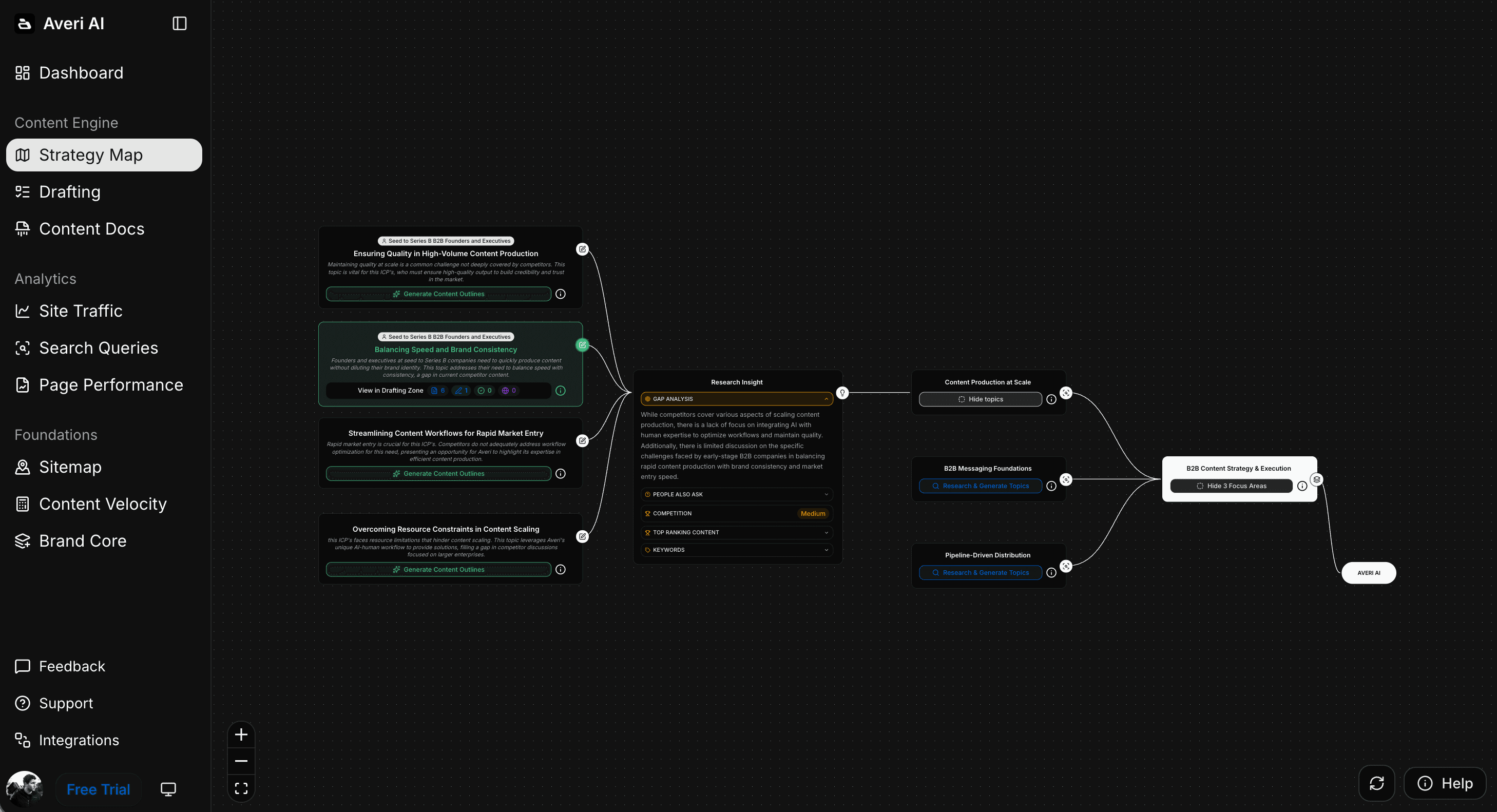

Page-level dual scoring shows each page's SEO performance and AI citation status side-by-side, flagging Pattern 1 and Pattern 2 contradictions automatically

Striking-distance analysis overlays the two disciplines — pages ranking position 4-15 that are also AI-cited get prioritized for structural refresh first

Dual-metric reporting generates the 3-tier structure weekly without requiring separate report-building

Integrated optimization queue surfaces which pages need SEO work, which need AI visibility work, and which need both — saving the diagnostic time
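The striking-distance overlay in that list is simple enough to sketch by hand. The example below assumes each page record carries an organic rank and an AI-cited flag; the field names, sample URLs, and the choice to sort by rank are illustrative assumptions, not a description of any particular product.

```python
# Hypothetical page records: organic rank (None if unranked) and AI citation status.
PAGES = [
    {"url": "/guide-a", "rank": 2, "ai_cited": True},
    {"url": "/guide-b", "rank": 6, "ai_cited": True},
    {"url": "/guide-c", "rank": 12, "ai_cited": False},
    {"url": "/guide-d", "rank": 14, "ai_cited": True},
    {"url": "/guide-e", "rank": None, "ai_cited": True},
]


def striking_distance(pages, lo=4, hi=15):
    """Pages ranking in positions lo..hi that are also AI-cited, best rank first."""
    hits = [
        p for p in pages
        if p["rank"] is not None and lo <= p["rank"] <= hi and p["ai_cited"]
    ]
    return sorted(hits, key=lambda p: p["rank"])


if __name__ == "__main__":
    for page in striking_distance(PAGES):
        print(page["url"], page["rank"])
```

With the sample data, only `/guide-b` and `/guide-d` qualify: both sit in positions 4-15 and are already cited, so they would be first in line for a structural refresh.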

The result: measurement time drops from 6-8 hours weekly to 45-60 minutes of review. The strategic decisions (which topics to pursue, which angles to take) stay human. The mechanical integration work disappears.

FAQs

How is AI visibility different from traditional SEO?

SEO measures ranking position, clicks, and CTR across traditional search results where buyers evaluate 10+ links on a results page. AI visibility measures citation frequency, brand mentions, and share of voice inside AI-generated answers where buyers see 3-6 synthesized brand options. The two disciplines share foundational practices (quality content, E-E-A-T, technical basics) but reward structurally different signals — SEO rewards backlink authority and keyword relevance; AI visibility rewards extractability, fact density, and freshness.

Do SEO and AI visibility correlate?

Weakly. Only 11% of sites are cited by both ChatGPT and Perplexity simultaneously, and 47% of AI Overview citations come from pages ranking below position 5 organically. Strong SEO rankings don't guarantee AI visibility, and vice versa. The weak correlation is why B2B SaaS teams need to measure both independently rather than assuming one predicts the other.

Is SEO dead?

No. 75% of search queries still go through traditional search engines where SEO metrics (rank, impressions, CTR) remain the primary signal. What's changed is that the remaining 25%+ of queries now route through AI-generated answers where SEO metrics don't measure what matters. SEO is evolving rather than disappearing. The dashboards that treat SEO as the complete picture are increasingly incomplete, but the underlying discipline remains necessary.

Should I still invest in traditional SEO?

Yes, with a shift in focus. Commercial transactional queries, branded search, navigational queries, and long-tail informational content still reward traditional SEO investment. Category research queries, comparison queries, and high-level educational content increasingly reward AI visibility investment. The ideal 2026 allocation for most B2B SaaS teams is roughly 50-60% of content effort on foundations that benefit both disciplines, with platform-specific optimization layered on top.

How do I know if a page is failing at AI visibility but succeeding at SEO?

Check three signals: (1) the page ranks in top 10 organically for its target keyword, (2) the query triggers an AI Overview or strong ChatGPT/Perplexity response, and (3) your brand isn't cited in the AI response. Pages matching all three signals are Pattern 1 contradictions: strong SEO, weak AI visibility, and typically fixable in 60-90 minutes of structural work (answer capsules, fact density, schema).

Which metric should I prioritize in executive reports?

Lead with AI visibility metrics as the top-line, with SEO as the supporting indicator. AI visibility represents the fastest-growing buyer-journey slice, converts at 5x the rate of Google organic, and is the metric most executives know the least about. Retaining SEO metrics in the report shows the larger overall traffic picture while keeping AI visibility front-and-center for the evolving half of the buyer journey.

How often should I measure each?

AI visibility should be measured weekly using a 25-50 prompt library. SEO health should be reviewed weekly (rank position, GSC impressions, CTR) and deep-audited monthly. Both fit into the same weekly rhythm — a 90-minute Monday morning session can cover AI visibility, SEO health check, and combined reporting for most B2B SaaS teams at startup scale.

Related Resources

Core AI Visibility Framework

The Complete Guide to AI Visibility for B2B SaaS — the pillar this piece sits under

Brand Visibility Score: The Only AI Search Metric That Actually Matters

AI Citation Tracking: How to Measure Citation Frequency Across ChatGPT, Perplexity, and Claude

AI Share of Voice: The Competitive Metric Most SaaS Teams Aren't Tracking Yet

Measurement Operations

Attribution for AI-Referred Traffic: Fixing the "Direct Traffic" Problem in GA4

How to Build an AI Visibility Prompt Library (25-50 Prompts That Actually Matter)

The Weekly AI Visibility Report: A 90-Minute Template for Startup Teams

SEO Tactical Context

SEO for Startups: How to Rank Higher Without a Big Budget in 2026

Google AI Overviews Optimization: How to Get Featured in 2026

The Complete Guide to GEO Search: How to Rank in the Age of LLMs

Platform-Specific Context

ChatGPT vs. Perplexity vs. Google AI Mode: The B2B SaaS Citation Benchmarks

The GEO Playbook 2026: Getting Cited by LLMs, Not Just Ranked by Google