Attribution for AI-Referred Traffic: Fixing the "Direct Traffic" Problem in GA4

TL;DR

🎯 AI-referred traffic attribution is the measurement practice of identifying visitors who arrive from AI engines (ChatGPT, Perplexity, Claude, Gemini, Bing Copilot) and separating them from Direct traffic, where a large share of them is currently misclassified.

📉 30-50% of AI-driven pipeline hides in Direct traffic at typical B2B SaaS companies because referrer data is stripped in transit. Unfixed attribution means you can't prove the ROI of your AI citation work even when citations are climbing.

🔧 The fix is a GA4 custom channel grouping with referrer-based regex matching (chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, bing.com/copilot). One-time setup in under 30 minutes, permanent visibility improvement.

📊 Landing page pattern analysis is the backup method when referrers are fully stripped (mobile AI apps, Safari). When a page gets cited by AI but has no organic ranking, Direct sessions to that page are likely AI-referred.

⚡ Fixed attribution reveals the real ROI story. Teams that fix GA4 attribution typically see AI-referred traffic grow from "invisible" to 8-15% of total organic-equivalent traffic, with 3-5x the conversion rate of branded Google Organic.

Zach Chmael

CMO, Averi

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."

Open your GA4 Acquisition report. Look at Direct traffic for the last 90 days. It's probably up 30-60% year-over-year.

Your team is almost certainly attributing this to brand recognition.

"More people are typing our URL directly — our brand is growing." That story is comfortable and mostly wrong.

A significant share of your Direct traffic is actually AI-referred — visitors clicking through from ChatGPT, Perplexity, Claude, and Gemini whose referrer data got stripped somewhere between the AI engine and your analytics.

Forrester estimates AI-generated traffic is 2-6% of total organic traffic and growing 40%+ per month. AI referrals to the top 1,000 websites reached 1.13 billion visits in June 2025 — a 357% year-over-year growth rate.

That traffic converts differently from Direct.

AI-referred visitors convert at 14.2% versus 2.8% for Google organic. When that traffic is misclassified as Direct, your dashboard reports Direct converting at roughly 8-10%, a session-weighted blend of the true 14%+ AI-referred rate and the 2-3% typical Direct rate.

Every channel metric downstream becomes dishonest.
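The blending effect can be sketched as a session-weighted average. This is a minimal illustration, not a GA4 calculation: the function name `blended_rate` and the 50% AI share are hypothetical, and the 2.5% Direct rate is simply the midpoint of the 2-3% range quoted above.

```python
def blended_rate(ai_share: float, ai_rate: float, direct_rate: float) -> float:
    """Conversion rate a dashboard would report for a Direct bucket
    that mixes misclassified AI-referred sessions with true Direct sessions."""
    if not 0.0 <= ai_share <= 1.0:
        raise ValueError("ai_share must be between 0 and 1")
    return ai_share * ai_rate + (1.0 - ai_share) * direct_rate


# ai_share=0.5 is an assumed input, not a figure from the article.
reported = blended_rate(ai_share=0.5, ai_rate=0.142, direct_rate=0.025)
print(f"Reported Direct conversion rate: {reported:.1%}")
```

The higher the hidden AI share, the further the reported Direct rate drifts from what true Direct visitors actually do.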

This piece fixes the attribution problem.

What AI referrer data actually looks like per engine. How to build the GA4 custom channel grouping that separates AI-referred traffic from Direct. Landing-page pattern analysis for when referrers are fully stripped. The weekly report template that turns fixed attribution into an ROI case your leadership team can't ignore.

For the broader AI visibility framework this attribution layer supports, see The Complete Guide to AI Visibility for B2B SaaS.

What Is AI-Referred Traffic Attribution?

AI-referred traffic attribution is the practice of identifying visitors who arrive from AI engines (ChatGPT, Perplexity, Claude, Gemini, Bing Copilot, Google AI Mode) and classifying them as a distinct channel in your analytics stack.

It requires detecting AI referrer hostnames, building custom channel groupings, and supplementing with landing-page pattern analysis when referrer data is stripped.

The goal is to separate AI-referred traffic from Direct traffic (where it is currently misclassified by default) so you can measure conversion rate, pipeline contribution, and content ROI accurately.

Attribution sits at the end of the AI visibility measurement stack.

The other five AI visibility metrics (Brand Visibility Score, citation frequency, brand mention rate, AI share of voice, LLM conversion rate) measure what's happening inside AI responses. Attribution measures what happens after the click — when an AI-influenced buyer actually visits your site.

Without attribution, your citation work produces invisible ROI.

With attribution, citation rate improvements correlate directly to pipeline lifts.

The difference between a CMO who gets AI visibility budget and one who doesn't usually comes down to whether the attribution layer is working.

Why AI Traffic Gets Misclassified as Direct

Five mechanical reasons AI referrer data disappears in transit.

Reason 1: Mobile app referrer stripping

ChatGPT's mobile apps (iOS and Android), Perplexity's mobile apps, and Claude's mobile apps often don't pass referrer data when the user taps a citation link. The session opens in an in-app browser or system browser, and the referrer shows as empty — which GA4 classifies as Direct.

Reason 2: HTTPS-to-HTTPS redirect chains

When an AI engine sends a user through a redirect chain (common on citation tracking links), some browsers strip the referrer for privacy. The click originates at chat.openai.com, redirects through an intermediate URL, and arrives at your site with an empty referrer field.

Reason 3: Privacy-first browser defaults

Safari, Brave, and some Chrome privacy modes trim cross-origin referrer data by default, and stricter configurations drop it entirely. A macOS Safari user clicking a Perplexity citation can arrive at your site classified as Direct even though the click originated from an AI citation.

Reason 4: Search-engine embedded AI

Google AI Overviews and Google AI Mode appear inside google.com search results. When a user clicks a citation link from an AI Overview, the referrer is google.com — identical to clicking a traditional organic blue link. This is unsolvable at the referrer level because Google doesn't differentiate the two click sources technically.

Reason 5: Desktop browser settings

Users with strict referrer policies configured in browser settings get their referrers stripped on all clicks. This is a small percentage of traffic but affects enterprise IT environments where corporate policies lock down browser behavior.

The combined effect: 30-50% of actual AI-referred traffic lands in your GA4 Direct traffic channel. Some is recoverable through custom channel grouping configuration. Some requires landing-page proxy analysis. Some is structurally unrecoverable and stays hidden.

The AI Engine Referrer Map

Different AI engines pass referrer data differently. This table shows what's detectable in GA4 by default.

| Engine | Desktop Referrer | Mobile App Referrer | Detection Reliability |
|---|---|---|---|
| ChatGPT | chatgpt.com, chat.openai.com | Often stripped | 60-70% of clicks captured |
| Perplexity | perplexity.ai, www.perplexity.ai | Usually passes | 80-90% of clicks captured |
| Claude | claude.ai | Often stripped | 50-65% of clicks captured |
| Gemini | gemini.google.com | Passes | 75-85% of clicks captured |
| Bing Copilot | bing.com, copilot.microsoft.com | Passes | 70-80% of clicks captured |
| Google AI Mode / AI Overviews | google.com (identical to organic) | google.com | Not reliably distinguishable from Organic Search |

Perplexity is the most reliable for attribution purposes. Its mobile apps and desktop experience consistently pass referrer data. If you're just starting attribution work, Perplexity gives you the cleanest signal.

Google AI products are the hardest because they operate inside google.com. AI Overview clicks look identical to traditional organic clicks in your referrer data. Landing-page pattern analysis (below) is the primary workaround.

Mobile apps are the biggest gap. Across engines, referrer stripping (concentrated in mobile app clicks) hides roughly 30-50% of AI citation clicks. This is why fixed attribution typically recovers 50-70% of true AI traffic, not 100%.
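For teams that also inspect raw referrers outside GA4 (server logs, a data warehouse export), the referrer map can be expressed as a small lookup. This is a sketch under assumptions: `AI_ENGINE_HOSTS` and `classify_referrer` are hypothetical names, the input is assumed to be a full referrer URL, and bing.com is left out because a bare bing.com referrer also covers ordinary Bing search.

```python
from typing import Optional
from urllib.parse import urlparse

# Hostnames copied from the referrer map in this article.
# Google AI Overviews / AI Mode are absent on purpose: they arrive as google.com.
AI_ENGINE_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Bing Copilot",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if unknown."""
    if not referrer:
        return None  # empty referrer: this is the case that lands in Direct
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]  # treat www.perplexity.ai the same as perplexity.ai
    return AI_ENGINE_HOSTS.get(host)


print(classify_referrer("https://www.perplexity.ai/search?q=crm"))
print(classify_referrer(""))
```

A stripped referrer returns `None` here, which is exactly the traffic the landing-page method later in this piece tries to recover.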

For the full platform-specific breakdown of citation mechanics that produces these referrers, see AI Citation Tracking: How to Measure Citation Frequency Across ChatGPT, Perplexity, and Claude.

Setting Up the GA4 Custom Channel Grouping (Step-by-Step)

A one-time GA4 configuration separates AI-referred traffic into its own channel forever. Here's the exact setup.

Step 1: Open custom channel groupings

In GA4, go to Admin → Data Display → Channel Groups. Click "Create new channel group." Name it "AI-Aware Channels" or similar.

Step 2: Create the "AI Referrals" channel

Above the Direct channel in the priority order, create a new channel called "AI Referrals." Set the condition as:

This regex matches the most common AI engine hostnames. Add any additional engines your category uses (some B2B categories see heavy Phind, You.com, or specialized AI tool referrers).
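A minimal sketch of what such a pattern could look like, assuming the channel condition is a regex match against the session source (the referrer hostname). The hostnames come from the referrer map above; the names `AI_REFERRER_PATTERN` and `is_ai_referrer` are illustrative, and the Python wrapper exists only so the pattern can be checked locally before it goes into GA4. bing.com is omitted in this sketch because matching it would also capture ordinary Bing search sessions; whether to include it is a judgment call for your own data.

```python
import re

# Hypothetical pattern: matches each hostname exactly or as a subdomain suffix
# (so www.perplexity.ai matches, but notchatgpt.com does not).
AI_REFERRER_PATTERN = (
    r"(^|\.)(chatgpt\.com|chat\.openai\.com|perplexity\.ai|claude\.ai"
    r"|gemini\.google\.com|copilot\.microsoft\.com)$"
)


def is_ai_referrer(hostname: str) -> bool:
    """True if the hostname belongs to one of the listed AI engines."""
    return re.search(AI_REFERRER_PATTERN, hostname.lower()) is not None


for host in ["chatgpt.com", "www.perplexity.ai", "google.com", "notchatgpt.com"]:
    print(host, is_ai_referrer(host))
```

Escaping the dots and anchoring the end of the string keeps look-alike domains out of the channel.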

Step 3: Adjust channel priority order

Place the "AI Referrals" channel above Organic Search and Direct in the channel priority order. GA4 evaluates channels top-to-bottom — a session with a chatgpt.com referrer needs to match AI Referrals before it can fall through to any other channel.
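Why the order matters can be shown with a simplified first-match model. This is not GA4's actual rule engine (real channel rules also look at medium and other dimensions); the rule list, patterns, and function name below are assumptions for illustration only.

```python
import re
from typing import Callable, List, Tuple

AI_HOSTS = re.compile(r"(^|\.)(chatgpt\.com|perplexity\.ai|claude\.ai|gemini\.google\.com)$")
SEARCH_HOSTS = re.compile(r"(^|\.)(google\.com|bing\.com)$")

Rule = Tuple[str, Callable[[str], bool]]

# Evaluated top to bottom: the first rule whose predicate is True wins.
CHANNEL_RULES: List[Rule] = [
    ("AI Referrals", lambda src: bool(AI_HOSTS.search(src))),
    ("Organic Search", lambda src: bool(SEARCH_HOSTS.search(src))),
    ("Direct", lambda src: src in ("", "(direct)")),
]


def assign_channel(source: str, rules: List[Rule] = CHANNEL_RULES) -> str:
    """Return the name of the first channel rule that matches the source."""
    for name, matches in rules:
        if matches(source):
            return name
    return "Unassigned"


# gemini.google.com satisfies both the AI rule and the google.com search rule,
# so its channel depends entirely on which rule is checked first.
print(assign_channel("gemini.google.com"))
print(assign_channel("gemini.google.com", list(reversed(CHANNEL_RULES))))
```

With the AI rule on top the session is counted as AI Referrals; with the order reversed the same session falls into Organic Search.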

Step 4: Apply to historical data

GA4 applies custom channel groupings to both new and historical data. After creating the group, run your standard acquisition reports using the new "AI-Aware Channels" group to see how much AI traffic was hidden in Direct for the last 12 months.

Step 5: Set up a permanent custom segment

Create a GA4 segment called "AI-Referred Visitors" using the same regex. This lets you filter any report (landing pages, events, conversions, revenue) to just AI-referred sessions.

Expected result: Most B2B SaaS accounts see 3-8% of total sessions re-classified from Direct to AI Referrals immediately. The exact number varies based on your content mix, category, and how often buyers use AI for research in your space.

Landing Page Pattern Analysis (The Backup Method)

Custom channel groupings recover 50-70% of true AI-referred traffic. The remaining 30-50% arrives with stripped referrers and legitimately shows up as Direct. Landing-page pattern analysis surfaces this hidden traffic.
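The recovery range implies a rough bound on true AI-referred volume. A minimal sketch, assuming the 50-70% capture range above holds for your account; the function name and the 350-session input are hypothetical.

```python
from typing import Tuple


def estimate_true_sessions(
    observed: int, capture_low: float = 0.5, capture_high: float = 0.7
) -> Tuple[int, int]:
    """Return (low, high) estimates of true AI-referred sessions,
    given sessions observed in the AI Referrals channel."""
    if not 0 < capture_low <= capture_high <= 1:
        raise ValueError("capture rates must satisfy 0 < low <= high <= 1")
    # A higher capture rate means fewer hidden sessions, hence the lower bound.
    return round(observed / capture_high), round(observed / capture_low)


low, high = estimate_true_sessions(350)  # 350 is an example input
print(f"True AI-referred sessions: roughly {low} to {high}")
```

The gap between the observed count and this range is what landing-page pattern analysis tries to locate.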

The core logic

Pages that are heavily cited by AI engines but have low organic search rankings shouldn't get much Direct traffic. When they do, that Direct traffic is almost certainly AI-referred with stripped attribution.

The 4-step process

Step 1: From your citation tracking work, identify your 10-20 most-cited pages in AI responses.

Step 2: In Google Search Console, check each page's organic ranking for its primary target keyword. Flag pages ranking below position 10 organically but appearing in AI citations.

Step 3: In GA4, filter Direct traffic to those flagged pages. Direct traffic shouldn't materialize organically for pages that don't rank — which means most of it is AI-referred.

Step 4: Calculate the share of your total Direct traffic that lands on AI-cited-but-poorly-ranked pages. That's your landing-page-inferred AI traffic.

Example calculation:

You have 3,000 Direct sessions/month total

600 of them land on pages that rank below position 10 organically but get cited by AI

200 of them land on pages ranking on page 1 organically (legitimate brand search)

The remaining 2,200 are truly ambiguous

Inferred AI-referred traffic from landing-page pattern analysis: 600 sessions (20% of Direct). Combined with your custom channel grouping recovery, you now have a defensible total AI-referred number that's 70-85% accurate.
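The flagging logic and the example arithmetic can be sketched together. This assumes you have already joined Direct sessions (GA4), average position (Search Console), and a cited/not-cited flag (your citation tracking) per page; the `PageStats` structure, page paths, and per-page numbers are hypothetical and chosen only to reproduce the totals in the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PageStats:
    path: str
    direct_sessions: int      # monthly Direct sessions from GA4
    organic_position: float   # position for the page's primary keyword
    ai_cited: bool            # appears in tracked AI citations


def inferred_ai_sessions(pages: List[PageStats], rank_cutoff: float = 10.0) -> int:
    """Sum Direct sessions on pages that are AI-cited but rank below the cutoff."""
    return sum(
        p.direct_sessions
        for p in pages
        if p.ai_cited and p.organic_position > rank_cutoff
    )


pages = [
    PageStats("/guides/example-a", 400, 23.0, True),   # cited, poorly ranked
    PageStats("/guides/example-b", 200, 41.0, True),   # cited, poorly ranked
    PageStats("/pricing", 200, 3.0, False),            # page 1, brand search
    PageStats("/all-other-pages", 2200, 15.0, False),  # ambiguous remainder
]

total_direct = sum(p.direct_sessions for p in pages)
inferred = inferred_ai_sessions(pages)
print(f"{inferred} of {total_direct} Direct sessions ({inferred / total_direct:.0%}) look AI-referred")
```

Raising or lowering `rank_cutoff` is the main lever: a stricter cutoff gives a smaller but more defensible number.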

What this reveals

Most B2B SaaS teams running this analysis for the first time discover their AI-referred traffic is 2-4x larger than their custom channel grouping showed. The total typically lands at 6-12% of total organic-equivalent traffic for active AI visibility programs.

Building Your Weekly AI Attribution Report

Attribution only matters if it feeds decisions. Here's the weekly report structure that turns attribution into editorial and strategic action.

The 4-section report

Section 1: AI traffic volume and trend

Total AI-referred sessions (from custom channel group)

Week-over-week and month-over-month growth

AI traffic as % of total organic-equivalent traffic

Direct sessions inferred as AI-referred through landing-page pattern analysis

Section 2: AI traffic by engine

Sessions broken down by referrer source (chatgpt.com vs perplexity.ai vs claude.ai, etc.)

Conversion rate per engine

Which engines are driving the most revenue

Section 3: AI traffic by landing page

Top 20 pages receiving AI-referred sessions

Conversion rate per page

Pages gaining or losing AI-referred traffic week-over-week

Section 4: AI ROI summary

AI-referred pipeline contribution

AI-referred revenue (if ecommerce or self-serve)

Comparison to other channels (Organic Search, Paid, Direct excluding AI)

This report is the attribution-layer output that feeds the full weekly AI visibility report. Attribution provides the downstream ROI signal; citation tracking and share of voice provide the upstream visibility signals.

The executive takeaway pattern

Most weekly reports get skimmed and filed. The attribution report earns attention because it converts AI visibility work into dollars. One sentence at the top of each report: "AI-referred traffic grew X% this week, converting at Y% vs. Z% for [comparison channel]."

That sentence, delivered weekly for 6-8 weeks, is what turns AI visibility from "experimental tactic" into "primary growth channel" in leadership's mental model.
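Generating that sentence from weekly numbers is straightforward. A minimal sketch, assuming you export session and conversion counts for the AI Referrals channel and one comparison channel; the function names and all figures in the example call are hypothetical.

```python
def pct_change(current: float, previous: float) -> float:
    """Relative change from the previous period to the current one."""
    if previous == 0:
        raise ValueError("previous period has no sessions; growth is undefined")
    return (current - previous) / previous


def headline(
    ai_sessions: int,
    ai_sessions_prev: int,
    ai_conversions: int,
    cmp_sessions: int,
    cmp_conversions: int,
    cmp_name: str,
) -> str:
    """Build the one-sentence executive takeaway for the weekly report."""
    growth = pct_change(ai_sessions, ai_sessions_prev)
    ai_rate = ai_conversions / ai_sessions
    cmp_rate = cmp_conversions / cmp_sessions
    return (
        f"AI-referred traffic grew {growth:.0%} this week, "
        f"converting at {ai_rate:.1%} vs. {cmp_rate:.1%} for {cmp_name}."
    )


# Example inputs are made up for illustration.
print(headline(460, 400, 46, 1000, 28, "Organic Search"))
```

In a down week the growth figure goes negative, so the wording of the sentence would need adjusting by hand.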

Common AI Attribution Mistakes

Six mistakes that destroy attribution accuracy.

Mistake 1: Not creating a custom channel group

Teams use GA4's default channels and accept Direct traffic growth as a mystery. Fix: the 30-minute setup above eliminates 50-70% of misattribution immediately.

Mistake 2: Forgetting to update the regex

AI engines launch new domains. Bing Copilot's referrer patterns changed twice in 2025. Your regex from Q1 may miss Q3 traffic. Fix: review and update the regex quarterly as new engines and domain patterns emerge.

Mistake 3: Conflating AI Overview clicks with AI Referrals

Google AI Overview clicks pass google.com as referrer, making them indistinguishable from traditional organic. Including them in AI Referrals inflates the number incorrectly. Fix: AI Overview clicks stay in Organic Search by default. Use landing-page pattern analysis on your top-cited pages if you need to estimate them separately.

Mistake 4: Ignoring mobile-app traffic

Teams build GA4 attribution and assume it captures everything. Fix: accept that 30-50% of AI traffic arrives through mobile apps with stripped referrers. Use landing-page pattern analysis as the backup method to surface this traffic.

Mistake 5: Reporting attribution in isolation

Attribution data without upstream context (citation rate, share of voice) produces observations but not decisions. Fix: combine attribution with citation tracking in the same report. Pairing "AI-referred sessions up 15%" with "citation frequency up 8 points" gives leadership a clear, plausible causal story.

Mistake 6: Not connecting to revenue

Attribution that stops at sessions tells half the story. The missing half is conversion and pipeline contribution. Fix: every attribution report should include conversion rate and, where possible, revenue or pipeline attributed to AI-referred traffic. This is where AI visibility ROI gets proved.

Content Engine Integration

Manual attribution work (regex updates, landing page pattern analysis, weekly report generation) takes 2-3 hours weekly at sustainable scale. Over 6 months, that's roughly 50-80 hours of operator time that could be going to content production.

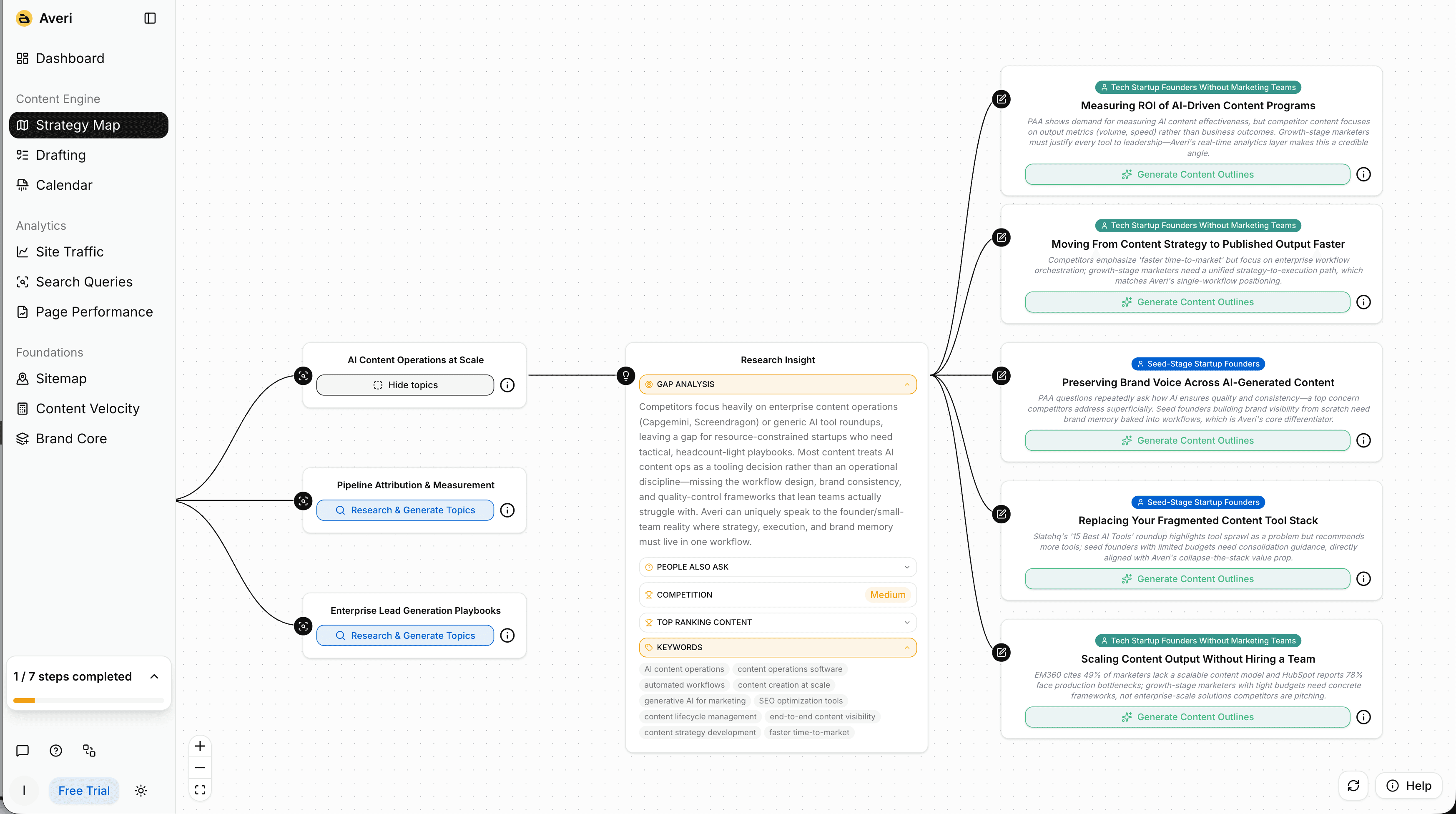

A content engine builds attribution into the analytics layer:

Pre-built AI referrer channels update automatically as new engines launch

Landing page pattern analysis runs across your full content library continuously, flagging Direct traffic to AI-cited pages

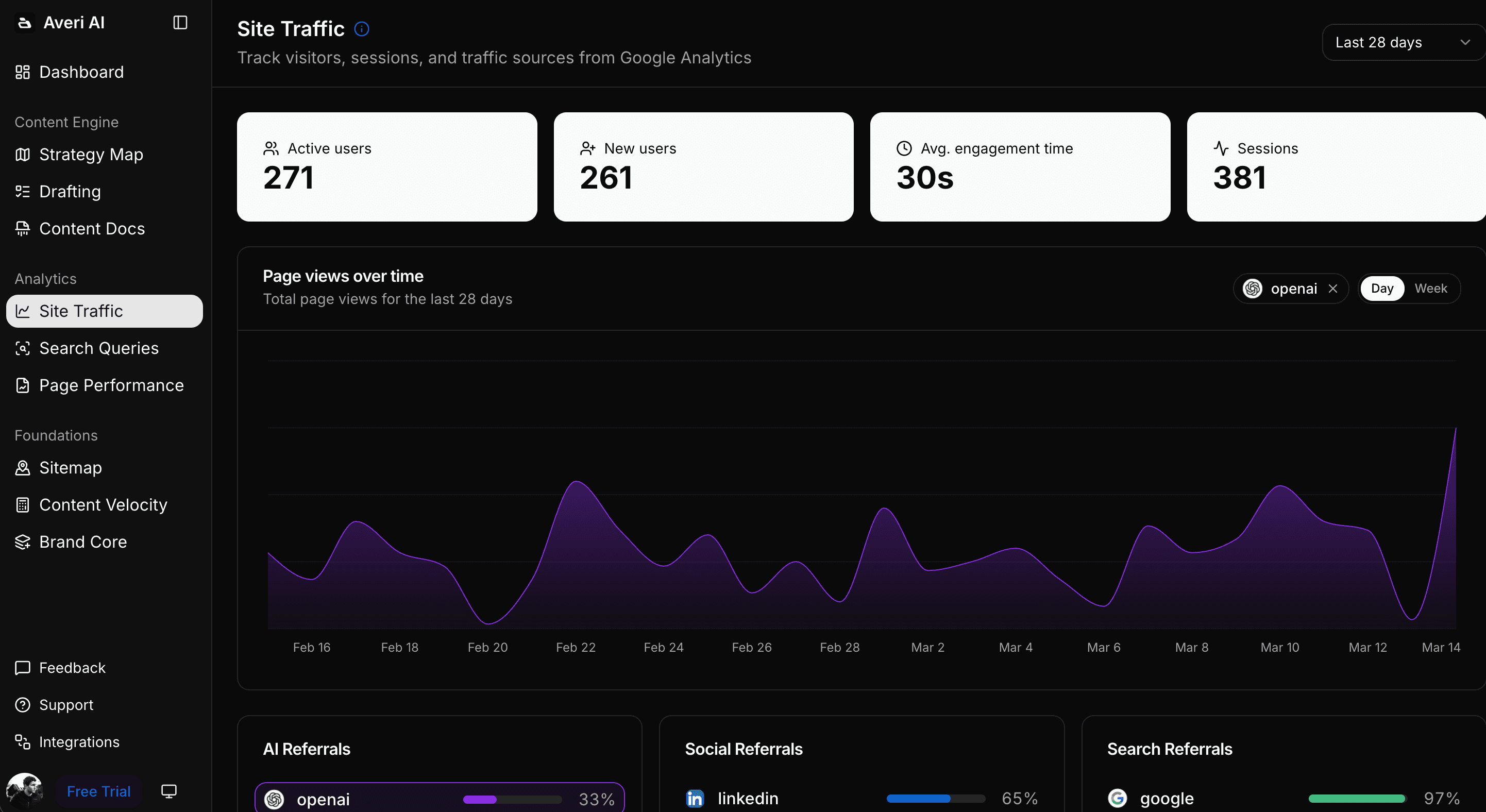

Conversion tracking for AI-referred sessions flows through to Averi's Analytics Dashboard without manual segment-building

Weekly reports generate automatically with the 4-section structure above

Causal linking connects citation rate changes to attribution outcomes, showing which content produced which traffic gains

The measurement discipline stays identical. The mechanical work disappears. You still review the reports weekly and make editorial decisions — but the 2-3 hours becomes 20 minutes.

For the broader operational framework that attribution plugs into, see The Weekly AI Visibility Report: A 90-Minute Template for Startup Teams.

FAQs

What is AI-referred traffic attribution?

AI-referred traffic attribution is the practice of identifying website visitors who arrive from AI engines (ChatGPT, Perplexity, Claude, Gemini, Bing Copilot) and classifying them as a distinct channel separate from Direct traffic. It involves detecting AI engine hostnames in referrer data, building custom channel groupings in GA4, and using landing-page pattern analysis for sessions where referrer data is stripped. Without attribution, AI-driven pipeline stays invisible in Direct traffic and AI citation work looks ineffective on dashboards.

Why does AI traffic show up as Direct in GA4?

Five mechanical reasons: mobile AI apps often strip referrer data, HTTPS redirect chains lose referrers during transit, privacy-first browsers (Safari, Brave) strip referrers by default, Google AI Overviews operate inside google.com (indistinguishable from organic search), and strict browser/IT referrer policies affect 5-10% of traffic. The combined effect is that 30-50% of true AI-referred traffic lands in Direct traffic by default in most B2B SaaS accounts.

How do I set up AI referrer tracking in GA4?

Create a custom channel group in GA4 (Admin → Data Display → Channel Groups) with a new channel called "AI Referrals" using a regex that matches AI engine hostnames (chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com, and others). Place this channel above Direct and Organic Search in priority order so qualifying sessions get classified correctly. The setup takes roughly 30 minutes and applies retroactively to historical data.

Can I distinguish Google AI Overviews from regular Google organic traffic?

Not directly. AI Overview clicks pass google.com as the referrer, identical to traditional organic clicks. Landing-page pattern analysis is the workaround: pages heavily cited in AI Overviews but ranking below position 10 organically shouldn't receive meaningful Google Organic traffic, so that traffic is inferentially AI Overview-driven. This gives you estimated rather than exact numbers for the Google AI products specifically.

How much AI-referred traffic should I expect to find?

Depends on your AI visibility level and content mix. Accounts with an active AI visibility program typically see 6-15% of total organic-equivalent traffic classified as AI-referred after fixing attribution. Accounts with no active AI optimization see 1-3%. The gap between those numbers is where AI visibility ROI gets demonstrated to leadership teams.

Should I still track Direct traffic separately after fixing AI attribution?

Yes. After AI Referrals are separated, remaining Direct traffic is closer to genuine brand search, bookmarked sessions, and organic word-of-mouth visits. Its conversion rate should now fall back toward the 2-3% typical Direct rate rather than the inflated 8-10% blended average that misclassified AI traffic produced. Cleaner Direct traffic is more useful for measuring brand awareness distinctly from AI visibility.

How often should I update the AI referrer regex?

Quarterly at minimum. AI engines launch, rebrand, and shift domain patterns frequently. Bing Copilot's referrer patterns changed twice in 2025. New engines (Phind, You.com, Komo) may become relevant in specific B2B categories. Review your regex against a list of AI engines mentioned in industry research every 90 days and add new hostnames as needed.

Related Resources

Core AI Visibility Framework

The Complete Guide to AI Visibility for B2B SaaS — the pillar this piece sits under

Brand Visibility Score: The Only AI Search Metric That Actually Matters

AI Citation Tracking: How to Measure Citation Frequency Across ChatGPT, Perplexity, and Claude

AI Share of Voice: The Competitive Metric Most SaaS Teams Aren't Tracking Yet

Measurement Operations

How to Build an AI Visibility Prompt Library (25-50 Prompts That Actually Matter)

The Weekly AI Visibility Report: A 90-Minute Template for Startup Teams

SEO Visibility vs. AI Visibility: The Two Metrics Every B2B SaaS Needs in 2026

Analytics & Metrics

ChatGPT vs. Perplexity vs. Google AI Mode: The B2B SaaS Citation Benchmarks

The Top 5 Marketing Metrics Every Startup Should Track in 2026

How to Measure Marketing Success: The Most Important KPIs and Metrics