How to Build an AI Visibility Prompt Library (25-50 Prompts That Actually Matter)

TL;DR

🎯 An AI visibility prompt library is a locked set of 25-50 buyer-relevant queries run consistently across AI engines to measure citation frequency, brand mentions, and share of voice over time. It's the foundation every AI visibility metric stands on.

📊 The 25-50 prompt sweet spot: fewer than 25 produces volatile data (single-prompt noise dominates). More than 50 becomes unmaintainable without automation. Most B2B SaaS teams hit an accuracy plateau at roughly 30-35 prompts.

⚖️ 3-category balance: 30-40% category prompts ("best X tools"), 30-40% comparison prompts ("X vs Y," "alternatives to X"), 20-30% use-case prompts ("how to do X"). Monocultures of any single type distort the picture.

🚫 5 bias patterns destroy accuracy: brand name in prompt, leading phrases, feature-specific queries, overly-broad queries, and prompts with built-in answers. Most first-time libraries contain at least 3 of these.

📅 Lock for 90 days minimum before updating. Changing prompts mid-measurement destroys longitudinal comparability and makes citation trend analysis meaningless.

Zach Chmael

CMO, Averi

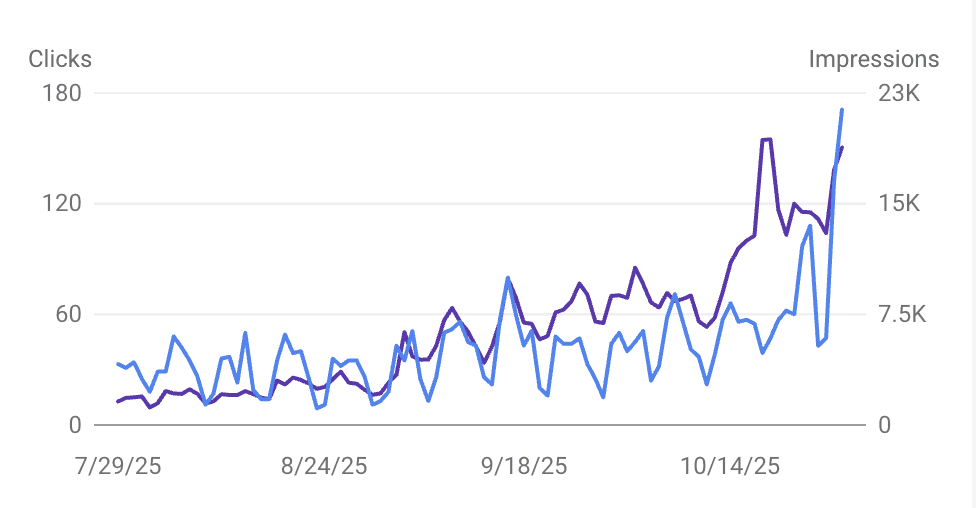

"We built Averi around the exact workflow we've used to scale our web traffic over 6000% in the last 6 months."

Your content should be working harder.

Averi's content engine builds Google entity authority, drives AI citations, and scales your visibility so you can get more customers.

Every AI visibility measurement you run is only as good as the prompts you test.

Bad prompts produce bad data. No prompt library means you're measuring noise and calling it strategy.

Most teams skip this step.

They ask ChatGPT a few category questions, see whether their brand gets mentioned, and declare their AI visibility either "good" or "concerning."

Six weeks later the number fluctuates and nobody knows why — because the prompts weren't locked, weren't balanced across intent types, and weren't designed to avoid the five biases that make AI visibility data unreliable.

A proper prompt library fixes this. It's the foundation every other AI visibility metric stands on.

Citation tracking needs a locked prompt set to measure longitudinally. AI share of voice can't be calculated without one. The weekly AI visibility report runs off it. Brand Visibility Score depends on it entirely.

This piece is the methodology.

What a prompt library actually is. Why random prompts produce unreliable data. The 3-category framework that balances intent types. The exact number of prompts you need (25-50). Five bias patterns to eliminate. A sample 30-prompt library you can adapt in under an hour. Testing and validation before committing. When and how to update.

For the broader AI visibility framework this library supports, see The Complete Guide to AI Visibility for B2B SaaS.

What Is an AI Visibility Prompt Library?

An AI visibility prompt library is a locked set of 25-50 buyer-relevant queries designed to measure how often AI engines cite your content, mention your brand, and include you in competitive responses.

The library stays fixed for 90+ day measurement cycles so citation rate, brand mention rate, and share of voice numbers remain comparable week over week. Each prompt belongs to one of three intent categories (category, comparison, use-case) and avoids five specific bias patterns that distort results.

The library is the instrument.

Just as a thermometer needs consistent calibration to produce comparable readings over time, an AI visibility measurement needs a consistent prompt set to produce comparable data.

Teams that run "whatever prompts feel relevant this week" are taking temperature readings with a different thermometer each time — the numbers don't mean anything.

Three things the library does mechanically:

Reveals which queries your brand appears in (basic visibility signal)

Tracks trends over time when kept fixed across measurement cycles (trend signal)

Enables competitive comparison when run across platforms where competitors are also being measured (SOV signal)

Without this instrument, you're running AI visibility work on vibes. With it, you have defensible data that can survive leadership questioning and justify editorial resource allocation.

Why Random Prompts Produce Unreliable Data

Three mechanical reasons random prompting breaks the measurement.

Reason 1: Prompt variance dominates signal

A single category prompt can produce wildly different responses depending on phrasing. "Best content marketing tools for startups" returns one set of brands, "top content marketing platforms for startups" returns another, and "what content marketing software should startups use" returns a third. If you're changing prompts week to week, what looks like a change in citation rate might just be noise from the prompt change.

Reason 2: Intent type imbalance distorts the picture

Run only comparison prompts ("X vs Y") and your data will overweight brands that show up in comparison content. Run only category prompts and you'll miss specialized tools that dominate use-case queries. Run only use-case prompts and you'll ignore the buyer-research phase where shortlists get built. Unbalanced libraries measure unbalanced slices of the buyer journey.

Reason 3: Sample size problems at low prompt counts

With 5 prompts, a single strong or weak citation swings your citation rate 20 points. With 10 prompts, the swing is 10 points. With 30 prompts, it's 3.3 points. Citation rates are naturally volatile — you need sample size to separate real trends from random noise.
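The swing figures above follow from simple division. A minimal sketch, assuming every prompt carries equal weight in the citation rate (the function name is illustrative, not part of any tool):

```python
def max_swing_points(prompt_count: int) -> float:
    """Percentage points the citation rate moves when a single prompt
    flips between cited and not cited, assuming equal prompt weights."""
    if prompt_count <= 0:
        raise ValueError("prompt_count must be positive")
    return 100 / prompt_count


for n in (5, 10, 30, 50):
    print(f"{n:>2} prompts: one flipped prompt moves the rate "
          f"{max_swing_points(n):.1f} points")
```

The larger the library, the less any one response can move the headline number.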

The practical implication: spend the upfront hour building a proper 30-prompt library once, then run it consistently for 6-12 months. The upfront work saves 50+ hours of second-guessing noisy data downstream.

The 3-Category Prompt Framework

Every prompt in your library belongs to one of three categories. Each category measures a different slice of the buyer journey.

Category 1: Category prompts (30-40% of library)

Queries where the buyer is researching the category itself — looking for shortlists of tools, platforms, or approaches in your space.

Pattern: "Best [category] tools/platforms/software for [audience]," "Top [category] for [use case]," "What [category] tools should [audience] consider"

Examples for a content marketing tool:

"Best content marketing tools for B2B SaaS startups"

"Top AI content platforms for lean marketing teams"

"What content marketing software works for seed-stage founders"

What it measures: Your brand's presence in the AI's category consensus list. The 3-6 brands that consistently show up here are the brands buyers consider. Outside this list = invisible.

Category 2: Comparison prompts (30-40% of library)

Queries where the buyer is actively evaluating options against each other — comparing specific tools, looking for alternatives, or researching head-to-head differences.

Pattern: "[Competitor A] vs [competitor B]," "Alternatives to [competitor]," "[Brand] compared to [other brand]," "Is [tool] better than [tool]"

Examples:

"Jasper vs Copy.ai for startups"

"Alternatives to ChatGPT for content marketing"

"Is Writesonic better than Jasper for SaaS blogs"

What it measures: Your brand's presence in active-evaluation comparison sets. These are bottom-of-funnel queries where buyers are close to decisions. Missing from comparison responses = missing from decision shortlists.

Category 3: Use-case prompts (20-30% of library)

Queries where the buyer is looking for how-to guidance on the core job your product solves. These queries don't name tool categories directly — they describe problems.

Pattern: "How to [core outcome]," "Best way to [desired result]," "How do I [specific job]"

Examples:

"How to scale blog content for a SaaS startup"

"Best way to produce SEO content with a small team"

"How do I build a content marketing workflow"

What it measures: Your brand's presence in problem-solution queries — the queries where buyers may not know they need a tool yet but are about to. Citations here come from content authority on the underlying job, not category recall.

Why the balance matters

A library heavy on category prompts will over-represent well-known brands. A library heavy on comparison prompts will over-represent brands with strong comparison content. A library heavy on use-case prompts will over-represent brands with deep how-to libraries.

The 30-40/30-40/20-30 balance produces the most representative picture of true AI visibility across the buyer journey. For the competitive set definition that feeds into this, see AI Share of Voice: The Competitive Metric Most SaaS Teams Aren't Tracking Yet.
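The balance is easy to check mechanically once each prompt carries an intent label. A rough sketch, assuming the labels `category`, `comparison`, and `use_case` (hypothetical names chosen for this example):

```python
from collections import Counter

# Target share of the library per intent category, taken from the framework above.
TARGET_RANGES = {
    "category": (0.30, 0.40),
    "comparison": (0.30, 0.40),
    "use_case": (0.20, 0.30),
}


def check_balance(labels: list[str]) -> dict[str, tuple[float, bool]]:
    """Return each category's share and whether it sits inside its target range."""
    counts = Counter(labels)
    total = len(labels)
    report = {}
    for name, (low, high) in TARGET_RANGES.items():
        share = counts[name] / total if total else 0.0
        report[name] = (round(share, 3), low <= share <= high)
    return report


# A 30-prompt library split 12 / 10 / 8.
labels = ["category"] * 12 + ["comparison"] * 10 + ["use_case"] * 8
for name, (share, ok) in check_balance(labels).items():
    print(f"{name:<10} {share:.0%} {'ok' if ok else 'out of range'}")
```

Running the check again after every library edit catches drift toward a single intent type before the library is locked.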

Want to check your GEO Readiness?

How Many Prompts You Actually Need

The 25-50 prompt range has empirical backing across B2B SaaS measurement programs.

| Library Size | Signal Quality | Maintenance Burden | Recommended For |
|---|---|---|---|
| Under 15 prompts | Poor: single-prompt noise dominates | Minimal | Not recommended |
| 15-25 prompts | Moderate: workable for quick pilots | 30-45 min/week | Pre-seed teams running a 2-month pilot |
| 25-35 prompts | Strong: accuracy plateau reached | 45-60 min/week | Seed to Series A (the sweet spot) |
| 35-50 prompts | Excellent: full buyer journey coverage | 60-90 min/week | Series B+ with competitive complexity |
| 50+ prompts | Diminishing returns, needs automation | 2+ hours/week | Enterprise with multi-brand or multi-market |

The accuracy plateau hits around 30 prompts because most B2B SaaS categories have a finite set of truly distinct buyer queries. Past 30-35, you start producing variations of existing prompts rather than covering new intent.

The maintenance plateau hits around 50 prompts because each run of one prompt on one of the 4 AI engines (ChatGPT, Perplexity, Claude, Google AI Mode) takes roughly 30-45 seconds of focused operator time.

50 prompts × 4 engines = 200 runs a week. At 30-45 seconds per run, that is 100-150 minutes of pure measurement work, plus 15-30 minutes of logging and analysis. That's the ceiling most teams can sustain manually.
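The time budget can be sanity-checked with a few lines of arithmetic. This sketch assumes the 30-45 second figure applies to each prompt-engine run; the function and parameter names are illustrative:

```python
def weekly_minutes(prompts: int, engines: int = 4,
                   seconds_per_run: float = 45,
                   logging_minutes: float = 0) -> float:
    """Estimated weekly operator time in minutes for a manual measurement cycle."""
    runs = prompts * engines  # every prompt is run once on every engine
    return runs * seconds_per_run / 60 + logging_minutes


for seconds in (30, 45):
    print(f"50 prompts at {seconds}s per run: "
          f"{weekly_minutes(50, seconds_per_run=seconds):.0f} minutes")
print(f"30 prompts at 30s per run: {weekly_minutes(30, seconds_per_run=30):.0f} minutes")
```

Plugging in your own library size and observed per-run time gives a more honest estimate than any rule of thumb.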

Start at 30 prompts with the 30-40/30-40/20-30 balance. Adjust based on what your first 8 weeks of data reveal.

Avoiding Prompt Bias: 5 Patterns to Eliminate

Five patterns that destroy prompt library validity. Most first-time libraries contain at least 3 of these.

Bias 1: Brand name in the prompt

Bad: "What does [your brand] do better than Jasper?"

Why it's biased: You're testing brand search, not buyer discovery. Of course [your brand] will appear in the response: you named it in the question.

Fix: Remove your brand from all prompts. Your library measures whether AI engines surface you without prompting. That's the actual test.

Bias 2: Leading phrases

Bad: "Which is the best AI content engine, [Brand A] or [Brand B]?"

Why it's biased: Asks the AI to pick between two pre-selected options, constraining the response to those two. Excludes the buyer's actual decision surface.

Fix: Open-ended comparison prompts. "[Brand A] vs [Brand B]" or "Best AI content engines for [audience]" produce broader, more accurate responses.

Bias 3: Feature-specific queries that only match your product

Bad: "What tool has a Strategy Map feature?"

Why it's biased: Strategy Map is Averi's feature name. The prompt effectively asks "what tool is Averi?", which inflates citation rate for queries no real buyer would run.

Fix: Translate features into buyer language. "What tool helps me plan my content strategy" tests the actual buyer intent.

Bias 4: Overly-broad queries

Bad: "What is marketing software?"

Why it's biased: Too general. It captures hundreds of tools across dozens of subcategories. Your citation rate will look artificially low because the response space is too wide for any single brand to dominate.

Fix: Narrow to the specific category and audience. "What content marketing software works for B2B SaaS startups" gives a constrained, measurable response space.

Bias 5: Prompts with built-in answers

Bad: "Why is [Your Brand] the best content engine for startups?"

Why it's biased: Asks the AI to rationalize a conclusion you've already stated, not to discover whether the conclusion is true.

Fix: Ask without presupposition and without naming yourself. A softer version such as "Is [your brand] a good content engine for startups" still fails Bias 1, so use "what's the best content engine for startups."

The bias audit checklist

Before locking your library, scan each prompt against these 5 questions:

Does my brand name appear? (Remove it.)

Does the phrasing lead to a predetermined answer?

Does the prompt use feature names only I use?

Is the query too broad to produce focused responses?

Does the prompt assume its conclusion?

If any prompt fails even one check, rewrite or cut it.
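Part of the audit can be pre-screened in code before a human reads each prompt. The sketch below uses rough string heuristics invented for this example; the regex patterns, the four-word threshold for Bias 4, and the function name are all assumptions, and a clean result does not replace manual review:

```python
import re


def audit_prompt(prompt: str, brand: str, own_feature_names: list[str]) -> list[str]:
    """Return the bias checks a prompt appears to fail (heuristics only)."""
    text = prompt.lower()
    flags = []
    if brand.lower() in text:
        flags.append("bias 1: brand name in prompt")
    if re.search(r"\bwhich is (the )?(best|better)\b.*\bor\b", text):
        flags.append("bias 2: leading either/or phrasing")
    if any(name.lower() in text for name in own_feature_names):
        flags.append("bias 3: feature name only you use")
    if text.startswith("what is") and len(text.split()) <= 4:
        flags.append("bias 4: probably too broad")
    if re.match(r"why (is|are|does|do)\b.*\b(best|better)\b", text):
        flags.append("bias 5: assumes its conclusion")
    return flags


candidates = [
    "Why is Averi the best content engine for startups?",
    "What tool has a Strategy Map feature?",
    "What is marketing software?",
    "Best content marketing tools for B2B SaaS startups",
]
for prompt in candidates:
    print(prompt, "->", audit_prompt(prompt, "Averi", ["Strategy Map"]) or "no flags")
```

Anything flagged goes back for a rewrite; anything unflagged still gets the five questions asked by a person.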

Sample AI Visibility Prompt Library (30 Prompts)

Below is a complete prompt library template for a hypothetical "content marketing tools for B2B SaaS startups" category. Adapt it to your category by replacing the content-marketing terminology with your own.

Category prompts (12)

| # | Prompt |
|---|---|
| 1 | Best content marketing tools for B2B SaaS startups in 2026 |
| 2 | Top AI content platforms for lean marketing teams |
| 3 | What content marketing software do seed-stage founders use |
| 4 | Best content automation tools for Series A SaaS companies |
| 5 | Top AI marketing platforms for startups with no content team |
| 6 | Best SEO content tools for B2B SaaS in 2026 |
| 7 | What AI writing tools produce content that actually ranks |
| 8 | Top content marketing platforms for non-technical founders |
| 9 | Best content production tools for solo marketers |
| 10 | What AI content tools help startups scale blog production |
| 11 | Top AI marketing software for bootstrapped startups |
| 12 | Best content engines for B2B SaaS startups |

Comparison prompts (10)

| # | Prompt |
|---|---|
| 13 | Jasper vs Copy.ai for B2B SaaS content |
| 14 | ChatGPT vs dedicated AI content platforms |
| 15 | Jasper alternatives for startup marketing teams |
| 16 | Copy.ai vs Writesonic comparison |
| 17 | Best alternatives to ChatGPT for content marketing |
| 18 | Surfer SEO vs Clearscope for SaaS content |
| 19 | AI content tools compared for startup budgets |
| 20 | Contently vs modern AI content platforms |
| 21 | AirOps alternatives for content marketing |
| 22 | Writer vs Jasper for B2B content teams |

Use-case prompts (8)

| # | Prompt |
|---|---|
| 23 | How to scale blog content for a SaaS startup |
| 24 | Best way to produce SEO content with a small marketing team |
| 25 | How to build a content marketing workflow for founders |
| 26 | How do I publish consistent content without a content team |
| 27 | How to produce SEO-optimized content at startup speed |
| 28 | Best way to automate content production for B2B SaaS |
| 29 | How to build topical authority as a seed-stage startup |
| 30 | How do I get my blog content cited by AI search engines |

This 30-prompt structure gives you a 40/33/27 category/comparison/use-case balance (12, 10, and 8 prompts). Category sits at the top of its 30-40% range, which works well for B2B SaaS where category research is the most common buyer starting point.

How to adapt this template

Replace "content marketing tools for B2B SaaS startups" with your category

Substitute your actual competitors in the comparison prompts

Translate your product's core jobs into use-case language for the use-case prompts

Run the bias audit checklist against each adapted prompt

Lock the library for 90 days before any changes

Validating Your Prompt Library Before Committing

Before running 12+ weeks of measurement against your library, validate it in three ways.

Validation 1: Run it across all 4 platforms once

Run the full 30-prompt set across ChatGPT, Perplexity, Claude, and Google AI Mode once. Log how many distinct brands appear in each response, then average the counts. If you're seeing:

3-6 brands per prompt average across all platforms: Healthy. Your prompts are producing focused enough responses to measure against.

1-2 brands per prompt: Prompts may be too narrow or branded. Revisit for Bias 1 and Bias 3.

10+ brands per prompt: Prompts are likely too broad. Revisit for Bias 4.
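The thresholds above translate into a small classifier. A sketch, assuming you have logged the number of distinct brands in each response; averages that fall between the stated bands (for example 7-9) are reported as borderline because the bands above do not cover them:

```python
from statistics import mean


def classify_brand_density(brands_per_response: list[int]) -> str:
    """Map the average brand count per response onto the validation bands."""
    avg = mean(brands_per_response)  # raises StatisticsError on an empty list
    if avg >= 10:
        return "too broad: revisit Bias 4"
    if 3 <= avg <= 6:
        return "healthy"
    if avg <= 2:
        return "too narrow or branded: revisit Bias 1 and Bias 3"
    return "borderline: between the defined bands"


print(classify_brand_density([4, 5, 3, 6]))
print(classify_brand_density([1, 2, 1]))
print(classify_brand_density([12, 15, 10]))
```

Running it per platform as well as overall shows whether one engine is producing much longer or shorter lists than the others.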

Validation 2: Check intent balance in responses

Do the response patterns match your intent categories? Category prompts should return brand lists. Comparison prompts should return side-by-side discussion. Use-case prompts should return process explanations with tools mentioned contextually.

If your "use-case" prompts are actually returning brand lists identical to your category prompts, they're not really use-case queries — rewrite or replace.

Validation 3: Gut-check against real buyers

Show the prompt list to 3-5 actual buyers (customers, prospects, sales team members familiar with customer research). Ask: "Would you actually type any of these into ChatGPT or Perplexity when researching our category?" If more than a few prompts get "no," replace them with phrasings that match real buyer behavior.

This gut-check catches the subtle issue of prompts that sound like marketing queries rather than buyer queries. "What is the ROI of content marketing automation" sounds analytical. "How do I make content marketing not suck" might be what a real founder types.

When to Update Your Prompt Library

Prompt libraries need periodic refresh, but not weekly and not without discipline.

Update trigger 1: Quarterly refresh cycle

Every 90 days, review the library for:

Dead prompts — ones that consistently produce no brand mentions from anyone. Replace with more discriminating queries.

Outdated phrasings — queries referencing old category terminology that buyers no longer use

Missing emerging topics — new buyer queries that didn't exist when you built the library
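Dead prompts are the easiest of the three to find automatically. A minimal sketch, assuming the log maps each prompt to one set of mentioned brands per run; the `min_runs` cutoff of 4 is an arbitrary illustration of "consistently", not a figure from this methodology:

```python
def find_dead_prompts(log: dict[str, list[set[str]]], min_runs: int = 4) -> list[str]:
    """Return prompts whose every logged response mentioned no brand at all."""
    dead = []
    for prompt, runs in log.items():
        # Require enough history before calling a prompt dead.
        if len(runs) >= min_runs and all(len(brands) == 0 for brands in runs):
            dead.append(prompt)
    return dead


log = {
    "Best content engines for B2B SaaS startups": [{"BrandA"}, set(), {"BrandB"}, {"BrandA"}],
    "What is marketing software": [set(), set(), set(), set()],
    "Recently added prompt": [set(), set()],
}
print(find_dead_prompts(log))
```

Outdated phrasings and missing topics still need a human read of the library; only the dead-prompt check reduces to a query on the log.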

Update trigger 2: Competitive set change

If a major new competitor enters your category (funded launch, category-adjacent expansion, merger), add 2-4 new comparison prompts that include them. This doesn't require replacing existing prompts — it extends the library.

Update trigger 3: Product or category repositioning

If your company's positioning shifts substantially (new ICP, new category definition, new core use case), rebuild the library to match. In this case, the previous library becomes a comparison baseline rather than continued tracking data.

What to avoid

Don't update during active measurement. Changes mid-quarter destroy longitudinal data. Complete the cycle, then update.

Don't add prompts incrementally. Adding 3 prompts this week and 2 next week produces a constantly-moving library. Batch updates at quarterly refresh cycles.

Don't remove prompts because you don't like the results. If a prompt shows you losing citations, that's signal, not a reason to cut the prompt. Fix the content, not the measurement.
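The lock discipline is simple enough to enforce with a date check. A sketch using only the standard library; the function names are hypothetical and the 90-day window comes from the lock period used throughout this piece:

```python
from datetime import date, timedelta

LOCK_DAYS = 90  # minimum measurement cycle before any library change


def next_refresh(locked_on: date) -> date:
    """First day on which the library may be edited again."""
    return locked_on + timedelta(days=LOCK_DAYS)


def can_edit_library(locked_on: date, today: date) -> bool:
    """True only once the full lock window has elapsed."""
    return today >= next_refresh(locked_on)


locked = date(2026, 1, 1)
print(next_refresh(locked))
print(can_edit_library(locked, date(2026, 2, 15)))
```

Batching every proposed change into a list that is only applied when `can_edit_library` returns true keeps incremental edits out of the active cycle.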

Common Prompt Library Mistakes

Mistake 1: Starting with too few prompts

Teams launch with 10-15 prompts to "test the methodology" and never scale up. Fix: start at 25-30 prompts minimum. The accuracy difference is too large to justify the measurement confusion of a small library.

Mistake 2: Loading up on comparison prompts

Because comparison queries feel most actionable ("we need to beat Competitor X"), teams over-weight this category. Fix: keep comparison prompts within the 30-40% range. Balance matters more than tactical focus.

Mistake 3: Changing prompts every week

Teams feel urgency to iterate the library. Fix: lock for 90 days minimum. Iteration produces shorter-term feelings of progress but destroys the measurement's validity.

Mistake 4: Not running across multiple platforms

Teams build a 30-prompt library and only run it on ChatGPT. Fix: run the full library across at least ChatGPT, Perplexity, and Google AI Mode. Only 11% of sites are cited by both ChatGPT and Perplexity — single-platform measurement misses most of the picture.

Mistake 5: Not logging competitor brand mentions

Teams track only their own brand appearances. Fix: log every brand that appears in every response. This is the foundation for AI share of voice calculations — without it, you can't measure competitive position.
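Logging every brand per response is what makes both numbers computable. The sketch below assumes two simple definitions that this piece does not spell out: citation rate as the share of responses mentioning a brand, and share of voice as a brand's mentions divided by all brand mentions. Brand names are placeholders.

```python
from collections import Counter


def citation_rate(responses: list[set[str]], brand: str) -> float:
    """Share of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    return sum(brand in brands for brands in responses) / len(responses)


def share_of_voice(responses: list[set[str]]) -> dict[str, float]:
    """Each brand's mentions as a fraction of all brand mentions (assumed definition)."""
    mentions = Counter(brand for brands in responses for brand in brands)
    total = sum(mentions.values())
    return {brand: count / total for brand, count in mentions.items()} if total else {}


# One set of mentioned brands per logged response.
responses = [{"BrandA", "BrandB"}, {"BrandA"}, {"BrandB", "BrandC"}, set()]
print(citation_rate(responses, "BrandA"))
print(share_of_voice(responses))
```

Keeping one such log per engine, rather than pooling them, preserves the platform-level differences the library is meant to expose.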

Mistake 6: Treating the library as "set and forget"

Teams build once, never review, never adjust. Fix: quarterly refresh cycles catch drift without destroying longitudinal comparability. Schedule the refresh before you need it.

Content Engine Integration

Manual prompt library management works at startup scale. The 30-prompt × 4-platform weekly cycle takes 60-75 minutes of focused time — sustainable if treated as disciplined measurement work.

A content engine builds library management into the workflow:

Pre-built category templates give you starting prompts for common B2B SaaS categories (content marketing, SEO tools, project management, CRM, etc.) that you adapt rather than build from scratch

Bias detection flags prompts that match the 5 bias patterns before you lock the library

Platform orchestration runs your library across ChatGPT, Perplexity, Claude, and Google AI Mode automatically, logging citations and brand mentions in structured form

Longitudinal tracking maintains the 90-day lock discipline automatically — the system prevents mid-cycle changes

Refresh reminders surface at quarterly intervals with data-driven suggestions for prompts to add, cut, or modify

The judgment layer stays manual. You decide which categories matter, which competitors to include, and which emerging topics to track. The mechanical work (running the prompts, logging the data, detecting drift, enforcing discipline) disappears.

Combined with the other layers — citation tracking, share of voice, attribution, and weekly reporting — the prompt library becomes the anchor point a full AI visibility program runs off.

FAQs

What is an AI visibility prompt library?

An AI visibility prompt library is a locked set of 25-50 buyer-relevant queries run consistently across AI engines (ChatGPT, Perplexity, Claude, Google AI Mode) to measure citation frequency, brand mentions, and competitive share of voice over time. The library stays fixed for 90+ day measurement cycles so trend data remains comparable week over week. It's the foundation every other AI visibility metric — Brand Visibility Score, citation tracking, share of voice — runs off.

How many prompts should my library contain?

25-50 prompts, with 30 as the recommended starting point for B2B SaaS. Fewer than 25 produces volatile data where single-prompt noise dominates trend signal. More than 50 becomes unmaintainable without automation: each prompt runs on 4 platforms every week, at roughly 30-45 seconds of operator time per run. The accuracy plateau typically hits around 30 prompts because most B2B SaaS categories have a finite set of truly distinct buyer queries.

What's the right balance across prompt categories?

30-40% category prompts ("best X tools for Y audience"), 30-40% comparison prompts ("X vs Y," "alternatives to X"), 20-30% use-case prompts ("how to do X"). Unbalanced libraries measure unbalanced slices of the buyer journey. A comparison-heavy library over-represents brands with strong comparison content. A category-heavy library over-represents well-known brands. The balance produces the most representative AI visibility measurement.

How often should I update my prompt library?

Once per quarter, and no more often. Every 90 days, review for dead prompts, outdated phrasings, and missing emerging topics. Don't update mid-cycle: changes during active measurement destroy longitudinal comparability and make trend data meaningless. Major updates (new positioning, major competitor entry, category repositioning) are the only reasons to rebuild outside the quarterly refresh cadence.

What prompts should I never include?

Five bias patterns to eliminate: prompts that name your brand (tests brand search, not discovery), leading phrases that constrain answers to pre-selected options, feature-specific queries using names only you use, overly-broad queries that produce unfocused responses, and prompts with built-in answers asking the AI to rationalize a conclusion. Run the 5-point bias audit on every prompt before locking the library.

Should I use the same library across all AI engines?

Yes. Running the same prompt across ChatGPT, Perplexity, Claude, and Google AI Mode is how you detect platform-specific visibility gaps. Only 11% of sites are cited by both ChatGPT and Perplexity simultaneously — a platform-specific prompt library would miss the central insight that engines disagree about which brands to cite. Same prompts, different engines, separate tracking per engine.

How do I know if my prompt library is working?

Three validation tests. First, responses should return 3-6 brands per prompt on average — tight enough for measurement, broad enough for fair representation. Second, intent patterns in responses should match prompt categories (category prompts return brand lists, comparison prompts return side-by-sides, use-case prompts return process explanations). Third, gut-check with 3-5 real buyers: would they actually type these queries into AI engines? If any of the three tests fails, revise the library before locking it.

Related Resources

Core AI Visibility Framework

The Complete Guide to AI Visibility for B2B SaaS — the pillar this piece sits under

Brand Visibility Score: The Only AI Search Metric That Actually Matters

AI Citation Tracking: How to Measure Citation Frequency Across ChatGPT, Perplexity, and Claude

AI Share of Voice: The Competitive Metric Most SaaS Teams Aren't Tracking Yet

Measurement Operations

Attribution for AI-Referred Traffic: Fixing the "Direct Traffic" Problem in GA4

The Weekly AI Visibility Report: A 90-Minute Template for Startup Teams

SEO Visibility vs. AI Visibility: The Two Metrics Every B2B SaaS Needs in 2026

Platform-Specific Context

ChatGPT vs. Perplexity vs. Google AI Mode: The B2B SaaS Citation Benchmarks

Building Citation-Worthy Content: Making Your Brand a Data Source for LLMs